Table of Links

Abstract and 1 Introduction

2 Background

2.1 Large Language Models

2.2 Fragmentation and PagedAttention

3 Issues with the PagedAttention Model and 3.1 Requires re-writing the attention kernel

3.2 Adds redundancy in the serving framework and 3.3 Performance Overhead

4 Insights into LLM Serving Systems

5 vAttention: System Design and 5.1 Design Overview

5.2 Leveraging Low-level CUDA Support

5.3 Serving LLMs with vAttention

6 vAttention: Optimizations and 6.1 Mitigating internal fragmentation

6.2 Hiding memory allocation latency

7 Evaluation

7.1 Portability and Performance for Prefills

7.2 Portability and Performance for Decodes

7.3 Efficacy of Physical Memory Allocation

7.4 Analysis of Memory Fragmentation

8 Related Work

9 Conclusion and References

7.4 Analysis of Memory Fragmentation

Table 8 shows the block size (defined as the minimum number of tokens in a page) and how much physical memory can theoretically be wasted due to over-allocation in the worst case. The worst case occurs when a new page is allocated but remains completely unused. Further, we show each model under two TP configurations, TP-1 and TP-2, to highlight the effect of the TP dimension on block size.

vAttention allocates physical memory equivalent to the page size on each TP worker, whereas the per-token physical memory requirement of a worker goes down as the TP dimension increases (because KV heads get split across TP workers).

Therefore, block size increases proportionally with the TP dimension. Table 8 shows that this results in smallest block sizes ranging from 32 (Yi-34B TP-1) to 128 (Yi-6B TP-2). In terms of physical memory, a 64KB page size results in a maximum theoretical waste of only 4-15MB per request, which increases to 16-60MB for a 256KB page size. Overall, the important point is that by controlling the granularity of physical memory allocation, vAttention makes memory fragmentation insignificant. Recall that serving throughput saturates at a batch size of about 200 for all of our models (Figure 6). Hence, even at such large batch sizes, the maximum theoretical memory waste is at most a few GB. Therefore, similar to vLLM, vAttention is highly effective in reducing fragmentation and allows serving with large batch sizes. However, if required, the page size can be reduced further to as low as 4KB, which is the minimum page size supported in almost all architectures today, including NVIDIA GPUs [28].
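The block sizes and waste figures quoted above can be reproduced with a few lines of arithmetic. The sketch below is an illustration, not the authors' tooling. It assumes 16-bit KV entries, separate key and value buffers per layer (so one page holds only keys or only values of a single layer), and waste summed over all TP workers. The model shapes come from the publicly released Yi configurations (Yi-6B: 32 layers, 4 KV heads; Yi-34B: 60 layers, 8 KV heads; head dimension 128). None of these values is stated in this section, and the function names are hypothetical.

```python
KB = 1024
MB = 1024 * KB

# Assumed model shapes (from public model configs, not from this section).
MODELS = {
    "Yi-6B": {"layers": 32, "kv_heads": 4, "head_dim": 128},
    "Yi-34B": {"layers": 60, "kv_heads": 8, "head_dim": 128},
}


def block_size_tokens(page_bytes, kv_heads, head_dim, tp, bytes_per_elem=2):
    """Tokens whose keys (or values) for one layer fit in one page on one worker."""
    heads_per_worker = kv_heads // tp  # KV heads are split across TP workers
    per_token_bytes = heads_per_worker * head_dim * bytes_per_elem
    return page_bytes // per_token_bytes


def max_waste_bytes(page_bytes, layers, tp):
    """Worst case per request: one unused page in every K and V buffer,
    in every layer, on every TP worker (assumed accounting)."""
    return 2 * layers * tp * page_bytes


if __name__ == "__main__":
    for name, cfg in MODELS.items():
        for tp in (1, 2):
            block = block_size_tokens(64 * KB, cfg["kv_heads"], cfg["head_dim"], tp)
            waste = max_waste_bytes(64 * KB, cfg["layers"], tp)
            print(f"{name} TP-{tp}: block size {block} tokens, "
                  f"worst-case waste {waste / MB:.1f} MB per request")
```

Under these assumptions, a 64KB page gives 4MB (Yi-6B TP-1) to 15MB (Yi-34B TP-2) of worst-case waste per request, and 200 concurrent Yi-34B TP-2 requests waste about 2.9GB in the worst case, in line with the bound of a few GB.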

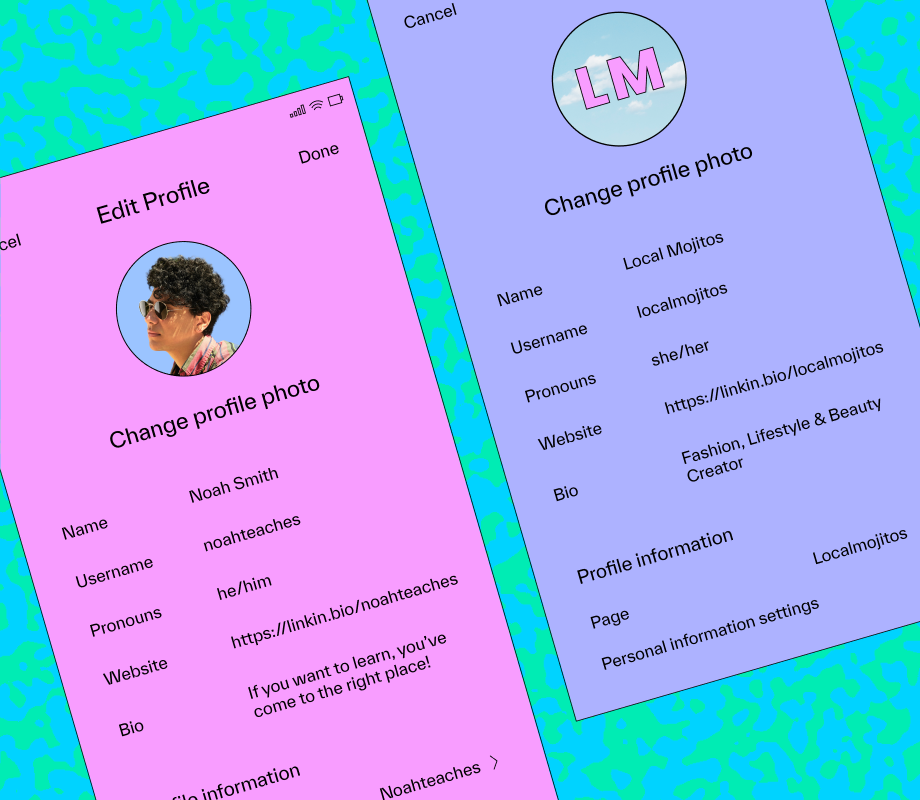

Implementation Effort

The primary advantage of vAttention is portability: it enables one to seamlessly integrate new attention kernels without having to write a paged version of them or change the serving framework. For example, switching between the prefill or decode kernels of FlashAttention and FlashInfer requires only a few lines of code changes, as shown in Figure 12. In contrast, with PagedAttention, developers first need to write a paged attention kernel and then make significant changes in the serving framework. For example, integrating FlashInfer decode kernels in vLLM required more than 600 lines of code changes spread over 15 files [21, 22, 24]. Implementing the initial paging support in the FlashAttention GPU kernel also required about 280 lines of code changes [20] and additional effort to enable support for smaller block sizes [16]. Given the rapid pace of innovation in LLMs, we believe it is important to reduce the programming burden: production systems should be able to leverage new optimizations of the attention operator without re-writing code, similar to how optimized implementations of GEMMs are leveraged by deep learning frameworks without programmer intervention.
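To make the portability argument concrete, the following is a minimal pure-Python sketch. It is not the code from Figure 12, which is not reproduced here. It assumes that each request's keys and values sit in contiguous buffers, as vAttention arranges through virtual memory, so any kernel written for contiguous inputs can be called unchanged. The two kernels are toy stand-ins (a two-pass softmax and a single-pass online softmax). `KERNELS`, `decode_step`, and both kernel names are hypothetical and do not correspond to the FlashAttention or FlashInfer APIs.

```python
import math
from typing import Callable, Dict, List

Vector = List[float]
# Shared signature: one query vector plus contiguous key and value lists.
AttentionKernel = Callable[[Vector, List[Vector], List[Vector]], Vector]


def _dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def two_pass_attention(q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Stand-in kernel 1: compute all scores, then a numerically stable softmax."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * _dot(q, k) for k in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def online_softmax_attention(q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Stand-in kernel 2: single pass that rescales a running accumulator."""
    scale = 1.0 / math.sqrt(len(q))
    running_max = -math.inf
    denom = 0.0
    acc = [0.0] * len(values[0])
    for k, v in zip(keys, values):
        score = scale * _dot(q, k)
        new_max = max(running_max, score)
        correction = math.exp(running_max - new_max)  # 0.0 on the first step
        weight = math.exp(score - new_max)
        denom = denom * correction + weight
        acc = [a * correction + weight * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / denom for a in acc]


# Hypothetical registry: adding a kernel is one entry, with no paged variant.
KERNELS: Dict[str, AttentionKernel] = {
    "two_pass": two_pass_attention,
    "online": online_softmax_attention,
}


def decode_step(backend: str, q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Serving-side call site: the only backend-specific part is the lookup."""
    return KERNELS[backend](q, keys, values)
```

Switching backends here changes a single string at the call site. Neither kernel takes a block table, which is the extra argument and bookkeeping that a paged design would have to thread through every kernel and through the serving framework.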