I sat down to write the pgvector section of this post—the HNSW index DDL, the reranker batching, the metadata filter shapes—and realized I kept reaching for the wrong file. The query I was proud of wasn’t the vector search. It was dedupeRagChunks.

That’s the moment this post changed shape. I’d been treating retrieval as a storage problem (pick the right index, tune the right parameters) when the engineering that actually broke and got fixed was the pipeline above it: how queries get constructed, how overlapping chunks get collapsed, how the final context gets formatted before a downstream model ever sees it.

So this is a post about the parts of RAG that don’t make it into architecture diagrams—drawn from real call sites and commit history, not plausible-looking SQL.

Key insight: RAG isn’t “a query”—it’s a chain of small, testable transforms

The non-obvious part of production retrieval isn’t embeddings. It’s the boring middle:

- how you choose what to search for,

- how you dedupe overlapping chunks,

- how you format the final context so downstream steps don’t drown.

That “boring middle” is where most retrieval systems actually break or succeed.
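To make “dedupe overlapping chunks” concrete, here is a minimal sketch. It is not the real dedupeRagChunks, whose internals this post doesn’t show. The chunk shape (file path, inclusive line range, relevance score) and the function names are assumptions. The rule it implements is simple: when two chunks from the same file overlap, keep the higher-scoring one.

```typescript
// Hypothetical chunk shape; real RAG rows may carry different fields.
interface RagChunk {
  filePath: string;
  startLine: number; // inclusive
  endLine: number;   // inclusive
  content: string;
  score: number;     // higher = more relevant
}

// Two chunks overlap if they come from the same file and their line ranges intersect.
function overlaps(a: RagChunk, b: RagChunk): boolean {
  return a.filePath === b.filePath && a.startLine <= b.endLine && b.startLine <= a.endLine;
}

// Greedy dedupe: visit chunks best-first and keep one only if it doesn't
// overlap anything already kept. Output stays sorted by descending score.
function dedupeOverlappingChunks(chunks: RagChunk[]): RagChunk[] {
  const sorted = [...chunks].sort((a, b) => b.score - a.score);
  const kept: RagChunk[] = [];
  for (const chunk of sorted) {
    if (!kept.some((k) => overlaps(k, chunk))) {
      kept.push(chunk);
    }
  }
  return kept;
}

const deduped = dedupeOverlappingChunks([
  { filePath: "src/a.ts", startLine: 1, endLine: 40, content: "...", score: 0.82 },
  { filePath: "src/a.ts", startLine: 30, endLine: 70, content: "...", score: 0.91 },
  { filePath: "src/b.ts", startLine: 1, endLine: 40, content: "...", score: 0.75 },
]);
// Keeps src/a.ts:30-70 (it beats the overlapping 1-40 chunk) and src/b.ts:1-40.
console.log(deduped.map((c) => `${c.filePath}:${c.startLine}-${c.endLine}`));
```

The greedy best-first pass is quadratic in the worst case, which is fine at the scale of a few dozen chunks. The other common choice is merging overlapping ranges into one larger chunk, which keeps more text but costs more context.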

In supabase/functions/blog-stage-research/index.ts, Stage 1 is described as:

“Deep Research — scans repos, fetches contexts, runs RAG queries, then persists research_context and enqueues the ‘draft’ stage.”

And the imports tell you what the research stage considers core:

ragQuery, dedupeRagChunks, formatRagResults

Those names are the shape of the system. Even without seeing the internals, you can already infer the design intent: retrieval is not a single SQL statement; it’s a pipeline that produces a clean, bounded “research context” artifact.
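One way to read that design intent is as plain function composition. The sketch below is hypothetical: the step signatures are assumptions standing in for ragQuery, dedupeRagChunks, and formatRagResults. Retrieval is stubbed with an in-memory substring match so the pipeline runs without a database.

```typescript
// Hypothetical chunk and step types; the real utilities' signatures aren't shown.
interface Chunk {
  id: string;
  content: string;
  score: number;
}

type QueryStep = (query: string) => Promise<Chunk[]>;
type DedupeStep = (chunks: Chunk[]) => Chunk[];
type FormatStep = (chunks: Chunk[]) => string;

// Runs every query, pools the hits, then dedupes and formats once.
// Each step is injected, so each can be replaced by a fake in tests.
async function buildResearchContext(
  queries: string[],
  query: QueryStep,
  dedupe: DedupeStep,
  format: FormatStep,
): Promise<string> {
  const perQuery = await Promise.all(queries.map((q) => query(q)));
  return format(dedupe(perQuery.flat()));
}

// Stub retrieval: a tiny in-memory corpus filtered by substring match.
const corpus: Chunk[] = [
  { id: "a", content: "dedupe overlapping chunks", score: 0.9 },
  { id: "b", content: "format the research context", score: 0.7 },
];
const fakeQuery: QueryStep = async (q) => corpus.filter((c) => c.content.includes(q));
// Keep the first occurrence of each id.
const dedupeById: DedupeStep = (chunks) =>
  chunks.filter((c, i) => chunks.findIndex((o) => o.id === c.id) === i);
const asBullets: FormatStep = (chunks) => chunks.map((c) => `- ${c.content}`).join("\n");

buildResearchContext(["chunks", "dedupe", "context"], fakeQuery, dedupeById, asBullets)
  .then((ctx) => console.log(ctx));
```

Passing the steps in as arguments is what makes each one testable on its own: a test can feed a fixed list of chunks to the dedupe step without touching embeddings at all.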

Think of it like panning for gold: embeddings get you to the right riverbed, but dedupe + formatting is the sieve that stops you from dumping wet gravel into the next stage.

How it works (as evidenced): Stage 1 research orchestrates retrieval utilities

The research stage is a Supabase Edge Function that chains multiple steps. Here’s the import surface—it matters because it proves the retrieval utilities are part of the production pipeline, not a README promise.

```typescript
// supabase/functions/blog-stage-research/index.ts
// STAGE 1 + 1b: Deep Research — scans repos, fetches contexts, runs RAG queries,
// then persists research_context and enqueues the 'draft' stage.
import { createClient } from 'https://esm.sh/@supabase/[email protected]';
import {
  corsHeaders, SUPABASE_URL, SUPABASE_SERVICE_ROLE_KEY,
  REPOS, STOPWORDS,
  setActiveGeneration, broadcastProgress, isCancelled, updateRun,
  fetchBlocklist, ragQuery, dedupeRagChunks, formatRagResults,
  fetchHighlights, fetchPreviousPosts, buildDiversityContext,
  THEME_COOLDOWN_DAYS,
} from '../_shared/blog-utils.ts';
import { fetchRepoContext, RepoContext } from '../_shared/blog-github.ts';
```

What I like about this import surface is that it forces separation of concerns. Stage 1 is an orchestrator; retrieval logic lives in _shared/blog-utils.ts. That makes it easier to harden retrieval without turning the stage handler into a god-function.

The tradeoff is obvious too: if _shared/blog-utils.ts becomes a junk drawer, you’ll end up with implicit coupling. The fact that retrieval is broken into ragQuery / dedupeRagChunks / formatRagResults is a good sign—it’s already modular.

The data flow

The full pipeline is: embed → index → ANN search → candidate fetch → cross-encoder rerank → final context assembly. Here’s what the codebase makes explicit:

- a retrieval step (ragQuery)

- a dedupe step (dedupeRagChunks)

- a formatting step (formatRagResults)

- a final persistence into research_context (described in the comment)

The code also shows that repo context can come either from GitHub or from an “embeddings fallback” mode (via fetchRepoContextFromEmbeddings).

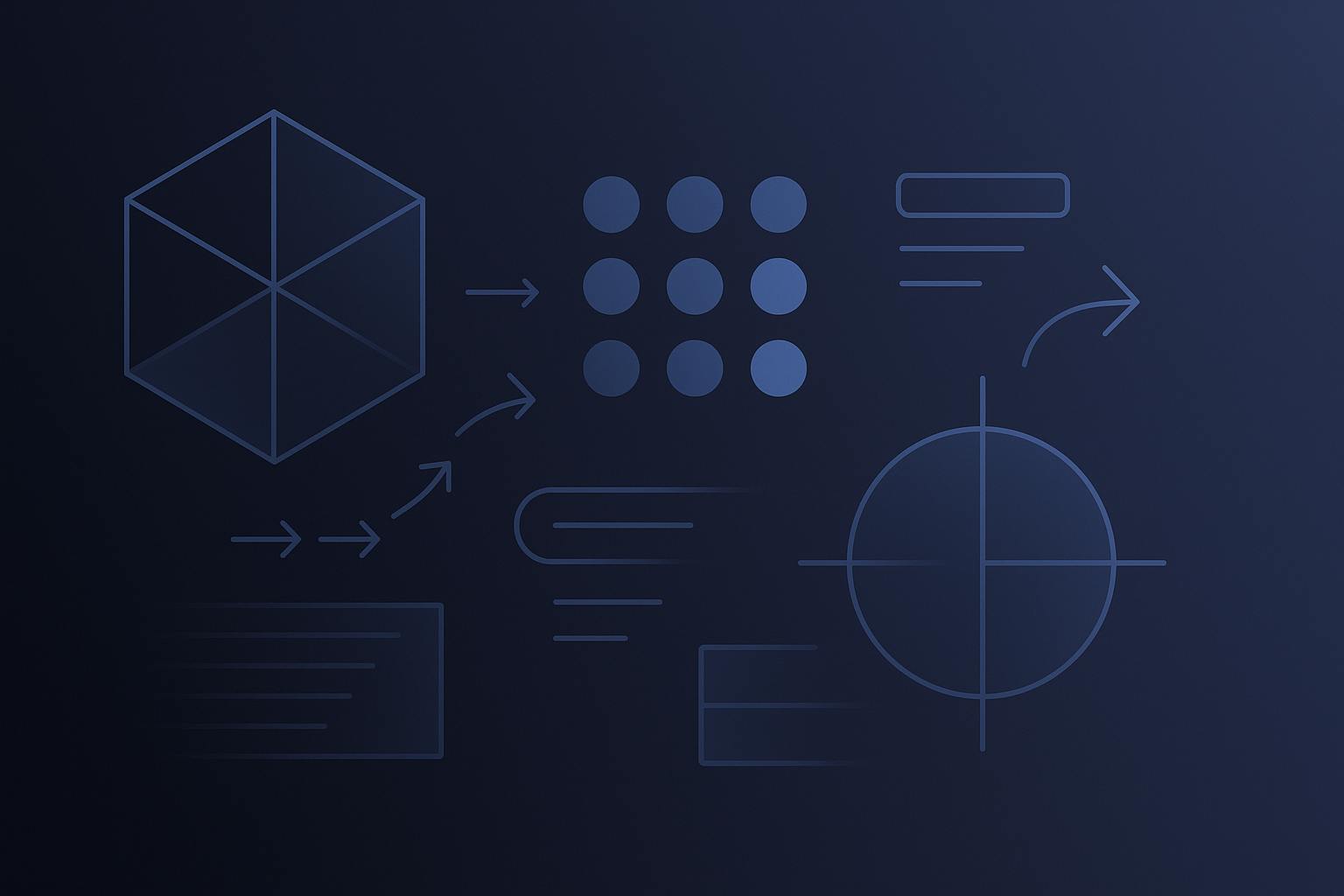

The diagram above sketches that architecture.

The pgvector HNSW query and BGE reranker sit beneath ragQuery—they’re the storage layer this post doesn’t need to cover, because the more interesting engineering is the pipeline above it.

Embeddings fallback (and why it matters for retrieval)

One of the more concrete changes in the commit history:

- feat: embeddings fallback when repo not on GitHub (a CRM integration)

- later fixes around that fallback, including prioritizing “feature files over infrastructure”

Even from the log line alone, the change tells me something real: the system can construct a RepoContext from an embeddings-backed store, and it tracks:

- file paths

- dependencies

- synthetic commits

Here’s the log line from that change:

```typescript
console.log(`[fetchRepoContext] Embeddings fallback for ${repoFilter}: ${allFilePaths.length} files, ${dependencies.length} deps, ${syntheticCommits.length} synthetic commits`);
```

That’s not fluff. If you can’t reliably fetch repo context from GitHub, your retrieval system becomes brittle: the best ranking in the world doesn’t help if the corpus disappears. The fallback is an availability feature, not a relevance feature.
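The availability point is easier to see as code. Below is a minimal sketch of the fallback pattern, not the real fetchRepoContext. The simplified RepoContext shape, both fetcher functions, the row format, and the way synthetic commits are built are all hypothetical. The GitHub fetcher simply throws to simulate a repo that isn’t on GitHub, and the log line mirrors the one quoted above.

```typescript
// Hypothetical, simplified RepoContext; the real type in _shared/blog-github.ts may differ.
interface RepoContext {
  source: "github" | "embeddings";
  filePaths: string[];
  dependencies: string[];
  commits: string[];
}

// Hypothetical row shape from an embeddings-backed store.
interface EmbeddingRow {
  filePath: string;
  content: string;
}

// Stand-in for the GitHub path; always fails here to simulate a repo that isn't on GitHub.
async function fetchFromGitHub(repo: string): Promise<RepoContext> {
  throw new Error(`${repo} is not on GitHub`);
}

// Rebuilds a thinner but usable context from stored chunks.
async function fetchFromEmbeddings(repoFilter: string, rows: EmbeddingRow[]): Promise<RepoContext> {
  const allFilePaths = Array.from(new Set(rows.map((r) => r.filePath)));
  // Naive dependency sniffing: bare (non-relative) specifiers in `from '...'` clauses.
  const dependencies = Array.from(new Set(
    rows.flatMap((r) => Array.from(r.content.matchAll(/from ['"]([^'".][^'"]*)['"]/g), (m) => m[1])),
  ));
  // No git history is available, so emit one placeholder "commit" per file.
  const syntheticCommits = allFilePaths.map((p) => `snapshot: ${p}`);
  console.log(`[fetchRepoContext] Embeddings fallback for ${repoFilter}: ${allFilePaths.length} files, ${dependencies.length} deps, ${syntheticCommits.length} synthetic commits`);
  return { source: "embeddings", filePaths: allFilePaths, dependencies, commits: syntheticCommits };
}

// Try the primary source first; fall back to the embeddings store on any failure.
async function fetchRepoContextWithFallback(repo: string, rows: EmbeddingRow[]): Promise<RepoContext> {
  try {
    return await fetchFromGitHub(repo);
  } catch {
    return fetchFromEmbeddings(repo, rows);
  }
}

const rows: EmbeddingRow[] = [
  { filePath: "src/deals.ts", content: "import { z } from 'zod';\nimport { util } from './util';" },
  { filePath: "src/deals.ts", content: "export const dealStages = [];" },
  { filePath: "src/contacts.ts", content: "import dayjs from 'dayjs';" },
];

fetchRepoContextWithFallback("acme/crm", rows).then((ctx) => console.log(ctx.source, ctx.dependencies));
```

The caller gets the same RepoContext type either way, which is what lets later stages ignore where the corpus came from.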

Query construction: the system explicitly extracts search queries from code identifiers

The query construction logic lives in _shared/blog-utils.ts, where I changed how search queries are extracted.

The commit message says:

- “extract code identifiers for RAG queries”

The diff shows the older heuristic (“first technical term from a code block”) was replaced. The key pieces:

- there is a function extractSearchQueries(title: string, tags: string[], content: str...)

- it used to look for the first line of a code block using this regex:

const codeMatch = content.match(/

…and then it would derive keywords from that first line.
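To see why the old approach was fragile, here is a rough reconstruction of a first-line heuristic. The actual regex in the diff is truncated above, so the fence-scanning logic, the function name, and the length filter are assumptions; the point is only what happens when a code block opens with an import.

```typescript
// Built at runtime so this snippet can sit inside a Markdown code fence.
const FENCE = "`".repeat(3);

// Hypothetical reconstruction: keywords from the first line of the first code block.
function firstCodeLineKeywords(content: string): string[] {
  const fenceStart = content.indexOf(FENCE);
  if (fenceStart === -1) return [];
  // Skip the opening fence line, which may carry a language tag such as "ts".
  const lineBreak = content.indexOf("\n", fenceStart);
  if (lineBreak === -1) return [];
  const firstLine = content.slice(lineBreak + 1).split("\n")[0] ?? "";
  // Split on non-word characters and drop very short tokens.
  return firstLine.split(/\W+/).filter((w) => w.length > 3);
}

const post = [
  "Some prose about retrieval.",
  `${FENCE}ts`,
  "import { createClient } from './client';",
  "const unique = dedupeRagChunks(results);",
  FENCE,
].join("\n");

// Only boilerplate tokens come back; dedupeRagChunks on the next line is never seen.
console.log(firstCodeLineKeywords(post));
```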

Two things I learned the hard way building retrieval systems like this:

- naive keyword extraction from code fences is fragile (code blocks often start with imports or boilerplate),

- extracting identifiers is a better signal if you can do it deterministically.
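Here is a minimal sketch of deterministic identifier extraction. It is an illustration, not the code behind the “extract code identifiers for RAG queries” commit: the regex, the small noise list, and the extractCodeIdentifiers name are assumptions. It scans every fenced block instead of one line, keeps camelCase, PascalCase, and snake_case names, and ranks them by frequency with an alphabetical tiebreak so the output is stable.

```typescript
// Built at runtime so this snippet can sit inside a Markdown code fence.
const FENCE = "`".repeat(3);

// Hypothetical noise list: identifiers so common they say nothing about a post.
const NOISE = new Set(["createClient", "toString", "useState", "useEffect"]);

// camelCase, PascalCase with an inner capital, or snake_case. Requiring a case
// change or underscore skips plain English words and most keywords.
const IDENTIFIER = /\b(?:[a-z]+[A-Z]\w*|[A-Z][a-z0-9]+[A-Z]\w*|[a-z]+_[a-z0-9_]+)\b/g;

// Hypothetical helper: identifiers from all fenced blocks, most frequent first.
function extractCodeIdentifiers(content: string, limit = 5): string[] {
  const counts = new Map<string, number>();
  // Odd-indexed segments sit between fences (assumes fences are balanced).
  const codeBlocks = content.split(FENCE).filter((_, i) => i % 2 === 1);
  for (const block of codeBlocks) {
    for (const id of block.match(IDENTIFIER) ?? []) {
      if (!NOISE.has(id)) counts.set(id, (counts.get(id) ?? 0) + 1);
    }
  }
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .slice(0, limit)
    .map(([id]) => id);
}

const doc = [
  "Intro prose mentions retrieval quality.",
  `${FENCE}ts`,
  "import { createClient } from './client';",
  "const raw = await ragQuery(client, query);",
  "const unique = dedupeRagChunks(raw);",
  "const research_context = formatRagResults(dedupeRagChunks(raw));",
  FENCE,
].join("\n");

console.log(extractCodeIdentifiers(doc));
```

Unlike the first-line approach, the import on the first line no longer dominates: createClient is filtered as noise, and the names the post is actually about rise to the top.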

The retrieval pipeline utilities

The Stage 1 imports—ragQuery, dedupeRagChunks, formatRagResults—are the retrieval API surface. The implementations live in _shared/blog-utils.ts.

What matters isn’t the function signatures—it’s that retrieval is three explicit steps (query, dedupe, format) rather than one opaque call. Each step is independently testable, and when retrieval quality degrades, you know which step to instrument first.
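Because the steps are separate functions, instrumenting them can be as small as a wrapper. This is a sketch of one way to do it, not code from the repo: the instrument helper and StepMetric shape are hypothetical. Each synchronous array-to-array step records item counts in and out plus elapsed time, so a regression shows up as, for example, a dedupe step that suddenly collapses far more chunks than usual.

```typescript
// Hypothetical per-step metrics record.
interface StepMetric {
  step: string;
  inCount: number;
  outCount: number;
  ms: number;
}

// Wraps a synchronous array-to-array step and records what it did on each call.
function instrument<T, U>(
  step: string,
  fn: (items: T[]) => U[],
  metrics: StepMetric[],
): (items: T[]) => U[] {
  return (items) => {
    const started = Date.now();
    const out = fn(items);
    metrics.push({ step, inCount: items.length, outCount: out.length, ms: Date.now() - started });
    return out;
  };
}

const metrics: StepMetric[] = [];
// Toy steps: drop exact-duplicate texts, then keep at most two items.
const dedupe = instrument("dedupe", (chunks: string[]) => Array.from(new Set(chunks)), metrics);
const trim = instrument("trim", (chunks: string[]) => chunks.slice(0, 2), metrics);

const result = trim(dedupe(["a", "b", "a", "c", "b"]));
// result holds "a" and "b"; metrics record 5 -> 3 for dedupe and 3 -> 2 for trim.
console.log(result, metrics.map((m) => `${m.step}: ${m.inCount} -> ${m.outCount}`));
```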

Nuance: why this still matters even without the SQL

It’s tempting to treat “RAG” as synonymous with “vector search,” but in this codebase the more interesting engineering choice is that retrieval is a stage with explicit artifacts.

- Stage 1 “persists research_context” (comment)

- Stage 1 then “enqueues the ‘draft’ stage” (comment)

That’s a production posture: retrieval output is not ephemeral. It’s stored, inspectable, and can be replayed.
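A stored, replayable artifact mostly means recording enough inputs next to the output. The shape below is a hypothetical sketch, not the actual research_context schema, which this post doesn’t show. It keeps the queries and the chunks that survived dedupe alongside the formatted text. A later run can then re-render from stored chunks without hitting retrieval again and check whether the result still matches.

```typescript
// Hypothetical stored shapes; the real research_context schema may differ.
interface StoredChunk {
  key: string;     // e.g. "path:start-end"
  content: string;
}

interface ResearchContextArtifact {
  version: 1;
  createdAt: string;     // ISO-8601 timestamp
  queries: string[];     // what was searched for
  chunks: StoredChunk[]; // what survived dedupe
  formatted: string;     // exactly what the draft stage reads
}

type Formatter = (chunks: StoredChunk[]) => string;

function buildArtifact(queries: string[], chunks: StoredChunk[], format: Formatter): ResearchContextArtifact {
  return { version: 1, createdAt: new Date().toISOString(), queries, chunks, formatted: format(chunks) };
}

// Replay only the deterministic format step from stored chunks (no retrieval)
// and check whether it reproduces what was persisted.
function replayMatches(artifact: ResearchContextArtifact, format: Formatter): boolean {
  return format(artifact.chunks) === artifact.formatted;
}

const formatV1: Formatter = (cs) => cs.map((c) => `[${c.key}]\n${c.content}`).join("\n\n");
const formatV2: Formatter = (cs) => cs.map((c) => c.content).join("\n");

const artifact = buildArtifact(["dedupeRagChunks"], [{ key: "src/a.ts:1-40", content: "some chunk text" }], formatV1);
// A JSON round trip stands in for writing to and reading back from the database.
const restored = JSON.parse(JSON.stringify(artifact)) as ResearchContextArtifact;

console.log(replayMatches(restored, formatV1)); // true: same formatter, same text
console.log(replayMatches(restored, formatV2)); // false: a formatting change is detectable
```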

The limitation is also clear: if the stored context is wrong, downstream stages will be wrong consistently. That’s why the dedupe and formatting steps are first-class—those are the choke points where you can reduce garbage early.
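Formatting is also where you can enforce a bound. The sketch below is hypothetical, since formatRagResults’ real output format and limits aren’t shown here. It renders chunks best-first under a character budget (characters are an assumed stand-in for tokens) and skips whatever doesn’t fit rather than truncating mid-chunk.

```typescript
// Hypothetical scored chunk; field names are assumptions.
interface ScoredChunk {
  source: string;  // e.g. a file path
  content: string;
  score: number;
}

// Renders chunks best-first until the character budget is spent.
// Whole chunks are skipped, never cut, so the next stage never sees half a function.
function formatWithBudget(chunks: ScoredChunk[], maxChars: number): string {
  const sections: string[] = [];
  let used = 0;
  for (const c of [...chunks].sort((a, b) => b.score - a.score)) {
    const section = `### ${c.source} (score ${c.score.toFixed(2)})\n${c.content.trim()}`;
    const cost = section.length + (sections.length > 0 ? 2 : 0); // "\n\n" separator
    if (used + cost > maxChars) continue; // a smaller, lower-ranked chunk may still fit
    sections.push(section);
    used += cost;
  }
  return sections.join("\n\n");
}

const ctx = formatWithBudget([
  { source: "a.ts", content: "x".repeat(50), score: 0.9 },
  { source: "b.ts", content: "y".repeat(500), score: 0.8 },
  { source: "c.ts", content: "z".repeat(20), score: 0.7 },
], 200);
console.log(ctx);
```

Skipping instead of truncating trades a little recall for never handing the draft stage a half-cut chunk; here the oversized b.ts chunk is dropped while the smaller c.ts chunk still fits.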

What went wrong (grounded): retrieval fallbacks and prioritization had to be fixed

The diff history shows multiple fixes around embeddings fallback:

- “embeddings fallback when repo not on GitHub”

- “embeddings fallback prioritizes feature files over infrastructure”

- “filePaths → allFilePaths reference in embeddings fallback log line”

Even without the full code, the story is familiar: fallbacks tend to start as “just make it work,” then you realize the fallback corpus is dominated by the wrong stuff (infrastructure, boilerplate), and you have to bias it toward feature files.
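Biasing a fallback corpus toward feature files can be as simple as a path-based priority applied before taking the top N files. The patterns and weights below are illustrative assumptions, not the heuristic from the commit: infrastructure-looking paths (migrations, CI config, lockfiles, generated type declarations) sort last, and feature-looking paths sort first, with original order preserved among ties.

```typescript
// Hypothetical path patterns; tune for your own repos.
const INFRA_PATTERNS: RegExp[] = [
  /(^|\/)migrations\//,
  /(^|\/)\.github\//,
  /(^|\/)(package-lock\.json|yarn\.lock|pnpm-lock\.yaml|deno\.lock)$/,
  /\.config\.(js|ts|mjs|cjs)$/,
  /(^|\/)(Dockerfile|docker-compose\.ya?ml)$/,
  /\.d\.ts$/,
];
const FEATURE_PATTERNS: RegExp[] = [/(^|\/)(components|features|pages|routes|functions)\//];

// Higher = more likely to describe what the product actually does.
function pathPriority(path: string): number {
  if (INFRA_PATTERNS.some((re) => re.test(path))) return -1;
  if (FEATURE_PATTERNS.some((re) => re.test(path))) return 1;
  return 0;
}

// Sort by priority (original index breaks ties), then keep the top `limit` paths.
function prioritizeFeatureFiles(paths: string[], limit: number): string[] {
  return paths
    .map((path, index) => ({ path, index, priority: pathPriority(path) }))
    .sort((a, b) => b.priority - a.priority || a.index - b.index)
    .slice(0, limit)
    .map((p) => p.path);
}

const picked = prioritizeFeatureFiles([
  "supabase/migrations/20240101_init.sql",
  "package-lock.json",
  "src/lib/format.ts",
  "src/components/DealBoard.tsx",
  ".github/workflows/ci.yml",
  "supabase/functions/sync-contacts/index.ts",
], 3);
console.log(picked);
```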

The prioritization work is captured in the commit history: the fallback heuristic was changed to rank feature files ahead of infrastructure, and the pipeline got better for it.

Closing

I did build and ship a retrieval stage that’s explicitly wired into my blog research pipeline—ragQuery feeds dedupeRagChunks, which feeds formatRagResults, and the result is persisted as research_context before drafting even begins. What I’m not willing to do is invent the pgvector HNSW SQL and BGE reranking machinery for a blog post, because the only thing worse than a missing detail is a confident lie that looks like engineering.