The GDC, or Game Developers Conference, is a very special video game event. It focuses not on announcing new titles but on presentations and roundtable talks among the people who create video games. Fans of the industry's most technical side consider it unmissable, and one company that never skips an edition is NVIDIA. At GDC 2026 it has arrived with all its muscle and a clear idea.

The future of gaming runs through artificial intelligence.

DLSS 4.5, the umbrella. Leaving aside the current state of the PC market, shaped by the demands of artificial intelligence and the unprecedented crisis we find ourselves in, AI applied to video games is something NVIDIA has been pushing for several generations. A lot has happened since the RTX 2000 series and the arrival of real-time ray tracing, along with a solution to keep performance steady: DLSS.

Deep Learning Super Sampling is an upscaling tool that lets the GPU render the game at a lower resolution and then scale it up to the native resolution of our monitor. This improves frame rates while maintaining image quality. Over the generations, DLSS has evolved into a complete neural rendering suite involving several technologies.
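To put numbers on the idea of rendering low and scaling up, here is a minimal sketch of the arithmetic. The function names and the 0.5 per-axis scale factor are illustrative assumptions, not NVIDIA's API or its actual quality presets.

```python
def render_resolution(output_width, output_height, scale):
    """Internal render size for a per-axis scale factor in (0, 1].

    `scale` is a hypothetical parameter chosen for illustration.
    """
    if not 0 < scale <= 1:
        raise ValueError("scale must be in (0, 1]")
    return round(output_width * scale), round(output_height * scale)


def pixel_fraction(scale):
    """Share of the output pixels the GPU actually renders."""
    return scale * scale


# A 4K output rendered internally at half resolution on each axis.
width, height = render_resolution(3840, 2160, 0.5)
print(width, height)        # 1920 1080
print(pixel_fraction(0.5))  # 0.25
```

Halving each axis means the GPU shades only a quarter of the pixels, which is where the frame rate gain comes from; the upscaler then has to reconstruct the rest.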

It is no longer just upscaling through deep learning, but a whole series of techniques to improve both image and performance. For its core work, DLSS 4.5 features greater scene understanding, improving both image quality and performance at higher resolutions. But it has more up its sleeve.

Frame Generation. One of them, perhaps the most notable, is the enhanced frame generation mode. In the previous generation, DLSS could multiply the frames per second by up to four (through deep learning, three frames were “invented” for each native one rendered by the GPU); with DLSS 4.5 the figure increases to 6x.
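The multiplier arithmetic can be sketched as follows. This is an idealized model that assumes generated frames are free, which real hardware does not guarantee; the function name is hypothetical.

```python
def frame_gen_stats(rendered_fps, multiplier):
    """Idealized frame generation arithmetic (ignores generation overhead).

    A multiplier of m means each rendered frame is followed by m - 1
    generated frames.
    """
    if multiplier < 1:
        raise ValueError("multiplier must be at least 1")
    generated_per_rendered = multiplier - 1
    displayed_fps = rendered_fps * multiplier
    generated_share = generated_per_rendered / multiplier
    return displayed_fps, generated_per_rendered, generated_share


for m in (4, 6):
    fps, extra, share = frame_gen_stats(40, m)
    print(f"{m}x: {fps} FPS shown, {extra} generated per rendered, "
          f"{share:.0%} of frames generated")
```

With a 40 FPS rendered base, 4x would ideally show 160 FPS and 6x would show 240 FPS, with three quarters and five sixths of the displayed frames being generated, respectively.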

This is crucial to maintain fluidity in games with extreme graphical loads if we want to play at 4K. At 1440p the power of the GPU is more than enough, but to play at 4K with all the current effects enabled, generating frames seems key if we want to take advantage of the high refresh rates of today's monitors. According to NVIDIA data, the step from 4x to 6x increases performance in path-traced titles at 4K by up to 35% on RTX 50 GPUs.

Frame generation relies on Reflex technology, also from NVIDIA, to keep latency to a minimum. The result is a curious scenario: we can be playing a game in which most of the frames are generated rather than native, without noticing any added latency.

Multi Frame Gen, the “magic”. Within that frames-per-second multiplier there is a very interesting technology: DLSS 4.5 Dynamic Multi Frame Gen. Its name is quite self-explanatory. Basically, it consists of an algorithm that picks the best frame multiplier at each moment depending on the image, the performance of the GPU and even whether vertical synchronization is enabled.

The multiplier switches automatically and continuously between 2x and 6x (passing through the intermediate values) with the aim of always maintaining the highest possible frame rate without wasting resources. That is to say: if we have a 120 Hz monitor, the GPU adjusts the multiplier to the situation so that it always tries to deliver those 120 FPS, and no more than it needs.

If we are in a part of a game with a low graphical load (an interior, for example), a 4x multiplier may be enough. If we go outside, we may need that 6x push, and the system switches automatically. The next time we go inside it will drop back to 4x, and so on constantly. The explanation is simple: the aim is to make the experience as consistent as possible without generating frames needlessly, so that native frames are prioritized over those generated by the AI.
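The behavior described above can be approximated with a very small selection rule. NVIDIA has not published the algorithm, so this is only a sketch under the assumption that the system picks the smallest multiplier that reaches the target frame rate; `pick_multiplier` and its parameters are hypothetical names.

```python
import math


def pick_multiplier(rendered_fps, target_fps, min_mult=2, max_mult=6):
    """Smallest multiplier in [min_mult, max_mult] that reaches target_fps.

    Assumed heuristic for illustration only, not NVIDIA's actual logic.
    Falls back to max_mult when even that cannot reach the target.
    """
    if rendered_fps <= 0:
        raise ValueError("rendered_fps must be positive")
    needed = math.ceil(target_fps / rendered_fps)
    return max(min_mult, min(max_mult, needed))


# 120 Hz monitor: a lighter interior scene versus a heavier exterior one.
print(pick_multiplier(30, 120))  # 4
print(pick_multiplier(20, 120))  # 6
print(pick_multiplier(60, 120))  # 2
```

Choosing the smallest sufficient multiplier keeps the share of native frames as high as possible, which matches the stated goal of not generating frames needlessly.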

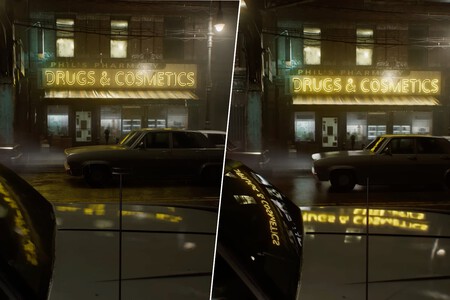

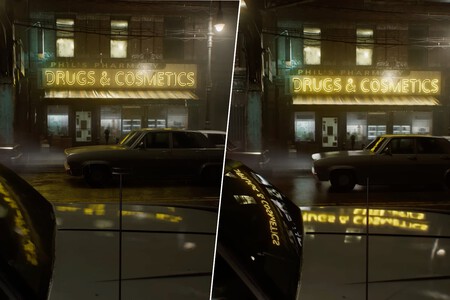

“New” word: path tracing. All these technologies exist to bring to life games that will soon consume more and more of the PC's native resources. If ray tracing is already demanding, we are going to have to get used to another term: path tracing. It is not actually new; it is basically a more complete form of ray tracing that attempts to simulate even more realistically how light interacts with game geometry.

Ray tracing can be applied to individual effects (shadows, reflections or global illumination) separately or to all of them, whereas path tracing is a unified solution. In short: it is like applying every possible ray-traced effect at the same time. This consumes a lot of resources, something we can see in games like ‘Cyberpunk 2077’ or ‘Resident Evil Requiem’, and it is the reason behind the DLSS 4.5 rendering techniques and 6x frame generation.

The games are ready. In the end, it is about AI making it possible to reach performance that the GPU, on its own, might not achieve. With top-of-the-range graphics cards like the RTX 5080 or the RTX 5090 we may prefer to lean on native resources, but with others like the 5070 or the 5060, this AI ‘help’ lets us stretch a game's visual quality further while maintaining good performance.

All these tools together will be necessary if we take into account what is to come. We have already mentioned some games, but over the next few months others will arrive, such as ‘007 First Light’, ‘Control Resonant’, ‘Star Wars Galactic Racer’ or ‘Directive 8020’, that promise to be visual marvels and will integrate these technologies.
