In a university hostel in Nairobi, a 19-year-old founder has assembled what he describes as a full-stack artificial intelligence company, featuring a large language model (LLM) trained on Kenyan dialects, a voice agent already handling customer queries at a savings and credit cooperative (SACCO), and a prompt tool aimed at everyday users.

The company, Map Maven GMB, founded in 2025, claims it is worth millions, based on a formal valuation that leans on projected revenue growth in an expanding AI market.

The pitch lands at a moment when global AI systems still struggle with many African languages, particularly those with limited digital data. That gap is not just a technical problem but also a market opportunity for Map Maven GMB. The question is whether the company’s products can move from early promise to measurable performance before larger players decide the same problem is worth solving.

Building a model around what global systems miss

The company’s key offering is Kaya, a language model built on Meta’s LLaMA architecture at 70 billion parameters. Rather than competing broadly with large language models like OpenAI’s GPT-4, the company has chosen to specialise, layering locally relevant data onto a powerful open-source base.

The training process, according to the founder and chief executive, Abraham Muka, who spoke to me on March 23, combines open datasets from platforms like Kaggle and Hugging Face with a proprietary dataset, Swaweb, which the company says it built to capture Kenyan language patterns and dialectal nuances. Native speakers were involved in labelling, an effort to ground the model in how language is actually used rather than how it is formally structured.

“The combination of LLaMA’s 70 billion parameter architecture with domain-specific Kenyan language training data is what gives Kaya its capability on local dialects,” Muka said.

Yet the strength of that bet remains difficult to assess. Kaya has not yet been benchmarked publicly. There are no comparative results showing how it performs against global models on the same tasks, nor any detailed breakdown of where it succeeds or fails. The company describes the model as being in a pre-deployment phase, with formal evaluations expected as rollout continues.

Until those results are available, Kaya exists largely as a claim: plausible, but untested in ways that matter to customers deciding whether to rely on it.

What remains unclear is how the model is actually trained and deployed, beyond the high-level description. The company says it builds on LLaMA’s 70B base but does not specify whether Kaya is fully fine-tuned or adapted using lighter methods such as parameter-efficient tuning. That distinction matters because it affects both performance and cost.
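To see why the distinction matters for cost, consider a rough parameter count. The sketch below compares full fine-tuning with LoRA, one common parameter-efficient method. The company has not said which approach it used, and the layer count, hidden size, adapter rank, and choice of adapted matrices are illustrative assumptions, not details of Kaya.

```python
# Rough comparison of trainable parameters: full fine-tuning vs LoRA.
# All architecture figures below are illustrative assumptions, not
# disclosed details of Kaya.

BASE_PARAMS = 70_000_000_000  # "70 billion parameters", per the company


def lora_params_per_matrix(d_in: int, d_out: int, rank: int) -> int:
    """LoRA adds two low-rank matrices (d_in x rank and rank x d_out)."""
    return rank * (d_in + d_out)


def lora_trainable_params(layers: int, hidden: int, rank: int,
                          matrices_per_layer: int) -> int:
    """Total adapter parameters, assuming square hidden x hidden matrices."""
    per_matrix = lora_params_per_matrix(hidden, hidden, rank)
    return layers * matrices_per_layer * per_matrix


# Assumed setup: 80 layers, hidden size 8192, rank 16,
# two adapted projection matrices per layer.
adapter = lora_trainable_params(layers=80, hidden=8192, rank=16,
                                matrices_per_layer=2)

print(f"Full fine-tuning updates: {BASE_PARAMS:,} parameters")
print(f"LoRA adapters update:     {adapter:,} parameters")
print(f"Share of the base model:  {adapter / BASE_PARAMS:.4%}")
```

Under these assumptions, the adapters amount to well under a tenth of one percent of the base model, which is why lighter methods are far cheaper to train, though they may not match a full fine-tune on every task.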

There is also no detail on dataset size, token distribution across dialects, or how the model handles code-switching, which is common in Kenyan language use. Without that, it is difficult to tell whether Kaya is optimised for real conversational input or narrower, structured prompts.

Data as an asset, but how strong is it?

The company frames its proprietary dataset as a key advantage, arguing that while anyone can access open datasets, the nuances of Kenyan dialects require data not available in public repositories.

“This is actually one of the structural advantages of having built our own dataset,” Muka said. “Swaweb is an asset that belongs to us and cannot be replicated by simply downloading the same public sources.”

In language models, data quality and relevance often determine performance more than model size alone. Owning that data can also reduce legal risk and give a company control over how its systems evolve.

But ownership alone does not guarantee defensibility. The strength of Swaweb depends on factors the company has not disclosed in detail: its size, its diversity across regions and contexts, and how frequently it is updated. A dataset that captures a wide range of real-world interactions and continues to grow with usage can become difficult to replicate. A smaller or static dataset, even if proprietary, offers less protection against competitors with greater resources.

There is also the question of coverage, as Kenyan dialects vary not just by language but by region, age and context. A dataset that captures formal usage but misses slang, hybrid speech, or urban code-switching will struggle in real deployments.

A working product in a small but telling market

While Kaya is still being evaluated, Sauti, the company’s voice agent, is already in use. At Natcon Sacco, a savings and credit cooperative with just over 280 members, the system handles routine queries that would otherwise take up staff time, such as loan eligibility, interest rates, branch information, and other basic requests.

The problem it addresses is familiar to small financial institutions. Limited staff must manage a steady flow of repetitive inquiries, often outside standard working hours and in multiple languages. Sauti offers a way to absorb that load, providing responses in English and, in its pilot phase, Swahili.

At Natcon, Sauti’s value does not depend on broad claims about artificial intelligence, but on whether members get answers faster and whether staff time is freed for more complex work. That is where the product will be judged. If Sauti can demonstrate clear reductions in workload and consistent user satisfaction, it has a path to expansion across similar institutions.

The economics of running a large model

Kaya is hosted on Hugging Face, an open-source AI platform and community, with the company estimating a cost of about $0.20 per query. The model is being deployed, and a public version is expected in the near term.

At this stage, costs are easier to manage because usage is limited. The challenge comes with scale. Large models at 70 billion parameters require significant computational resources, and costs can rise quickly as demand increases or as clients expect faster response times.
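A back-of-envelope sketch shows how quickly the bill grows. It takes the company's estimate of $0.20 per query at face value and assumes the per-query cost stays flat as volume rises, which may not hold in practice. The daily query volumes are hypothetical scenarios, not reported usage, and the Natcon figure rounds "just over 280 members" down to 280.

```python
# Monthly inference cost at a flat per-query price.
# The $0.20 figure is the company's own estimate; the query volumes
# are hypothetical scenarios, not reported usage.

COST_PER_QUERY_USD = 0.20


def monthly_inference_cost(queries_per_day: int, days: int = 30,
                           cost_per_query: float = COST_PER_QUERY_USD) -> float:
    """Cost in US dollars for a month of queries at a flat price."""
    return queries_per_day * days * cost_per_query


SCENARIOS = {
    "small pilot": 50,
    "one query per Natcon member per day": 280,
    "ten similar institutions": 2_800,
    "consumer-scale usage": 50_000,
}

for label, queries_per_day in SCENARIOS.items():
    cost = monthly_inference_cost(queries_per_day)
    print(f"{label:<38} {queries_per_day:>7,} queries/day  ${cost:>12,.2f}/month")
```

At one query per Natcon member per day, the estimate works out to about $1,680 a month, a useful yardstick against the company's website prices of KES 19,500 to KES 45,000 ($150 to $345).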

The company can continue relying on third-party infrastructure, which offers speed and flexibility but limits control, or invest in its own systems, which require capital but can reduce long-term costs. That decision has not yet been tested because the model has not yet faced sustained, high-volume use.

Funding the present while betting on the future

The company’s financial base is its services business, not its AI products. Website development, including SEO-focused builds and AI-powered features, accounts for most of its revenue. Prices range from KES 19,500 ($150) to KES 45,000 ($345), with an average client value of KES 45,000 ($345).

This model provides immediate cash flow, which is critical for a company operating without external funding. It also creates a balancing act: services demand time but deliver predictable income, while products require sustained investment and offer uncertain returns.

The company’s newer products are still in early stages. Daraja, a recently launched prompt tool, attracted 94 users on its first day without paid promotion. Kaya is still being deployed. Sauti is live but in a limited setting.

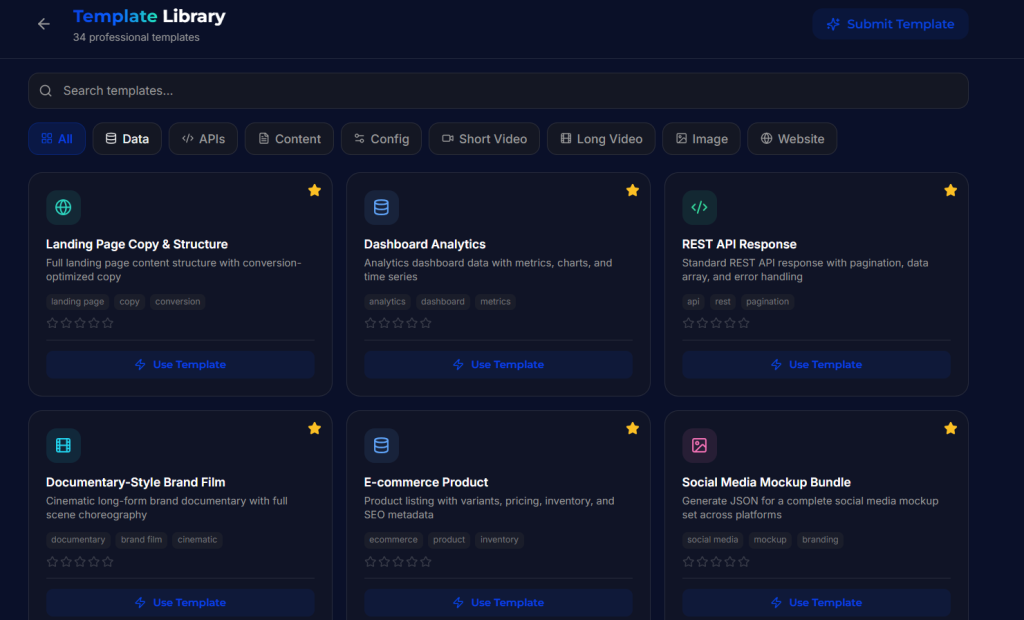

How does Daraja Connect work?

Daraja Connect adds a layer between the user and an LLM. You write a prompt as usual. The system reads intent and returns structured JSON, a text format that organises data into key-value pairs and nested fields, making it easy for machines to parse and use. That output is what you feed into a model or workflow that needs strict formatting.

In use, the flow is simple. You sign in, type a request, and wait for the conversion. A loose instruction like “create a product catalogue with pricing and stock levels” returns a defined schema with fields, types, and nested objects. You copy the JSON and move to the next step.

Many LLM workflows break at this stage, where inconsistent prompts lead to malformed outputs. Daraja narrows that gap by standardising inputs upfront.
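To make that concrete, here is a minimal sketch of what a structured output for the product catalogue prompt might look like, and how a downstream step could check it before use. The JSON layout, field names, and type list are hypothetical illustrations; the company has not published Daraja's actual output format.

```python
import json

# Hypothetical structured output for the prompt
# "create a product catalogue with pricing and stock levels".
# This is NOT Daraja's documented format, only an illustration.
EXAMPLE_OUTPUT = """
{
  "entity": "product_catalogue",
  "fields": [
    {"name": "product_name", "type": "string"},
    {"name": "pricing", "type": "object", "fields": [
      {"name": "amount", "type": "number"},
      {"name": "currency", "type": "string"}
    ]},
    {"name": "stock_level", "type": "integer"}
  ]
}
"""

ALLOWED_TYPES = {"string", "number", "integer", "boolean", "object", "array"}


def check_fields(fields, path="fields"):
    """Return a list of problems found in a (possibly nested) field list."""
    if not isinstance(fields, list):
        return [f"{path}: expected a list"]
    problems = []
    for i, field in enumerate(fields):
        here = f"{path}[{i}]"
        if not isinstance(field, dict):
            problems.append(f"{here}: expected an object")
            continue
        if not isinstance(field.get("name"), str):
            problems.append(f"{here}: missing or non-string name")
        ftype = field.get("type")
        if not isinstance(ftype, str) or ftype not in ALLOWED_TYPES:
            problems.append(f"{here}: unknown type {ftype!r}")
        elif ftype == "object":
            # Nested objects carry their own field list; check it recursively.
            problems.extend(check_fields(field.get("fields"), f"{here}.fields"))
    return problems


def validate(raw: str):
    """Parse raw text as JSON and check the schema; empty list means OK."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"malformed JSON: {exc.msg}"]
    if not isinstance(doc, dict):
        return ["top level: expected an object"]
    return check_fields(doc.get("fields"))


print(validate(EXAMPLE_OUTPUT))    # well-formed example
print(validate('{"fields": ['))    # truncated output
```

A check like this fails fast on truncated or loosely typed output, the kind of breakage that standardised inputs are meant to reduce.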

There is a cost. For quick prompts, the added layer can feel slow. You move from one interface to another, which interrupts the flow.

Growth through visibility, and its limits

Customer acquisition has so far relied on a mix of direct outreach, social media, and early media attention. Muka and his team have leaned heavily into building in public, sharing the company’s progress across multiple platforms and drawing interest from local media.

Visibility can bring attention, but it does not guarantee retention or revenue. Turning early interest into sustained growth will depend on whether the products solve real problems consistently enough for users to return and pay.

A race against attention

The company’s core argument is that global AI systems do not yet work well for many African languages, particularly those with limited digital representation. That gap, it argues, creates an opportunity for a focused local player.

“Global models including GPT-4 and Gemini perform well on standard Swahili but degrade significantly on low-resource Kenyan dialects like Luo and Luhya,” Muka said.

That observation aligns with broader patterns in AI development. Models tend to perform best on languages with abundant data and commercial demand. But large AI companies have the resources to expand into new languages quickly once they see sufficient demand. The window for a smaller company, then, is defined by how quickly it can build better data, improve its models, and secure users before competition intensifies.

A valuation built on what comes next

The company’s valuation, calculated using a mix of methods and weighted toward discounted cash flow, assumes that its products will grow into a larger share of its business. The projections include revenue rising from $342,000 in the first year to more than $640,000 within five years, driven by growth in AI-powered services and products.
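The projections imply a growth rate that can be worked out directly. The sketch below computes the compound annual growth rate between the two revenue figures and shows how a discounted cash flow method shrinks future money into today's terms. It assumes $640,000 is reached in year five, which gives four compounding periods. The 25% discount rate and the flat cash flows are hypothetical; the company has not disclosed its own inputs.

```python
# Implied growth rate and a generic discounting helper.
# Revenue figures come from the company's projections; the discount
# rate and example cash flows are hypothetical assumptions.

YEAR_1_REVENUE = 342_000
YEAR_5_REVENUE = 640_000


def implied_cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate needed to go from start to end."""
    return (end / start) ** (1 / periods) - 1


def present_value(cash_flows, discount_rate: float) -> float:
    """Discount a list of yearly cash flows (year 1 first) to today."""
    return sum(cf / (1 + discount_rate) ** year
               for year, cf in enumerate(cash_flows, start=1))


growth = implied_cagr(YEAR_1_REVENUE, YEAR_5_REVENUE, periods=4)
print(f"Implied annual revenue growth: {growth:.1%}")

# Hypothetical: $100,000 of free cash flow a year for five years,
# discounted at 25%, a rate chosen only to illustrate early-stage risk.
example_pv = present_value([100_000] * 5, discount_rate=0.25)
print(f"Present value of the example cash flows: ${example_pv:,.0f}")
```

On these figures, revenue would need to grow by about 17% a year, every year. The higher the discount rate an investor applies for risk, the less those later years count toward the valuation.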

At present, most revenue comes from services rather than scalable products. The valuation assumes that this will change. That shift has yet to happen.

The gap between current revenue and projected growth is where most of the risk sits. Today, the company is a services business funding early-stage products. The valuation assumes those products will scale into a larger share of revenue within a few years.

That transition is not guaranteed. It depends on whether Kaya can prove its performance, whether Sauti can scale beyond a single deployment, and whether Daraja can retain users beyond early curiosity.

Until then, the valuation reflects potential more than operating reality.