Anthropic, the AI research company behind the Claude family of models, has demonstrated an unprecedented pace of innovation and deployment throughout 2026. This year has seen a continuous stream of advancements, with new models and features rolling out at a remarkable speed. The company’s commitment to rapid iteration is evident in its release schedule, which has averaged a major update every two weeks. March 2026 alone witnessed over 14 distinct launches, ranging from new model versions to significant feature enhancements across its product ecosystem. This aggressive development cycle underscores Anthropic’s ambition to lead the frontier AI space, offering increasingly capable and versatile tools to both developers and end-users.

The core of Anthropic’s 2026 strategy revolves around a tiered model architecture designed to cater to diverse use cases and performance requirements. This includes the flagship Claude Opus, the versatile Claude Sonnet, and the efficient Claude Haiku. Each model is meticulously engineered to excel in specific domains, offering a balance of intelligence, speed, and cost-effectiveness. The continuous improvements across these models, coupled with enhancements to the broader Claude ecosystem, position Anthropic as a formidable player in the competitive AI landscape. Understanding the nuances of each model and the evolving feature set is crucial for maximizing their utility in various applications, from complex research tasks to high-volume operational workflows.

Claude Opus 4.6: The Pinnacle of Frontier Intelligence

Launched on February 5, 2026, Claude Opus 4.6 stands as Anthropic’s most advanced and capable model to date. It represents a significant leap forward in AI reasoning, contextual understanding, and task execution. Opus 4.6 is engineered for highly complex, multi-step reasoning tasks that demand the utmost in cognitive ability. Its performance metrics are truly industry-leading, making it the go-to choice for applications requiring robust intelligence and reliability.

A standout feature of Opus 4.6 is its monumental 1 million token context window. This allows the model to process and retain an extraordinary amount of information within a single interaction, equivalent to approximately 2,500 pages of text. Such a vast context window enables Opus 4.6 to handle extensive documents, entire codebases, or protracted conversations without losing coherence or missing critical details. This capacity is particularly beneficial for tasks like comprehensive legal analysis, in-depth scientific literature review, or developing complex software architectures.
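
The page equivalence above implies an average of about 400 tokens per page. The sketch below uses that figure for a rough fit check; the function name and the `reserved_output_tokens` allowance are hypothetical, and real token counts vary with language and formatting.

```python
# Figures quoted in the article: a 1,000,000-token window is described as
# roughly 2,500 pages of text.
CONTEXT_WINDOW_TOKENS = 1_000_000
APPROX_PAGES = 2_500

# Implied average density; real documents vary with language and formatting.
TOKENS_PER_PAGE = CONTEXT_WINDOW_TOKENS / APPROX_PAGES  # 400.0


def fits_in_context(page_count: int, reserved_output_tokens: int = 0) -> bool:
    """Rough check: does a document of `page_count` pages fit in one request?

    `reserved_output_tokens` is a hypothetical allowance for the response,
    assuming input and output share the same window.
    """
    estimated_input = page_count * TOKENS_PER_PAGE
    return estimated_input + reserved_output_tokens <= CONTEXT_WINDOW_TOKENS


print(TOKENS_PER_PAGE)                  # 400.0
print(fits_in_context(2_000))           # True
print(fits_in_context(2_400, 128_000))  # False
```

An estimate like this is only a first pass; counting tokens with the provider's own tooling is the reliable way to confirm that a specific document fits.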

On long-context recall, Claude Opus 4.6 achieved 78.3% on the Multi-Round Coreference Resolution (MRCR) v2 benchmark. This score is the highest among all frontier models evaluated, indicating a strong ability to maintain context across multiple turns and accurately recall information from very large inputs. The benchmark is a useful indicator of a model’s capacity for sustained work over long inputs, where tracking the entire conversation history and the relationships between pieces of information is paramount.

Beyond its cognitive prowess, Opus 4.6 introduces an extended task completion window of 14.5 hours. This allows the model to execute long-running, asynchronous tasks, such as generating detailed reports based on continually updated data streams or managing multi-stage project plans. This feature significantly expands the scope of automation possible with AI, moving beyond instantaneous responses to sustained, intelligent work over extended periods. The pricing for Opus 4.6 reflects its premium capabilities, set at $5 per million tokens for input and $25 per million tokens for output. This positions it as a high-value tool for critical applications where accuracy, depth of understanding, and complex problem-solving are non-negotiable.

Opus 4.6 also boasts a maximum output length of 128,000 tokens, enabling it to generate extensive, coherent responses, summaries, or creative content. This is particularly useful for generating long-form articles, comprehensive code documentation, or detailed strategic analyses. A novel feature in Opus 4.6 is its adaptive thinking with a “max” effort level. This allows users to instruct the model to dedicate maximum computational resources and time to a given problem, leading to more thorough and nuanced solutions, even for the most challenging queries. This “max” effort setting is a testament to Anthropic’s focus on pushing the boundaries of AI problem-solving capabilities.

Claude Sonnet 4.6: The Workhorse with Enhanced Intelligence

Following closely on the heels of Opus, Claude Sonnet 4.6 was launched on February 17, 2026, quickly establishing itself as the versatile workhorse of the Claude family. Sonnet 4.6 strikes an excellent balance between high performance, speed, and cost-effectiveness, making it suitable for a wide array of business applications. Its broad applicability and improved capabilities have led to its rapid adoption across various industries.

As of March 13, 2026, Sonnet 4.6 also benefits from the general availability of the 1 million token context window, aligning it with Opus 4.6 in its capacity for extensive information processing. This significant upgrade allows Sonnet to handle larger documents and more complex conversational threads than its predecessors, greatly expanding its utility for tasks like advanced data extraction, comprehensive content summarization, and detailed customer support interactions. This enhancement, offered without a surcharge, democratizes access to large-context processing capabilities for a broader user base.

User feedback and internal evaluations have consistently shown a strong preference for Sonnet 4.6. It is preferred over Sonnet 4.5 in 70% of comparison cases, indicating substantial improvements in its output quality, relevance, and overall utility. More remarkably, Sonnet 4.6 is preferred over the previous generation’s flagship, Opus 4.5, in 59% of cases. This highlights the significant advancements made in Sonnet 4.6, demonstrating that it can outperform even older premium models for a substantial portion of tasks, offering a compelling value proposition.

Speed is another critical advantage of Sonnet 4.6. It is 30-50% faster than its predecessor, Sonnet 4.5. This increased processing speed is crucial for applications requiring quick turnaround times, such as real-time content generation, rapid data analysis, or interactive user experiences. The combination of enhanced intelligence and speed makes Sonnet 4.6 an ideal choice for operational workflows where efficiency is key. Its pricing is set at $3 per million tokens for input and $15 per million tokens for output, making it a highly competitive option for businesses seeking advanced AI capabilities without the premium cost of Opus.

Sonnet 4.6 has been designated as the default model for both Anthropic’s Free and Pro plans. This strategic decision means that a vast number of users, including those exploring AI for the first time, will experience the high-quality outputs and robust performance of Sonnet 4.6. This accessibility is crucial for driving widespread adoption and familiarizing users with the capabilities of Anthropic’s advanced models. For users interested in optimizing their interactions with Sonnet 4.6, exploring resources on ChatGPT prompts for coding can provide valuable insights into structuring effective prompts for various tasks, including code generation and debugging assistance.

Claude Haiku 4.5: Speed, Efficiency, and Security

Claude Haiku 4.5 represents the third tier in Anthropic’s model hierarchy, specifically designed for high-volume, low-latency applications where speed and cost-efficiency are paramount. While it may not possess the intricate reasoning capabilities of Opus or the broad versatility of Sonnet, Haiku 4.5 excels in its designated niche, providing rapid, reliable performance for essential tasks.

Haiku 4.5 is the ideal choice for API pipelines that require processing a massive number of requests quickly and economically. Use cases include basic content moderation, simple data classification, summarizing short texts, or powering quick chatbot responses. Its design prioritizes throughput and minimal computational overhead, making it incredibly cost-effective for large-scale operations. The pricing for Haiku 4.5 is the most economical of the family, at $0.25 per million tokens for input and $1.25 per million tokens for output, making it accessible for even the most budget-conscious deployments.

A notable feature of Haiku 4.5 is its robust zero prompt injection protection. This advanced security measure helps safeguard against malicious inputs designed to manipulate the model’s behavior or extract sensitive information. In an era where AI security is increasingly critical, Haiku’s built-in defenses provide an essential layer of protection for high-volume API integrations, particularly in scenarios where external, untrusted inputs are common. This makes it a preferred choice for applications handling sensitive customer interactions or processing user-generated content.

Unified Context Window and Enhanced Multimodality

A significant ecosystem-wide upgrade rolled out on March 13, 2026, was the general availability of the 1 million token context window across all eligible models (Opus 4.6 and Sonnet 4.6) without any surcharge. This move democratizes access to massive context processing, allowing developers and users to leverage extensive documents and complex data sets without incurring additional costs. This strategic decision by Anthropic signals a commitment to making advanced AI capabilities more accessible and affordable, fostering innovation across a broader range of applications.

Accompanying this context window expansion is a substantial increase in multimodality capabilities. Users can now submit requests containing up to 600 images or PDF pages per request, a six-fold increase from previous limits. This enhancement significantly boosts the models’ ability to process and understand visual information alongside text. This is transformative for industries like healthcare (analyzing medical images and reports), manufacturing (inspecting product designs and schematics), and education (interpreting complex diagrams and textbook pages). The ability to seamlessly integrate visual data with textual context unlocks new possibilities for AI-driven analysis and insight generation.
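
For documents longer than the per-request limit, the pages have to be split across several requests. The following is a minimal sketch of that batching step, assuming the 600-item limit quoted above; `batch_items` and the sample page list are hypothetical, and no API call is made.

```python
from typing import Iterator, Sequence, TypeVar

T = TypeVar("T")

# Per-request limit quoted in the article (images or PDF pages).
MAX_ITEMS_PER_REQUEST = 600


def batch_items(
    items: Sequence[T], limit: int = MAX_ITEMS_PER_REQUEST
) -> Iterator[Sequence[T]]:
    """Yield consecutive slices of `items`, each holding at most `limit` entries."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    for start in range(0, len(items), limit):
        yield items[start:start + limit]


# Hypothetical 1,450-page scan, represented here by page numbers only.
pages = list(range(1, 1451))
batches = list(batch_items(pages))
print([len(b) for b in batches])  # [600, 600, 250]
```

Splitting in page order keeps each batch contiguous, which matters when later pages refer back to earlier ones within the same request.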

The Expanding Claude Product Ecosystem

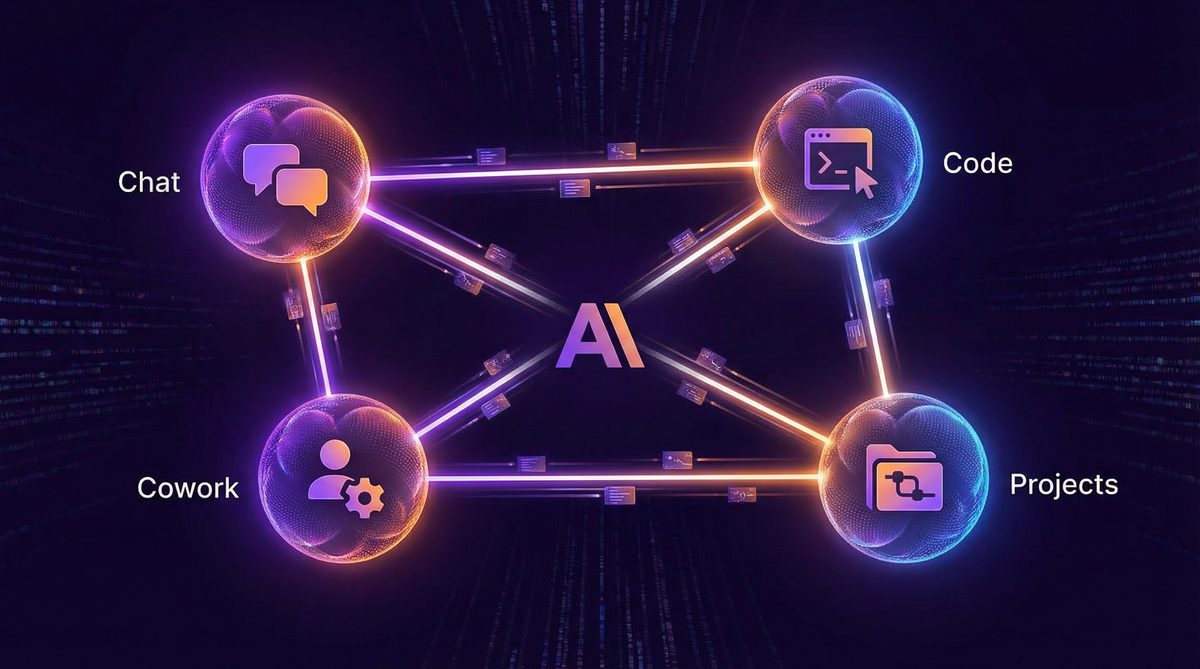

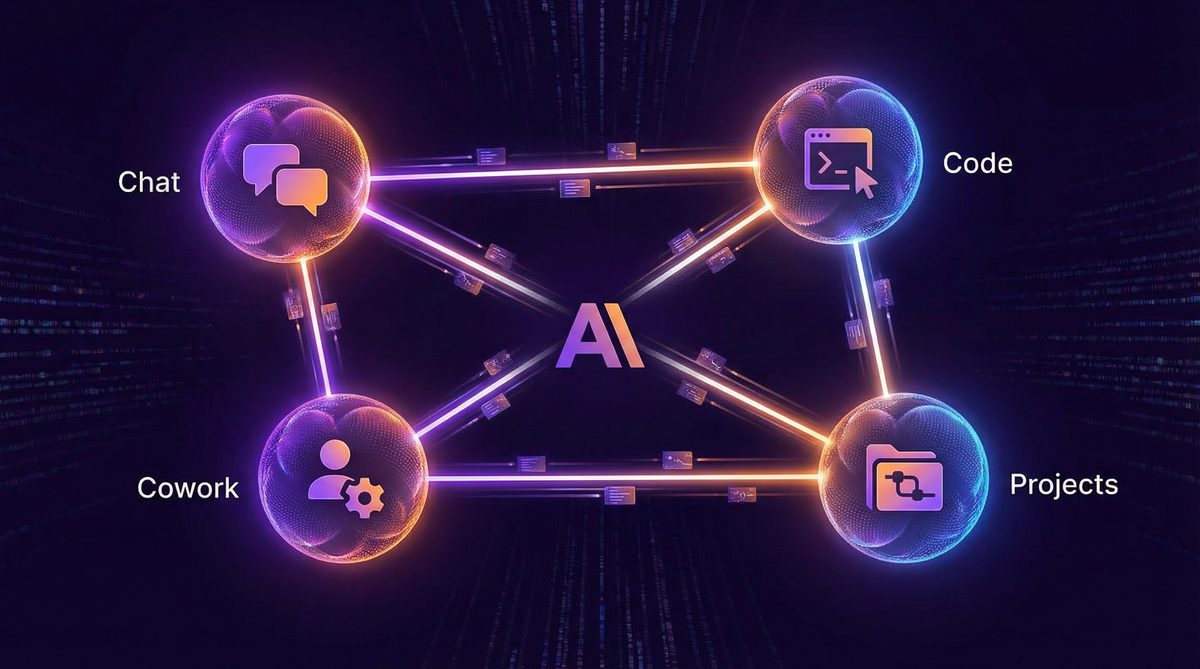

Anthropic’s vision extends beyond just powerful models to a comprehensive product ecosystem designed to integrate AI seamlessly into various workflows. This ecosystem currently includes several distinct interfaces and tools, each tailored for specific user needs:

- Claude Chat: The primary conversational interface, accessible via the web, for direct interaction with Claude models. It’s designed for general-purpose querying, content generation, summarization, and brainstorming.

- Claude Cowork (Desktop Agent): A sophisticated desktop agent designed to act as an AI assistant across a user’s operating system. Cowork can interact with various applications, manage files, automate routine tasks, and provide context-aware assistance directly on the user’s computer. This represents a significant step towards more integrated and proactive AI assistance.

- Claude Code (Terminal): A specialized terminal interface for developers, providing an AI-powered coding assistant. Claude Code can generate code snippets, debug programs, refactor code, explain complex functions, and assist with version control, all within the developer’s command-line environment. This tool aims to enhance developer productivity and streamline software development workflows.

- Claude Projects: A collaborative workspace designed for managing complex, multi-stage projects with AI assistance. Projects can help with planning, task breakdown, resource allocation, progress tracking, and generating project documentation, acting as an intelligent project manager.

Claude Memory: Personalized and Persistent AI

Claude Memory rolled out to all users, including those on the free tier, on March 2, 2026. The feature allows Claude models to retain long-term memory of past conversations, preferences, and learned information. Instead of starting each interaction from scratch, Claude can now build a personalized understanding of each user over time. This leads to more coherent, context-aware, and helpful interactions. For example, if a user frequently asks for summaries in a specific format or works on a particular type of coding project, Claude Memory allows the AI to recall these preferences and adapt its responses accordingly.

Claude Memory significantly enhances the user experience by reducing repetitive prompts and enabling more natural, ongoing dialogues. It allows the AI to learn and evolve with the user, becoming a more effective and personalized assistant. Users have full control over their memory settings, with options to clear specific memories or manage what information Claude retains. This ensures user privacy and control while still leveraging the benefits of persistent learning. For those looking to get the most out of personalized AI interactions, learning how to use Claude AI effectively, especially with its memory features, can greatly enhance productivity and the quality of AI-generated content.

When to Use Each Claude Model: A Decision Framework

Choosing the right Claude model is crucial for optimizing performance, cost, and efficiency. Here’s a decision framework to guide your selection:

- Use Claude Opus 4.6 when:

    - You require the absolute highest level of intelligence, reasoning, and problem-solving capabilities.
    - Tasks involve complex, multi-step reasoning, logical deduction, or deep analytical work.
    - You are working with extremely long documents (e.g., entire books, extensive legal briefs, scientific papers) that demand a 1 million token context window for comprehensive understanding.
    - Accuracy and nuance are paramount, and you cannot compromise on output quality.
    - The task requires sustained, long-duration processing (up to 14.5 hours) or adaptive “max” effort for optimal results.
    - Examples: Advanced research, strategic planning, complex code generation, legal analysis, medical diagnosis support, creative writing requiring deep character development.

- Use Claude Sonnet 4.6 when:

    - You need a strong balance of intelligence, speed, and cost-effectiveness for a wide range of business applications.
    - Tasks involve detailed summarization, content generation, data extraction, customer support, or internal knowledge management.
    - You require a large context window (1 million tokens) but don’t necessarily need the absolute frontier reasoning of Opus.
    - Speed is important, as Sonnet 4.6 is 30-50% faster than its predecessor.
    - It’s your default choice for general-purpose AI assistance, especially on Free and Pro plans.
    - Examples: Marketing content creation, email drafting, report generation, advanced chatbot interactions, medium-complexity coding tasks, data analysis for business intelligence.

- Use Claude Haiku 4.5 when:

    - You need a fast, cheap, and efficient model for high-volume API pipelines.
    - Tasks are straightforward, requiring quick responses and minimal complex reasoning.
    - Cost-efficiency is the primary concern for large-scale operations.
    - Security against prompt injection is a critical requirement for handling external or untrusted inputs.
    - Examples: Basic content moderation, simple data classification, quick summarization of short texts, powering high-throughput chatbots for FAQs, internal search indexing.
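
The framework above can be approximated as a small routing function. This is an illustrative sketch only: the `Task` fields, the ordering of the checks, and the use of display names rather than API model identifiers are all assumptions, and the 200K-token Haiku limit is the figure listed in this article's comparison table.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a workload; field names are illustrative."""
    needs_frontier_reasoning: bool = False  # complex multi-step or deep analytical work
    long_running: bool = False              # sustained, hours-long processing
    input_tokens: int = 0                   # estimated size of the prompt
    high_volume: bool = False               # many requests through an API pipeline
    simple: bool = False                    # straightforward, minimal reasoning


HAIKU_CONTEXT_TOKENS = 200_000  # context window listed for Haiku 4.5 in the article


def choose_model(task: Task) -> str:
    """Map the article's selection criteria onto a model display name."""
    if task.needs_frontier_reasoning or task.long_running:
        return "Claude Opus 4.6"
    if task.high_volume and task.simple and task.input_tokens <= HAIKU_CONTEXT_TOKENS:
        return "Claude Haiku 4.5"
    # General-purpose default, including large-context work without frontier reasoning.
    return "Claude Sonnet 4.6"


print(choose_model(Task(needs_frontier_reasoning=True)))                      # Claude Opus 4.6
print(choose_model(Task(high_volume=True, simple=True, input_tokens=2_000)))  # Claude Haiku 4.5
print(choose_model(Task(input_tokens=800_000)))                               # Claude Sonnet 4.6
```

Checking the Opus criteria first reflects the framework's emphasis on not compromising quality for demanding tasks; a cost-sensitive team might reasonably order the checks differently.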

Pricing Comparison Table

Understanding the cost structure of each model is essential for effective resource allocation. The following table provides a clear comparison of input and output pricing per million tokens for each Claude model:

| Model | Input Price (per 1M tokens) | Output Price (per 1M tokens) | Max Context Window | Max Output Length | Key Features |
|---|---|---|---|---|---|
| Claude Opus 4.6 | $5.00 | $25.00 | 1M tokens | 128K tokens | Highest reasoning (78.3% MRCR v2), 14.5-hour task window, “max” effort level |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1M tokens | 128K tokens | Versatile, 30-50% faster than 4.5, default for Free/Pro plans, preferred over Opus 4.5 in 59% of cases |
| Claude Haiku 4.5 | $0.25 | $1.25 | 200K tokens | 4K tokens | Fastest, most cost-effective, high-volume API, zero prompt injection protection |
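
To turn the per-million-token prices into a per-request figure, multiply each token count by its price and divide by one million. The sketch below does this using the prices exactly as listed in the table above; the `request_cost` helper and the sample workload are hypothetical.

```python
# USD per million tokens, as listed in the article's table: (input, output).
PRICES_PER_MILLION = {
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Haiku 4.5": (0.25, 1.25),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of a single request."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 10,000 input tokens and 1,000 output tokens per request.
for model in PRICES_PER_MILLION:
    print(f"{model}: ${request_cost(model, 10_000, 1_000):.5f}")
# Claude Opus 4.6: $0.07500
# Claude Sonnet 4.6: $0.04500
# Claude Haiku 4.5: $0.00375
```

At these listed prices the same request costs 20 times more on Opus 4.6 than on Haiku 4.5, which is why model choice dominates the budget for high-volume pipelines.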

Anthropic’s Business Momentum in 2026

Anthropic’s aggressive product development and strategic market positioning have translated into significant business success in 2026. The company has reportedly achieved an impressive Annual Recurring Revenue (ARR) of $14 billion, underscoring the strong demand for its advanced AI models and services. This financial performance is a testament to the value proposition that Claude models offer to enterprises and individual users alike. The market has responded positively, with Anthropic’s valuation soaring to an estimated $380 billion, placing it among the most valuable AI companies globally.

A key driver of this success is Anthropic’s strong enterprise focus. The company boasts over 500 customers generating more than $1 million in annual revenue each. This indicates deep integration of Claude models into critical business operations across a diverse range of industries, from finance and healthcare to technology and media. These large enterprise contracts are not merely transactional but represent strategic partnerships where Claude AI is leveraged to drive innovation, improve efficiency, and create competitive advantages. This robust customer base, coupled with continuous technological advancements, positions Anthropic for sustained growth and leadership in the evolving AI landscape. For businesses exploring how AI can transform their operations, staying up to date on the best AI tools of 2026 can provide a competitive edge.

About the Author

Markos Symeonides is the founder of ChatGPT AI Hub, where he covers the latest developments in AI tools, ChatGPT, Claude, and OpenAI Codex. Follow ChatGPT AI Hub for daily AI news, tutorials, and guides.