For several weeks, a wave of panic swept through the community of developers using Anthropic’s tools. Consistent reports on GitHub, X and Reddit described a notable degradation in performance, a phenomenon dubbed “AI shrinkflation”.

The model seemed less capable of complex reasoning, more prone to repetition, and consumed usage credits at an abnormal rate. After an internal investigation, the company published a report detailing the causes and confirming that the problem came not from the underlying model but from the software “layer” surrounding it.

All fixes have been deployed and subscriber usage limits have been reset.

What are the three technical errors that degraded Claude?

The perceived degradation of Claude Code stems from three separate technical incidents which, combined, created the impression of a widespread regression.

The direct answer is therefore simple: it was an unfortunate domino effect. The first issue, introduced on March 4, was a change in the default reasoning effort from “high” to “medium” to reduce latency.

The intention was good, but the result was a model perceived as significantly less intelligent.

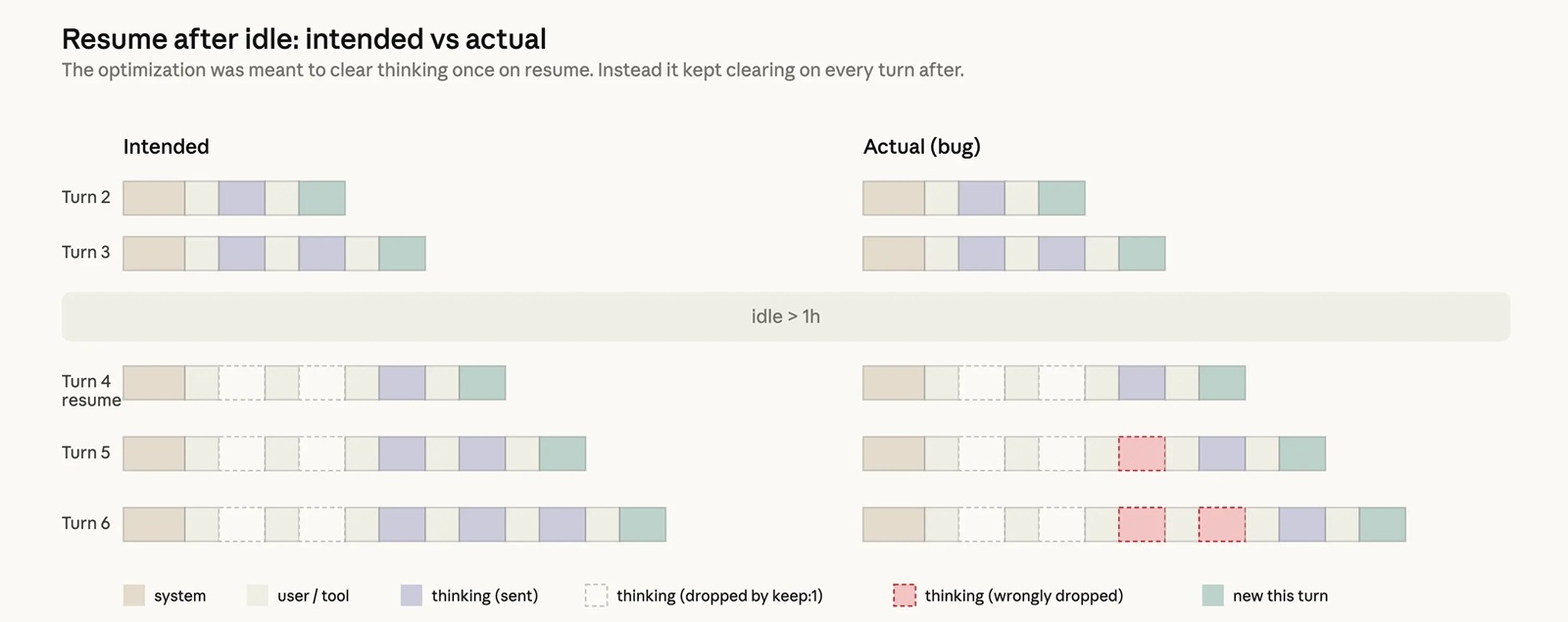

The second culprit was a critical bug in a cache optimization deployed on March 26. Instead of purging the reasoning history after an hour of inactivity, a code error cleared it with each new interaction.

Consequence: Claude lost its short-term memory, became repetitive and forgot the context. Finally, on April 16, a new system instruction aimed at reducing the model’s verbosity had a perverse effect, causing a 3% drop in quality on coding tasks.
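The cache bug described above can be pictured with a minimal Python sketch. It is purely illustrative and is not Anthropic’s code: the `Session` class, the two handler functions and the `replay` helper are hypothetical names, and the one-hour threshold is the only detail taken from the report as summarized here.

```python
from dataclasses import dataclass, field

IDLE_TTL_SECONDS = 3600  # one hour of inactivity, as described in the article


@dataclass
class Session:
    """Hypothetical session holding the model's reasoning history."""
    reasoning_history: list = field(default_factory=list)
    last_active: float = 0.0


def on_turn_intended(session, now, thought):
    """Intended behaviour: purge only when the session was idle for over an hour."""
    if now - session.last_active > IDLE_TTL_SECONDS:
        session.reasoning_history.clear()
    session.reasoning_history.append(thought)
    session.last_active = now


def on_turn_buggy(session, now, thought):
    """Buggy behaviour: the idle check is missing, so history is wiped on every turn."""
    session.reasoning_history.clear()
    session.reasoning_history.append(thought)
    session.last_active = now


def replay(handler, turns):
    """Feed (timestamp, thought) pairs to a handler and return the surviving history."""
    session = Session()
    for now, thought in turns:
        handler(session, now, thought)
    return session.reasoning_history


# Three turns, one minute apart: an active session that should keep its context.
active_turns = [(0, "plan"), (60, "edit file"), (120, "run tests")]
print(replay(on_turn_intended, active_turns))  # all three thoughts survive
print(replay(on_turn_buggy, active_turns))     # only the last thought survives
```

In the buggy variant, each turn starts from an empty history, which matches the symptoms users reported: repetition, forgotten context, and credits spent re-explaining instructions.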

Why did users believe in “AI shrinkflation”?

Users called it “AI shrinkflation” because the combined symptoms perfectly mimicked an intentional reduction in quality to save resources.

The reduction in reasoning effort gave the impression that Claude was choosing “lazier” solutions. The cache bug manifested itself as a blatant loss of context, forcing users to repeat their instructions and wasting their usage credits. These quality problems fueled distrust.

This cascade of glitches was corroborated by external analyses, such as that of Stella Laurenzo (AMD), who documented a drop in reasoning depth, and by third-party benchmarks in which Claude’s ranking plummeted.

The cumulative effect of these errors, affecting different user groups at different times, made the initial diagnosis difficult and left room for suspicion that the model’s performance was being deliberately reduced to manage explosive demand.

How does Anthropic plan to restore trust?

To regain the trust of its community, Anthropic deployed a multi-point action plan. The first measure, immediate and symbolic, was to reset the usage limits of all subscribers as compensation for the inconvenience and the excessive consumption of tokens.

But the most important part lies in the overhaul of the quality control process to prevent such incidents from happening again. Concretely, the company will strengthen its “dogfooding” (using its own products internally), requiring more employees to use the exact public versions of Claude.

In addition, each modification of the system prompt will now have to pass a much broader battery of evaluation tests, specific to each model. Finally, Anthropic has committed to greater transparency via a new X account, @ClaudeDevs, to communicate openly about product decisions.
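To make the idea of a per-model evaluation gate concrete, here is a minimal Python sketch. It is an assumption-laden illustration, not Anthropic’s actual process: the function name `prompt_change_passes`, the model labels, the scores and the tolerance value are all hypothetical.

```python
MAX_DROP = 0.01  # hypothetical tolerance: allow at most a 1-point drop per model


def prompt_change_passes(baseline, candidate, max_drop=MAX_DROP):
    """Compare per-model eval scores before and after a system prompt change.

    Returns (ok, failures), where failures lists every model whose score is
    missing from the candidate run or dropped by more than max_drop.
    """
    failures = []
    for model, base_score in baseline.items():
        new_score = candidate.get(model)
        if new_score is None or base_score - new_score > max_drop:
            failures.append(model)
    return (not failures, failures)


# Hypothetical scores on a coding benchmark (fraction of tasks solved).
baseline = {"model-a": 0.80, "model-b": 0.75}
candidate = {"model-a": 0.80, "model-b": 0.72}  # a 3-point drop on model-b

ok, failures = prompt_change_passes(baseline, candidate)
print(ok, failures)
```

Checking each model separately matters here: a prompt change that is harmless on one model can still cause a drop of the size described above on another, and an averaged score could hide it.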

Does this fiasco reveal a deeper crisis in AI?

This incident highlights a reality often hidden by marketing discourse: the performance of a cutting-edge artificial intelligence does not depend only on its base model.

It rests on a complex scaffolding of tools, caches and system instructions. When this scaffolding seizes up, even the most brilliant of models can appear to fail. This is the hidden part of the iceberg, revealing an inherent fragility of AI systems in production.

More broadly, this affair is symptomatic of the immense pressure exerted by the shortage of computing power (the “compute crunch”) across the entire industry. Anthropic’s attempt to reduce latency was not incidental; it answers a vital need to optimize extremely expensive and scarce GPU resources.

This race for optimization at all costs, shared by OpenAI and others, creates a permanent risk of sacrificing quality on the altar of efficiency. Such trade-offs must be handled with caution, or they risk a violent backlash.