Authors:

(1) Yuwei Guo, The Chinese University of Hong Kong;

(2) Ceyuan Yang, Shanghai Artificial Intelligence Laboratory (corresponding author);

(3) Anyi Rao, Stanford University;

(4) Zhengyang Liang, Shanghai Artificial Intelligence Laboratory;

(5) Yaohui Wang, Shanghai Artificial Intelligence Laboratory;

(6) Yu Qiao, Shanghai Artificial Intelligence Laboratory;

(7) Maneesh Agrawala, Stanford University;

(8) Dahua Lin, Shanghai Artificial Intelligence Laboratory;

(9) Bo Dai, The Chinese University of Hong Kong.

Table of Links

Abstract and 1 Introduction

2 Related Work

3 Preliminary

4 AnimateDiff

4.1 Alleviate Negative Effects from Training Data with Domain Adapter

4.2 Learn Motion Priors with Motion Module

4.3 Adapt to New Motion Patterns with MotionLoRA

4.4 AnimateDiff in Practice

5 Experiments and 5.1 Qualitative Results

5.2 Quantitative Comparison

5.3 Ablative Study

5.4 Controllable Generation

6 Conclusion

7 Ethics Statement

8 Reproducibility Statement, Acknowledgement and References

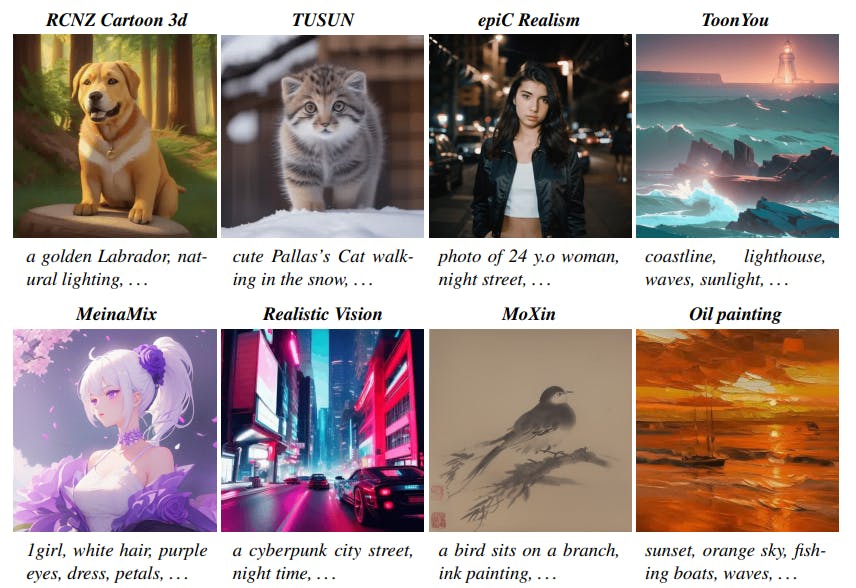

4.4 ANIMATEDIFF IN PRACTICE

This section describes training and inference; the detailed configurations are provided in the supplementary materials.

Note that when training the domain adapter, the motion module, and MotionLoRA, all parameters outside the component being trained remain frozen.
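The freezing rule above can be illustrated with a minimal, framework-free sketch. It assumes a `requires_grad`-style flag on each parameter (a convention borrowed from common deep learning frameworks); the component names, the `Param` class, and the `set_trainable` helper are hypothetical and are not taken from the AnimateDiff codebase.

```python
from dataclasses import dataclass


@dataclass
class Param:
    """Stand-in for a framework parameter; only tracks trainability."""
    name: str
    requires_grad: bool = True


# Hypothetical component names, chosen for illustration only.
COMPONENTS = ("base_t2i", "domain_adapter", "motion_module", "motion_lora")


def build_params():
    """Create two dummy parameters per component."""
    return {c: [Param(f"{c}.weight"), Param(f"{c}.bias")] for c in COMPONENTS}


def set_trainable(params, trainable):
    """Unfreeze one component and freeze everything else.

    Returns the names of the parameters left trainable.
    """
    if trainable not in params:
        raise KeyError(f"unknown component: {trainable}")
    for component, plist in params.items():
        for p in plist:
            p.requires_grad = (component == trainable)
    return [p.name for plist in params.values() for p in plist if p.requires_grad]


params = build_params()
# Stage that trains only the motion module: every other parameter is frozen.
print(set_trainable(params, "motion_module"))
```

In a real training loop, only the parameters returned by such a helper would be handed to the optimizer, so each stage updates a single component.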

Inference. At inference time (Fig. 2), the personalized T2I model is first inflated as described in Section 4.2, then injected with the motion module for general animation generation and, optionally, with MotionLoRA for animation with personalized motion. The domain adapter need not simply be dropped at inference: in practice, it can also be injected into the personalized T2I model, with its contribution adjusted through the scalar α in Eq. (4). An ablation study on the value of α is presented in the experiments. Finally, the animation frames are obtained by running the reverse diffusion process and decoding the latent codes.
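To make the role of α concrete, the sketch below assumes that Eq. (4), which this section does not restate, takes the usual LoRA-style form Q = W^Q z + α · A Bᵀ z. Under that assumption, α = 0 recovers the model without the domain adapter and α = 1 applies the adapter at full strength. The function names and the toy matrices are hypothetical and purely illustrative.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def project_query(w_q, a, b, z, alpha=1.0):
    """Compute Q = W_q z + alpha * A (B^T z) under the assumed LoRA form.

    w_q: d_out x d_in base projection (frozen)
    a:   d_out x r low-rank factor
    b:   d_in  x r low-rank factor
    z:   input feature of length d_in
    """
    base = matvec(w_q, z)
    b_transposed = [list(col) for col in zip(*b)]  # r x d_in
    low_rank = matvec(a, matvec(b_transposed, z))
    return [x + alpha * y for x, y in zip(base, low_rank)]


# Toy example with d_in = d_out = 2 and rank r = 1.
w_q = [[1.0, 0.0], [0.0, 1.0]]
a = [[1.0], [2.0]]
b = [[1.0], [1.0]]
z = [3.0, 4.0]

for alpha in (0.0, 0.5, 1.0):
    print(alpha, project_query(w_q, a, b, z, alpha))
```

Because the adapter enters only as an additive term, changing α at inference requires no retraining; it simply rescales how much of the adapter's output is mixed into the projection.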