Anthropic PBC is taking the leash off its popular artificial intelligence coding tool Claude Code, introducing a new feature called “auto mode” that lets it decide for itself which permissions it’s allowed to use.

The launch accelerates a trend that has seen AI tools become increasingly autonomous, performing more actions without waiting for approval from humans, in order to increase productivity. But there’s a delicate balancing act between speed and control.

Like many AI coding tools, Claude Code was designed with guardrails that limit what it can do on its own. It must ask the user for approval before using any tool it hasn’t already been permitted to use. But this can dramatically slow it down, especially if the developer steps away from the keyboard.

To get around this, most developers try to guess beforehand which tools Claude will need to complete an assigned task, and grant permission before they walk away. But doing so is risky, because some tools could allow Claude to do things the developer definitely doesn’t want, resulting in messy code that takes weeks to clean up. On the other hand, a user who grants too few permissions might head off to lunch and come back to find that Claude has done nothing because it’s waiting for approval.

Auto mode, now in research preview, is Anthropic’s effort to get around this challenge. Not surprisingly, auto mode uses AI safeguards that review each action Claude wants to take before it runs, to check that it won’t end up doing something the user hasn’t requested. The safeguards also look for signs of prompt injection, meaning malicious instructions hidden in the content Claude is processing that might cause it to take unintended actions.

If the guardrails deem an action to be safe, Claude Code will go ahead and do it, but if they decide it’s unsafe, that action will be blocked.
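The check-then-run flow described above can be illustrated with a minimal Python sketch. Anthropic has not disclosed how its safeguards work, so everything here is hypothetical: the `Action` type, the `BLOCKED_MARKERS` list, and the `classify` and `run_with_gate` functions are illustrative names, and a simple keyword check stands in for what is presumably a far more capable AI classifier.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    tool: str      # hypothetical tool name, e.g. "bash" or "file_write"
    argument: str  # the command text or the target path


# Assumption: a toy list of suspicious substrings. The real safeguards
# are undisclosed and are not known to work this way.
BLOCKED_MARKERS = ("rm -rf", "drop table", "ignore previous instructions")


def classify(action: Action) -> bool:
    """Return True if the action looks safe under the toy rules."""
    text = action.argument.lower()
    return not any(marker in text for marker in BLOCKED_MARKERS)


def run_with_gate(
    actions: List[Action], execute: Callable[[Action], None]
) -> List[Action]:
    """Run each action only if it passes the check; return the blocked ones."""
    blocked = []
    for action in actions:
        if classify(action):
            execute(action)         # deemed safe: go ahead
        else:
            blocked.append(action)  # deemed unsafe: never executed
    return blocked


if __name__ == "__main__":
    executed: List[Action] = []
    plan = [Action("file_write", "src/app.py"), Action("bash", "rm -rf /")]
    blocked = run_with_gate(plan, executed.append)
    print("ran:", [a.argument for a in executed])
    print("blocked:", [a.argument for a in blocked])
```

The point of the sketch is the ordering: the check happens before execution, so a blocked action never runs at all, rather than being undone afterward.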

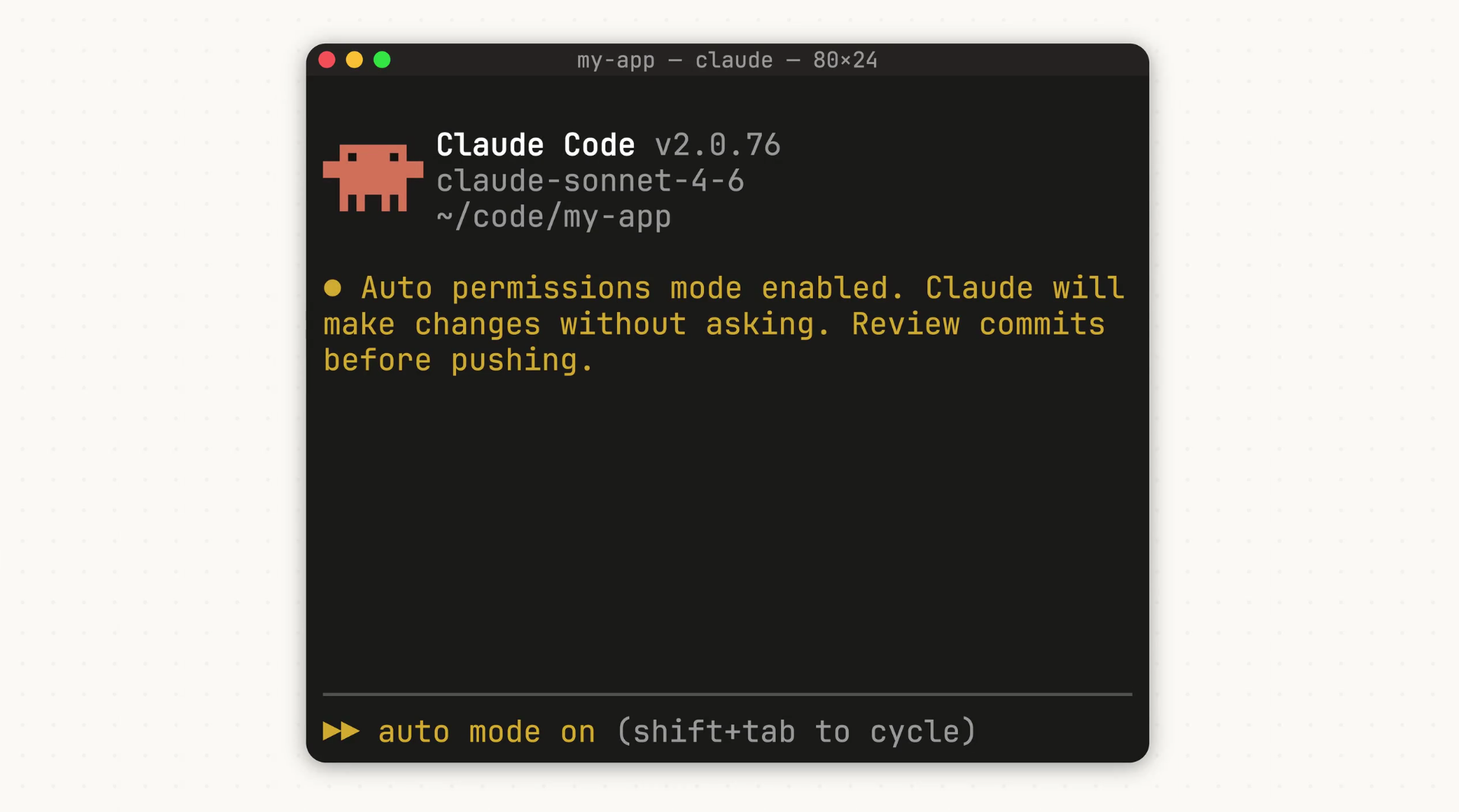

New in Claude Code: auto mode.

Instead of approving every file write and bash command, or skipping permissions entirely, auto mode lets Claude make permission decisions on your behalf.

Safeguards check each action before it runs.

— Claude (@claudeai) March 24, 2026

The new feature is really an extension of the existing “--dangerously-skip-permissions” flag, which stops Claude Code from asking for permission so it can just get on with things. Auto mode goes further by adding a safety layer on top.

Strangely, Anthropic didn’t explain how these safety guardrails work, so there’s no way of knowing how they determine which actions are safe. The lack of transparency is odd, because that’s something developers will likely want to understand before deciding whether to adopt the feature. What the company did say is that for now, while auto mode is still in preview, it’s probably not going to be perfect.

“The classifier may still allow some risky actions: for example, if user intent is ambiguous, or if Claude doesn’t have enough context about your environment to know an action might create additional risk,” the company wrote. “It may also occasionally block benign actions. We’ll continue to improve the experience over time.”

The feature is another example of how Anthropic appears to be racing ahead of its rival OpenAI Group PBC in the AI coding market segment. In the last few weeks, the company has introduced a flurry of new features that aim to enhance its “vibe coding” capabilities. Last week it debuted Claude Code Review, which is a tool that automatically reviews code generated by Claude to check for bugs, before it’s deployed. It also launched a new feature called Dispatch for Cowork, which lets users send tasks to Claude from a mobile device, so it can get to work while they’re away from their computer.

Anthropic said it will be rolling out Claude Code’s auto mode to Enterprise and API users in the next few days. It will only work with the Claude Sonnet 4.6 and Claude Opus 4.6 models for now. The company recommends that developers use auto mode only in sandboxed environments that are isolated from production systems, to minimize the damage that might occur if the newly unbounded Claude goes haywire.

Image: Anthropic
