Amazon Web Services Inc. will make Cerebras Systems Inc.’s WSE-3 artificial intelligence chip available to its customers.

The companies announced the initiative today. It’s part of a multiyear partnership that will also see AWS and Cerebras develop a “disaggregated architecture” for AI inference workloads. The technology is expected to increase the speed at which AI models generate output by a factor of five.

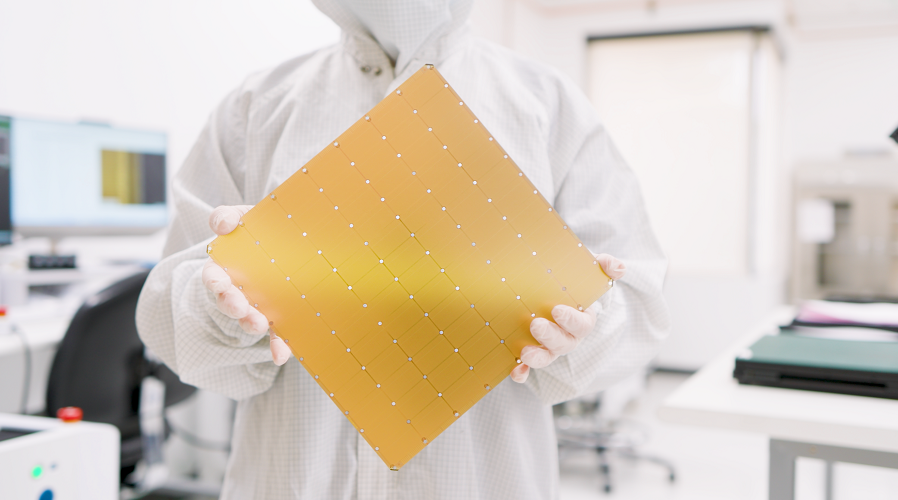

Cerebras’ WSE-3 chip includes 900,000 cores and 44 gigabytes of on-chip SRAM. The company ships the processor as part of a water-cooled appliance called the CS-3. The system, which is about the size of a mini-fridge, combines one WSE-3 with external memory, network equipment and other auxiliary components.

The newly announced partnership will see AWS deploy CS-3 appliances in its data centers. The systems will be made available to customers via the cloud giant’s Amazon Bedrock service, which provides access to internally developed and third-party foundation models. The CS-3 enables neural networks to generate prompt responses at a rate of several thousand tokens per second.

The disaggregated architecture that AWS and Cerebras are developing will combine the WSE-3 with AWS Trainium, the cloud giant’s line of custom AI chips. The goal of the integration is to speed up customers’ inference workloads.

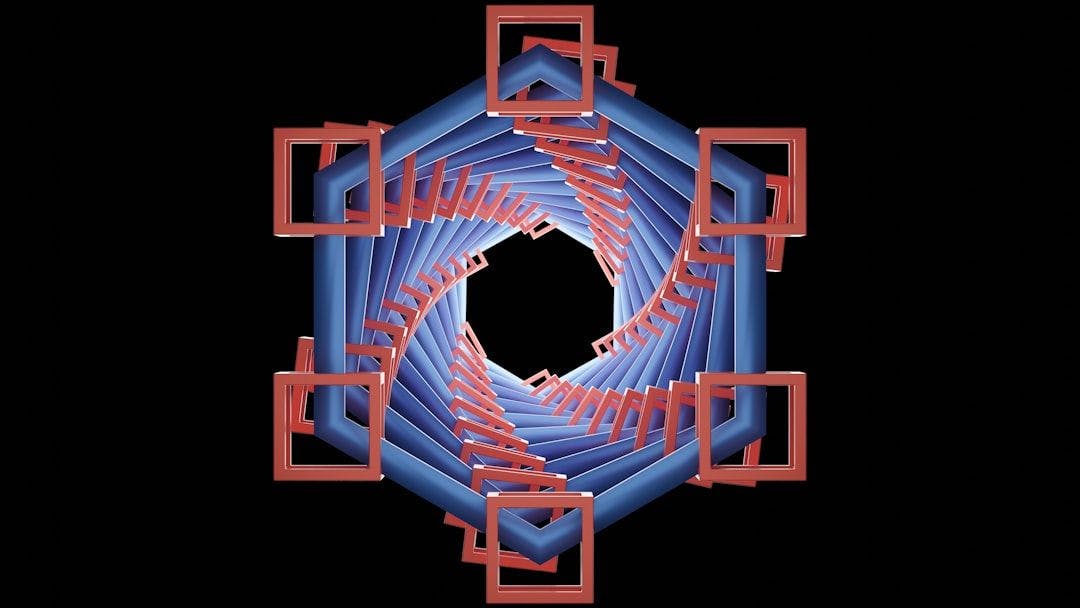

A large language model processes prompts by splitting them into small units of data called tokens. Each token contains a few letters or numbers. The LLM generates three mathematical objects called the key, value and query for every token in a prompt. Those objects help the model determine which parts of a prompt are important and which details can be deprioritized.
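The role of the key, value and query can be illustrated with a toy calculation. The sketch below is not taken from any production model: the two-dimensional embeddings and the three weight matrices are hypothetical, hand-picked numbers, and the scoring rule is the standard scaled dot-product attention formula rather than anything specific to AWS or Cerebras.

```python
import math

def matvec(matrix, vector):
    """Multiply a small matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 2-dimensional embeddings for a three-token prompt.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Hypothetical projection weights; a trained model learns these.
W_QUERY = [[1.0, 0.0], [0.0, 1.0]]
W_KEY = [[0.5, 0.0], [0.0, 2.0]]
W_VALUE = [[1.0, 1.0], [0.0, 1.0]]

# Every token gets its own query, key and value.
queries = [matvec(W_QUERY, e) for e in embeddings]
keys = [matvec(W_KEY, e) for e in embeddings]
values = [matvec(W_VALUE, e) for e in embeddings]

def attention_weights(position):
    """How strongly the token at `position` attends to itself and earlier tokens."""
    q = queries[position]
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys[: position + 1]]
    return softmax(scores)

weights = attention_weights(2)
print(weights)
```

A higher weight means the model treats that earlier token as more relevant when it processes the last one. In this toy setup the weights rise from the first token to the third.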

The step in which an LLM reads and analyzes a prompt is known as the prefill stage. It’s followed by the decode stage, which is when the model generates its answer to the user’s question.

Prefill and decoding tasks are usually performed by the same chip. In AWS’ disaggregated architecture, Trainium processors will power the prefill stage while the WSE-3 will perform decoding.
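The split can be sketched in a few lines of code. The example below is a simplified illustration, not AWS’ or Cerebras’ implementation: the function names, the `KVCache` class and the toy “model” are all hypothetical, and it assumes, as is common in disaggregated serving designs, that the keys and values computed during prefill are handed to the decode side as a cache.

```python
from dataclasses import dataclass, field

@dataclass
class KVCache:
    """Per-token key/value entries built during prefill and extended during decode."""
    keys: list = field(default_factory=list)
    values: list = field(default_factory=list)

def toy_key_value(token):
    # Stand-in for the real projections: derive two numbers from the token text.
    return float(len(token)), float(sum(map(ord, token)) % 7)

def prefill_worker(prompt_tokens):
    """Runs once over the whole prompt (the role the article assigns to Trainium)."""
    cache = KVCache()
    for token in prompt_tokens:
        key, value = toy_key_value(token)
        cache.keys.append(key)
        cache.values.append(value)
    return cache

def decode_worker(cache, max_new_tokens):
    """Generates one token per step, rereading the cache each time (the WSE-3's role)."""
    output = []
    for _ in range(max_new_tokens):
        # Toy "model": the next token depends on everything cached so far.
        summary = sum(k * v for k, v in zip(cache.keys, cache.values))
        token = f"tok{int(summary) % 10}"
        output.append(token)
        key, value = toy_key_value(token)
        cache.keys.append(key)
        cache.values.append(value)
    return output

cache = prefill_worker(["What", "is", "a", "wafer", "?"])
# Assumption: in a real deployment the cache would cross the network at this point.
generated = decode_worker(cache, max_new_tokens=3)
print(generated)
```

The point of the sketch is the shape of the work: prefill touches every prompt token once, while decode loops one token at a time and rereads the growing cache on each step.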

Decoding involves calculations similar to those of the prefill stage, but requires significantly more data movement. Information regularly travels between the underlying chip’s logic circuits and memory. The faster the chip can move the information, the faster prompt responses are generated.
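A rough back-of-the-envelope model shows why memory speed matters so much here. The sketch below assumes that generating each token requires reading every model weight from memory once and that nothing else limits throughput. It ignores compute time, batching and the key-value cache, and the numbers in the example are hypothetical rather than measurements of any real chip.

```python
def decode_tokens_per_second(memory_bandwidth_bytes_per_s, model_size_bytes):
    """Upper bound on single-stream decode speed when memory bandwidth is the only limit."""
    return memory_bandwidth_bytes_per_s / model_size_bytes

# Hypothetical example: a 10 GB model on a chip with 1 TB/s of memory bandwidth.
estimate = decode_tokens_per_second(1e12, 1e10)
print(f"At most {estimate:.0f} tokens per second")
```

Under those assumptions, doubling the memory bandwidth doubles the ceiling on decode speed, while adding more compute changes nothing.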

One of the WSE-3’s main selling points is that it can move data between its logic and memory circuits faster than many other chips. According to Cerebras, the processor provides 27 petabytes per second of internal memory bandwidth. That’s more than 200 times the amount offered by Nvidia Corp.’s NVLink graphics card interconnect.
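The comparison implies a ceiling on the NVLink figure being used. The short calculation below works it out from the two numbers quoted above, assuming decimal units (1 petabyte equals 1,000 terabytes). It does not identify which NVLink product or configuration Cerebras compared against.

```python
TB_PER_PB = 1000  # decimal units, an assumption

wse3_bandwidth_tb_s = 27 * TB_PER_PB  # 27 PB/s, per Cerebras
claimed_ratio = 200                   # "more than 200 times"

# If the WSE-3 is more than 200x faster, the NVLink figure must be below this value.
implied_nvlink_ceiling_tb_s = wse3_bandwidth_tb_s / claimed_ratio
print(f"Implied NVLink figure: under {implied_nvlink_ceiling_tb_s:.0f} TB/s")
```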

AWS will link together the Trainium and WSE-3 chips in its data centers using an internally developed network device called the Elastic Fabric Adapter, or EFA. Packets usually go through the host server’s operating system when they move between chips. The EFA skips that step to speed up connections and automatically mitigates network congestion.

“Disaggregated is ideal when you have large, stable workloads,” Cerebras Director of Product Marketing James Wang wrote in a blog post. “Most customers run a mix of workloads with different prefill/decode ratios, where the traditional aggregated approach is still ideal. We expect most customers will want access to both.”

The partnership comes a few weeks after Cerebras won another high-profile chip supply deal. OpenAI Group PBC agreed to purchase 750 megawatts’ worth of computing infrastructure from the company through 2028. The deal, which is reportedly worth over $10 billion, was announced between two funding rounds that together netted Cerebras more than $2 billion.

The chipmaker is expected to file for an initial public offering as soon as the second quarter. The deals with AWS and OpenAI may help increase investor interest in the listing.

Photo: Cerebras
