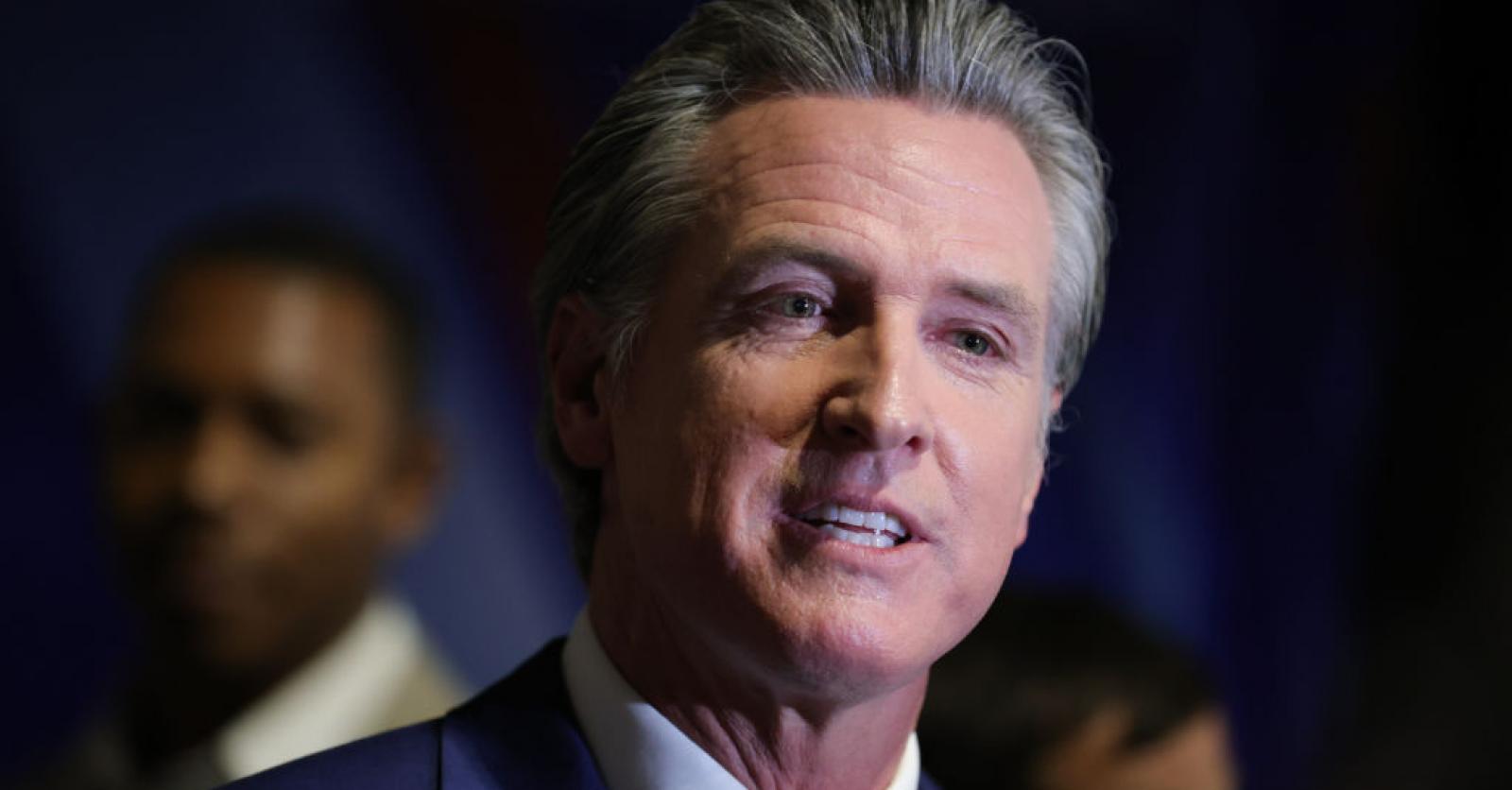

In the US state of California, home to both Hollywood and Silicon Valley, a wave of regulation is underway regarding artificial intelligence. A series of new legislative initiatives from Governor Gavin Newsom could have a profound impact on how AI will be allowed to be used in politics, the arts and other essential areas of society.

With the rise of artificial intelligence, the entertainment and tech industries are increasingly finding each other. Just this week, Lionsgate Entertainment, the film studio behind the likes of the John Wick films, announced that it is making its content library available to New York-based AI startup Runway, in exchange for a Runway-developed AI model that the studio can use in its editing and production process.

It’s the kind of deal that’s making actors, visual artists and other parties in the entertainment industry pretty nervous (Runway is facing a number of lawsuits from artists who claim the company violated their copyrights to train its algorithms). And it adds to a host of other concerns about AI.

Eight AI laws

Those concerns include the use of deepfakes in political communication, for example, and the possibility that ever smarter artificial intelligence could turn against humans. But in California, the state where Hollywood and Silicon Valley are only 600 kilometers apart, a far-reaching legislative effort is underway to regulate the development of artificial intelligence. Governor Gavin Newsom (D) has already signed eight bills into state law, with 30 more on the way. The state is “proactively promoting transparent and trustworthy AI,” Newsom said in a statement.

From nude deepfakes to election content

Among the eight bills that have since received Newsom’s signature is a law that makes it illegal to blackmail someone with AI-generated nude deepfakes. Another requires companies that create AI content to disclose that fact with a digital watermark. Under three new laws, both politicians and social media platforms must clearly indicate when election content is AI-generated. And under a final bill just signed into law, movie studios must get the consent of actors before creating an AI-generated replica of their voice or likeness.

Regulators breathing down their necks

And there is more to come. In addition to the eight recently signed laws, another thirty legislative initiatives are in the pipeline. The most contested, and by now well known among tech companies, is SB 1047, the Safe and Secure Innovation for Frontier Artificial Intelligence Models Act, which provides for broader regulation of advanced AI systems and has big tech companies feeling regulators breathing down their necks.

“There’s one bill that’s been magnified in terms of public discourse and awareness: SB 1047,” Newsom said Tuesday at the Dreamforce conference in San Francisco, in an on-stage discussion with Salesforce CEO Marc Benioff. “What are the demonstrable risks with AI, and what are the hypothetical risks? I can’t fix everything. But what can we fix? That’s the approach we’re taking here across the spectrum.”