Authors:

(1) Anthi Papadopoulou, Language Technology Group, University of Oslo, Gaustadalleen 23B, 0373 Oslo, Norway and Corresponding author ([email protected]);

(2) Pierre Lison, Norwegian Computing Center, Gaustadalleen 23A, 0373 Oslo, Norway;

(3) Mark Anderson, Norwegian Computing Center, Gaustadalleen 23A, 0373 Oslo, Norway;

(4) Lilja Øvrelid, Language Technology Group, University of Oslo, Gaustadalleen 23B, 0373 Oslo, Norway;

(5) Ildiko Pilan, Language Technology Group, University of Oslo, Gaustadalleen 23B, 0373 Oslo, Norway.

Table of Links

Abstract and 1 Introduction

2 Background

2.1 Definitions

2.2 NLP Approaches

2.3 Privacy-Preserving Data Publishing

2.4 Differential Privacy

3 Datasets and 3.1 Text Anonymization Benchmark (TAB)

3.2 Wikipedia Biographies

4 Privacy-oriented Entity Recognizer

4.1 Wikidata Properties

4.2 Silver Corpus and Model Fine-tuning

4.3 Evaluation

4.4 Label Disagreement

4.5 MISC Semantic Type

5 Privacy Risk Indicators

5.1 LLM Probabilities

5.2 Span Classification

5.3 Perturbations

5.4 Sequence Labelling and 5.5 Web Search

6 Analysis of Privacy Risk Indicators and 6.1 Evaluation Metrics

6.2 Experimental Results and 6.3 Discussion

6.4 Combination of Risk Indicators

7 Conclusions and Future Work

Declarations

References

Appendices

A. Human properties from Wikidata

B. Training parameters of entity recognizer

C. Label Agreement

D. LLM probabilities: base models

E. Training size and performance

F. Perturbation thresholds

5.2 Span Classification

The approach in the previous section has the benefit of being relatively general, as it only considers the aggregated LLM probabilities and predicted PII type. However, it does not take into account the text content of the span itself. We now present an indicator that includes this text information to predict whether a text span constitutes a high privacy risk, and should therefore be masked.

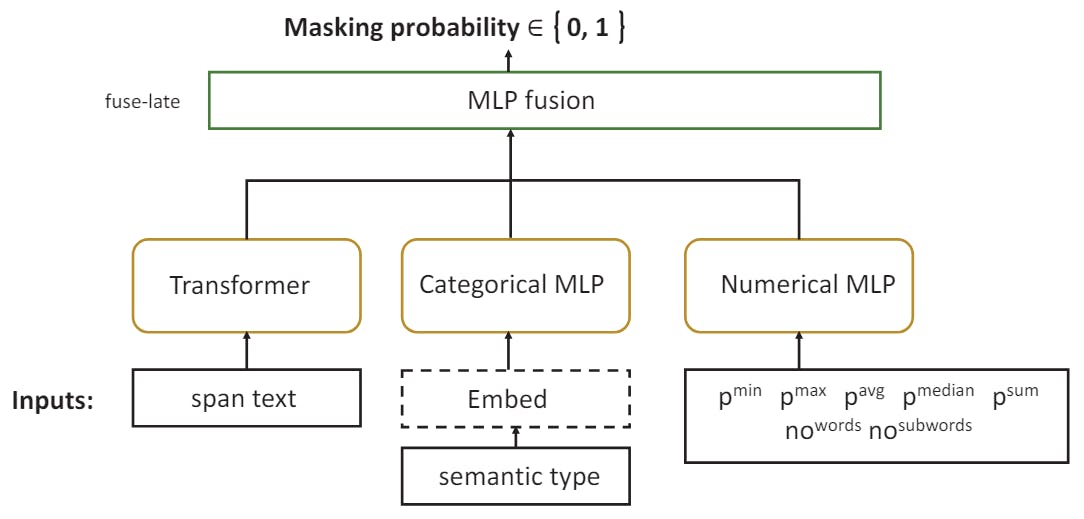

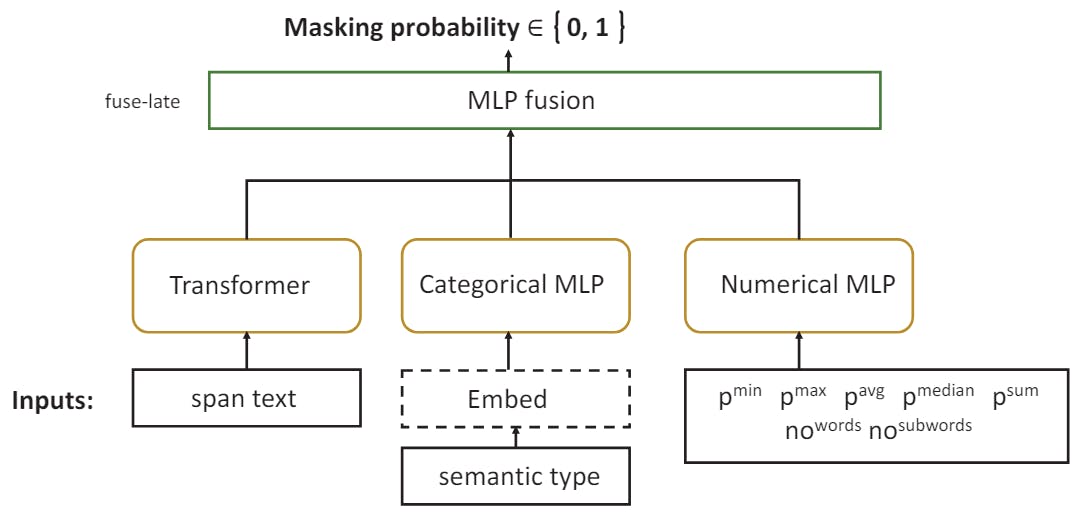

Concretely, we enrich the classifier from the previous section by adding to the features a vector representation of the text span derived from a large language model. The classifier thus relies on a combination of numerical features (the aggregated LLM probabilities discussed above, to which we add the number of words and subwords of the span), a categorical feature (an encoding of the PII type), and a vector representation of the text span. Crucially, the transformer-based language model that produces this vector representation is fine-tuned to this prediction task.

The resulting model architecture is illustrated in Figure 2. Concretely, the model training is done using AutoGluon’s Multimodal predictor (Shi et al., 2021), making it easier to incorporate a wide range of feature types, including textual content processed with a neural language model. We employ here the Electra Discriminator model due to its good encoding performance (Clark et al., 2020; Shi et al., 2021). The categorical and numerical features are processed by a standard MLP. After each model is trained separately, their output is pooled (concatenation) by a MLP model using a fuse-late strategy near the output layer. Table 4 provides some examples of input.

For our experiments, we again fit the classifier using the training datasets of the annotated collection of Wikipedia biographies and the TAB corpus.

5.3 Perturbations

The two previous risk indicators use features extracted from the text span to emulate masking decisions from human annotators. They are not, however, able to quantify how much information a PII span can contribute to the re-identification. They also consider each PII span in isolation, thereby ignoring the fact that quasi-identifiers constitute a privacy risk precisely because of their combination with one another. We now turn to a privacy risk indicator that seeks to address those two challenges.

This approach is inspired by saliency methods (Li et al., 2016; Ding et al., 2019) used to explain and interpret the outputs of large language models. One such method is iwhen one changes the input to the model by either modification or removal, and observes how this change affects the downstream predictions (Kindermans et al., 2019; Li et al., 2017; Serrano and Smith, 2019). In our case, we perturb the input by altering one or more PII spans and analyse the consequences of this change on the probability produced by the language model for another PII span in the text.

Consider the following text:

To assess which spans to mask, we use a RoBERTa large model (Liu et al., 2019) and all possible combinations of PII in the text in a perturbation-based setting. We begin by calculating the probability of a target span with the rest of the context available in the text. We then mask in turn every other PII span in the document and recompute the probability of the target span to determine how the absence of the PII span affects the probability of the target span.

To obtain sensible estimates, we must mask all spans that refer to the same entity while calculating the probabilities. In Example 9, Michael Linder and Linder refer to the same entity. We use in our experiments the co-reference links already annotated in the TAB corpus and the collection of Wikipedia biographies.