Reconciliation at scale is the difference between trusting your financial records and spending nights chasing mismatches across multiple parties. At Lead Bank, we build the infrastructure that collects, processes, and reconciles data for card and deposit programs to support correctness, auditability, and compliance obligations.

Reconciliation verifies consistency across the bank core, card network files, issuing processor reports, and fintech program data. Because it spans multiple parties, the workflow is inherently multi-legged, and a single mismatch can trigger costly investigation and elevate compliance risk.

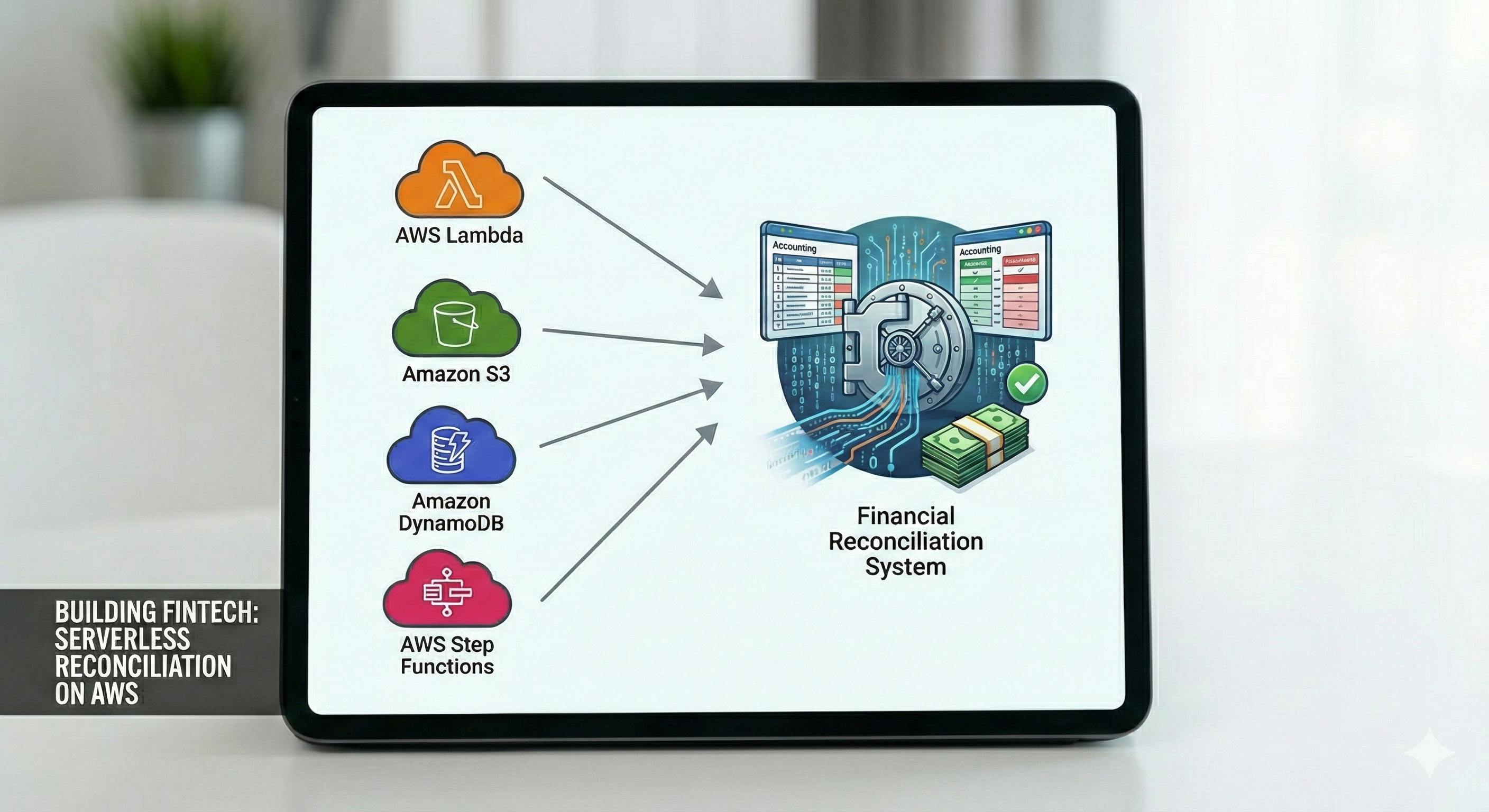

This post walks through the AWS serverless architecture we built for multi-party reconciliation, the two production bottlenecks we encountered first (file processing limits and Amazon DynamoDB write hot spots), and the patterns we used to mitigate them. It is written for software engineers and architects who want a practical blueprint for building a serverless reconciliation engine.

I. Context and technical constraints for reconciliation at scale

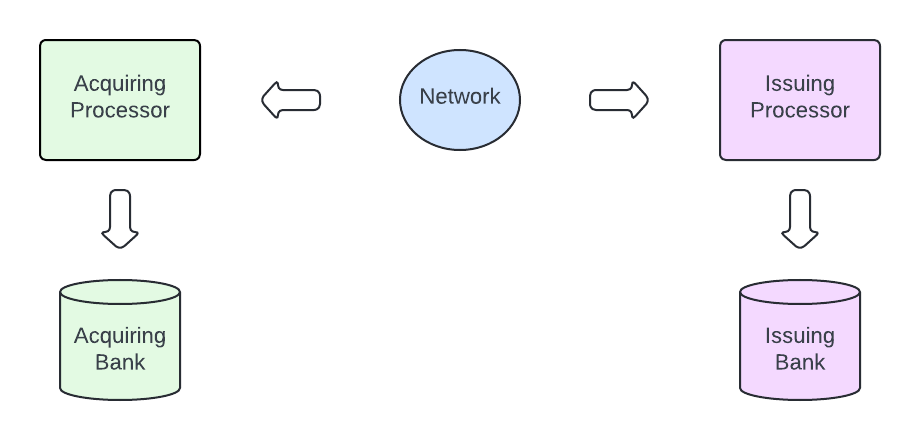

In Banking-as-a-Service (BaaS) card programs, fintechs typically operate through a Bank Identification Number (BIN) sponsor, an FDIC-insured issuing bank that holds the card network license and is accountable for settlement and program oversight. Programs commonly involve four roles: the card network (for routing and rules), the issuing bank (legal issuer and settlement counterparty), the fintech program manager (end-user experience and operations), and an issuing processor (real-time authorization and card account operations).

A card purchase begins with an authorization request, typically carried in an ISO 8583 message, a common standard for real-time card-transaction messages exchanged between acquirers and issuers over card networks. The network uses the card’s BIN to route the request to its issuer for an approve or decline decision. Authorization does not move funds.

Actual funds settlement occurs the next day, when the network distributes network settlement files to all participants.

This separation between real-time authorization and batch settlement is a primary reason reconciliation is file-driven and operationally critical.

Reconciliation is the control that verifies consistency across internal records and external sources of truth, including the bank core, card network or processor files, and fintech program data. Because these systems differ in identifiers, timing, and adjustment semantics, reconciliation is inherently multi-party and multi-step. A single mismatch can trigger costly investigation and elevate compliance risk.

In this post, an entity is the end consumer of the fintech program. An account is an object that holds metadata about the entity’s relationship with the fintech program. A balance is an object that holds a value, which can be denominated in various currencies. A transaction is an object that describes a financial event, such as a purchase, payment, or refund, that changes the value of a given balance. A card is one of the instruments that can be used to perform a transaction and change the balance. The bank core is the ledger that holds the program’s money.

In practice, reconciliation breaks into four recurring workflows, each with distinct technical constraints:

- Verify transactions that constitute the balance.

- Prove that the sum of customer-level balances equals the bank core’s omnibus For-Benefit-Of (FBO) balance.

- Match posted card activity to network or processor settlement files despite volume, format variability, and mismatched identifiers or timing across parties.

- Validate identity, card status, and account authorization reference data that can invalidate financial matching if it is stale or inconsistent.
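The first two workflows reduce to arithmetic invariants. The Go sketch below is illustrative only. The `Transaction` and `Balance` types, their field names, the opening/closing balance model, and the use of integer minor units (cents) to keep sums exact are all our assumptions, not a production schema.

```go
package main

import "fmt"

// Transaction is a simplified, hypothetical financial event. Amounts are in
// integer minor units (for example, cents) so that sums are exact.
type Transaction struct {
	ID        string
	AccountID string
	Amount    int64 // credits are positive, debits are negative
}

// Balance is a hypothetical end-of-day balance record for one account.
type Balance struct {
	AccountID string
	Opening   int64
	Closing   int64
}

// VerifyBalance checks workflow 1: the opening balance plus the account's
// transactions for the day must equal the reported closing balance.
func VerifyBalance(b Balance, txns []Transaction) error {
	computed := b.Opening
	for _, t := range txns {
		if t.AccountID == b.AccountID {
			computed += t.Amount
		}
	}
	if computed != b.Closing {
		return fmt.Errorf("account %s: computed %d, reported %d (break of %d)",
			b.AccountID, computed, b.Closing, b.Closing-computed)
	}
	return nil
}

// VerifyFBO checks workflow 2: customer-level closing balances must sum to
// the bank core's omnibus FBO balance. It returns FBO minus the sum.
func VerifyFBO(balances []Balance, fboBalance int64) (diff int64, ok bool) {
	var total int64
	for _, b := range balances {
		total += b.Closing
	}
	return fboBalance - total, fboBalance == total
}

func main() {
	a1 := Balance{AccountID: "A1", Opening: 10_000, Closing: 7_500}
	txns := []Transaction{
		{ID: "t1", AccountID: "A1", Amount: -3_000},
		{ID: "t2", AccountID: "A1", Amount: 500},
	}
	fmt.Println(VerifyBalance(a1, txns)) // <nil>

	a2 := Balance{AccountID: "A2", Opening: 2_500, Closing: 2_500}
	fmt.Println(VerifyFBO([]Balance{a1, a2}, 10_000)) // 0 true
}
```

Returning the size of the break, and not just a boolean, gives investigators a starting point.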

Although many workflows run through application programming interfaces (APIs), reconciliation is still dominated by end-of-day files because they provide an auditable snapshot and support deterministic reprocessing. At scale, these constraints shape the architecture more than the business logic does:

- Imperfect file delivery and replays: files arrive late, out of order, duplicated, or corrected, so the pipeline must be idempotent and safe to re-run.

- Identifiers do not line up cleanly: the same transaction can carry different IDs across parties, so matching often requires composite keys and correlation logic.

- Adjustment-heavy settlement semantics: refunds, disputes, fees, and corrections change matching rules over time and increase write amplification.

- Two production bottlenecks appear early: long-running file processing can exceed short-lived compute limits, and high write volume can create uneven access patterns and hot partitions in the reconciliation datastore.
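To make the first two constraints concrete, here is a hedged Go sketch. It normalizes records from different parties into a composite match key, and it drops exact replays so that a re-delivered file does not double count. The `Record` fields, the chosen key components (last four card digits, authorization code, amount, settlement date), and the date layouts are hypothetical. Real matching rules depend on each partner's file format.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
	"time"
)

// Record is a hypothetical, already-parsed row from one party's file.
type Record struct {
	Source    string // e.g. "network", "processor", "fintech"
	SourceID  string // the party's own identifier; not shared across parties
	CardLast4 string
	AuthCode  string
	Amount    int64  // minor units
	Date      string // layouts differ by party
}

// dateLayouts are the date formats we assume partners use.
var dateLayouts = []string{"20060102", "2006-01-02", "01/02/2006"}

func normalizeDate(s string) (string, error) {
	s = strings.TrimSpace(s)
	for _, layout := range dateLayouts {
		if t, err := time.Parse(layout, s); err == nil {
			return t.Format("2006-01-02"), nil
		}
	}
	return "", fmt.Errorf("unrecognized date %q", s)
}

// MatchKey builds a composite key intended to be identical for the same
// transaction no matter which party reported it.
func MatchKey(r Record) (string, error) {
	d, err := normalizeDate(r.Date)
	if err != nil {
		return "", err
	}
	return strings.Join([]string{
		strings.TrimSpace(r.CardLast4),
		strings.ToUpper(strings.TrimSpace(r.AuthCode)),
		strconv.FormatInt(r.Amount, 10),
		d,
	}, "#"), nil
}

// Dedupe drops exact replays (same source, same source ID), so a file that is
// delivered twice or re-run does not double count.
func Dedupe(records []Record) []Record {
	seen := make(map[string]bool)
	out := make([]Record, 0, len(records))
	for _, r := range records {
		k := r.Source + "|" + r.SourceID
		if seen[k] {
			continue
		}
		seen[k] = true
		out = append(out, r)
	}
	return out
}

func main() {
	nr := Record{Source: "network", SourceID: "N-1", CardLast4: "1234", AuthCode: "ab12c ", Amount: 1999, Date: "20250101"}
	fr := Record{Source: "fintech", SourceID: "F-9", CardLast4: "1234", AuthCode: "AB12C", Amount: 1999, Date: "2025-01-01"}
	k1, _ := MatchKey(nr)
	k2, _ := MatchKey(fr)
	fmt.Println(k1, k1 == k2)                       // 1234#AB12C#1999#2025-01-01 true
	fmt.Println(len(Dedupe([]Record{nr, nr, fr}))) // 2
}
```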

In this post, we focus on the technical challenges of financial reconciliation, specifically balance checks and network settlement matching, and how we designed an AWS serverless pipeline around these constraints.

II. The Serverless Architecture: Decoupling and Orchestration

With these constraints in mind, we committed to a serverless-first architecture to minimize operational overhead, maximize availability, and support automatic scaling. This approach allowed our small team to iterate quickly and focus our engineering effort on complex financial business logic rather than on infrastructure maintenance and patching, significantly reducing our DevOps and infrastructure costs. Our primary tools are Amazon Simple Storage Service (Amazon S3), Amazon EventBridge, Amazon Simple Queue Service (Amazon SQS), Amazon DynamoDB, AWS Lambda, Amazon Elastic Container Service (Amazon ECS), and AWS Step Functions. To keep this post focused, we cover only the financial files.

A. Ingestion and Event Routing (Amazon S3 and Amazon EventBridge)

The process begins when one of the files (Transaction, Balance, or Network Settlement) is dropped into a Secure File Transfer Protocol (SFTP) folder that is synced to Amazon S3 buckets. In our design, we routed S3 object-created events through EventBridge instead of using direct S3 notifications. This made the ingestion path easier to evolve because producers and consumers remained loosely coupled. It also gave us a consistent place to apply filtering and routing rules across multiple event sources.
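For illustration, here is a minimal Go sketch of what a downstream consumer receives when S3 sends an `Object Created` event through EventBridge (this requires EventBridge notifications to be enabled on the bucket). The sketch also classifies the file by key prefix. The envelope fields used (`source`, `detail-type`, `detail.bucket.name`, `detail.object.key`, `detail.object.size`) follow the S3-to-EventBridge event shape. The `transactions/`, `balances/`, and `settlement/` prefixes and the `Classify` helper are hypothetical.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// objectCreated models the subset of the S3 "Object Created" event, as
// delivered by EventBridge, that this sketch uses.
type objectCreated struct {
	Source     string `json:"source"`
	DetailType string `json:"detail-type"`
	Detail     struct {
		Bucket struct {
			Name string `json:"name"`
		} `json:"bucket"`
		Object struct {
			Key  string `json:"key"`
			Size int64  `json:"size"`
		} `json:"object"`
	} `json:"detail"`
}

// FileEvent is what downstream steps need to know about a new file.
type FileEvent struct {
	Bucket, Key, Kind string
	Size              int64
}

// prefixRoutes maps hypothetical key prefixes to reconciliation file kinds.
var prefixRoutes = []struct{ prefix, kind string }{
	{"transactions/", "transaction"},
	{"balances/", "balance"},
	{"settlement/", "network_settlement"},
}

// Classify decodes an EventBridge event and infers the file kind from its key.
func Classify(raw []byte) (FileEvent, error) {
	var e objectCreated
	if err := json.Unmarshal(raw, &e); err != nil {
		return FileEvent{}, err
	}
	if e.Source != "aws.s3" || e.DetailType != "Object Created" {
		return FileEvent{}, fmt.Errorf("unexpected event %q/%q", e.Source, e.DetailType)
	}
	fe := FileEvent{Bucket: e.Detail.Bucket.Name, Key: e.Detail.Object.Key, Size: e.Detail.Object.Size, Kind: "unknown"}
	for _, r := range prefixRoutes {
		if strings.HasPrefix(fe.Key, r.prefix) {
			fe.Kind = r.kind
			break
		}
	}
	return fe, nil
}

func main() {
	raw := []byte(`{"source":"aws.s3","detail-type":"Object Created",
		"detail":{"bucket":{"name":"recon-inbox"},
		"object":{"key":"settlement/2025-01-01.dat","size":2048}}}`)
	fe, err := Classify(raw)
	fmt.Printf("%+v %v\n", fe, err)
}
```

In practice, the EventBridge rule's event pattern does most of this filtering. The defensive check in the consumer guards against misrouted events.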

B. Durable Processing Queue (Amazon SQS)

In our design, the EventBridge rule targets an SQS standard queue to provide durable buffering and decouple ingestion from processing. This queue serves three vital purposes:

- Throttling/Load Leveling: It smooths out ingestion spikes. If three massive files arrive simultaneously, the queue absorbs the load, helping to ensure our downstream compute services pull messages at a steady, sustainable rate rather than being overwhelmed.

- Durability: Messages persist in SQS until a consumer deletes them after successful processing (or until the queue's retention period expires), which provides at-least-once delivery.

- Dead Letter Queue (DLQ): Every SQS queue is configured with a DLQ. If a message repeatedly fails processing (e.g., its receive count exceeds a maximum of 5), SQS moves it to the DLQ for manual inspection, so that no transaction is silently lost.
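The DLQ behavior above is driven by the source queue's `RedrivePolicy` attribute. This attribute is a JSON document with `deadLetterTargetArn` and `maxReceiveCount` fields. As a minimal sketch, the Go snippet below builds that attribute value with a `maxReceiveCount` of 5. The ARN is a placeholder and the `BuildRedrivePolicy` helper is our own. You would pass the resulting string to `SetQueueAttributes` (or the equivalent infrastructure-as-code property) with your tool of choice.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// RedrivePolicy mirrors the JSON document stored in a queue's RedrivePolicy attribute.
type RedrivePolicy struct {
	DeadLetterTargetArn string `json:"deadLetterTargetArn"`
	MaxReceiveCount     int    `json:"maxReceiveCount,string"` // serialized as a quoted number, e.g. "5"
}

// BuildRedrivePolicy returns the attribute value as a JSON string.
func BuildRedrivePolicy(dlqArn string, maxReceives int) (string, error) {
	if maxReceives < 1 {
		return "", fmt.Errorf("maxReceiveCount must be at least 1, got %d", maxReceives)
	}
	b, err := json.Marshal(RedrivePolicy{DeadLetterTargetArn: dlqArn, MaxReceiveCount: maxReceives})
	return string(b), err
}

func main() {
	// Placeholder ARN for illustration only.
	p, err := BuildRedrivePolicy("arn:aws:sqs:us-east-1:111122223333:recon-dlq", 5)
	if err != nil {
		panic(err)
	}
	fmt.Println(p)
	// {"deadLetterTargetArn":"arn:aws:sqs:us-east-1:111122223333:recon-dlq","maxReceiveCount":"5"}
}
```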

C. Compute Layer (AWS Lambda and Amazon ECS)

Our compute infrastructure is strategically split to optimize for both cost and duration:

- AWS Lambda: Handles the lightweight, bursty, and orchestration work, such as message parsing and starting the Step Functions workflow that fans out with a Map state. Lambda starts quickly and is cost-effective for short-lived tasks that reliably complete within its 15-minute maximum timeout.

- Amazon ECS on AWS Fargate: Reserved for reconciliation jobs that require high memory or exceed the 15-minute Lambda timeout (a common bottleneck, as detailed in Section III). We containerized the core reconciliation logic, which let us move these longer-running, compute-intensive jobs to Fargate without rewriting code.

D. Workflow Orchestration (AWS Step Functions)

Reconciliation is a long-running, often multi-day workflow, which makes AWS Step Functions a good fit for orchestrating the individual compute steps. We chose Step Functions specifically because it provides the durability and fault tolerance that are non-negotiable for financial compliance, without requiring us to write complex retry and state management code.

The reasons for this selection are rooted in architectural necessity:

- Managed State and Durability: Reconciliation involves dozens of sequential and parallel steps (splitting, normalizing, matching, aggregating). Step Functions manages the state between these steps. When a downstream service fails (e.g., a Lambda error), the execution records the exact point of failure, applies any configured retries, and can be resumed (redriven) from that point rather than from the beginning, which is critical for auditability and recovery.

- Massive Parallelism via Map State: We ran independent tasks concurrently with the Parallel state, and when we needed to run the same task over many different inputs, we used the Map state.

- Visual Debugging and Auditability: For compliance and operations, the ability to visualize the entire workflow is invaluable. Step Functions provides a graphical view of each step, including its inputs, outputs, and failure reasons. This makes it simple for the on-call team to track the state of a particular task and start debugging.
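To feed a Map state, a splitter step can emit one small descriptor per chunk instead of passing records through the state machine. This keeps each iteration's input well under the 256 KiB Step Functions limit on data passed between states. The Go sketch below is hypothetical. The `Chunk` fields, the line-range approach, and the `SplitIntoChunks` helper are our assumptions. The 20,000-record chunk size echoes the example in Section III.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Chunk describes one slice of a source file for a single Map iteration.
type Chunk struct {
	Bucket    string `json:"bucket"`
	Key       string `json:"key"`
	Index     int    `json:"index"`
	StartLine int    `json:"startLine"` // inclusive, 0-based
	EndLine   int    `json:"endLine"`   // exclusive
}

// SplitIntoChunks returns descriptors covering totalLines records in chunks
// of at most chunkSize lines each.
func SplitIntoChunks(bucket, key string, totalLines, chunkSize int) []Chunk {
	if chunkSize <= 0 {
		return nil
	}
	var chunks []Chunk
	for start, i := 0, 0; start < totalLines; start, i = start+chunkSize, i+1 {
		end := start + chunkSize
		if end > totalLines {
			end = totalLines
		}
		chunks = append(chunks, Chunk{Bucket: bucket, Key: key, Index: i, StartLine: start, EndLine: end})
	}
	return chunks
}

func main() {
	chunks := SplitIntoChunks("recon-inbox", "transactions/2025-01-01.dat", 45_000, 20_000)
	items, err := json.Marshal(chunks) // this array becomes the Map state's items
	if err != nil {
		panic(err)
	}
	fmt.Println(len(chunks)) // 3
	fmt.Println(string(items))
}
```

Each Map iteration then reads only its own line range from S3, so a failed iteration can be retried without reprocessing the whole file.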

E. Persistence Layer (Amazon DynamoDB)

We chose DynamoDB as our core database for storing immutable transaction and balance records because of characteristics that are essential for a large card program:

- High Volume, Low Latency: DynamoDB is designed for predictable, single-digit millisecond latency at a high volume, which is needed for financial workflows.

- Serverless and Managed: By leveraging its fully managed nature, we eliminated the overhead of provisioning, patching, and sharding a traditional relational database (MySQL/Postgres), allowing us to focus entirely on application-level challenges such as partition key design.

- Streams Integration: DynamoDB Streams are vital, providing a near-real-time stream of changes that trigger downstream compliance, analytics, and data warehousing (Snowflake) systems.

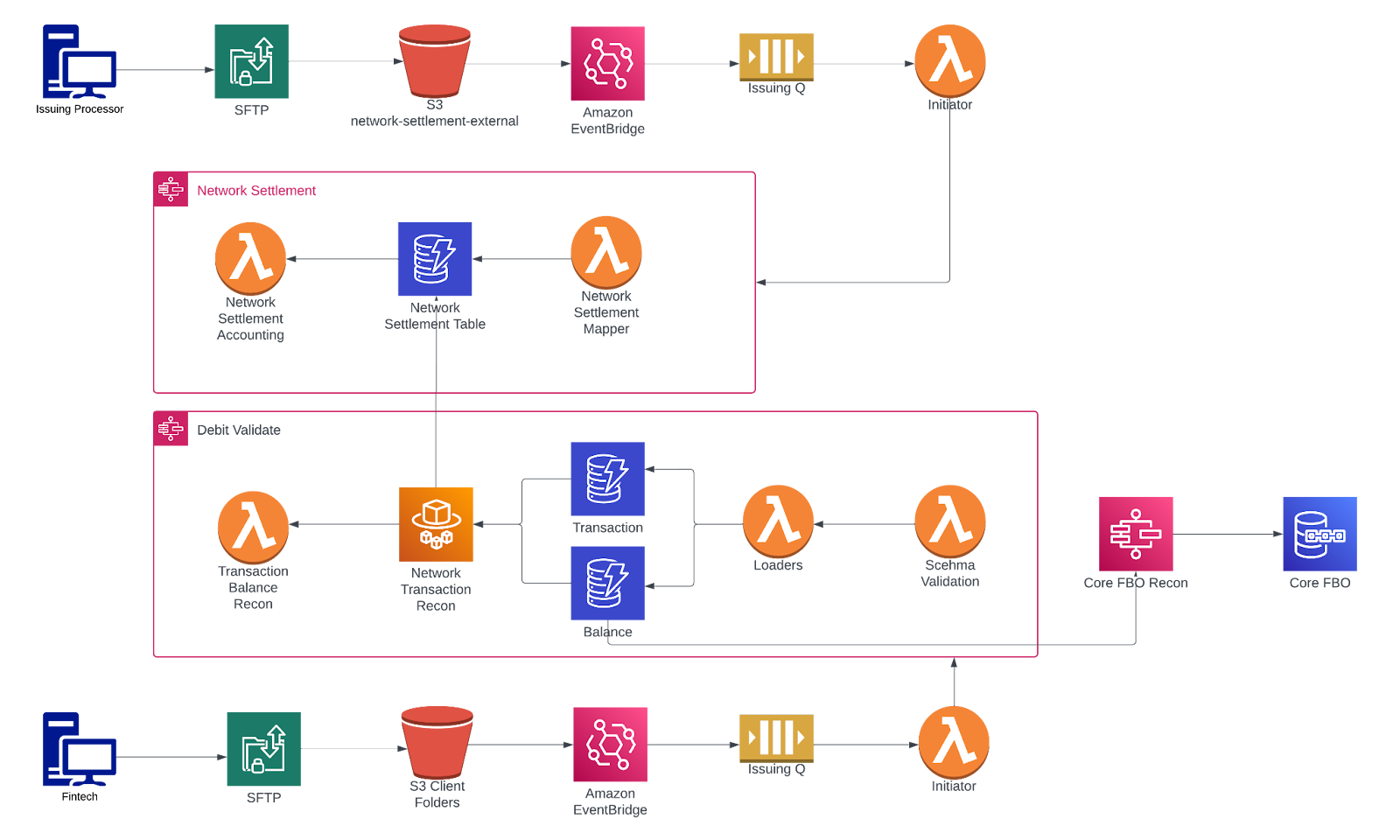

The diagram above shows a simplified version of our debit card flows.


In the top half, the issuing processor drops network files that are synced to S3. An EventBridge rule listens for new S3 events and forwards them to the issuing SQS queue. The Initiator Lambda continuously polls this queue and triggers the Network Settlement Step Function, which parses the network settlement file and stores the parsed data in the Network Settlement DynamoDB table.

In the bottom half, the fintech flow begins when the fintech partner drops the transaction and balance files. This triggers an event routed through the SQS queue to the Initiator Lambda, which then starts a separate Step Function that validates and reconciles the files against the network settlement data. This process is called network reconciliation.

On the right side, we have FBO reconciliation, which reconciles the fintech balance file with the settled amount in the FBO account, to support end-to-end accuracy across network, fintech, and bank records.
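At its core, network reconciliation compares two sets of records keyed by a shared match key. The following Go sketch is a simplified, hypothetical version of that comparison. It assumes both sides have already been normalized to the same key (for example, a composite key built from normalized fields) with amounts in minor units. It sorts the results into four buckets for investigation.

```go
package main

import (
	"fmt"
	"sort"
)

// Result groups match keys by outcome of a two-sided comparison.
type Result struct {
	Matched        []string
	AmountMismatch []string
	MissingNetwork []string // reported by the fintech, absent from the settlement data
	MissingFintech []string // settled by the network, absent from the fintech file
}

// Reconcile compares fintech-reported amounts with network-settled amounts by key.
func Reconcile(fintech, network map[string]int64) Result {
	var r Result
	for k, amt := range fintech {
		netAmt, ok := network[k]
		switch {
		case !ok:
			r.MissingNetwork = append(r.MissingNetwork, k)
		case netAmt != amt:
			r.AmountMismatch = append(r.AmountMismatch, k)
		default:
			r.Matched = append(r.Matched, k)
		}
	}
	for k := range network {
		if _, ok := fintech[k]; !ok {
			r.MissingFintech = append(r.MissingFintech, k)
		}
	}
	// Map iteration order is random; sort so reports are reproducible.
	for _, s := range [][]string{r.Matched, r.AmountMismatch, r.MissingNetwork, r.MissingFintech} {
		sort.Strings(s)
	}
	return r
}

func main() {
	fintech := map[string]int64{"k1": 1000, "k2": 500, "k3": 250}
	network := map[string]int64{"k1": 1000, "k2": 450, "k4": 75}
	fmt.Printf("%+v\n", Reconcile(fintech, network))
	// {Matched:[k1] AmountMismatch:[k2] MissingNetwork:[k3] MissingFintech:[k4]}
}
```

Because settlement lags authorization, many `MissingNetwork` items are timing differences that clear in the next day's file. A real pipeline would likely carry them forward for a window before flagging them.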

III. The Scaling Battle: Overcoming Serverless Limits

This architecture worked well initially, but as our volume grew, we hit two critical bottlenecks that forced us to evolve our approach: compute duration and database partitioning.

A. Optimizing Compute: The Lambda-to-ECS Migration

The most immediate bottleneck was Lambda’s 15-minute execution limit. While file splitting helped, complex reconciliation logic across large chunks (e.g., 20,000 records in a wide-column, fixed-width file) still occasionally exceeded this window.

Our solution was not a redesign, but a simple architectural switch:

- Unified Codebase: We maintained a single core reconciliation worker, a containerized application written in Go, which handled file parsing and processing.

- Dynamic Execution: We deployed this container image to both Lambda (for fast, ephemeral execution) and ECS Fargate (for long-running, memory-intensive jobs).

- Step Functions Choice state: Early in the workflow, a Choice state checks the file size. If the file is below a threshold (e.g., 1 GB), the workflow routes to a Task state that invokes the AWS Lambda function. If the file is larger, it routes to a Task state that uses the Step Functions integration for Amazon ECS (RunTask) to start a dedicated Fargate task.

This approach gave us a practical balance: the cost efficiency and speed of Lambda for the 90% of files that are small, and the compute headroom of Fargate for the remaining 10% of massive, slow-to-process transaction and balance files.
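A Choice state like the one described above is expressed in Amazon States Language (ASL). To stay in one language, the sketch below builds a minimal ASL definition from Go maps and prints it as JSON. Several details are illustrative assumptions: the state names, the `$.sizeBytes` input field, the function, cluster, task definition and subnet names, and the 1 GB threshold. `arn:aws:states:::lambda:invoke` and `arn:aws:states:::ecs:runTask.sync` are the Step Functions integration resources for invoking a Lambda function and for running an ECS task and waiting for it to finish. Container overrides that would pass the file location to the task are omitted for brevity.

```go
package main

import (
	"encoding/json"
	"fmt"
)

const oneGB = 1 << 30 // routing threshold in bytes; tune to your workload

// Definition returns a minimal ASL state machine that routes small files to
// Lambda and large files to an ECS task on Fargate.
func Definition() map[string]any {
	return map[string]any{
		"StartAt": "ChooseCompute",
		"States": map[string]any{
			"ChooseCompute": map[string]any{
				"Type": "Choice",
				"Choices": []any{
					map[string]any{
						"Variable":        "$.sizeBytes",
						"NumericLessThan": oneGB,
						"Next":            "ReconcileOnLambda",
					},
				},
				"Default": "ReconcileOnFargate",
			},
			"ReconcileOnLambda": map[string]any{
				"Type":     "Task",
				"Resource": "arn:aws:states:::lambda:invoke",
				"Parameters": map[string]any{
					"FunctionName": "recon-worker", // hypothetical
					"Payload.$":    "$",
				},
				"End": true,
			},
			"ReconcileOnFargate": map[string]any{
				"Type":     "Task",
				"Resource": "arn:aws:states:::ecs:runTask.sync",
				"Parameters": map[string]any{
					"LaunchType":     "FARGATE",
					"Cluster":        "recon-cluster", // hypothetical
					"TaskDefinition": "recon-worker",  // hypothetical
					"NetworkConfiguration": map[string]any{
						"AwsvpcConfiguration": map[string]any{
							"Subnets": []string{"subnet-placeholder"},
						},
					},
				},
				"End": true,
			},
		},
	}
}

func main() {
	out, err := json.MarshalIndent(Definition(), "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

Because both Task states run the same container image, the Choice state decides only where the code runs, not what it does.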

B. Mastering DynamoDB for High-Throughput Writes

DynamoDB is a strong fit for high-volume, low-latency transaction storage. However, its scalability depends on distributing I/O evenly across partitions, and we immediately ran into write throttling due to hot partitions.

Throttling occurs when traffic to a single partition exceeds its per-partition limit of 1,000 write capacity units (WCU) or 3,000 read capacity units (RCU) per second. Our primary reconciliation access pattern was to find all transactions for a specific program on a particular date, so we used the partition key ProgramID#FileDate (e.g., PROGX#20250101). This design failed because all transactions for a massive program on a single day hit the same partition, exceeding the WCU limit and throttling ingestion.

The Global Secondary Index (GSI) Backpressure Lesson: We initially tried to relieve the hot key by keeping a high-cardinality key on the base table and moving the ProgramID#FileDate access pattern to a GSI that we queried instead. This provided no relief. DynamoDB propagates every base-table write to the GSI, so the same low-cardinality key simply created a hot partition in the index. When a GSI cannot absorb its writes, DynamoDB throttles writes to the base table as well (GSI backpressure), which limited the write throughput of the entire table. We realized the partition key issue had to be solved in the key design itself rather than moved to an index.

Optimized Key Design: Partition Key Sharding

Our fix was to introduce write sharding for our highest-volume customers:

- PK Sharding: We appended a calculated shard number to the partition key: ProgramID#FileDate#ShardN.

- Sharding Logic: We assigned each transaction to a shard with a lightweight, deterministic function of its TransactionID, such as a modulo (e.g., TransactionID % 10 yields a shard number between 0 and 9; non-numeric IDs can be hashed first).

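- Result: This spread the write load for a program-day across ten partition keys instead of one, alleviating the hot partition issue and allowing us to ingest data at a 10x higher rate. The trade-off is that reading a program's transactions for a date now requires querying all ten shard keys and merging the results.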
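The sharding scheme above can be sketched in a few lines of Go. This is a hypothetical illustration, not production code. It assumes string transaction IDs, so it hashes them with FNV-1a before taking the modulo, and it fixes the shard count at 10. With ten shard keys, the theoretical write ceiling for one program-day rises from 1,000 to 10,000 WCU per second, assuming the keys land on different partitions. (One WCU covers one standard write per second of an item up to 1 KB.) Because the shard is a deterministic function of the TransactionID, a retried write always lands on the same key, which keeps conditional, idempotent writes effective. The read side has to fan out across every shard key.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

const shardCount = 10

// ShardFor deterministically maps a transaction ID to a shard in [0, shardCount).
func ShardFor(transactionID string) int {
	h := fnv.New32a()
	h.Write([]byte(transactionID)) // writes to a hash.Hash never return an error
	return int(h.Sum32() % shardCount)
}

// PartitionKey builds the write-sharded key ProgramID#FileDate#ShardN.
func PartitionKey(programID, fileDate, transactionID string) string {
	return fmt.Sprintf("%s#%s#Shard%d", programID, fileDate, ShardFor(transactionID))
}

// AllShardKeys returns every partition key to query when reading one
// program's transactions for one date (scatter-gather read).
func AllShardKeys(programID, fileDate string) []string {
	keys := make([]string, 0, shardCount)
	for i := 0; i < shardCount; i++ {
		keys = append(keys, fmt.Sprintf("%s#%s#Shard%d", programID, fileDate, i))
	}
	return keys
}

func main() {
	fmt.Println(PartitionKey("PROGX", "20250101", "txn-000123"))
	fmt.Println(len(AllShardKeys("PROGX", "20250101"))) // 10
}
```

A reader would issue one `Query` per key returned by `AllShardKeys`, typically in parallel, and merge the results.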

IV. Actionable Lessons Learned

This section summarizes patterns you can apply to your own reconciliation pipelines, with examples from our implementation.

A. System Design Lessons

Here are three system design lessons you can apply when you are building a reconciliation pipeline that has to handle retries, imperfect inputs, and multiple external systems:

- Make retries safe by default (idempotency is the baseline): In an event-driven pipeline, retries are expected, so you should design each step to be safely repeatable. For our use case, we passed an idempotency key (for example, a TransactionID or MessageID) through the workflow and used it to guard every side-effecting operation. In practice, that means:

  - Workflow steps: each state transition is protected with conditional writes (for example, in DynamoDB) so the same message cannot advance or mutate state twice.

  - Core banking transactions: postings, transfers, and ledger-affecting actions are executed with explicit dedupe semantics (same idempotency key → same outcome), so a retry results in a no-op or a deterministic “already processed” response rather than a duplicate movement of funds.

  - Webhooks and outbound notifications: these are delivered at least once, so each message includes a stable, recipient-facing idempotency key (event ID), and we maintain an internal delivery ledger (attempts, acknowledgements, and final state). Recipients can safely dedupe replays, and our retries won’t trigger duplicate downstream actions.

- Always Use a Dead Letter Queue (DLQ): Configure an SQS DLQ and a redrive policy so that messages that keep failing, such as malformed ones, are quarantined instead of endlessly retrying and clogging the queue.

- Do not let a service be the only source of truth for its own correctness: Reconciliation is cross-system by definition, so build checks that validate invariants from the outside. For example, comparing the transactions a program reports against both the network settlement data and the program's FBO account was essential for us.
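The guard pattern in the first lesson can be sketched without any AWS dependencies. The Go example below is a minimal, hypothetical in-memory stand-in for an idempotency table. The first caller with a given key executes the side effect and records its outcome. Any retry with the same key gets the stored outcome back instead of repeating the side effect. In DynamoDB, the equivalent check is a `PutItem` with a `ConditionExpression` such as `attribute_not_exists(pk)`, where a losing writer receives a `ConditionalCheckFailedException`. The `Store` type and `Do` method are our own names.

```go
package main

import (
	"fmt"
	"sync"
)

// Store is an in-memory stand-in for an idempotency table.
type Store struct {
	mu       sync.Mutex
	outcomes map[string]string
}

func NewStore() *Store { return &Store{outcomes: make(map[string]string)} }

// Do runs effect at most once per idempotency key and returns the recorded
// outcome on every call. The bool reports whether this call executed the
// effect (false means "already processed").
func (s *Store) Do(key string, effect func() (string, error)) (string, bool, error) {
	s.mu.Lock()
	defer s.mu.Unlock() // simplification: one lock serializes all keys
	if out, ok := s.outcomes[key]; ok {
		return out, false, nil
	}
	out, err := effect()
	if err != nil {
		return "", false, err // nothing recorded, so a retry may run the effect again
	}
	s.outcomes[key] = out
	return out, true, nil
}

func main() {
	store := NewStore()
	postings := 0
	post := func() (string, error) {
		postings++ // the side effect we must not repeat, e.g. a ledger posting
		return "posted", nil
	}
	for i := 0; i < 3; i++ { // simulate one message delivered three times
		out, executed, _ := store.Do("txn-42", post)
		fmt.Println(out, executed)
	}
	fmt.Println("postings:", postings) // postings: 1
}
```

A production version would typically claim the key first (an "in progress" record) and then finalize it, so that a crash mid-effect is detectable rather than silently retried.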

B. Operational Excellence Lessons

These operational lessons are about reducing time-to-detect and time-to-resolve by making failures visible, actionable, and easy to investigate for both your team and your partners.

- Focus on internal observability: We found that a culture of observability beats constant debugging. Our focus shifted from responding to errors to preempting them, which required operational discipline across the board. Key to this was defining Service Level Objectives (SLOs) and using them to identify our hotspots early, with strict alerts and thresholds on metrics such as SQS queue depth and DynamoDB throttling.

- Focus on external observability: You should make reconciliation failures actionable for clients by returning clear, self-serve error messages and guidance. In our implementation, we standardized error codes and surfaced enough context for partners to resolve common issues without a support escalation.

- Invest in tooling: Build investigation tooling early so you can trace mismatches to the source file, the processing run, and the resulting writes without digging through logs. That way, triage stays fast and consistent, even when volume spikes or auditors ask questions.

- Focus on speed to get feedback: You should optimize for fast feedback loops. Serverless enabled fast launches and low DevOps costs. After our first month of running this event-driven reconciliation system, our total cloud compute bill was less than our team’s lunch bills. This architecture enabled our small team to iterate quickly and focus on the complex financial logic rather than on infrastructure.
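Here is a hypothetical illustration of standardized, self-serve errors for the external observability lesson. A pipeline might attach a stable code, the offending file location, and a remediation hint to every rejected record, then serialize it for partners. The error codes, field names, and messages below were invented for this sketch.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// ReconError is a partner-facing error with a stable, documented code.
type ReconError struct {
	Code        string `json:"code"`
	Message     string `json:"message"`
	File        string `json:"file"`
	Line        int    `json:"line"`
	Remediation string `json:"remediation,omitempty"`
}

func (e *ReconError) Error() string {
	return fmt.Sprintf("%s at %s:%d: %s", e.Code, e.File, e.Line, e.Message)
}

// remediation is a hypothetical catalog of codes and self-serve guidance.
var remediation = map[string]string{
	"RECON_DUPLICATE_TXN":    "Remove the duplicate row and resubmit the file.",
	"RECON_BALANCE_MISMATCH": "Check that the balance file includes every transaction for the day.",
	"RECON_SCHEMA_VERSION":   "Resubmit the file using a supported schema version.",
}

// NewReconError builds an error and attaches the catalog guidance for its code.
func NewReconError(code, msg, file string, line int) *ReconError {
	return &ReconError{Code: code, Message: msg, File: file, Line: line, Remediation: remediation[code]}
}

func main() {
	e := NewReconError("RECON_DUPLICATE_TXN", "transaction t1 appears twice", "transactions/2025-01-01.csv", 17)
	body, err := json.Marshal(e) // what a partner-facing API or report would return
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
	fmt.Println(e) // uses the Error method
}
```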

C. Interface Design: File vs. API

A common mistake was defaulting to file-based interfaces when an API was a better fit for the business process.

- Use the right interface for the problem: There is no universal answer; you need to think through which abstraction fits the problem. We used files only for true batch operations where an end-of-day snapshot is the requirement (e.g., Network Settlement), and APIs for everything else, especially where real-time feedback or small, discrete data transfers are needed. We had to rewrite several internal interfaces from file-based uploads to HTTP APIs because the operational overhead of tracking, processing, and debugging many small files proved far more complex than a simple API call.

- Treat file schemas like versioned contracts: You should treat file schemas as versioned contracts, because small changes can break parsing and silently skew reconciliation results. In our pipeline, we introduced explicit schema validation and version-aware parsing to reduce incidents caused by upstream format changes.
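Version-aware parsing can be as simple as a registry of field layouts keyed by schema version. Unknown versions are rejected loudly instead of being parsed on a best-effort basis. The Go sketch below assumes a hypothetical fixed-width format whose first three characters carry the schema version. The versions, field names, and column offsets are invented for illustration.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// field is a fixed-width column spanning byte offsets [Start, End).
type field struct {
	Name       string
	Start, End int
}

// layouts maps a schema version to its column layout (hypothetical).
var layouts = map[string][]field{
	"V01": {{"txn_id", 3, 13}, {"amount", 13, 23}},
	"V02": {{"txn_id", 3, 15}, {"amount", 15, 25}, {"currency", 25, 28}},
}

// ParseLine validates the version prefix and extracts trimmed fields.
func ParseLine(line string) (map[string]string, error) {
	if len(line) < 3 {
		return nil, fmt.Errorf("line too short for version header")
	}
	version := line[:3]
	layout, ok := layouts[version]
	if !ok {
		return nil, fmt.Errorf("unsupported schema version %q", version)
	}
	rec := make(map[string]string, len(layout))
	for _, f := range layout {
		if len(line) < f.End {
			return nil, fmt.Errorf("version %s: line length %d, need %d for %s", version, len(line), f.End, f.Name)
		}
		rec[f.Name] = strings.TrimSpace(line[f.Start:f.End])
	}
	// A semantic check beyond layout: amount must be an integer in minor units.
	if _, err := strconv.ParseInt(rec["amount"], 10, 64); err != nil {
		return nil, fmt.Errorf("version %s: invalid amount %q", version, rec["amount"])
	}
	return rec, nil
}

func main() {
	rec, err := ParseLine("V02" + "TXN000000002" + "      2500" + "USD")
	fmt.Println(rec, err) // map[amount:2500 currency:USD txn_id:TXN000000002] <nil>
	_, err = ParseLine("V09" + "TXN0000001" + "0000001999")
	fmt.Println(err) // unsupported schema version "V09"
}
```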

Conclusion

Building a reconciliation system that scales on AWS is less about selecting serverless components and more about designing for failure, replay, and investigations. In our implementation, AWS serverless services provided a strong foundation for orchestration and durable state. The production outcomes ultimately depended on the details, especially idempotency, partition key design, and the operational signals that made mismatches quick to detect and resolve.