Pinterest engineering teams have deployed an internal Model Context Protocol (MCP) ecosystem to power AI agents that automate complex engineering tasks and integrate diverse internal tools and data sources at scale. The architecture, now running production MCP servers, a central registry, and agent integrations across developer tools, replaces ad hoc integrations with a standardized, secure, and scalable AI tool-calling substrate. MCP enables language models to call tools and access structured data using a unified client-server mechanism, allowing agents to perform tasks such as analyzing logs or inspecting bug tickets with direct access to live internal systems.

Pinterest engineers emphasize:

By explicitly choosing an architecture of internal cloud-hosted, multiple domain-specific MCP servers connected via a central registry, we have built a flexible and secure substrate for AI agents integrated directly into employees' daily workflows.

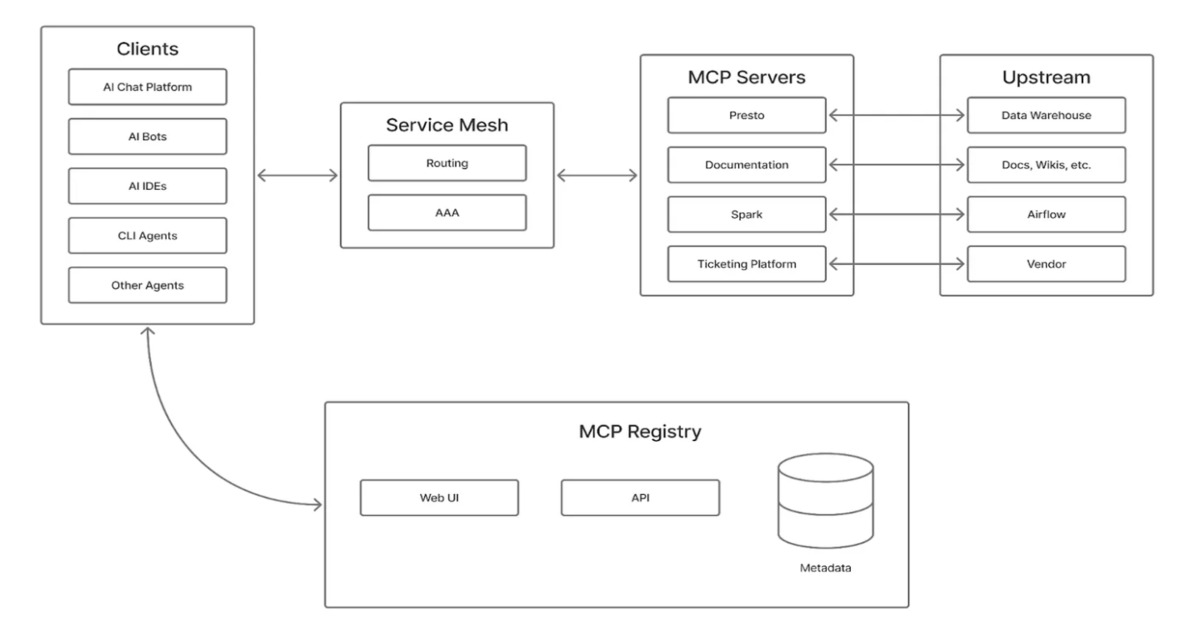

At the core of the implementation is a fleet of cloud-hosted MCP servers, each dedicated to a specific domain such as Presto, Spark, or Airflow, rather than a single monolithic service. This domain-specific approach limits context bloat, isolates tools, and allows fine-grained access control. A unified deployment pipeline manages infrastructure, scaling, and service lifecycles so engineers can focus on defining tool interfaces.

A central MCP registry acts as the source of truth for approved servers and their connectivity metadata. Both a human-friendly UI and an API allow discovery, validation, and integration into internal AI clients and IDEs. Clients consult the registry to validate permissions and server status before calling tools, enforcing governance consistently.

Pinterest measures ecosystem impact through a single north-star metric: time saved. Tool owners estimate minutes saved per invocation based on lightweight user feedback and comparison to manual workflows. As of January 2025, MCP servers reached 66,000 invocations per month across 844 active users, saving roughly 7,000 hours per month.
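To make the time-saved metric concrete, the following minimal sketch shows one way per-invocation estimates could roll up into a monthly figure. The `ToolUsage` type, the tool names, and the per-tool numbers are hypothetical and not taken from Pinterest's post; only the 66,000 invocations and 7,000 hours come from the reported figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolUsage:
    tool: str                      # hypothetical tool name
    invocations: int               # calls recorded in the month
    minutes_saved_per_call: float  # tool owner's estimate


def hours_saved(usages):
    """Sum invocations * estimated minutes saved, converted to hours."""
    total_minutes = sum(u.invocations * u.minutes_saved_per_call for u in usages)
    return total_minutes / 60


# Illustrative numbers only, not real Pinterest data.
usages = [
    ToolUsage("query_logs", invocations=1200, minutes_saved_per_call=5.0),
    ToolUsage("summarize_ticket", invocations=300, minutes_saved_per_call=10.0),
]
monthly_hours = hours_saved(usages)

# Average implied by the reported totals: 7,000 hours over 66,000 invocations.
implied_minutes_per_call = 7000 * 60 / 66000

print(f"{monthly_hours:.1f} hours saved in the illustrative month")
print(f"{implied_minutes_per_call:.1f} minutes saved per invocation (implied average)")
```

Under these assumptions, the reported totals work out to an average of a little over six minutes saved per invocation.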


Architectural diagram of Pinterest’s MCP ecosystem (Source: Pinterest Blog Post)

AI agent workflows are integrated across chat platforms and IDEs, and agents discover MCP servers via the registry. Agents can autonomously investigate incidents, generate contextual summaries, or propose changes. Because MCP servers can perform automated actions, Pinterest mandates human-in-the-loop approval for sensitive operations and uses elicitation to confirm potentially dangerous actions: agents propose changes, and humans approve or reject them before anything is applied.
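The flow described above, where a client checks the registry before calling a tool and a human confirms sensitive operations, might look roughly like the following sketch. It uses only the Python standard library rather than an MCP SDK. `RegistryEntry`, `call_tool`, the `confirm` callback (a stand-in for an elicitation prompt), and the server and tool names are all assumptions for illustration, not Pinterest's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegistryEntry:
    name: str
    approved: bool              # passed review and is listed in the registry
    healthy: bool               # current server status
    sensitive_tools: frozenset  # tools that need human approval


class ToolCallRejected(Exception):
    """Raised when governance checks or the human reviewer block a call."""


def call_tool(registry, server, tool, args, run, confirm):
    """Check the registry, ask a human if needed, then run the tool."""
    entry = registry.get(server)
    if entry is None or not entry.approved:
        raise ToolCallRejected(f"{server} is not an approved server")
    if not entry.healthy:
        raise ToolCallRejected(f"{server} is currently unavailable")
    # Sensitive tools are only proposed; a human must approve them first.
    if tool in entry.sensitive_tools and not confirm(f"Run {tool} with {args}?"):
        raise ToolCallRejected(f"{tool} was declined by the reviewer")
    return run(tool, args)


# Hypothetical registry contents and tool runner.
registry = {
    "airflow": RegistryEntry("airflow", True, True, frozenset({"restart_dag"})),
}


def run(tool, args):
    return f"{tool} executed with {args}"


# Read-only call: no approval prompt is needed.
print(call_tool(registry, "airflow", "list_dags", {}, run, confirm=lambda q: False))

# Sensitive call: rejected unless the reviewer says yes.
try:
    call_tool(registry, "airflow", "restart_dag", {"dag": "etl"}, run, confirm=lambda q: False)
except ToolCallRejected as exc:
    print(f"blocked: {exc}")
```

Keeping the approval step in the calling path, rather than inside each tool, means a sensitive action cannot run without passing through the same check.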

End-to-end flow of developing an MCP server (Source: Pinterest Blog Post)

Security and governance are enforced through a two-layer authorization model. End-user JWTs control human-in-the-loop access, while service-only flows rely on mesh identities. Servers implement fine-grained authorization decorators and business-group gating, restricting high-privilege operations to approved teams. OAuth flows piggyback on existing internal authentication, avoiding additional prompts while providing full auditability. Each MCP server must comply with the MCP Security Standard and pass review for Security, Legal/Privacy, and GenAI requirements before production use.
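As an illustration of authorization decorators with business-group gating, here is a minimal standard-library sketch. The `Caller` type, the `require_groups` decorator, and the group and tool names are hypothetical. The sketch also assumes the caller's identity has already been verified upstream, whether from an end-user JWT or a mesh identity, so token validation itself is out of scope.

```python
import functools
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    subject: str       # user or service name
    kind: str          # "user" (end-user JWT) or "service" (mesh identity)
    groups: frozenset  # business groups attached to the verified identity


class PermissionDenied(Exception):
    """Raised when the caller is not in any allowed business group."""


def require_groups(*allowed):
    """Allow the wrapped tool only for callers in at least one allowed group."""
    allowed_set = frozenset(allowed)

    def decorator(func):
        @functools.wraps(func)
        def wrapper(caller, *args, **kwargs):
            if allowed_set.isdisjoint(caller.groups):
                raise PermissionDenied(
                    f"{caller.subject} may not call {func.__name__}"
                )
            return func(caller, *args, **kwargs)

        return wrapper

    return decorator


@require_groups("data-platform")
def cancel_query(caller, query_id):
    # Hypothetical high-privilege tool; a real one would call the backend.
    return f"query {query_id} cancelled by {caller.subject}"


admin = Caller("alice", "user", frozenset({"data-platform"}))
guest = Caller("bob", "user", frozenset({"marketing"}))

print(cancel_query(admin, "q-123"))
try:
    cancel_query(guest, "q-123")
except PermissionDenied as exc:
    print(f"denied: {exc}")
```

Declaring the allowed groups next to each tool keeps the access rule visible where the tool is defined, which also makes it easier to audit during review.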

The architectural approach aligns with broader trends in AI-assisted automation, emphasizing secure, reliable access to live systems and structured data rather than simple NLP interfaces. The transition from concept to production-ready ecosystem demonstrates a scalable model for enterprise AI automation. Pinterest continues to expand its fleet of MCP servers, deepen integrations across engineering surfaces, and refine governance models, further boosting developer productivity while maintaining strict security and compliance standards.
