A complex criminal trial can span months. It generates thousands of pages of transcripts alongside contradictory testimonies and evidentiary motions. Currently, defense attorneys and judicial clerks spend hundreds of hours manually cross-referencing these files just to build a reliable case chronology. Throwing raw compute at the problem is a tempting fix. Modern models possess large context windows capable of swallowing much of this legal corpus. Yet this approach fails on its own. Simply dumping text into a stateless prompt cannot capture the chronological mutation of facts. When a witness recants or a presiding judge suppresses a document, standard models lose the thread. They read. They do not reason over time.

This failure creates an immense opportunity. I am not proposing a digital judge. I am conceptualizing a stateful agentic research engine designed specifically for litigators. A system like this would allow legal professionals to query a living database. They could ask the proposed agent to generate a factual brief tracing a specific alibi. The architecture would output a timeline designed to reflect the most up-to-date rulings. I am going to outline how to construct the persistent memory graph required for this tool. I want to turn raw text into a trackable state machine.

Beyond the Haystack

Frontier models boast impressive retrieval capabilities. If you ask a high-parameter model to find a specific utterance from page 4,000 of a court transcript, it will usually find it. Researchers routinely test this using “Needle In A Haystack” (NIAH) evaluations to check whether the model can retrieve isolated facts from massive inputs. The system excels at pinpointing these isolated data points.

I spent considerable time engineering persistent memory systems for voice interfaces. My focus was on building stateful agentic capabilities to help people follow cooking recipes, which require long-running context. Moving to the judicial domain raises this state-management requirement dramatically. A trial operates as a living state machine. A fact introduced on Monday might be invalidated by a presiding judge on Thursday. To capitalize on large context windows, we must pair them with structural hierarchy. We want the model to understand chronological precedence. Finding the needle is impressive. Tracking whether that needle remains legally admissible three weeks later is the actual engineering challenge. We have the tools to solve this.

Building the Persistent State Graph

We solve this by decoupling the text processing from the factual tracking. I propose an architecture where the language model acts as a reasoning engine rather than a mere storage facility.

Instead of processing the entire transcript at once to generate a brief, the agent processes the text sequentially to build a persistent knowledge graph. Frameworks like LangGraph use stateful, graph-based architectures to manage complex AI workflows where nodes communicate by reading and writing to a shared state. When the agent reads witness testimony, it extracts discrete factual nodes and commits them to a property graph.

Think of this graph like a detective’s physical corkboard.

When the agent reads a deposition, it pins a new fact to the board. This node represents a date or a specific event. It then draws connecting strings. We use explicit schema definitions to enforce relationship mapping. “Witness A” contradicts “Witness B”. “Document C” corroborates “Alibi D”. When a later ruling suppresses that testimony, the agent executes a state update. It does not erase the node. It changes the status property to “invalidated.”
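The corkboard can be sketched as a small in-memory property graph. This is a minimal illustration under stated assumptions, not a production design: the names `FactNode`, `TrialGraph`, `ALLOWED_RELATIONS`, and every field on them are hypothetical, and a real system would likely sit on a graph database rather than Python dictionaries. The point it shows is that invalidation is a status change with an audit trail, never a deletion.

```python
from dataclasses import dataclass, field

# Hypothetical relationship schema; only these edge types may be drawn.
ALLOWED_RELATIONS = {"CONTRADICTS", "CORROBORATES"}


@dataclass
class FactNode:
    """One fact pinned to the board. Field names are illustrative assumptions."""
    node_id: str
    text: str
    source_line: int                  # transcript line the fact came from
    status: str = "active"            # "active" or "invalidated"
    history: list = field(default_factory=list)  # (old_status, reason) pairs


@dataclass
class TrialGraph:
    nodes: dict = field(default_factory=dict)  # node_id -> FactNode
    edges: list = field(default_factory=list)  # (source_id, relation, target_id)

    def add_fact(self, node):
        self.nodes[node.node_id] = node

    def link(self, source_id, relation, target_id):
        # Enforce the schema: reject unknown relations and dangling endpoints.
        if relation not in ALLOWED_RELATIONS:
            raise ValueError(f"unknown relation: {relation}")
        if source_id not in self.nodes or target_id not in self.nodes:
            raise KeyError("both endpoints must already be on the board")
        self.edges.append((source_id, relation, target_id))

    def invalidate(self, node_id, reason):
        # State update: the node stays on the board, only its status changes.
        node = self.nodes[node_id]
        node.history.append((node.status, reason))
        node.status = "invalidated"

    def active_facts(self):
        return [n for n in self.nodes.values() if n.status == "active"]


graph = TrialGraph()
graph.add_fact(FactNode("alibi_d", "Defendant was at work until 9 pm", source_line=1204))
graph.add_fact(FactNode("doc_c", "Timesheet shows a 9 pm clock-out", source_line=2310))
graph.link("doc_c", "CORROBORATES", "alibi_d")
graph.invalidate("doc_c", reason="Suppressed by ruling")
```

After the ruling, `doc_c` is still present in `graph.nodes` with its history intact, but `graph.active_facts()` no longer returns it.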

The agent maintains a ground-truth map of the trial. When tasked with writing a legal summary for the litigator, the agent queries this graph instead of reading the raw transcript again. It retrieves only the valid, active nodes required to answer the prompt. This forces the model to synthesize structurally verified facts.

To do this, we execute a Cypher query. Cypher is Neo4j’s declarative query language, which is optimized for graphs and uses an intuitive ASCII-art style syntax to visually match data patterns and relationships. By running a Cypher query, we traverse the graph to identify nodes with conflicting timestamp properties. The computational load shifts from probabilistic text generation to deterministic graph traversal. The language model simply translates these explicit graph relationships into natural language. It grounds the agent’s output in verified chronology rather than in whatever the model happens to recall from the transcript.
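A query of this kind might look like the Cypher string in the sketch below. The `Fact` label, the `CONTRADICTS` relationship type, and the `id`, `status`, and `timestamp` properties are assumed schema names chosen for illustration; nothing in Neo4j defines them. Because the query needs a live database to run, the sketch pairs it with a plain-Python traversal over the same hypothetical shape so the logic can be checked in isolation.

```python
# Hypothetical Cypher. Label, relationship, and property names are assumptions.
CONFLICT_QUERY = """
MATCH (a:Fact)-[:CONTRADICTS]->(b:Fact)
WHERE a.status = 'active' AND b.status = 'active'
RETURN a.id AS source, b.id AS target
ORDER BY a.timestamp
"""


def find_active_conflicts(nodes, edges):
    """Plain-Python equivalent of CONFLICT_QUERY over in-memory data.

    nodes: dict of node_id -> {"status": str, "timestamp": ISO date string}
    edges: list of (source_id, relation, target_id) tuples
    """
    pairs = []
    for source, relation, target in edges:
        if relation != "CONTRADICTS":
            continue
        a, b = nodes[source], nodes[target]
        # Only contradictions between two still-valid facts need attention.
        if a["status"] == "active" and b["status"] == "active":
            pairs.append((a["timestamp"], source, target))
    # ISO date strings sort chronologically, mirroring ORDER BY a.timestamp.
    return [(source, target) for _, source, target in sorted(pairs)]


nodes = {
    "alibi": {"status": "active", "timestamp": "2024-03-04"},
    "suppressed_memo": {"status": "invalidated", "timestamp": "2024-03-05"},
    "phone_records": {"status": "active", "timestamp": "2024-03-07"},
}
edges = [
    ("phone_records", "CONTRADICTS", "alibi"),
    ("suppressed_memo", "CONTRADICTS", "alibi"),
]
conflicts = find_active_conflicts(nodes, edges)
```

Here `conflicts` contains only the pair involving `phone_records`, because the memo has already been invalidated and no longer counts as a live contradiction.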

The Automated Evaluator Paradigm

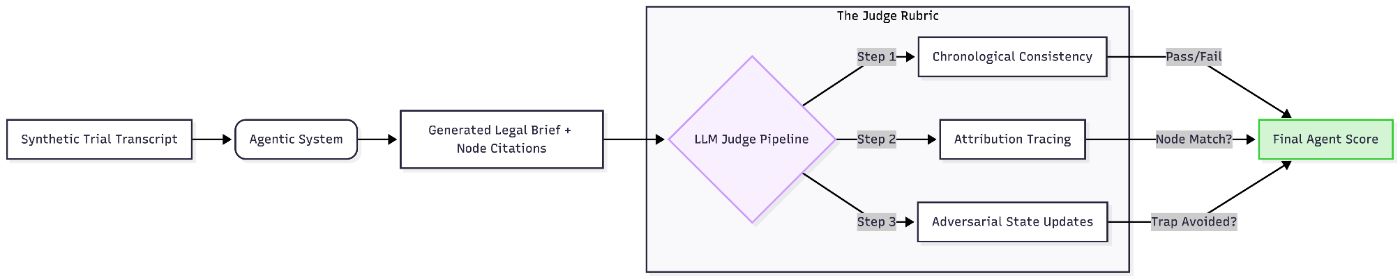

Designing this architecture is only half the equation. Proving the agent actually tracks the chronological state of the trial requires rigorous testing. In software engineering, automated evaluation pipelines are often called “LLM-as-a-judge” frameworks. In the legal domain, that terminology implies an AI issuing verdicts. We must avoid that conceptual trap. We are building an Automated Evaluator, which is strictly an internal testing pipeline.

Traditional automated testing relies on exact string matches. Did the software output the exact word “True”? Language generation is too fluid for that methodology. Instead, engineers use a frontier language model to grade the output of the system under test. We provide the Evaluator model with a strict, deterministic rubric.

A widely used example is evaluating text summarization. You feed the Evaluator an original news article and an AI-generated summary of that article. You prompt the Evaluator to score the summary from 1 to 5 based on factual consistency. It checks whether the summary invented any numbers or names not present in the source text. Published studies report that strong LLM evaluators can reach agreement rates above 80% with human graders. It works well for static tasks. It allows teams to automatically check thousands of responses without grading each one by hand.
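The summarization example can be sketched as a rubric prompt plus a strict parser for the Evaluator's reply. Everything here is an assumption made for illustration: the rubric wording, the `SCORE:` reply format, and the `call_model` parameter, which stands in for whatever model client a team actually uses. The stub at the bottom replaces a real model call so the sketch runs offline.

```python
import re

# Hypothetical rubric text; a real rubric would be tuned and versioned.
RUBRIC = (
    "Score the summary from 1 to 5 for factual consistency with the article. "
    "Penalize any number or name that does not appear in the article. "
    "Reply with a single line in the form SCORE: <integer>"
)


def build_prompt(article, summary):
    return f"{RUBRIC}\n\nARTICLE:\n{article}\n\nSUMMARY:\n{summary}"


def parse_score(reply):
    """Extract the 1-5 score, refusing anything outside the rubric's range."""
    match = re.search(r"SCORE:\s*([1-5])\b", reply)
    if match is None:
        raise ValueError(f"no valid score in evaluator reply: {reply!r}")
    return int(match.group(1))


def grade(article, summary, call_model):
    """call_model is any function mapping a prompt string to a reply string."""
    return parse_score(call_model(build_prompt(article, summary)))


def stub_model(prompt):
    # Stand-in for a real Evaluator model; always returns the same grade.
    return "SCORE: 4"


score = grade(
    "The council approved a budget of 12 million dollars on Tuesday.",
    "The council approved a 12 million dollar budget.",
    stub_model,
)
```

Parsing strictly and raising on a malformed reply keeps a confused Evaluator from silently contributing a default score to the aggregate.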

A Judicial Evaluation Pipeline

In my work building auto-evaluations for rigorous algorithmic research, I rely heavily on these methodologies. A judicial agent requires a highly complex rubric. I propose a tripartite Automated Evaluator pipeline for legal AI.

First, Chronological Consistency Scoring. We feed the Evaluator a synthetic, complex trial timeline alongside the Agent’s generated legal brief. The Evaluator specifically checks whether the Agent respected the temporal flow of facts. If the Agent cites a piece of evidence that was formally suppressed in a later hearing, the Evaluator issues a hard failure.
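Part of this check is mechanical and can run without a model at all. The sketch below assumes the citations in the brief have already been extracted as evidence ids, and that the synthetic timeline is a list of dated events; the event types `"introduced"` and `"suppressed"`, the field names, and the function name are all hypothetical. It covers only the hard-failure rule, not the broader judgment the Evaluator model would make about temporal flow.

```python
from datetime import date


def check_chronological_consistency(timeline, cited_ids, as_of):
    """Return (passed, violations) for a brief written on the date `as_of`.

    timeline:  list of {"date": date, "type": str, "evidence_id": str}
    cited_ids: evidence ids cited by the Agent's brief
    """
    suppressed = set()
    for event in sorted(timeline, key=lambda e: e["date"]):
        if event["date"] > as_of:
            break  # rulings after the brief was written cannot be held against it
        if event["type"] == "suppressed":
            suppressed.add(event["evidence_id"])
    violations = sorted(eid for eid in cited_ids if eid in suppressed)
    # Any citation of suppressed evidence is a hard failure.
    return (not violations, violations)


timeline = [
    {"date": date(2024, 3, 4), "type": "introduced", "evidence_id": "exhibit_12"},
    {"date": date(2024, 3, 4), "type": "introduced", "evidence_id": "exhibit_15"},
    {"date": date(2024, 3, 21), "type": "suppressed", "evidence_id": "exhibit_12"},
]
cited = {"exhibit_12", "exhibit_15"}

passed, violations = check_chronological_consistency(
    timeline, cited, as_of=date(2024, 3, 30)
)
```

The same brief evaluated with `as_of=date(2024, 3, 10)` would pass, since the suppression ruling had not yet been issued on that date.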

Second, Attribution Tracing. The Agent must map every claim back to a specific node in the state graph. This node must point to an exact transcript line. The Evaluator verifies these pointers. It checks the cited node and confirms the semantic meaning matches the Agent’s output. It scores the system on its chain of custody.
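The pointer verification can be sketched as a walk from claim to node to transcript line. The data shapes and the `supports` parameter are assumptions for illustration: in the pipeline described above, the semantic comparison would be made by the Evaluator model, and the `keyword_overlap` function here is only a crude stand-in so the example runs without one.

```python
def trace_attributions(claims, graph_nodes, transcript, supports):
    """Check each (claim_text, node_id) pair and return a list of failures.

    graph_nodes: dict of node_id -> {"line": int}
    transcript:  dict of line number -> transcript text
    supports:    function (claim_text, line_text) -> bool
    """
    failures = []
    for claim_text, node_id in claims:
        node = graph_nodes.get(node_id)
        if node is None:
            failures.append((claim_text, "unknown node"))
            continue
        line_text = transcript.get(node["line"])
        if line_text is None:
            failures.append((claim_text, "missing transcript line"))
            continue
        if not supports(claim_text, line_text):
            failures.append((claim_text, "claim not supported by cited line"))
    return failures


def keyword_overlap(claim_text, line_text):
    # Crude placeholder for the Evaluator's semantic check: require at least
    # two shared words longer than three characters.
    claim_words = {w for w in claim_text.lower().split() if len(w) > 3}
    line_words = set(line_text.lower().split())
    return len(claim_words & line_words) >= 2


transcript = {1204: "I left the warehouse at nine that night"}
graph_nodes = {"n1": {"line": 1204}, "n2": {"line": 9999}}
claims = [
    ("The witness left the warehouse at nine", "n1"),
    ("The defendant owned the car", "n2"),
    ("The door was locked", "n3"),
]

failures = trace_attributions(claims, graph_nodes, transcript, keyword_overlap)
```

Returning the reason alongside each failed claim lets the score distinguish a broken pointer from a pointer that resolves but does not support the claim.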

Third, Adversarial State Updates. We intentionally inject logical traps into the test data. A witness provides a solid alibi. Cell phone data later completely invalidates it. The Evaluator determines whether the Agent successfully updated its internal graph to reflect the invalidated alibi. The Evaluator scores the reasoning trace. Did the Agent recognize the contradiction and change the node status? We measure State Retention Accuracy. If the Agent updates the node correctly, the accuracy score remains high.
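State Retention Accuracy is not given a formula above, so the sketch below assumes one plausible definition: the fraction of nodes in the test scenario whose final status in the Agent's graph matches the status the test author expected. The function name and the dictionary shapes are hypothetical.

```python
def state_retention_accuracy(expected, actual):
    """Fraction of expected node statuses that the Agent's graph reproduced.

    expected: dict of node_id -> status the test author intended
    actual:   dict of node_id -> status found in the Agent's graph
    """
    if not expected:
        raise ValueError("expected statuses must not be empty")
    correct = sum(
        1 for node_id, status in expected.items()
        if actual.get(node_id) == status  # a missing node counts as wrong
    )
    return correct / len(expected)


# The trap: phone records should have flipped the alibi to "invalidated".
expected = {
    "alibi": "invalidated",
    "phone_records": "active",
    "timesheet": "active",
    "receipt": "active",
}
agent_missed_trap = dict(expected, alibi="active")

accuracy = state_retention_accuracy(expected, agent_missed_trap)
```

In this scenario the Agent gets three of four statuses right, so `accuracy` is 0.75; a test suite with many injected traps would weight each trap node the same way.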

Conclusion

Litigators and clerks cannot rely on tools that simply read fast. They require computational engines that can reason chronologically. By adopting externalized state-management architectures and enforcing strict automated evaluations, we can create highly capable research systems. While the massive context window holds the raw record, we are building the stateful architecture to actually organize it.
