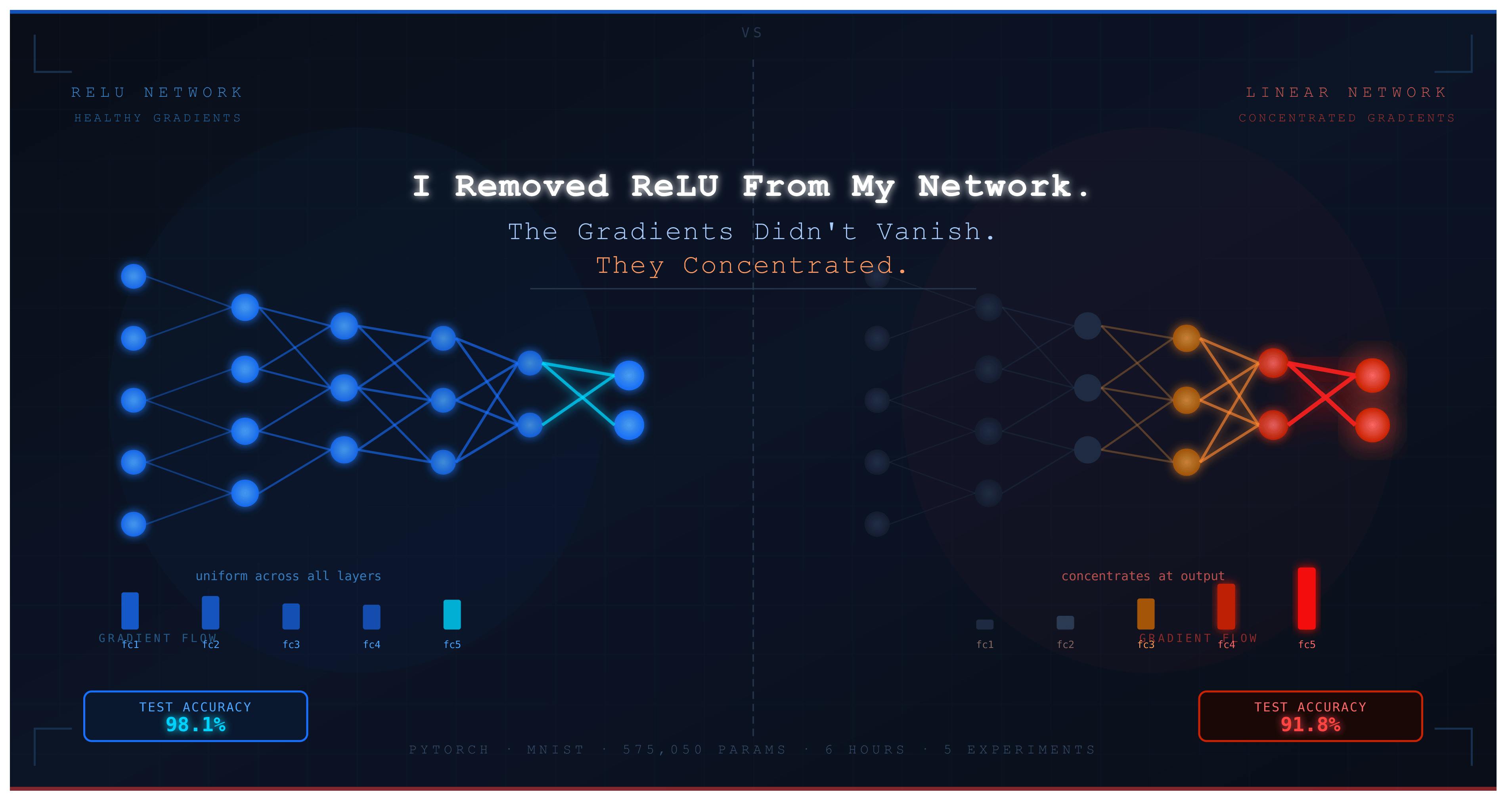

Removed ReLU from a 5-layer PyTorch MLP. The model trained without errors, loss decreased every epoch, and it still hit 91.8% on MNIST, on par with single-layer logistic regression. With no non-linearity between them, the stacked layers compose into a single linear map, so four hidden layers and 575K parameters added zero expressive power.
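The claim that the extra layers add no expressive power can be checked directly. The sketch below is a minimal illustration, not the code from the experiment: it stacks five `nn.Linear` layers with no activation, folds them into one weight matrix and bias, and compares the outputs. The layer widths are an assumption (the post does not state them), chosen because they give roughly 575K parameters.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Assumed layer widths (not stated in the post).
sizes = [784, 512, 256, 128, 64, 10]
layers = [nn.Linear(n_in, n_out) for n_in, n_out in zip(sizes[:-1], sizes[1:])]
deep_linear = nn.Sequential(*layers)  # five Linear layers, no activation

with torch.no_grad():
    # Fold the stack into one affine map: y = W_eff @ x + b_eff.
    W_eff = torch.eye(sizes[0])
    b_eff = torch.zeros(sizes[0])
    for layer in layers:
        b_eff = layer.weight @ b_eff + layer.bias
        W_eff = layer.weight @ W_eff

    x = torch.randn(32, sizes[0])  # stand-in for a batch of flattened images
    deep_out = deep_linear(x)
    collapsed_out = x @ W_eff.T + b_eff
    max_diff = (deep_out - collapsed_out).abs().max().item()

n_deep = sum(p.numel() for p in deep_linear.parameters())
n_collapsed = W_eff.numel() + b_eff.numel()
print(f"deep params: {n_deep}, collapsed params: {n_collapsed}, "
      f"max |difference|: {max_diff:.2e}")
```

The two outputs agree up to floating-point rounding, even though the collapsed map has only a 10×784 weight matrix and a 10-element bias.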

The gradient data was the unexpected part. Gradients in the early layers didn’t vanish; those layers just received proportionally less signal. By epoch 10, fc5 had a gradient norm of 1.37 while fc1 sat at 0.37. The optimizer was concentrating updates at the output end because that’s where the chain of matrix products the gradient has to pass through is shortest. The network quietly specialized its final layer to do all the classification work and treated the rest as a passive linear projection.
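Per-layer gradient norms like the ones quoted above can be read off after a backward pass. The following is a hedged sketch rather than the experiment's actual logging code: the `LinearMLP` class, the layer widths, and the `layer_grad_norms` helper are hypothetical, the batch is random data instead of MNIST, and it assumes the reported norm is the L2 norm of each layer's weight gradient.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class LinearMLP(nn.Module):
    """MLP with layers fc1..fcN and no activation (hypothetical layout)."""

    def __init__(self, sizes=(784, 512, 256, 128, 64, 10)):
        super().__init__()
        self.depth = len(sizes) - 1
        for i, (n_in, n_out) in enumerate(zip(sizes[:-1], sizes[1:]), start=1):
            setattr(self, f"fc{i}", nn.Linear(n_in, n_out))

    def forward(self, x):
        x = x.flatten(1)
        for i in range(1, self.depth + 1):
            x = getattr(self, f"fc{i}")(x)
        return x


def layer_grad_norms(model):
    """Return {layer name: L2 norm of its weight gradient}."""
    return {
        name: module.weight.grad.norm().item()
        for name, module in model.named_children()
        if isinstance(module, nn.Linear) and module.weight.grad is not None
    }


torch.manual_seed(0)
model = LinearMLP()
x = torch.randn(64, 1, 28, 28)       # random stand-in for an MNIST batch
y = torch.randint(0, 10, (64,))      # random labels

loss = F.cross_entropy(model(x), y)
loss.backward()

norms = layer_grad_norms(model)
for name, value in norms.items():
    print(f"{name}: {value:.4f}")
```

In a real run this helper would be called after `loss.backward()` on each batch and averaged per epoch; the values on random data at initialization are not comparable to the epoch-10 numbers in the post.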

Depth made it worse, not neutral. A 10-layer linear network scored 90.93% vs 92.00% for a 1-layer one — same expressive ceiling, harder optimization landscape.

Every non-linear activation tested (Sigmoid, Tanh, ReLU, Leaky ReLU, GELU) landed between 97.4% and 98.2%. The gap between any activation and none was about 6 percentage points. The choice of which activation barely mattered. The choice of whether to use one was everything.
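A comparison like this only needs the activation to be a swappable argument, so that every configuration shares the same layers and parameter count. The sketch below shows one way to set that up; `make_mlp`, the `ACTIVATIONS` table, and the layer widths are illustrative assumptions, not the structure of the linked scripts.

```python
import torch
import torch.nn as nn

# Activation name -> module class; "none" keeps the network purely linear.
ACTIVATIONS = {
    "none": nn.Identity,
    "sigmoid": nn.Sigmoid,
    "tanh": nn.Tanh,
    "relu": nn.ReLU,
    "leaky_relu": nn.LeakyReLU,
    "gelu": nn.GELU,
}


def make_mlp(activation="relu", sizes=(784, 512, 256, 128, 64, 10)):
    """Build an MLP whose hidden layers all use the named activation."""
    act_cls = ACTIVATIONS[activation]
    modules = [nn.Flatten()]
    last = len(sizes) - 2  # index of the output layer, which gets no activation
    for i, (n_in, n_out) in enumerate(zip(sizes[:-1], sizes[1:])):
        modules.append(nn.Linear(n_in, n_out))
        if i < last:
            modules.append(act_cls())
    return nn.Sequential(*modules)


# Every variant has the same number of trainable parameters,
# so accuracy differences come from the activation alone.
param_counts = {
    name: sum(p.numel() for p in make_mlp(name).parameters())
    for name in ACTIVATIONS
}
print(param_counts)
```

Each variant would then be trained with the same data, optimizer, and seeds, so the only difference between runs is the activation.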

The real job of ReLU isn’t gradient management. It’s making depth mean something at all.

Full experiment: 5 scripts, 3 seeds per config, 21,523 seconds on CPU. Code: https://github.com/Emmimal/relu-experiment