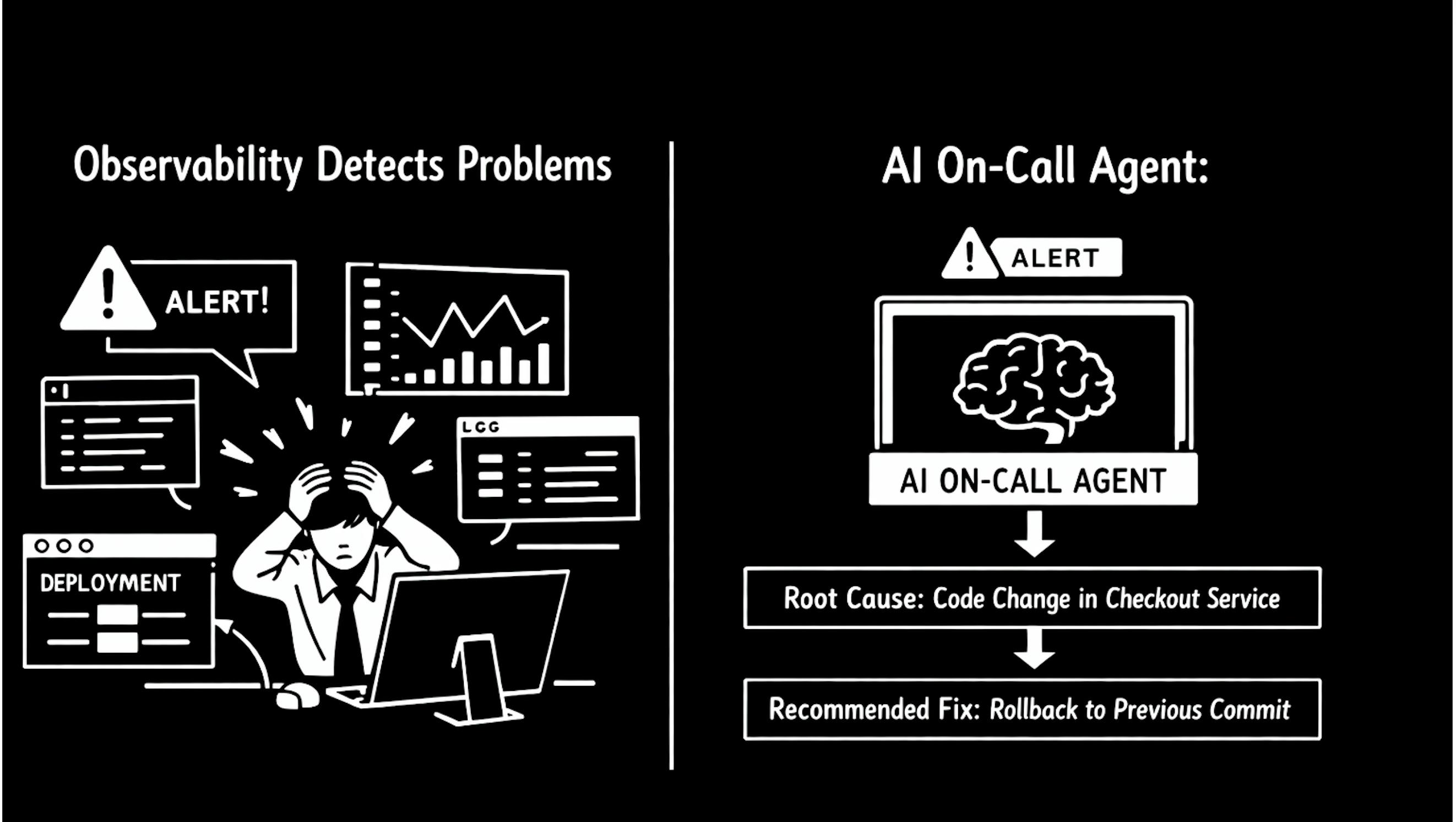

Observability tells us something is broken. Engineers still spend hours figuring out why. An AI on-call agent could close that gap.

Modern observability tools are excellent at telling us something is broken. They are far less capable of explaining why it broke.

After years of owning production services and operating distributed systems, I began noticing a consistent pattern during incidents. Alerts arrive quickly. Dashboards show detailed graphs. Logs are available. Yet the most important question always takes the longest to answer.

What changed?

The real reliability gap today is not detection but causality. Observability platforms detect anomalies extremely well, but they rarely explain the chain of events that caused the failure. As a result, engineers still perform the hardest part of incident response manually, correlating signals across multiple systems under pressure.

Most conversations about reliability focus on better monitoring, better dashboards and more telemetry. But during real incidents, engineers rarely struggle to detect a problem. The difficult part is understanding the root cause.

Modern observability solved visibility. It did not solve reasoning. Engineers still act as the correlation engine that connects repositories, deployments, dashboards, logs and incident systems during outages.

The next evolution of reliability engineering will not come from more dashboards. It will come from systems that can reason about operational change.

A Familiar On-Call Incident

It is Friday afternoon and you are already thinking about the weekend. Your on-call duty is almost over.

Suddenly, a PagerDuty alert fires and an incident is created: response times for several core REST APIs in one of your key microservices have spiked. This service sits at the center of the architecture, and several other microservices depend on it. Within minutes, a calm afternoon becomes a firefighting situation.

You start investigating.

First, you open Grafana to check the latency graphs. Then you jump into Splunk to scan logs. You review the most recent deployment in the CI pipeline and compare the commits included in the latest release. At the same time, you trace the Jira stories linked to those commits, hoping something stands out.

Does it sound familiar?

Most production incidents follow this pattern. Engineers jump between dashboards, logs, deployment history and issue trackers while trying to reconstruct a timeline of events.

Eventually, a commit containing a database code change is identified as the root cause. The fix itself is simple: the change is rolled back and the system stabilizes.

The frustrating part is that nearly two hours were spent just figuring out what actually caused the problem.

I have experienced variations of this situation many times, and most engineers working with distributed systems have too. The tools we rely on are powerful, but they rarely talk to each other in meaningful ways.

The Real Gap in Observability

Most engineering organizations run a similar stack.

- GitHub or GitLab for source control

- Jenkins or other CI pipelines for deployments

- Prometheus, CloudWatch or ELK for telemetry

- Confluence for documentation

- Jira for issue tracking

- PagerDuty or Slack for alerts

Each system works well on its own, but they operate in isolation.

Code changes live in repositories. Deployments live in CI systems. Metrics and logs live in monitoring platforms. Incident knowledge lives in tickets, runbooks or postmortems.

When something breaks, the on-call engineer becomes the integration layer between these systems.

Observability tools detect anomalies well. They tell us latency increased or error rates crossed a threshold. What they rarely do is connect runtime behavior to the change that caused it.

So engineers reconstruct timelines manually.

- Check commits

- Check deployments

- Compare timestamps

- Read past incidents

- Search logs

Detection is automated. Reasoning is still manual.

That is the structural limitation of modern observability.

The Idea of an AI On-Call Agent

What if the system itself could answer the question engineers always ask during incidents:

What changed just before the failure began?

An AI on-call agent could sit above existing development and observability systems and continuously correlate signals across the engineering lifecycle.

- Repositories

- Deployments

- Monitoring signals

- Logs

- Incidents

- Documentation

Instead of only detecting anomalies, the system would attempt to explain them.

When an alert fires, the engineer could immediately see something like this:

- A deployment to the checkout service occurred eight minutes before the latency spike.

- Two upstream services started returning dependency failures.

- A similar incident occurred three months ago, related to input validation logic.

- The most likely root cause is a change introduced in a particular commit.

- Recommended action: roll back that commit.

Instead of starting from zero, the engineer reviews the reasoning and validates the conclusion.

Incident response shifts from searching for context to validating hypotheses.

What Such a System Requires

Building an AI on-call agent is less about clever machine learning and more about engineering infrastructure.

The system must ingest operational signals, maintain historical context, reason across that data and present evidence-backed explanations.

At a high level, the system requires five capabilities.

- Unified signal ingestion

- Change correlation

- Incident memory

- Evidence-grounded reasoning

- Guarded automation

The architecture is conceptually simple. Signals from engineering tools are collected into a unified operational timeline that allows correlation across deployments, telemetry, logs and past incidents.

Production systems already generate the signals needed to explain failures. Commits, pull requests, deployments, alerts, logs and incident updates are all events in the lifecycle of a service.
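A unified timeline can start very simply: normalize each tool's events into one shape and merge them by time. The sketch below assumes events have already been fetched from each tool (for example via its API or webhooks) and normalized; the `Event` fields, source names and IDs are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from heapq import merge


@dataclass(frozen=True)
class Event:
    """One normalized operational event (hypothetical schema)."""

    timestamp: datetime  # should be timezone-aware so sources compare correctly
    source: str          # e.g. "git", "ci", "monitoring", "jira"
    kind: str            # e.g. "commit", "deploy", "alert"
    service: str         # service the event belongs to
    ref: str             # ID of the underlying record in its source tool


def _by_time(event: Event) -> datetime:
    return event.timestamp


def build_timeline(*streams: list[Event]) -> list[Event]:
    """Merge per-source event streams into one chronological timeline.

    Each stream is sorted first, so heapq.merge can interleave them in one pass.
    """
    return list(merge(*(sorted(s, key=_by_time) for s in streams), key=_by_time))


if __name__ == "__main__":
    def at(hour: int, minute: int) -> datetime:
        return datetime(2024, 1, 1, hour, minute, tzinfo=timezone.utc)

    commits = [Event(at(14, 2), "git", "commit", "checkout", "commit-abc123")]
    deploys = [Event(at(14, 30), "ci", "deploy", "checkout", "deploy-881")]
    alerts = [Event(at(14, 38), "monitoring", "alert", "checkout", "alert-p99-latency")]

    for event in build_timeline(alerts, deploys, commits):
        print(event.timestamp.strftime("%H:%M"), event.source, event.kind, event.ref)
```

Sorting each stream inside the function means individual connectors do not have to guarantee ordering; they only have to agree on timezone-aware timestamps, since Python refuses to compare naive and aware datetimes.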

A correlation layer can analyze relationships between these events. It compares deployment timestamps with anomalies, maps service dependencies, analyzes recent code changes and checks whether similar incidents occurred previously.
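A minimal sketch of the correlation step, assuming a hand-maintained dependency map and a fixed 30-minute lookback window (both hypothetical choices, as is the scoring). It keeps only deployments to the anomalous service or to something it depends on, then ranks them by how close they landed to the anomaly's onset.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Deployment:
    service: str
    commit: str
    at: datetime


# Hypothetical dependency map: service -> services it calls.
DEPENDS_ON: dict[str, set[str]] = {
    "orders": {"checkout"},
    "payments": {"checkout"},
    "checkout": {"inventory-db"},
}


def upstream_of(service: str, graph: dict[str, set[str]]) -> set[str]:
    """All services that `service` depends on, directly or transitively."""
    seen: set[str] = set()
    stack = [service]
    while stack:
        for dep in graph.get(stack.pop(), set()):
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


def suspect_deployments(
    deployments: list[Deployment],
    anomalous_service: str,
    anomaly_start: datetime,
    graph: dict[str, set[str]],
    window: timedelta = timedelta(minutes=30),  # assumed lookback window
) -> list[tuple[float, Deployment]]:
    """Rank deployments that landed shortly before the anomaly began.

    Closer-in-time deployments score higher, and a deployment to the anomalous
    service itself gets a bonus. The scoring is an illustrative heuristic.
    """
    relevant = {anomalous_service} | upstream_of(anomalous_service, graph)
    ranked: list[tuple[float, Deployment]] = []
    for d in deployments:
        lag = anomaly_start - d.at
        if d.service not in relevant or not (timedelta(0) <= lag <= window):
            continue
        score = 1.0 - lag / window  # 1.0 means deployed at the moment of onset
        if d.service == anomalous_service:
            score += 0.5
        ranked.append((score, d))
    return sorted(ranked, key=lambda pair: pair[0], reverse=True)
```

With `DEPENDS_ON` as above, an anomaly on `checkout` would rank a `checkout` deployment made eight minutes earlier ahead of an `inventory-db` deployment made twenty minutes earlier, and would ignore deployments to unrelated services entirely.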

Incident memory allows the system to reuse operational knowledge. Runbooks, Jira tickets and postmortems can be indexed so the system surfaces past incidents that resemble the current one.
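Incident memory is essentially a retrieval problem. As a deliberately simple stand-in for a real search or embedding index, this sketch scores past incidents by word overlap (Jaccard similarity) with a description of the current incident; the `PastIncident` records and the `top_k` and `min_score` defaults are assumptions for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class PastIncident:
    key: str      # e.g. a Jira ticket or postmortem ID (hypothetical)
    summary: str


def tokens(text: str) -> set[str]:
    """Lowercased word tokens; a crude stand-in for real text normalization."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union (0.0 if both empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def similar_incidents(
    current: str,
    history: list[PastIncident],
    top_k: int = 3,
    min_score: float = 0.2,
) -> list[tuple[float, PastIncident]]:
    """Return the past incidents whose summaries overlap most with the current one."""
    query = tokens(current)
    scored = [(jaccard(query, tokens(p.summary)), p) for p in history]
    hits = [pair for pair in scored if pair[0] >= min_score]
    return sorted(hits, key=lambda pair: pair[0], reverse=True)[:top_k]
```

Swapping `jaccard` for a proper search or embedding similarity would leave the rest of the flow unchanged, which is the point of keeping retrieval behind one small function.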

The reasoning layer then combines structured signals with historical context to produce explanations.

- Which services are affected?

- Which changes occurred before the anomaly?

- Which past incidents resemble the current one?

- Which recovery actions are safest?

Most importantly, every claim links back to observable evidence, so engineers can trust the reasoning.
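Whatever generates the explanation, the rule that every claim links back to evidence can be enforced mechanically. A minimal sketch, assuming the reasoning layer emits claims that cite (hypothetical) event IDs from the unified timeline; claims with missing or unknown evidence are dropped rather than shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    text: str
    evidence: tuple[str, ...]  # IDs of timeline events that support the claim


def grounded(claims: list[Claim], known_event_ids: set[str]) -> list[Claim]:
    """Keep only claims that cite at least one evidence ID, all of which exist."""
    return [
        c for c in claims
        if c.evidence and all(ref in known_event_ids for ref in c.evidence)
    ]


def render(claims: list[Claim]) -> str:
    """Format claims for the on-call engineer, each followed by its evidence IDs."""
    return "\n".join(
        f"- {c.text} [evidence: {', '.join(c.evidence)}]" for c in claims
    )
```

Filtering before rendering means an unsupported guess never reaches the engineer looking like a finding.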

Automation must be introduced carefully. Early versions should focus on recommendations, such as suggesting rollbacks or highlighting risky changes. Over time, workflows can evolve into approval-based automation, where engineers confirm actions before execution.
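The two stages described above can be encoded as an explicit policy gate. In this sketch (the `Mode` names and the `execute` hook are hypothetical), recommendation mode never executes anything, even if someone has approved it, and approval mode executes only once a named engineer has confirmed the action.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Mode(Enum):
    RECOMMEND_ONLY = "recommend_only"      # early stage: never executes anything
    REQUIRE_APPROVAL = "require_approval"  # later stage: a human must confirm


@dataclass
class ProposedAction:
    description: str               # e.g. "Roll back commit abc123 on checkout"
    execute: Callable[[], None]    # hypothetical hook into deploy tooling


def handle(action: ProposedAction, mode: Mode, approved_by: Optional[str] = None) -> str:
    """Decide what happens to a proposed remediation under the current policy."""
    if mode is Mode.RECOMMEND_ONLY:
        return f"RECOMMENDED: {action.description}"
    if approved_by is None:
        return f"AWAITING APPROVAL: {action.description}"
    action.execute()
    return f"EXECUTED (approved by {approved_by}): {action.description}"
```

Keeping the mode an explicit parameter makes the move from recommendations to approval-based automation a deliberate configuration change rather than a side effect of new code.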

The goal is not removing humans. The goal is removing investigation delays.

From Investigation to Validation

If context arrives instantly, the nature of incident response changes.

Today, engineers spend much of an outage gathering information.

- Which deployment happened

- Which service failed first

- What dependencies changed

- Whether this issue happened before

An AI reasoning layer could assemble this context in seconds.
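Answering "which service failed first" is less obvious than picking the earliest alert, because a caller can cross its alert threshold before the dependency that is actually failing. One possible heuristic, sketched below as an assumption rather than an established algorithm: prefer anomalous services whose own dependencies look healthy, then take the earliest onset among them. The onset data and dependency map are hypothetical inputs.

```python
from datetime import datetime
from typing import Optional


def likely_origin(
    anomaly_onsets: dict[str, datetime],
    depends_on: dict[str, set[str]],
) -> Optional[str]:
    """Guess which anomalous service failed first.

    A service whose own dependencies look healthy is a better origin candidate
    than one that may just be reacting to a failing dependency. Among the
    candidates, the earliest anomaly onset wins.
    """
    if not anomaly_onsets:
        return None
    anomalous = set(anomaly_onsets)
    candidates = [
        s for s in anomalous
        if not (depends_on.get(s, set()) & anomalous)
    ] or list(anomalous)  # e.g. a dependency cycle: fall back to all anomalous services
    return min(candidates, key=lambda s: anomaly_onsets[s])
```

For example, if `orders` alerts at 15:01 and `checkout`, which `orders` depends on, alerts at 15:03, this heuristic still points to `checkout` as the likely origin.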

Engineers would spend less time searching for signals and more time validating conclusions and deciding on recovery actions.

Recovery becomes faster because understanding arrives earlier.

When Systems Start Explaining Themselves

Reliability engineering has evolved through several phases.

First we improved visibility through monitoring. Then we introduced observability and distributed tracing. The next step is systems that help engineers understand causality.

When infrastructure can reason about change, context and consequence, the role of engineers shifts as well. Instead of acting as the human correlation engine between disconnected tools, engineers become validators, decision makers and architects of more resilient systems.

And perhaps, for the first time, being on call would mean validating explanations instead of hunting for context during a Friday evening incident or a 3 AM outage.
