In the rapidly evolving landscape of artificial intelligence, the year 2026 marks a pivotal moment where prompt engineering transcends mere curiosity to become an indispensable skill. As large language models (LLMs) like ChatGPT, Claude, and OpenAI Codex grow more sophisticated and integrated into every facet of business and development, the ability to communicate effectively with them directly translates into tangible economic and operational advantages. This playbook delves into the advanced strategies and philosophies required to master prompt engineering in this new era, focusing on cost optimization, efficiency gains, and achieving unparalleled results across diverse applications, particularly in developer and biotech domains.

The paradigm shift isn’t just about getting an AI to respond; it’s about engineering that response to be precise, efficient, and aligned with complex objectives. The stakes are higher, and the rewards for mastery are significant. We are moving beyond simple conversational prompts into a realm where prompts are akin to code – meticulously crafted instructions that drive sophisticated AI behaviors and outputs.

The Imperative of Prompt Engineering in 2026: Beyond Basic Interaction

By 2026, the proliferation of AI has made its capabilities accessible to nearly everyone. However, the true differentiator is no longer merely access, but the skill with which these powerful tools are wielded. Advanced prompt engineering is no longer a niche skill for AI researchers; it is a core competency for developers, researchers, content creators, and business strategists alike. The reasons for this heightened importance are multifaceted, revolving primarily around economic efficiency and operational excellence.

Cost Optimization Through Token Efficiency

One of the most critical drivers for advanced prompt engineering in 2026 is cost optimization. Commercial LLM APIs are billed per token, usually at separate rates for input and output tokens, so every token sent and every token generated incurs a cost. As AI usage scales, even minor inefficiencies in prompt structure can compound into substantial expense. A well-engineered prompt is concise yet comprehensive, giving the AI all necessary context without extraneous information. This precision directly reduces the number of tokens processed, which adds up to significant savings in high-volume applications and complex, multi-turn interactions.

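- Eliminating Redundancy: Advanced prompts avoid repeating instructions or context that the model can infer or that is already present in the conversation. Because chat APIs typically re-send (and bill) the full conversation history on every turn, redundant context is paid for again with each exchange.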

- Targeted Information: Instead of dumping large datasets, prompts guide the AI to extract or process only the most relevant information, reducing both input and output token counts.

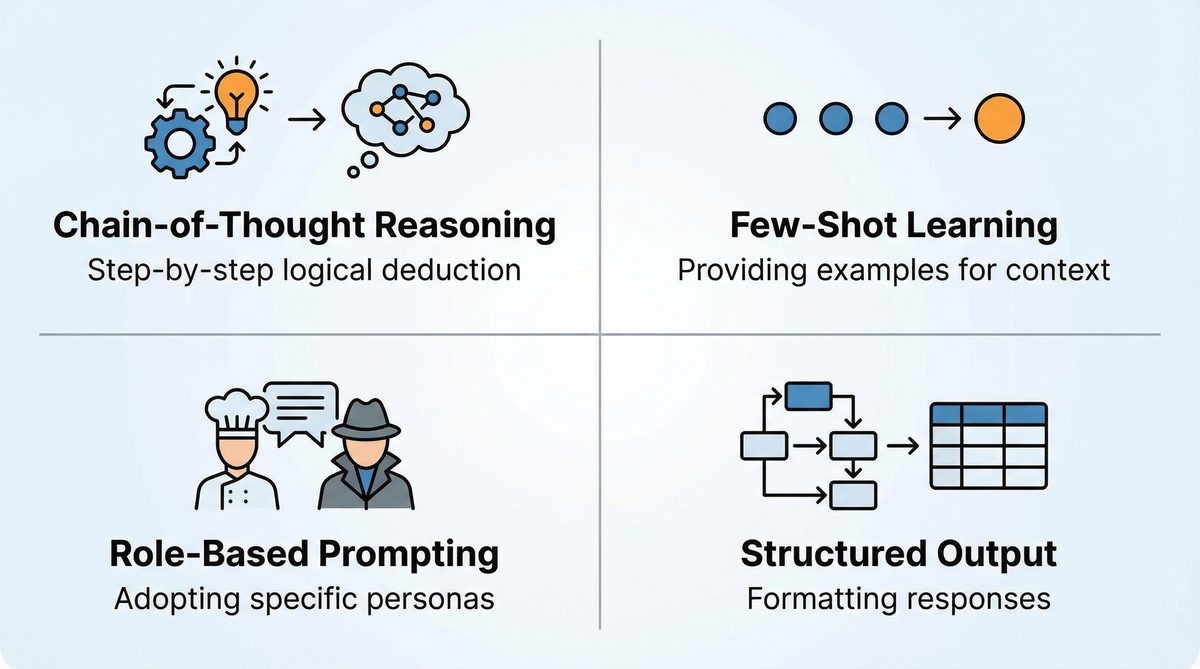

- Structured Outputs: Requesting specific output formats (e.g., JSON, XML, markdown tables) can sometimes reduce the verbosity of the AI’s response, leading to fewer tokens. This is particularly useful for programmatic consumption of AI outputs.
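To see how token counts translate into spend, here is a minimal Python sketch of a cost estimate. The prices are hypothetical placeholders (check your provider's current rate card), and the four-characters-per-token figure is only a rough rule of thumb for English text; for exact counts, use the model's own tokenizer, such as OpenAI's `tiktoken` library.

```python
import math

# Hypothetical prices in USD per million tokens; substitute your provider's real rates.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def estimate_tokens(text: str) -> int:
    """Rough token count using the ~4 characters per token heuristic for English."""
    return max(1, math.ceil(len(text) / 4))


def estimate_cost(prompt: str, expected_output_tokens: int, calls: int = 1) -> float:
    """Estimated USD cost of sending `prompt` `calls` times."""
    input_tokens = estimate_tokens(prompt)
    per_call = (input_tokens * INPUT_PRICE_PER_M
                + expected_output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000
    return per_call * calls


# Same task, two phrasings (hypothetical example prompts).
verbose = ("I would really appreciate it if you could please read the text below "
           "and, if at all possible, write a short summary of its main points: ")
concise = "Summarize the main points in 3 bullets: "
savings = estimate_cost(verbose, 150, calls=100_000) - estimate_cost(concise, 150, calls=100_000)
```

Multiplying by `calls` makes the point of this section visible: a few dozen characters trimmed from a prompt template are negligible per call, but not across hundreds of thousands of calls.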

Latency and Rework Reduction

Beyond direct costs, the time taken for an AI to process a request (latency) and the human effort required to refine its output (rework) represent significant operational expenses. Poorly engineered prompts often result in:

- Increased Latency: Longer, less focused prompts take more time to process, and vague prompts tend to produce longer responses, which take longer still to generate token by token. In real-time applications or high-throughput systems, this delay can be detrimental.

- Higher Rework: Ambiguous or incomplete prompts lead to generic, inaccurate, or off-topic responses that require manual correction or multiple follow-up prompts. This iterative refinement process is time-consuming and expensive.

Advanced prompt engineering addresses these issues by front-loading clarity and specificity. By anticipating potential misinterpretations and guiding the AI towards the desired outcome from the outset, engineers can drastically reduce the need for rework and accelerate project timelines. Mastering techniques for prompt chaining and conditional logic within prompts further enhances this efficiency.
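Prompt chaining with conditional logic can be sketched in a few lines of Python. `call_model` is a hypothetical stand-in for whatever client function sends a prompt and returns text, and the ticket-triage task with its two labels is an illustrative assumption. The first, tightly constrained prompt produces a label that code can validate, and the second prompt is chosen based on it.

```python
from typing import Callable

# Hypothetical stand-in for any LLM client call: prompt text in, reply text out.
ModelFn = Callable[[str], str]


def triage_and_respond(ticket: str, call_model: ModelFn) -> str:
    # Step 1: a short, tightly constrained prompt whose output code can validate.
    label = call_model(
        "Classify this support ticket with exactly one word, BUG or QUESTION.\n"
        f"Ticket: {ticket}"
    ).strip().upper()

    # Step 2: conditional logic picks the follow-up prompt from the validated label.
    if label == "BUG":
        follow_up = ("List likely reproduction steps and the component most likely at fault, "
                     f"as a numbered list.\nTicket: {ticket}")
    elif label == "QUESTION":
        follow_up = f"Answer in at most three sentences.\nTicket: {ticket}"
    else:
        # Fail loudly (or retry) instead of passing an unexpected label downstream.
        raise ValueError(f"Unexpected classification: {label!r}")
    return call_model(follow_up)
```

Because each step is small, a failure surfaces at the step where it happens, and the cheap classification call could be routed to a smaller, less expensive model.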

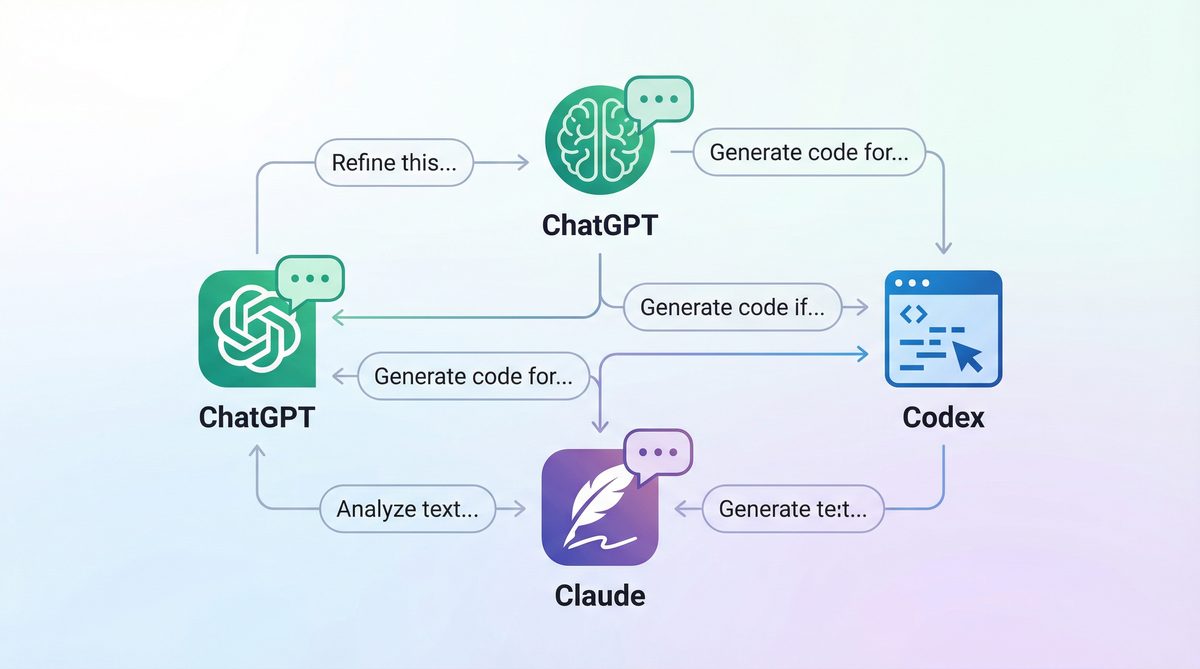

Tool Mastery Beyond Simple Chat

The AI models of 2026 are not just conversational agents; they are sophisticated tools capable of complex reasoning, code generation, data analysis, and creative output. However, unlocking these advanced capabilities requires a deeper understanding of their underlying architectures and limitations. Simple chat-style prompts are insufficient for leveraging the full power of models like Codex for code generation or Claude for nuanced textual analysis.

Tool mastery implies understanding how to integrate AI into workflows, not just as a standalone chat interface. This includes:

- API Integration: Designing prompts for programmatic interaction via APIs, where structured inputs and outputs are paramount.

- Agentic AI: Crafting prompts that enable AI models to act as autonomous agents, capable of planning, executing tasks, and self-correction, often by breaking down complex problems into smaller, manageable steps.

- Specialized Model Capabilities: Recognizing the strengths and weaknesses of different models (e.g., Claude’s long context window, Codex’s code understanding) and tailoring prompts accordingly.
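The plan, act, observe cycle behind agentic prompting can be sketched as a small loop. Everything here is an assumption for illustration: `call_model` takes the message list and returns text, the model is asked to reply with a JSON action, and `tools` maps hypothetical tool names to Python functions. Malformed replies are fed back to the model so it can correct itself, and `max_steps` bounds the cost.

```python
import json
from typing import Callable, Dict, List

Message = Dict[str, str]


def run_agent(task: str,
              call_model: Callable[[List[Message]], str],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> str:
    messages: List[Message] = [
        {"role": "system", "content": (
            "Work step by step. Reply ONLY with JSON: "
            '{"tool": "<name>", "input": "<text>"} to use a tool, '
            'or {"final": "<answer>"} when done. '
            f"Available tools: {', '.join(sorted(tools))}.")},
        {"role": "user", "content": task},
    ]
    for _ in range(max_steps):
        reply = call_model(messages)
        messages.append({"role": "assistant", "content": reply})
        try:
            action = json.loads(reply)
        except json.JSONDecodeError:
            action = None
        if not isinstance(action, dict):
            # Self-correction: report the format error and let the model retry.
            messages.append({"role": "user", "content": "Invalid reply. Respond with a JSON object only."})
            continue
        if "final" in action:
            return str(action["final"])
        tool = tools.get(str(action.get("tool", "")))
        if tool is None:
            messages.append({"role": "user", "content": f"Unknown tool: {action.get('tool')!r}."})
            continue
        # Act, then feed the observation back so the model can plan its next step.
        observation = tool(str(action.get("input", "")))
        messages.append({"role": "user", "content": f"Observation: {observation}"})
    raise RuntimeError("Agent did not finish within max_steps")
```

In production, the same loop is usually built on the provider's native tool-calling support rather than free-form JSON, but the control flow is the same.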

Advanced Prompt Strategies for ChatGPT and Claude

While both ChatGPT and Claude are powerful LLMs, they possess distinct characteristics that necessitate tailored prompting approaches. Understanding these nuances is key to maximizing their potential.

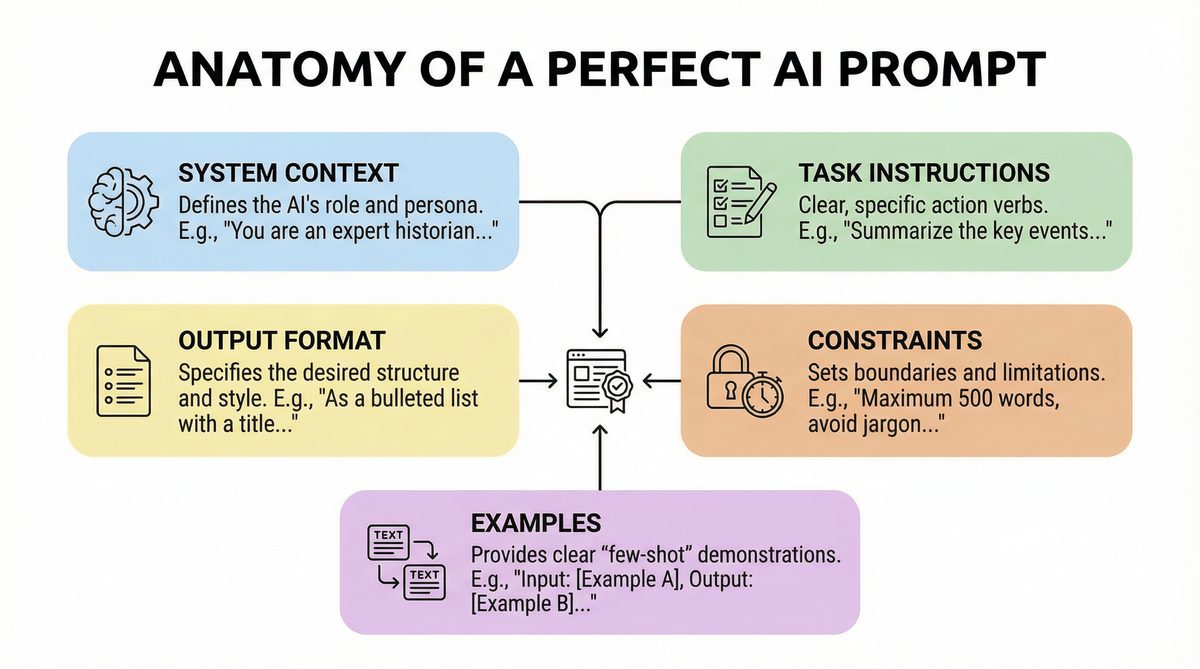

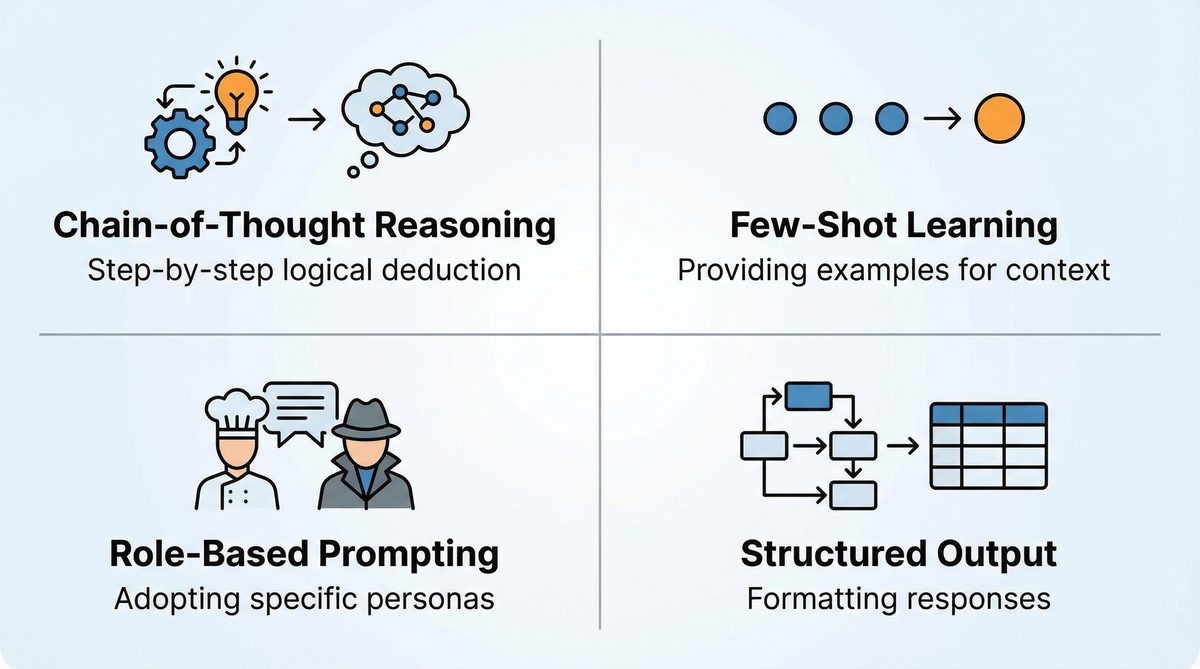

General Principles for Advanced Prompting

- Persona Assignment: Always assign a persona to the AI (e.g., “You are an expert quantum physicist,” “Act as a senior software architect”). This significantly shapes the tone, depth, and perspective of the response.

- Role-Playing and Simulation: For complex scenarios, prompt the AI to simulate a situation or role-play multiple characters. This is invaluable for scenario planning, dialogue generation, and even testing code logic.

- Constraint-Based Prompting: Clearly define boundaries, limitations, and requirements. Specify output length, format, style, and even forbidden topics or phrases.

- Chain-of-Thought (CoT) Prompting: Encourage the AI to “think step-by-step” before providing a final answer. This dramatically improves accuracy for complex reasoning tasks and can expose the AI’s thought process. For more on this, consider exploring our resources on Advanced Prompting Techniques for ChatGPT and Claude in 2026: A Practitioner’s Handbook.

- Few-Shot Learning: Provide a few examples of input-output pairs to guide the AI’s understanding of the desired task. This is particularly effective for tasks with specific formatting or stylistic requirements.

- Iterative Refinement: Don’t expect perfection in the first prompt. Be prepared to refine your prompts based on initial AI responses, providing feedback and additional context.
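Several of these principles combine naturally in one request. The sketch below assembles a persona, constraints, few-shot examples, and a chain-of-thought cue into the role-based message list used by chat-style APIs; the function name, example content, and the "Answer:" convention are illustrative choices, not a fixed standard.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def build_messages(persona: str,
                   constraints: List[str],
                   examples: List[Tuple[str, str]],
                   query: str,
                   step_by_step: bool = True) -> List[Message]:
    # Persona + constraints in the system message so they govern the whole exchange.
    system = persona + "\nConstraints:\n" + "\n".join(f"- {c}" for c in constraints)
    messages: List[Message] = [{"role": "system", "content": system}]
    # Few-shot: each (input, output) example becomes a user/assistant pair to imitate.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    if step_by_step:
        # Chain-of-thought cue: reasoning first, then a final answer that is easy to parse.
        query += ("\nThink through the problem step by step, then give the final "
                  "answer on its own line starting with 'Answer:'.")
    messages.append({"role": "user", "content": query})
    return messages


messages = build_messages(
    persona="You are a senior software architect.",
    constraints=["At most 150 words", "Plain English, no marketing language"],
    examples=[("Monolith or microservices for a 3-person team?",
               "A 3-person team gains little from independent deployments "
               "and pays the operational cost.\nAnswer: Monolith.")],
    query="Should a small startup adopt Kubernetes on day one?",
)
```

Anthropic's Messages API takes the system prompt as a separate top-level `system` parameter rather than as a message, so for Claude you would pass `messages[0]["content"]` there and send the remaining entries as `messages`.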

Specific Strategies for ChatGPT (OpenAI Models)

ChatGPT and the GPT models behind it excel in general knowledge, creative writing, and complex reasoning. Their strength lies in vast training data and the ability to follow nuanced instructions.

- Function Calling & Tool Use: Leverage the function-calling (tool use) capabilities of OpenAI's API. Describe external tools or APIs, and the model responds with structured function calls and their arguments (e.g., for fetching data, sending emails, executing code); your application executes those calls and returns the results. This turns the AI into an orchestrator.

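- JSON Schema for Output: For programmatic consumption, request output conforming to a JSON schema. Where the API offers a structured-output mode that enforces the schema, prefer it over a prompt-only request, which makes parseable output likely but not guaranteed. Either way, predictable responses streamline integration into applications.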

- System Prompts: Utilize the ‘system’ role in API calls to set a foundational behavior or persona for the entire conversation. This is more persistent and influential than user-level persona assignments.

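- Temperature and top_p Tuning: Experiment with these sampling parameters to control the creativity and determinism of responses: lower temperature for more consistent, focused answers (useful for factual tasks), higher for creative brainstorming. OpenAI's API reference recommends adjusting temperature or top_p, but not both.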
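Putting the system role, sampling settings, a tool definition, and a JSON schema together, a request body for OpenAI's Chat Completions API might look like the following sketch. The model name, the `fetch_order_status` tool, and the reply schema are hypothetical, and the field names reflect the API as we understand it, so check them against the current API reference.

```python
from typing import Any, Dict

MODEL = "your-model-name"  # placeholder: substitute a current OpenAI model ID

# Hypothetical tool: the model only proposes calls; your application executes them.
ORDER_TOOL: Dict[str, Any] = {
    "type": "function",
    "function": {
        "name": "fetch_order_status",
        "description": "Look up the shipping status of an order by its ID.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string", "description": "Order ID, e.g. A123"}},
            "required": ["order_id"],
        },
    },
}

# JSON schema the final reply must follow (strict structured-output mode).
REPLY_FORMAT: Dict[str, Any] = {
    "type": "json_schema",
    "json_schema": {
        "name": "support_reply",
        "strict": True,
        "schema": {
            "type": "object",
            "properties": {
                "answer": {"type": "string"},
                "confidence": {"type": "string", "enum": ["low", "medium", "high"]},
            },
            "required": ["answer", "confidence"],
            "additionalProperties": False,
        },
    },
}


def build_request(user_text: str, temperature: float = 0.2) -> Dict[str, Any]:
    return {
        "model": MODEL,
        "messages": [
            # System role: persistent behavior for the whole conversation.
            {"role": "system", "content": "You are a concise, accurate customer-support assistant."},
            {"role": "user", "content": user_text},
        ],
        "temperature": temperature,  # low = more consistent; tune this or top_p, not both
        "tools": [ORDER_TOOL],
        "response_format": REPLY_FORMAT,
    }


payload = build_request("Where is order A123?")
```

With the official `openai` Python package, this body can be sent as `OpenAI().chat.completions.create(**payload)`. If the reply contains `tool_calls`, your code runs the named function, appends a message with `role` set to `"tool"` and the matching `tool_call_id`, and calls the API again.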

Specific Strategies for Claude (Anthropic Models)

Claude is renowned for its ethical alignment, longer context windows, and strong performance in nuanced text analysis, summarization, and safe content generation. Its ability to handle extensive documents makes it ideal for research and legal applications.

- Extended Context Window Exploitation: Claude’s strength lies in its ability to process very long inputs. Use this for comprehensive document analysis, summarizing entire books, or performing detailed code reviews across multiple files. Provide all relevant background information in a single prompt to minimize context switching.

- Constitutional AI Principles: When guiding Claude, you can explicitly reference ethical principles or desired behaviors. For instance, “Ensure the output is unbiased and respectful of all viewpoints.” This aligns with its training philosophy.

- XML Tagging for Structure: Claude often responds well to instructions embedded within XML-like tags, for example wrapping source material and task instructions in separate, descriptively named tags. This helps it delineate different parts of your prompt and understand specific instructions for each section.

- Emphasis on Nuance and Subtlety: For tasks requiring deep understanding of text, like identifying subtle biases or extracting complex arguments, provide detailed instructions on what to look for and how to interpret it. Claude often excels here.
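The sketch below shows XML-style tagging in practice: documents go first, each in its own numbered tag, followed by the instructions, and the request is shaped for Anthropic's Messages API (top-level `system`, required `max_tokens`). The tag names are arbitrary labels chosen for illustration rather than special keywords, and the model ID is a placeholder.

```python
from typing import Any, Dict, List

MODEL = "your-claude-model"  # placeholder: substitute a current Claude model ID


def tag(name: str, body: str) -> str:
    """Wrap a prompt section in an XML-style tag (tag names are arbitrary labels)."""
    return f"<{name}>\n{body.strip()}\n</{name}>"


def build_claude_request(documents: List[str], instructions: str,
                         max_tokens: int = 1024) -> Dict[str, Any]:
    # Number each document so the model can say which one a claim came from.
    docs = "\n".join(tag(f"document_{i}", d) for i, d in enumerate(documents, start=1))
    # Long material first, instructions last.
    user_prompt = "\n\n".join([tag("documents", docs), tag("instructions", instructions)])
    return {
        "model": MODEL,
        "max_tokens": max_tokens,  # required by the Messages API
        # Claude takes the system prompt as a top-level field, not as a message.
        "system": "You are a careful analyst. Ensure the output is unbiased and "
                  "respectful of all viewpoints.",
        "messages": [{"role": "user", "content": user_prompt}],
    }


request = build_claude_request(["First paper text...", "Second paper text..."],
                               "Compare the methods and flag conflicting results.")
```

With the official `anthropic` Python package, this dictionary can be passed as `anthropic.Anthropic().messages.create(**request)`.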

Strategies for OpenAI Codex (and similar code-focused models)

OpenAI Codex, one of the first widely adopted code-focused LLMs, was designed specifically for understanding and generating code. It and its successors are invaluable for developers.

- Docstring-Driven Code Generation: Provide detailed docstrings or comments before the code block you want the AI to complete. This gives Codex clear context about the function’s purpose, arguments, and expected return.

- Test-Driven Prompting (TDP): Provide unit tests alongside your code requirements and prompt Codex to write code that passes them. Running those tests against the generated code is a powerful way to verify functional correctness.

- Refactoring and Optimization: Give Codex existing code and prompt it to refactor for readability, optimize for performance, or convert to a different language/framework. Be explicit about the desired improvements.

- Error Debugging: Paste error messages and relevant code snippets, then ask Codex to identify the bug and suggest fixes. This transforms the AI into a powerful debugging assistant.

- API Integration Code: Prompt Codex to generate code for interacting with specific APIs, providing the API documentation or schema. For advanced API integration, consider our deep dive into Advanced Prompting Techniques for ChatGPT (GPT-5.4) and Claude (Opus 4.6/Sonnet 4.6) in 2026.
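Docstring-driven generation and test-driven prompting pair well with an automatic check. The sketch below builds a prompt from a function stub and its tests, then runs any candidate the model returns against those same tests. The `slugify` task is a hypothetical example, and `exec` runs arbitrary code, so model output should only be executed inside a proper sandbox.

```python
import textwrap

# Hypothetical task: the stub's docstring is the specification.
STUB = '''
def slugify(title: str) -> str:
    """Lowercase `title`, replace each run of non-alphanumeric characters
    with a single hyphen, and strip leading/trailing hyphens."""
'''

TESTS = '''
assert slugify("Hello, World!") == "hello-world"
assert slugify("  Already-slugged  ") == "already-slugged"
assert slugify("") == ""
'''


def build_tdp_prompt(stub: str, tests: str) -> str:
    """Docstring-driven + test-driven prompt: spec and acceptance tests in one request."""
    return ("Complete the Python function below so that all of the tests pass. "
            "Return only the code for the function.\n\n"
            f"Function:\n{stub.strip()}\n\nTests:\n{tests.strip()}\n")


def passes_tests(candidate_code: str, tests: str) -> bool:
    """Run the tests against model-generated code.

    WARNING: exec() runs arbitrary code. Only do this inside a sandbox
    (e.g., an isolated container) when the code comes from a model.
    """
    namespace: dict = {}
    try:
        exec(textwrap.dedent(candidate_code), namespace)
        exec(tests, namespace)
    except Exception:  # failed assertion, syntax error, runtime error...
        return False
    return True
```

When a candidate fails, appending the failing assertion to the next prompt turns this into the iterative refinement loop described above.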

Use Cases: Developer and Biotech Applications

Developer Applications

For developers, advanced prompt engineering revolutionizes the software development lifecycle.

- Automated Code Generation & Completion:

- Prompt: “Generate a Python Flask API endpoint for user authentication with JWT. Include routes for registration, login, and token refresh. Use SQLAlchemy for database interaction with a User model containing username, email, and hashed password. Ensure proper error handling and input validation.”

- Result: Rapid generation of boilerplate code, significantly accelerating development.

- Complex Bug Debugging & Optimization:

- Prompt: “I’m encountering a ‘ConcurrentModificationException’ in this Java method: [paste code]. The error occurs when iterating over ‘myList’ while adding elements in a nested loop. Suggest a robust fix, such as collecting additions in a separate list or adding through a ListIterator, plus a thread-safe alternative (for example, CopyOnWriteArrayList) in case the list is shared across threads, and explain the performance implications of each.”

- Result: Detailed explanation of the bug, multiple potential fixes, and analysis of trade-offs.

- Code Documentation & Explanations:

- Prompt: “Act as a senior technical writer. Explain the following TypeScript code snippet to a junior developer. Focus on the purpose of the ‘useEffect’ hook, dependency array, and cleanup function in this React component: [paste code].”

- Result: Clear, pedagogical explanation tailored to a specific audience, saving documentation time.

- Test Case Generation:

- Prompt: “Given this JavaScript function for validating email addresses: [paste function]. Generate a comprehensive set of Jest unit tests covering valid formats, invalid formats, edge cases (empty string, very long string), and international characters.”

- Result: A complete suite of test cases, improving code quality and coverage.

Biotech Applications

The biotech sector, with its data-intensive and complex research, stands to gain immensely from advanced AI prompting.

- Scientific Literature Review & Summarization:

- Prompt (Claude): “You are a lead researcher in pharmacogenomics. Analyze the attached 20 research papers on CRISPR-Cas9 gene editing applications in oncology. Identify key breakthroughs, common challenges, and promising future research directions. Summarize findings into a structured report, highlighting conflicting results and novel methodologies. Focus on studies published between 2022 and 2025. [Attach PDF content/text of papers].”

- Result: A structured, expert-level draft review that can condense weeks of manual work into hours, though its findings and citations should still be checked against the source papers.

- Drug Discovery & Target Identification:

- Prompt: “Act as a computational chemist. Given the molecular structure of ‘Compound X’ (SMILES string: C1=CC=C(C=C1)C(=O)O) and its known interaction profile with ‘Protein Y’, propose three novel chemical modifications to Compound X that could enhance its binding affinity to Protein Y while minimizing off-target effects. Explain the rationale for each modification, referencing relevant biochemical principles.”

- Result: Candidate molecular modifications with stated rationales, which can accelerate lead optimization once they are validated computationally and experimentally.

- Experimental Design & Protocol Generation:

- Prompt: “Design a detailed laboratory protocol for a qPCR experiment to quantify gene expression levels of ‘Gene A’ in human prostate cancer cell lines (PC-3, LNCaP) following treatment with ‘Drug Z’. Include steps for RNA extraction, cDNA synthesis, primer design considerations, reaction setup, thermocycling conditions, and data analysis. Assume standard lab equipment is available.”

- Result: A detailed draft protocol that, once reviewed and adapted by the lab, supports rigor and reproducibility. For further insights into scientific prompt generation, explore Chain-of-Verification Prompting: The Advanced Technique That Eliminates AI Hallucinations in 2026.

- Bioinformatics Scripting & Data Analysis:

- Prompt (Codex): “Write a Python script using Biopython and Pandas to parse a FASTA file containing genetic sequences, calculate the GC content for each sequence, and store the results in a CSV file with sequence ID, length, and GC content. Handle potential malformed FASTA entries gracefully.”

- Result: A functional Python script for bioinformatics tasks, saving precious time for researchers.
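For a sense of what the bioinformatics prompt above should produce, here is a dependency-free sketch. The prompt asks for Biopython and Pandas, and a generated answer would likely use `Bio.SeqIO.parse(handle, "fasta")` and a DataFrame; this version uses only the standard library so it runs anywhere. What counts as "malformed" (missing ID, empty sequence, characters outside A/C/G/T/N) and excluding N from the GC denominator are our assumptions.

```python
import csv
import io
import sys
from typing import Dict, Iterable, Iterator, List, Tuple

ALLOWED = set("ACGTN")  # assumption: only unambiguous bases plus N are accepted


def read_fasta(lines: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (id, sequence) pairs; sequence lines before the first header are ignored."""
    seq_id, chunks = None, []
    for raw in lines:
        line = raw.strip()
        if not line:
            continue
        if line.startswith(">"):
            if seq_id is not None:
                yield seq_id, "".join(chunks)
            fields = line[1:].split()
            seq_id = fields[0] if fields else ""  # empty ID is flagged later
            chunks = []
        elif seq_id is not None:
            chunks.append(line.upper())
    if seq_id is not None:
        yield seq_id, "".join(chunks)


def gc_rows(lines: Iterable[str]) -> Tuple[List[Dict], List[str]]:
    """Return (rows for valid records, IDs of skipped malformed records)."""
    rows, skipped = [], []
    for seq_id, seq in read_fasta(lines):
        acgt = sum(seq.count(base) for base in "ACGT")  # N excluded from the denominator
        if not seq_id or acgt == 0 or not set(seq) <= ALLOWED:
            skipped.append(seq_id or "<missing id>")
            continue
        gc = (seq.count("G") + seq.count("C")) / acgt
        rows.append({"id": seq_id, "length": len(seq), "gc_content": round(gc, 4)})
    return rows, skipped


def write_csv(rows: List[Dict], out) -> None:
    writer = csv.DictWriter(out, fieldnames=["id", "length", "gc_content"])
    writer.writeheader()
    writer.writerows(rows)


if __name__ == "__main__":
    demo = ">seq1 demo\nGGCC\nATAT\n>bad\nGGXX\n>seq2\nGCGN\n"
    rows, skipped = gc_rows(io.StringIO(demo))
    write_csv(rows, sys.stdout)
    print("skipped:", skipped, file=sys.stderr)
```

For real files, pass an open file handle (for example `open("seqs.fasta")`) to `gc_rows` and write to a file opened with `newline=""`, as the `csv` module documentation recommends.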

The Future is Prompt-Driven

As we navigate 2026 and beyond, the distinction between a casual AI user and a proficient AI engineer will become stark. The latter will be the architects of innovation, leveraging advanced prompt engineering to unlock unprecedented efficiencies, accelerate discovery, and drive competitive advantage. The ability to articulate complex requirements to an AI, to guide its reasoning, and to refine its output with precision is no longer a luxury but a necessity. Invest in mastering these skills, and you will not only stay ahead of the curve but actively define the future of AI-powered workflows.
