Google DeepMind, Alphabet Inc.’s artificial intelligence research division, introduced a new robotics foundation model on Tuesday, designed as a significant upgrade in how robots understand their surroundings and reason precisely about space.

The new model, Gemini Robotics-ER 1.6, part of the Gemini Robotics family, enhances spatial reasoning and multi-view understanding to bring greater autonomy to physical agents and robots of all kinds.

DeepMind said the model provides high-level reasoning capabilities for robotics, offering a layer for task planning and tool calling. The tools it can call include Google Search to find information, vision-language-action models, and other third-party user-defined functions that extend its capabilities.
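To make the idea of a planning and tool-calling layer concrete, here is a minimal sketch in Python. It is not the Gemini API: the tool names (`search_web`, `move_object`), the registry and the plan format are all hypothetical, chosen only to show how a reasoning model's plan could be dispatched to registered functions.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical tools: stand-ins for a search tool and a
# vision-language-action model. Neither calls a real service.
def search_web(query: str) -> str:
    """Return a canned string in place of real search results."""
    return f"results for: {query}"

def move_object(obj: str, destination: str) -> str:
    """Pretend to execute a motion and report what was done."""
    return f"moved {obj} to {destination}"

# Registry mapping tool names to callables (an assumed design, not an API).
TOOLS: Dict[str, Callable[..., Any]] = {
    "search_web": search_web,
    "move_object": move_object,
}

def run_plan(plan: List[Tuple[str, Dict[str, Any]]]) -> List[Any]:
    """Execute each (tool_name, arguments) step in order and collect results."""
    results = []
    for tool_name, args in plan:
        if tool_name not in TOOLS:
            raise KeyError(f"unknown tool: {tool_name}")
        results.append(TOOLS[tool_name](**args))
    return results

# A plan a reasoning model might emit for a parcel-sorting request.
example_plan = [
    ("search_web", {"query": "bin for fragile parcels"}),
    ("move_object", {"obj": "parcel", "destination": "bin A"}),
]

if __name__ == "__main__":
    for line in run_plan(example_plan):
        print(line)
```

In this sketch the reasoning model only decides which tool to call and with what arguments, while the registered functions do the actual work.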

Improvements include more precise object detection and categorization, a must for robots when picking out and picking up items, especially when sorting parcels or cleaning up a messy room.

The model also improves at relational logic, such as making comparisons (for example, identifying the smallest object in a set) or defining from-to relationships when moving object X to location Y. This is coupled with enhancements to trajectory mapping and to determining the best way to grab objects.

The company also said the model works well within constraints and reasons through complex prompts like “point to every object small enough to fit inside the blue cup.”
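The kind of constraint in that prompt can be illustrated with a small Python sketch. The model's actual output format is not described here, so the `Detection` record, its field names and the size estimates below are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Detection:
    """Hypothetical detection record; field names are assumptions."""
    label: str
    point: Tuple[int, int]  # image coordinates, (y, x) by assumption
    max_dim_cm: float       # estimated largest dimension of the object

def smallest(detections: List[Detection]) -> Detection:
    """Comparison query: return the object with the smallest largest-dimension."""
    return min(detections, key=lambda d: d.max_dim_cm)

def points_that_fit(
    detections: List[Detection],
    container_label: str,
    inner_diameter_cm: float,
) -> List[Tuple[int, int]]:
    """Return points of objects whose largest dimension is below the
    container's inner diameter, skipping the container itself."""
    return [
        d.point
        for d in detections
        if d.label != container_label and d.max_dim_cm < inner_diameter_cm
    ]

# Made-up scene used only to exercise the functions.
scene = [
    Detection("blue cup", (400, 520), 9.0),
    Detection("marble", (310, 150), 1.5),
    Detection("eraser", (620, 300), 4.0),
    Detection("stapler", (700, 810), 15.0),
]

if __name__ == "__main__":
    print(points_that_fit(scene, "blue cup", inner_diameter_cm=7.0))
    print(smallest(scene).label)
```

Comparing a single largest dimension against the cup's inner diameter is a deliberate simplification. A real system would need to reason about full object geometry.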

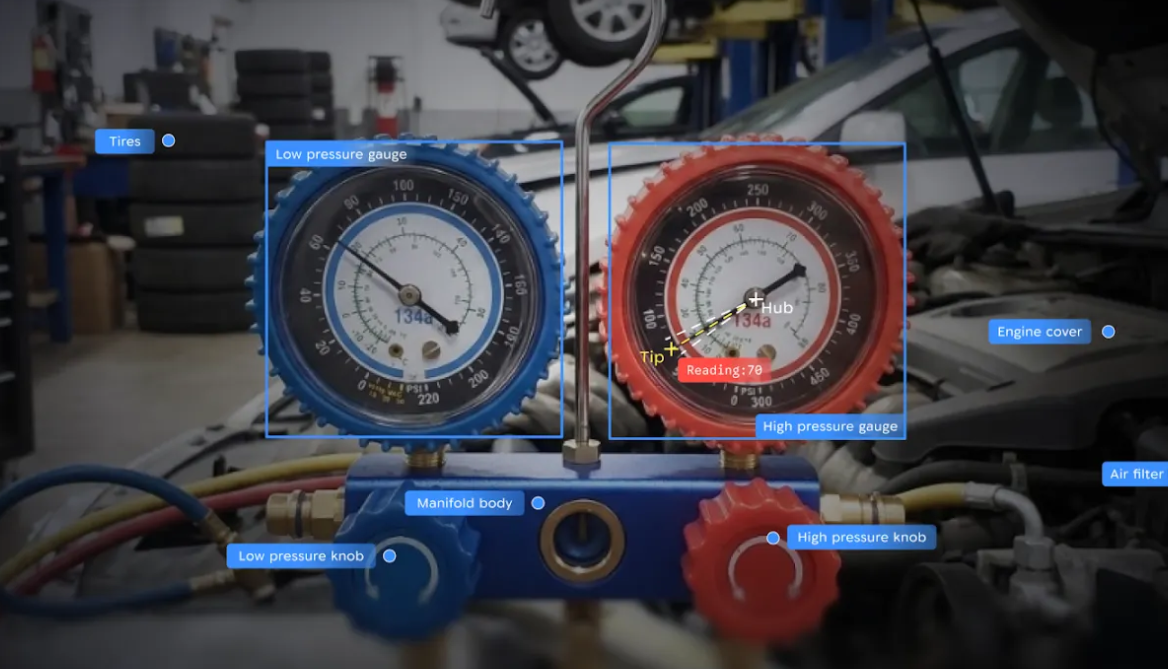

Beyond making robots move, DeepMind researchers also boosted the model’s ability to read gauges and instruments, which requires complex visual reasoning. That ability is fundamental for operating in environments such as factories, warehouses and even homes. In many cases, gauges include needles, tick marks, finely etched numbers and other indicators (and sometimes instructions) that must be resolved to interpret a reading.

“Capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously,” said Marco da Silva, vice president and general manager of Spot, the dog-like robot developed by Boston Dynamics Inc.

DeepMind said Robotics-ER 1.6 achieves this level of accuracy via agentic vision, which combines visual reasoning with code execution. The model takes a snapshot of the image to resolve the fine details, then uses carefully curated code to estimate proportions and intervals for an accurate reading, and finally uses its reasoning engine to interpret that reading.
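The arithmetic behind estimating proportions and intervals can be sketched as simple linear interpolation. This is not DeepMind's code: it assumes a dial with a linear scale, and it assumes the needle angle and the angles of the minimum and maximum tick marks have already been extracted from the image. The function names are hypothetical.

```python
def gauge_reading(
    needle_deg: float,
    min_deg: float,
    max_deg: float,
    min_value: float,
    max_value: float,
) -> float:
    """Map a needle angle to a value by linear interpolation between
    the angles of the minimum and maximum tick marks."""
    span = max_deg - min_deg
    if span == 0:
        raise ValueError("min_deg and max_deg must differ")
    fraction = (needle_deg - min_deg) / span  # proportion of the dial swept
    return min_value + fraction * (max_value - min_value)

def snap_to_tick(value: float, tick_interval: float) -> float:
    """Round a raw reading to the nearest tick interval on the dial."""
    if tick_interval <= 0:
        raise ValueError("tick_interval must be positive")
    return round(value / tick_interval) * tick_interval

if __name__ == "__main__":
    # Assumed dial: 0 to 10 units spread over -135 to +135 degrees.
    raw = gauge_reading(needle_deg=0.0, min_deg=-135.0, max_deg=135.0,
                        min_value=0.0, max_value=10.0)
    print(raw, snap_to_tick(raw, 0.5))
```

With the needle halfway between the two end ticks, the interpolated value is the midpoint of the scale. Nonlinear or multi-needle gauges would need a different mapping.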

Starting today, developers can access ER 1.6 via the Gemini API and Google AI Studio.

Images: Google DeepMind
