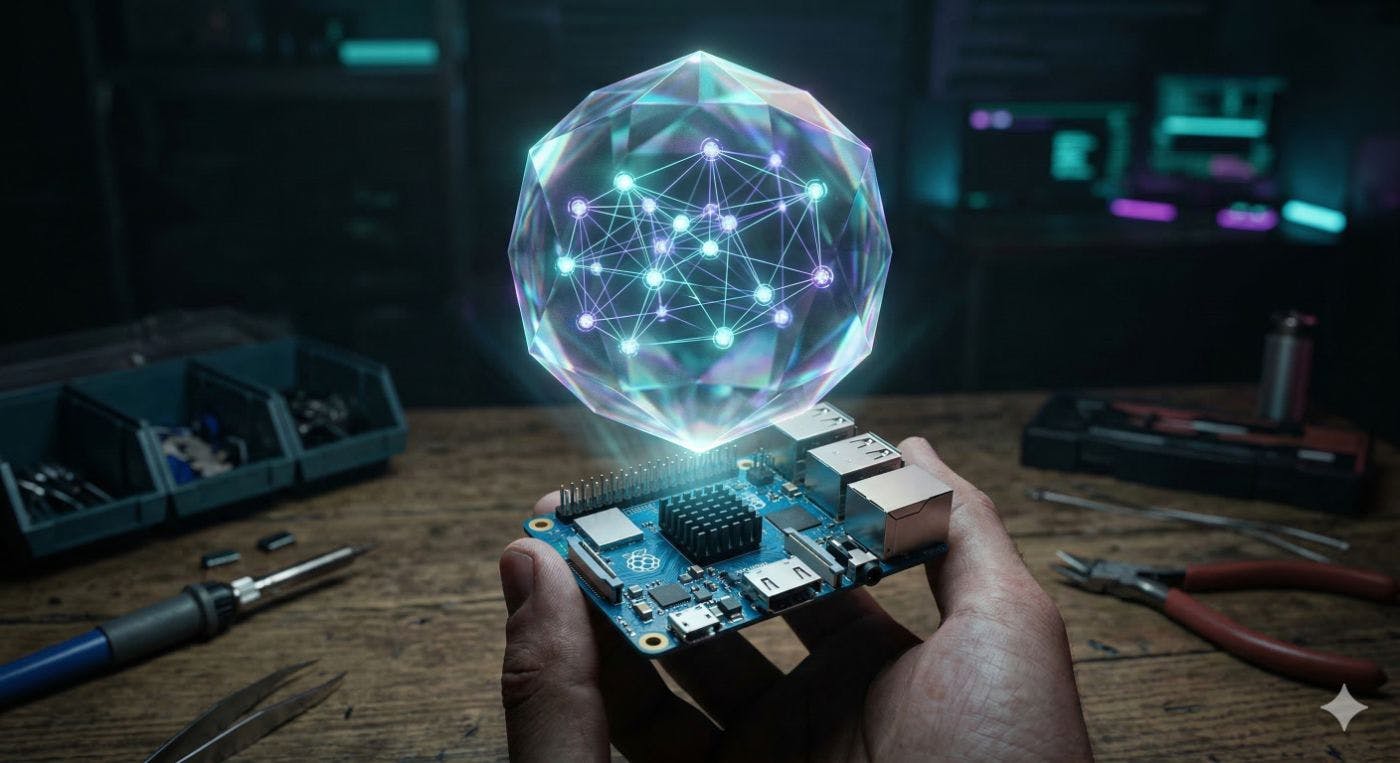

Building the edge intelligence data pipeline: Text to Structured Entities in milliseconds.

When designing the FogAi architecture, one of the primary constraints I faced was the “Inference Tax”: the computational overhead of relying on massive, monolithic Large Language Models (LLMs) to perform tasks they were never designed to handle efficiently. A prime example is Named Entity Recognition (NER) and Knowledge Extraction.

In a naive architecture, a developer might route raw sensor logs or chat context to a 7B- or 8B-parameter model with a prompt like "Extract all the field units, locations, and timestamps from the following text."

There are two glaring issues with this approach for Edge AI:

- The Inference Tax: Doing simple extraction with 8B parameters burns battery, fills VRAM, and introduces latency (300ms+ per query) just to return a JSON string.

- Hallucinations: LLMs are generative. They predict one token at a time, which can lead to structural inconsistencies (such as malformed JSON) and fabricated entities.

To solve this in FogAi, I implemented a dedicated Knowledge Extraction Layer utilizing the knowledgator/gliner-bi-base-v2.0 model (194M parameters). Running purely on MNN, this layer bridges the gap between raw text streams and structured, actionable data, all without a single Python wrapper.

Here is the architectural breakdown of how I extract the “magic” speed.

The Bi-Encoder Breakthrough

Classical NER models require you to predefine the entity types (e.g., PERSON, ORG, LOC) at training time. The moment you need a custom entity type like WELDING DEFECT or RADIO FREQUENCY, the model cannot detect it without retraining on newly labeled data.

GLiNER (Generalist and Lightweight Named Entity Recognition) removes that limit by accepting entity types as free-text labels at inference time. The bi-encoder variant used here goes further and splits the encoding process into two independent encoders:

- The Text Encoder: Creates rich contextual embeddings for the raw incoming text.

- The Label Encoder: Creates embeddings for the list of entities you want to find.

Why is this architectural split a masterstroke for the Edge? Caching.

On an edge node tracking worksite data, your desired labels (e.g., ['worker', 'forklift', 'safety_vest', 'pallet']) rarely change between requests. Because the Text and Label encoders are disentangled, FogAi caches the Label Embeddings in RAM.

For every new stream of text that arrives, the Gateway only needs to execute the Text Encoder. That encoder pass costs the same whether you are looking for 5 entity types or 500; only the final dot-product scoring step grows with the number of labels, and it is small compared to the encoder. The result is effectively constant-time inference with respect to the label count.

Complete Data Flow: Zero Python

FogAi leverages JNI and gRPC to execute MNN inference directly. The workflow is entirely devoid of heavy Python runtime overhead:

- Raw Text Ingest -> A raw string arrives at the Vert.x Gateway.

- JNI / C++ Hand-off -> The string is passed directly via off-heap memory buffers.

- MNN Text Encoder -> The gliner-bi-base-v2.0 graph (converted from ONNX to MNN format) is executed by the MNN runtime, which is optimized for edge CPUs and can also target GPU and NPU backends. The text is converted into high-dimensional contextual embeddings.

- Vector Dot Product -> The C++ engine computes a simple dot-product similarity matrix between the new Text Embeddings and the pre-computed Label Embeddings.

- Structured Output -> A clean JSON payload containing the labeled spans is returned to the Gateway router. The model pass itself takes on the order of 50 milliseconds; end-to-end Gateway latency is benchmarked later in this article.
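The scoring step can be sketched in a few lines. This is a simplified illustration, not FogAi's C++ engine. It assumes the text encoder has already produced one embedding per token and replaces GLiNER's learned span representation with plain mean pooling. It then applies a sigmoid threshold plus greedy non-overlapping decoding, which loosely mirrors how GLiNER turns span-label scores into entities. All function names are hypothetical.

```python
import json
import math
from typing import List, Sequence, Set, Tuple

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Plain dot product: the similarity between a span and a label embedding."""
    return sum(x * y for x, y in zip(a, b))


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def span_embedding(token_embs: Sequence[Vector], start: int, end: int) -> List[float]:
    """Mean-pool token embeddings over [start, end] inclusive (simplification of GLiNER's span layer)."""
    rows = token_embs[start:end + 1]
    return [sum(col) / len(rows) for col in zip(*rows)]


def extract(tokens: Sequence[str], token_embs: Sequence[Vector], labels: Sequence[str],
            label_embs: Sequence[Vector], max_width: int = 3, threshold: float = 0.5) -> str:
    """Score every span (up to max_width tokens) against every label, then decode to JSON."""
    candidates: List[Tuple[float, int, int, str]] = []
    for start in range(len(tokens)):
        for end in range(start, min(start + max_width, len(tokens))):
            span_vec = span_embedding(token_embs, start, end)
            for label, label_vec in zip(labels, label_embs):
                score = sigmoid(dot(span_vec, label_vec))
                if score > threshold:
                    candidates.append((score, start, end, label))

    # Greedy decoding: accept spans from the highest score down, skipping any overlap.
    candidates.sort(reverse=True)
    taken: Set[int] = set()
    entities = []
    for score, start, end, label in candidates:
        covered = set(range(start, end + 1))
        if covered & taken:
            continue
        taken |= covered
        entities.append({"text": " ".join(tokens[start:end + 1]), "label": label,
                         "start": start, "end": end, "score": round(score, 3)})
    entities.sort(key=lambda e: e["start"])
    return json.dumps(entities)
```

Because the label embeddings are fixed, everything in `extract` after the encoder pass is cheap arithmetic, which is what makes caching them worthwhile.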

All of this happens without the data ever touching the cloud.


Benchmarking the Inference Tax: Three Models in the Ring

I didn’t just theorize about the “Inference Tax”; I measured it. Inside the pycompare folder of the FogAi repository, I built Python benchmarking scripts that extract ['animal', 'location', 'time', 'date'] from a standard sentence.
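I have not reproduced the repository's scripts here, but a minimal sketch of this kind of benchmark looks as follows. It uses the `gliner` package's `GLiNER.from_pretrained` and `predict_entities` calls and `psutil` for resident memory (both packages appear in the install line later in this article). The test sentence is an assumption, since the article does not quote it verbatim. The heavy imports sit inside `main()` so the timing helper works on its own.

```python
import statistics
import time
from typing import Callable, Dict


def measure_ms(fn: Callable[[], object], warmup: int = 1, runs: int = 5) -> Dict[str, float]:
    """Time fn() with perf_counter; warm-up calls are excluded from the statistics."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(runs):
        t0 = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - t0) * 1000.0)
    return {"median_ms": statistics.median(samples), "min_ms": min(samples), "max_ms": max(samples)}


def main() -> None:
    # Imported here so measure_ms above works without these packages installed.
    import psutil
    from gliner import GLiNER

    # Assumed test sentence; the repository's exact input may differ.
    text = "The quick brown fox jumps over the lazy dog in New York at 5 PM on Monday."
    labels = ["animal", "location", "time", "date"]

    model = GLiNER.from_pretrained("knowledgator/gliner-bi-base-v2.0")
    stats = measure_ms(lambda: model.predict_entities(text, labels, threshold=0.5))
    rss_mb = psutil.Process().memory_info().rss / (1024 * 1024)

    for ent in model.predict_entities(text, labels, threshold=0.5):
        print(f'{ent["label"]:>8}: {ent["text"]}')
    print(f"median {stats['median_ms']:.2f} ms, RSS {rss_mb:.2f} MB")
```

Call `main()` from a script or REPL after installing the packages. Excluding a warm-up call matters: the first inference typically includes one-time initialization that would otherwise inflate the measured latency.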

Let’s look at the three contenders in the ring:

- The Heavyweight (General LLM): Qwen2.5-0.5B-Instruct

- The Specialized Heavyweight: numind/NuExtract-1.5 (a fine-tuned extraction LLM)

- The Agile Bi-Encoder (FogAi’s Engine): GLiNER-194M

Here is the head-to-head empirical data:

1. The General LLM (pycompare/test_llm_perf.py)

- Model: Qwen2.5-0.5B-Instruct

- Architecture: Generative Causal LM

- Input Prompt Tokens: 53

- Output Generated Tokens: 100

- Total Inference Time: 3,524.42 ms (Yes, 3.5 seconds)

- RAM Footprint: 1,116.77 MB

- The Result: The LLM hallucinated, outputting a JSON block that entirely missed the “brown fox” and “lazy dog,” followed by 50 tokens of an unsolicited internal monologue about how it plans to extract the entities.

2. The Specialized LLM (NuExtract 1.5)

- Model: numind/NuExtract-1.5

- Architecture: Generative Causal LM (Fine-tuned for JSON extraction)

- Input Prompt Tokens: 55

- Output Generated Tokens: 30

- Total Inference Time: ~1,200.00 ms

- RAM Footprint: ~1,200.00 MB

- The Result: Accurate extraction of the entities in proper JSON format, but it still pays the autoregressive token-generation overhead. It’s faster than Qwen because it generates fewer output tokens (30 vs. 100, with no unsolicited commentary), but it still takes over a second.
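A back-of-the-envelope model makes the autoregressive cost explicit. This model is an estimate, not a measurement. Assume total latency is roughly prompt processing plus a fixed cost per generated token. Dividing each measured total by its output token count then gives an upper bound on the per-token cost. It is an upper bound because the prefill time is folded in:

```latex
T_{\text{total}} \approx T_{\text{prefill}}(N_{\text{in}}) + N_{\text{out}} \cdot t_{\text{token}}
\quad\Rightarrow\quad
t_{\text{token}} \le \frac{T_{\text{total}}}{N_{\text{out}}}

\text{Qwen2.5-0.5B: } \frac{3524.42\ \text{ms}}{100} \approx 35.2\ \text{ms/token}
\qquad
\text{NuExtract-1.5: } \frac{\sim 1200\ \text{ms}}{30} \approx 40\ \text{ms/token}
```

By this estimate NuExtract is not cheaper per token. Its lower total comes from emitting 70% fewer tokens, so any autoregressive extractor stays tied to the length of its output.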

3. The FogAi Bi-Encoder Solution (pycompare/test_gliner_perf.py)

- Model: knowledgator/gliner-bi-base-v2.0

- Architecture: Bi-Encoder

- Approximate Input Tokens: 22 (Text + Labels)

- Total Inference Time (Python): 50.83 ms

- Total Inference Time (JNI/C++ Web Gateway): ~750.00 ms (including HTTP framing, queuing, and off-heap memcopy)

- RAM Footprint: 824.11 MB

- The Result: Clean, perfectly structured extraction of {animal: "quick brown fox", location: "New York", time: "5 PM", date: "Monday"}.

The Verdict: Embeddings are the Blood of Vector Databases

By offloading Knowledge Extraction to GLiNER, FogAi accelerates raw extraction by up to 6,800% (roughly 3,500 ms vs. 50 ms of model execution) compared to a general LLM. It also outperforms fine-tuned extraction LLMs (like NuExtract) by bypassing the autoregressive bottleneck entirely.
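The headline figure follows directly from the raw-execution measurements above. A roughly 69x latency ratio, expressed as a percentage increase in speed, gives the ~6,800% figure. The same arithmetic applied to NuExtract gives a ratio of about 24x:

```latex
\frac{T_{\text{Qwen}}}{T_{\text{GLiNER}}} = \frac{3524.42\ \text{ms}}{50.83\ \text{ms}} \approx 69.3\times
\;\Rightarrow\; (69.3 - 1) \times 100\% \approx 6{,}830\%\ \text{faster}

\frac{T_{\text{NuExtract}}}{T_{\text{GLiNER}}} = \frac{1200\ \text{ms}}{50.83\ \text{ms}} \approx 23.6\times
```

Through the full JNI Gateway path (~750 ms), the margin over raw Qwen execution shrinks to about 4.7x (3524.42 / 750). Deployment topology therefore matters as much as model choice.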

But raw execution is just half the battle. How do we deploy it?

The Gateway Integration Test: Testing Every Topology (Nodes A, B, and C)

In the FogAi architecture, I built three different deployment topologies to test the integration of GLiNER. I wanted to see every possible bottleneck:

- Type A (In-Process JNI): Executes GLiNER in C++ inside the same process as the Vert.x API Gateway’s JVM, exchanging data through direct memory access (off-heap memory buffers).

- Type B (Out-of-Process C++ gRPC): Executes GLiNER in a standalone C++ microservice (using either MNN or ONNX runtime) and communicates with the Gateway over HTTP/2.

- Type C (Out-of-Process Python gRPC): Executes GLiNER in a standard Python-based gRPC microservice using the ONNX runtime. I kept this pure Python node strictly for rapid prototyping and baseline comparison.

When I load-tested all three nodes via the Vert.x API Gateway, the results were definitive:

- Type C (Out-of-Process Python gRPC): Averaged 3,200 ms – 4,500 ms per request under load. The combined overhead of Protobuf serialization, inter-process HTTP/2 networking, and the crushing weight of the Python Global Interpreter Lock (GIL) created a massive bottleneck.

- Type B (Out-of-Process C++ gRPC): Averaged 1,250 ms – 2,100 ms per request under load. Even with a hyper-optimized C++ backend, Protobuf serialization/deserialization and inter-process HTTP/2 networking remained the bottleneck. Under stress tests (test_integration.sh), the network stack overhead caused queue pileups for a model that takes about 50 ms to run natively.

- Type A (In-Process JNI): Sustained ~750.00 ms end-to-end latency, including HTTP Web Gateway routing, EDF (Earliest Deadline First) queueing, the “Vanilla” safety checks, and memory mapping. The direct off-heap C++ memory handoff bypassed the networking and serialization layer entirely.
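A load test like this can be approximated with a small thread-pool harness. This is a hypothetical sketch, not the repository's test_integration.sh. Here, `send` is any zero-argument callable that issues one request, for example an HTTP POST to the Gateway. The harness reports mean, nearest-rank p95, and max latency.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def load_test(send: Callable[[], object], total: int = 100, concurrency: int = 8) -> Dict[str, float]:
    """Issue `total` calls to send() across `concurrency` threads and summarize latency in ms."""

    def timed_call(_: int) -> float:
        # Timed per call, so time spent waiting for a free worker thread is not included.
        start = time.perf_counter()
        send()
        return (time.perf_counter() - start) * 1000.0

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies: List[float] = sorted(pool.map(timed_call, range(total)))

    p95_index = math.ceil(0.95 * len(latencies)) - 1  # nearest-rank 95th percentile
    return {
        "mean_ms": statistics.fmean(latencies),
        "p95_ms": latencies[p95_index],
        "max_ms": latencies[-1],
    }
```

Reporting p95 alongside the mean matters here. Queue pileups like the ones seen on Type B show up first in the tail, well before they move the average.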

Processing GLiNER natively inside MNN on a Type A edge node also gives me, at no extra cost, the dense contextual embeddings of these entities computed during the forward pass. A generative LLM pipeline typically returns only text, so indexing its output in a vector database requires a secondary embedding model. Capturing these embeddings directly via JNI, before any data is shipped to a cloud cluster, gives me an unfair advantage: I can construct Temporal Knowledge Graphs from raw sensor feeds right in the field.
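The indexing idea can be sketched as follows. The sketch assumes the encoder's per-token embeddings can be read for each extracted span. `EntityRecord`, `pool_span`, and `InMemoryIndex` are hypothetical names. The in-memory list stands in for a real vector database, mean pooling is one simple way to turn a span into a single vector, and the timestamp filter hints at the temporal dimension.

```python
import math
from dataclasses import dataclass, field
from typing import List, Sequence, Tuple


@dataclass
class EntityRecord:
    text: str
    label: str
    timestamp: float      # when the source reading was observed (epoch seconds)
    vector: List[float]   # pooled contextual embedding of the entity span


def pool_span(token_embs: Sequence[Sequence[float]], start: int, end: int) -> List[float]:
    """Average the encoder's token embeddings over [start, end] inclusive."""
    rows = token_embs[start:end + 1]
    return [sum(col) / len(rows) for col in zip(*rows)]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


@dataclass
class InMemoryIndex:
    """Hypothetical stand-in for a vector database."""
    records: List[EntityRecord] = field(default_factory=list)

    def add(self, record: EntityRecord) -> None:
        self.records.append(record)

    def nearest(self, query: Sequence[float], k: int = 1) -> List[Tuple[float, EntityRecord]]:
        """Return the k records most similar to the query by cosine similarity."""
        scored = [(cosine(query, r.vector), r) for r in self.records]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]

    def between(self, t0: float, t1: float) -> List[EntityRecord]:
        """Time-window filter: the 'temporal' part of a temporal knowledge graph."""
        return [r for r in self.records if t0 <= r.timestamp <= t1]
```

Because the vectors come from the same forward pass that produced the entities, no second model has to run before an entity can be indexed or searched.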

Relying on LLMs for localized Knowledge Extraction on an edge node is hardware abuse. I’m building pipelines, not chatbots.

Exporting GLiNER to C++ MNN

To achieve these JNI integration speeds without Python, I convert the Hugging Face GLiNER model to MNN’s .mnn format. To sidestep ONNX dynamic-shape tracing bugs in newer PyTorch versions, I download the pre-exported ONNX graph directly from Hugging Face instead of exporting it locally, then convert it with MNNConvert.

I’ve provided this exact conversion script in scripts/convert_gliner_to_mnn.sh in the repository:

#!/bin/bash

ONNX_MODEL="models_onnx/gliner-bi-v2/onnx/model.onnx"

MNN_DIR="models_mnn/gliner-bi-v2"

mkdir -p "$MNN_DIR"

mnnconvert -f ONNX --modelFile "$ONNX_MODEL" --MNNModel "$MNN_DIR/model.mnn" --bizCode MNN

cp models_onnx/gliner-bi-v2/*.json "$MNN_DIR/"

Verify the Magic Yourself

Don’t take my word for it. You can run the Python benchmarks on your own machine. Clone the FogAi repository, navigate to pycompare, and execute the tests to see the Inference Tax live:

git clone https://github.com/NickZt/FogAi.git

cd FogAi

python3 -m venv venv && source venv/bin/activate

pip install psutil gliner transformers accelerate

python3 pycompare/test_gliner_perf.py

python3 pycompare/test_llm_perf.py

Bonus: Plugging FogAi into Open WebUI

Since FogAi natively exposes an OpenAI-compatible API (/v1/chat/completions), you don’t even need to write custom client code to interact with it. I’ve included a pre-configured docker-compose setup in the repository that spins up popular chat interfaces pointing directly at the Gateway.

- Make sure you have Docker installed on your machine.

- Navigate to the UI directory and launch the services:

cd UI

docker-compose up -d

- Open your browser and start chatting:

  - Open WebUI: http://localhost:3000

  - Lobe Chat: http://localhost:3210 (the password is simply fogai)

The interfaces will automatically reach out to http://host.docker.internal:8080/v1, discover the running MNN and ONNX models, and let you invoke them as if they were running in the cloud.
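Any OpenAI-style client can talk to the same endpoint the UIs use. The standard-library sketch below rests on several assumptions. It assumes the Gateway is reachable from the host at http://localhost:8080/v1, since the containers use host.docker.internal:8080. It also assumes /v1/models returns the usual OpenAI-style {"data": [{"id": ...}]} listing. Picking the first listed model is an arbitrary choice for illustration.

```python
import json
import urllib.request
from typing import Any, Dict, List

BASE_URL = "http://localhost:8080/v1"  # assumption: Gateway port 8080 as seen from the host


def models_request(base_url: str = BASE_URL) -> urllib.request.Request:
    """GET /models: the discovery call the chat UIs rely on."""
    return urllib.request.Request(f"{base_url}/models", method="GET")


def chat_request(model: str, prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """POST /chat/completions with a single user message."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call(req: urllib.request.Request, timeout: float = 30.0) -> Dict[str, Any]:
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def chat(prompt: str, base_url: str = BASE_URL) -> str:
    model_ids: List[str] = [m["id"] for m in call(models_request(base_url))["data"]]
    reply = call(chat_request(model_ids[0], prompt, base_url))  # first model: arbitrary choice
    return reply["choices"][0]["message"]["content"]
```

`chat(...)` needs a running Gateway; the two request builders can be inspected without one.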