Google DeepMind researchers have introduced ATLAS, a set of scaling laws for multilingual language models that formalize how model size, training data volume, and language mixtures interact as the number of supported languages increases. The work is based on 774 controlled training runs across models ranging from 10 million to 8 billion parameters, using multilingual data covering more than 400 languages, and evaluates performance across 48 target languages.

Most existing scaling laws are derived from English-only or single-language training regimes. As a result, they provide limited guidance for models trained on multiple languages. ATLAS extends prior work by explicitly modeling cross-lingual transfer and the efficiency trade-offs introduced by multilingual training. Instead of assuming a uniform impact from adding languages, the framework estimates how individual languages contribute to or interfere with performance in others during training.

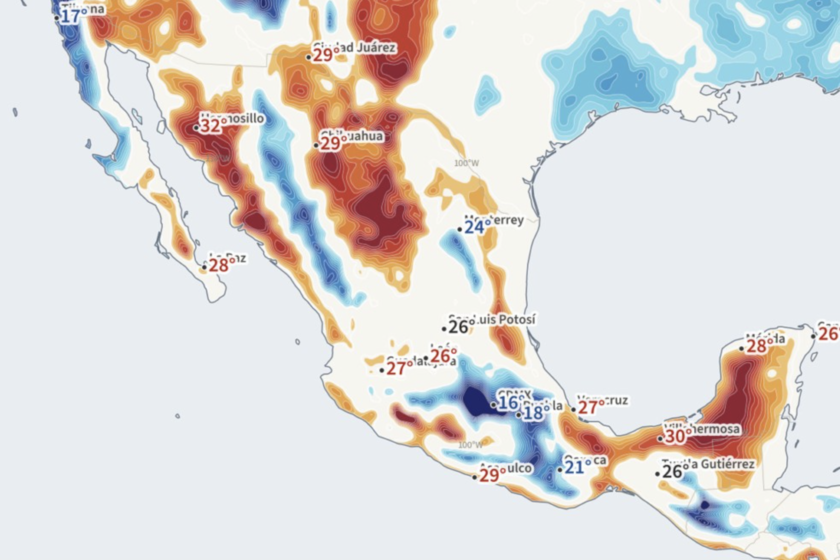

At the core of ATLAS is a cross-lingual transfer matrix that measures how training on one language affects performance in another. This analysis shows that positive transfer is strongly correlated with shared scripts and language families. For example, Scandinavian languages exhibit mutual benefits, while Malay and Indonesian form a high-transfer pair. English, French, and Spanish emerge as broadly helpful source languages, likely due to data scale and diversity, though transfer effects are not symmetric.

Source: Google DeepMind Blog

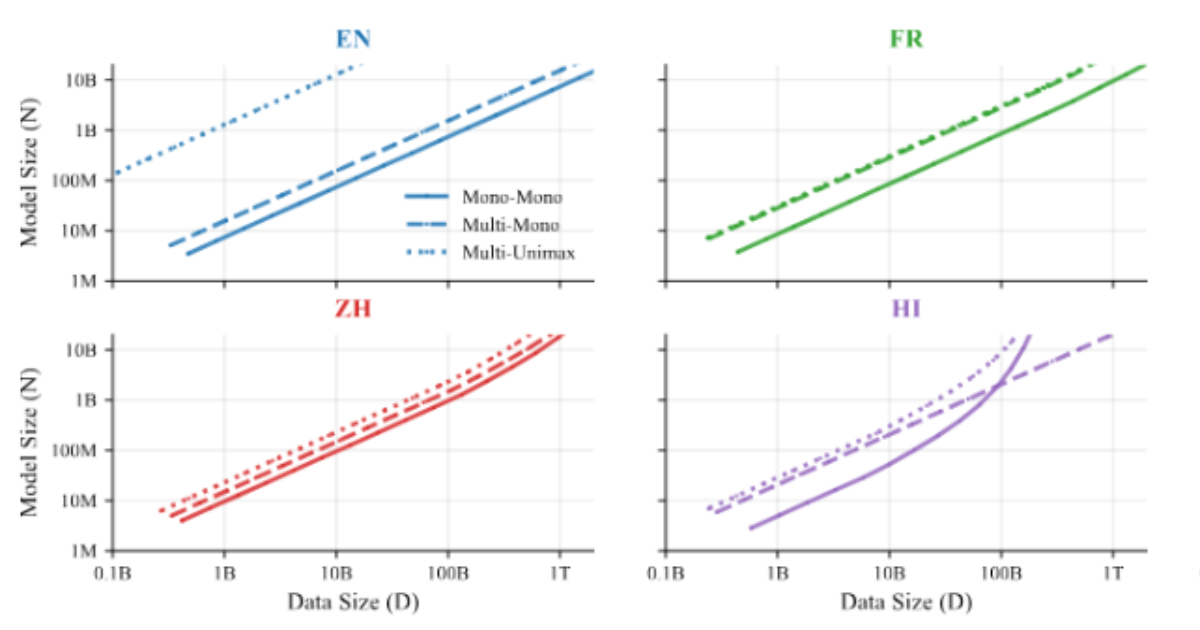

ATLAS extends scaling laws by explicitly modeling the number of training languages alongside model size and data volume. It quantifies the “curse of multilinguality,” where per-language performance declines as more languages are added to a fixed-capacity model. Empirical results show that doubling the number of languages while maintaining performance requires increasing model size by roughly 1.18× and total training data by 1.66×, with positive cross-lingual transfer partially offsetting the reduced data per language.
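To make the doubling figures concrete, the short Python sketch below applies them to an arbitrary change in language count. It assumes the reported multipliers compound as a power law across repeated doublings, which is an extrapolation and not something the article states. The function name `scale_for_languages` is hypothetical.

```python
import math

# Multipliers reported in the article for doubling the number of languages
# while holding per-language performance constant.
MODEL_SIZE_PER_DOUBLING = 1.18
DATA_PER_DOUBLING = 1.66


def scale_for_languages(k_old: int, k_new: int) -> tuple[float, float, float]:
    """Return (model_factor, total_data_factor, per_language_data_factor).

    Assumption (not from the article): the per-doubling multipliers
    compound geometrically, so going from k_old to k_new languages costs
    multiplier ** log2(k_new / k_old).
    """
    ratio = k_new / k_old
    doublings = math.log2(ratio)
    model_factor = MODEL_SIZE_PER_DOUBLING ** doublings
    data_factor = DATA_PER_DOUBLING ** doublings
    # Total data grows more slowly than the language count, so each
    # language ends up with a smaller share than before.
    per_language_data_factor = data_factor / ratio
    return model_factor, data_factor, per_language_data_factor


if __name__ == "__main__":
    model, data, per_lang = scale_for_languages(10, 20)
    print(f"model x{model:.2f}, total data x{data:.2f}, per-language data x{per_lang:.2f}")
```

For a single doubling this reproduces the reported 1.18× and 1.66×, and shows that each language then receives about 0.83× the data it had before. That shortfall is the gap that positive cross-lingual transfer partially offsets.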

The study also examines when it is more effective to pre-train a multilingual model from scratch than to fine-tune an existing multilingual checkpoint. Results show that fine-tuning is more compute-efficient at lower token budgets, while pre-training becomes advantageous once training data and compute exceed a language-dependent threshold. For 2B-parameter models, this crossover typically occurs between about 144B and 283B tokens, providing a practical guideline for selecting an approach based on available resources.
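As a rough illustration of how that guideline might be applied, the sketch below maps a token budget to a suggested approach. It encodes only the 2B-parameter crossover range quoted above. The function name `suggest_strategy` and the three-way outcome are assumptions made for the example, not part of ATLAS.

```python
# Crossover range reported in the article for 2B-parameter models, in tokens.
# Other model sizes would need their own thresholds.
CROSSOVER_LOW_TOKENS = 144e9
CROSSOVER_HIGH_TOKENS = 283e9


def suggest_strategy(token_budget: float) -> str:
    """Suggest a training approach for a 2B-parameter model.

    Hypothetical helper: below the reported range fine-tuning is favoured,
    above it pre-training is favoured, and inside it the answer depends on
    the target language.
    """
    if token_budget < CROSSOVER_LOW_TOKENS:
        return "fine-tune"
    if token_budget > CROSSOVER_HIGH_TOKENS:
        return "pre-train"
    return "language-dependent"


if __name__ == "__main__":
    for budget in (50e9, 200e9, 500e9):
        print(f"{budget / 1e9:.0f}B tokens -> {suggest_strategy(budget)}")
```

Budgets that fall inside the range are left undecided on purpose, since the article describes the threshold as language-dependent.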

The release has started a discussion about alternative model architectures. One X user commented:

> Rather than an enormous model that is trained on redundant data from every language, how large would a purely translation model need to be, and how much smaller would it make the base model?

While ATLAS does not directly answer this question, its transfer measurements and scaling rules offer a quantitative foundation for exploring modular or specialized multilingual designs.