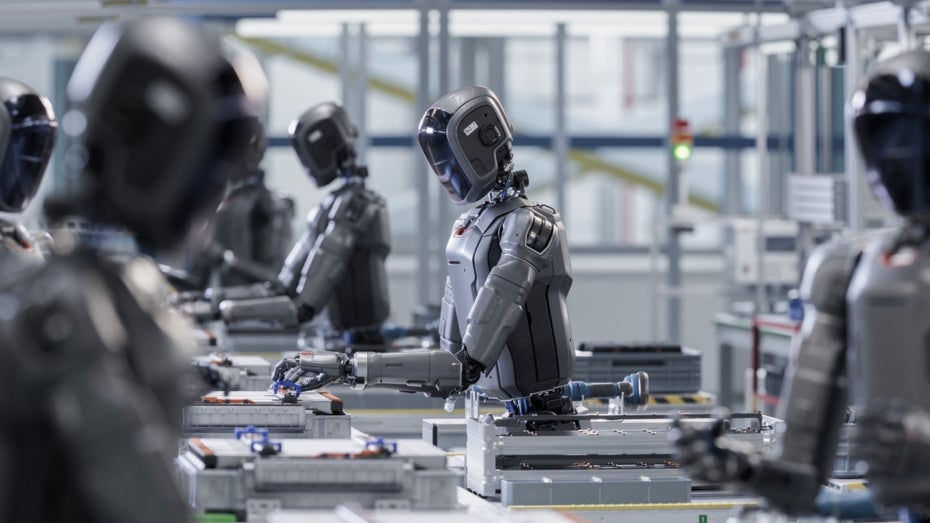

In a separate announcement, Meta CTO Andrew Bosworth said the company is expanding its internal data collection as part of its broader AI for Work initiative. Meta's goal is a future in which AI agents do the primary work, while employees guide and review them and help them improve.

Analysts see these risks

Meta's initiative is likely to intensify the debate over employee monitoring and data protection. It could also offer a first glimpse of how far large tech companies might go to build systems that automate knowledge work. "Meta's move signals a shift from automating individual tasks to replicating complete human workflows, based on the actual behavior of the workforce," says Pareekh Jain, CEO of Pareekh Consulting.

Sanchit Vir Gogia, chief analyst at Greyhound Research, considers this change significant because Meta is no longer merely documenting processes in order to automate them. For enterprise IT leaders, this type of monitoring poses a new category of risk, because what is being collected is no longer just business data but also behavioral data, the analyst notes: "The data protection and compliance risks are significant, especially with regard to European labor law and the GDPR. Under these rules, keystrokes and screen activity cannot simply be recorded. Because the training datasets generated this way contain login data, IP addresses and possibly sensitive workflows, they are also attractive targets for attackers, which further increases the security risks." (fm)