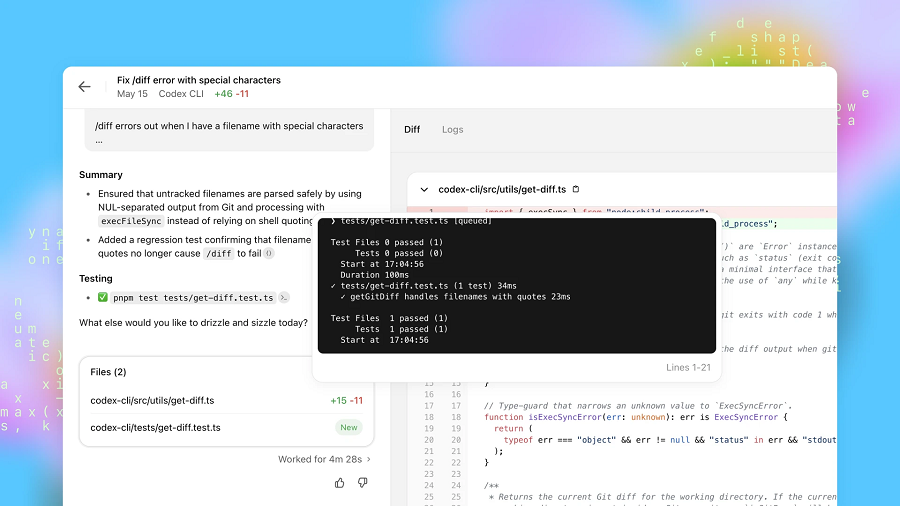

OpenAI Group PBC today debuted Codex Security, a new tool in its Codex programming assistant that can help developers find and fix code vulnerabilities.

The launch comes two weeks after Anthropic PBC introduced a competing product. Claude Code Security can analyze an application’s code base, identify vulnerabilities and suggest fixes. Codex Security works in a similar manner.

Developers can activate OpenAI’s new tool by giving it access to the code repository they wish to scan. According to the ChatGPT developer, Codex Security creates a temporary copy of the repository in an isolated container. It then studies the code files in a process that can take up to several days.

Codex Security’s analysis produces a document that OpenAI calls a threat model. It’s a lengthy natural-language description of how a program works and where it may be vulnerable. An application’s threat model includes, among other details, information on interface elements that enable end-users to upload data. Such modules are particularly susceptible to cyberattacks.

Developers can customize the threat model if necessary. A user could, for example, add in more details about a particularly sensitive application component that Codex Security should prioritize. The tool uses the threat model to guide its vulnerability scans.

The tool tests the flaws that it finds in a sandbox to determine whether hackers could actually exploit them. After filtering out false positives, it ranks the remaining vulnerabilities by severity. For good measure, it also saves logs about the flaws that didn’t pass the sandbox test. Developers can review those logs to find real vulnerabilities that may have been mistakenly tagged as false positives.

Codex Security generates a remediation suggestion for each vulnerability that it finds. The recommendation comprises the code needed to fix the issue and a natural-language explanation. After reviewing the suggested code, developers can push it to production by clicking a button.

The new tool started out as an internal project called Aardvark that OpenAI used to analyze its own code. Last year, the company launched a beta program that made the tool available to a limited number of customers. OpenAI says that the beta program helped it cut Codex Security’s false positives by more than 50%.

The tool helped early adopters detect more than 11,000 critical and high-severity vulnerabilities. Additionally, OpenAI used it to scan a number of popular open-source tools that power its workloads. The company found 14 vulnerabilities that were severe enough to be included in the CVE database.

Codex Security is available as a research preview in the Enterprise, Business and Edu tiers of ChatGPT. Additionally, OpenAI has launched a program that will enable open-source project maintainers to access the tool at no charge.

Image: OpenAI


About News Media

Founded by tech visionaries John Furrier and Dave Vellante, News Media has built a dynamic ecosystem of industry-leading digital media brands that reach 15+ million elite tech professionals. Our new proprietary theCUBE AI Video Cloud is breaking ground in audience interaction, leveraging theCUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.