In the era of AI-assisted development, the way we interact with code is fundamentally changing. More teams are building copilots, documentation assistants, or internal LLM agents that help developers answer questions like:

“Where is this function used?”

“How does this service handle errors?”

“Which file defines this configuration?”

But for AI to answer these questions accurately and quickly, it needs access to the right context, organized in the right structure. That’s where codebase indexing comes in.

Why Indexing Your Codebase Matters

LLMs (large language models) are powerful, but they don’t know your codebase. Fine-tuning on it is often impractical, and a fine-tuned model goes out of date as the code changes. RAG (Retrieval-Augmented Generation) is the more flexible and efficient solution: instead of having the model memorize your entire repo, it retrieves relevant context at query time.

But to retrieve the right snippets, you need to index your codebase.

Here’s why it matters:

- Context Is Everything

  LLMs have a limited context window (even GPT-4o), and dumping your whole repo into a prompt is inefficient and expensive. Indexing lets you chunk and structure your code so that only the most relevant pieces are retrieved when needed.

- RAG Relies on Semantic Search

  Traditional search tools match keywords, but AI agents need semantic search, which is based on meaning. That means your codebase needs to be embedded and stored in a vector index, so similar concepts can be found even if phrased differently.

- Code Changes. Your Index Should Too.

  Software is always evolving. A good index updates when your code changes. Otherwise, your LLM is stuck in the past. Real-time or incremental indexing avoids stale answers.
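To make the idea of semantic search concrete, here is a minimal sketch of ranking by cosine similarity. The three-dimensional vectors and chunk names are made up for illustration; a real embedding model produces vectors with hundreds of dimensions, and a vector database does this ranking for you.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, keyed by the chunk they represent.
index = {
    "retry_with_backoff": [0.9, 0.1, 0.0],
    "parse_config_file": [0.0, 0.2, 0.9],
    "handle_http_error": [0.8, 0.3, 0.1],
}


def rank(query_vector: list[float], top_k: int = 2) -> list[tuple[str, float]]:
    """Rank indexed chunks by similarity to the query vector, best first."""
    scored = [(name, cosine_similarity(query_vector, vec)) for name, vec in index.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:top_k]


# Pretend this vector is the embedding of "how are failures handled?"
results = rank([1.0, 0.2, 0.0])
for name, score in results:
    print(f"{name}: {score:.3f}")
```

The query shares no keywords with either function name, yet the two error-related chunks rank first because their vectors point in a similar direction.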

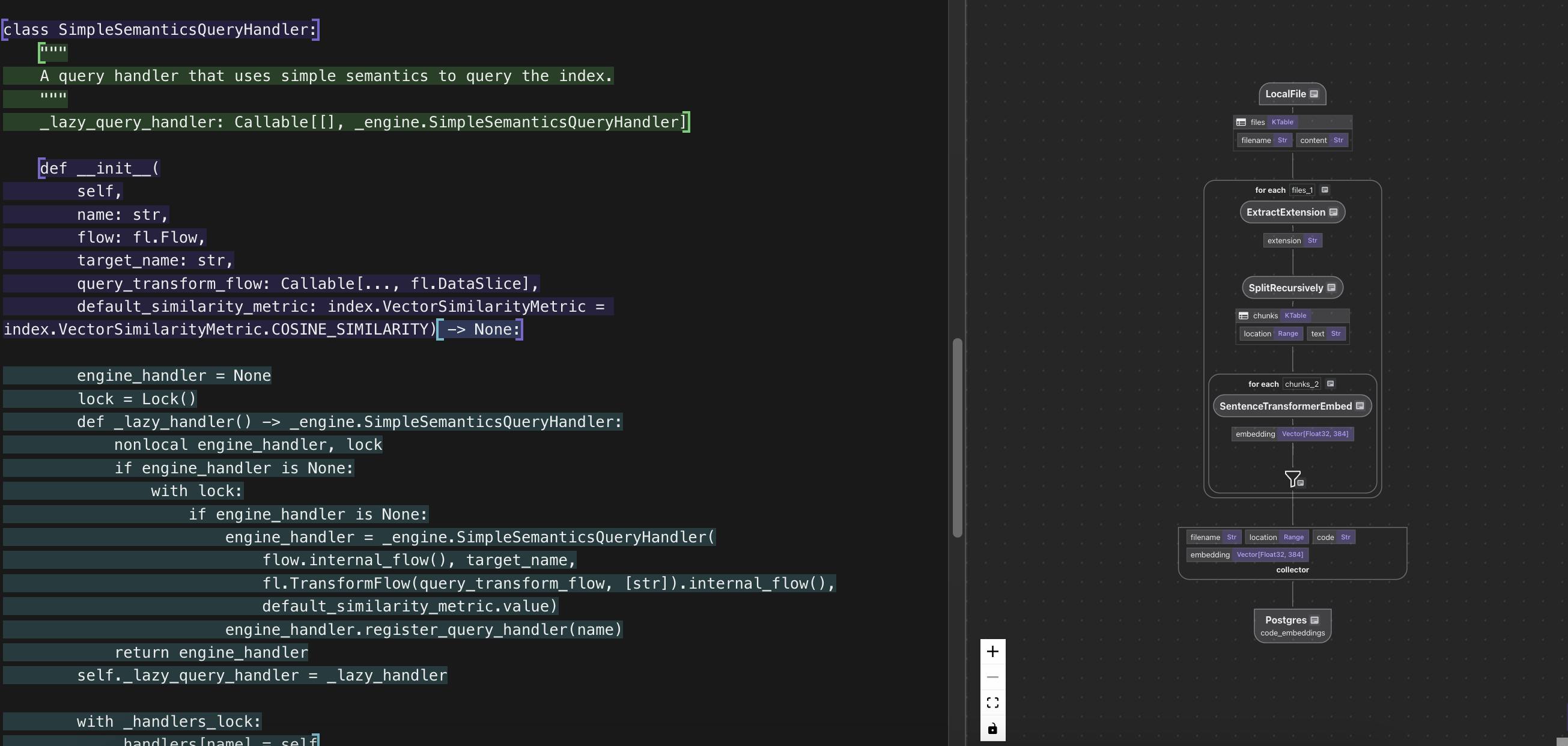

How to Index a Codebase for RAG

Here’s a simplified version of how this works with CocoIndex (https://github.com/cocoindex-io/cocoindex), an open source ETL framework for AI.

The indexing path takes only about 50 lines of Python. Check it out 🚀; we would appreciate a GitHub star.

🧩 1. Chunk Your Code Smartly

Don’t just split every file into fixed-size pieces. Use language-aware chunkers that understand function boundaries, class definitions, and comments.

CocoIndex uses Tree-sitter under the hood to chunk code based on its actual syntax structure rather than arbitrary line breaks. These syntactically coherent chunks make a more effective index for RAG systems, enabling more precise code retrieval and better context preservation.

Tree-sitter is a parser generator tool and an incremental parsing library; it is available in Rust 🦀.

In CocoIndex, the SplitRecursively function splits each file into chunks. It is integrated with Tree-sitter, so you can pass the language name or file extension through the language parameter. The documentation lists all supported language names and extensions; all the major languages are supported, e.g., Python, Rust, JavaScript, TypeScript, Java, and C++. If the language is unspecified or not supported, the file is treated as plain text.

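```python
# Assumes file["extension"] was set earlier in the flow
# (for example, derived from the filename).
with data_scope["files"].row() as file:
    file["chunks"] = file["content"].transform(
        cocoindex.functions.SplitRecursively(),
        language=file["extension"], chunk_size=1000, chunk_overlap=300)
```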
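To illustrate what "chunking on syntax boundaries" means, here is a small standalone sketch. It is not how CocoIndex or Tree-sitter works internally: it uses Python's built-in `ast` module as a stand-in parser, handles Python source only, and the `chunk_python_source` helper is a hypothetical name chosen for this example.

```python
import ast


def chunk_python_source(source: str) -> list[dict]:
    """Split Python source into one chunk per top-level function or class."""
    tree = ast.parse(source)
    lines = source.splitlines()
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            # lineno is 1-based and end_lineno is inclusive (Python 3.8+).
            text = "\n".join(lines[node.lineno - 1 : node.end_lineno])
            chunks.append({"name": node.name, "start_line": node.lineno, "text": text})
    return chunks


sample = '''import os

def load(path):
    with open(path) as f:
        return f.read()

class Cache:
    def get(self, key):
        return None
'''

chunks = chunk_python_source(sample)
for chunk in chunks:
    print(chunk["name"], "starts at line", chunk["start_line"])
```

Each chunk is a complete definition, so a retrieved snippet never starts or ends halfway through a function. This sketch drops module-level statements such as imports; a production chunker would keep them and also split definitions that exceed the chunk size.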

🧠 2. Embed Your Code

Each chunk is turned into a vector using an embedding model (such as OpenAI’s text-embedding-3-small, Cohere’s embedding models, or open-source models).

These vectors represent the “meaning” of the code and are stored in a vector database.

```python
import cocoindex


@cocoindex.transform_flow()
def code_to_embedding(text: cocoindex.DataSlice[str]) -> cocoindex.DataSlice[list[float]]:
    """
    Embed the text using a SentenceTransformer model.
    """
    return text.transform(
        cocoindex.functions.SentenceTransformerEmbed(
            model="sentence-transformers/all-MiniLM-L6-v2"))
```

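🔍 3. Query the Index

At query time, embed the query with the same transform flow used for indexing, then rank the stored chunks by cosine distance (the `<=>` operator in pgvector).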

```python
from psycopg_pool import ConnectionPool


def search(pool: ConnectionPool, query: str, top_k: int = 5):
    # Get the table name, for the export target in the code_embedding_flow above.
    table_name = cocoindex.utils.get_target_storage_default_name(code_embedding_flow, "code_embeddings")
    # Evaluate the transform flow defined above with the input query, to get the embedding.
    query_vector = code_to_embedding.eval(query)
    # Run the query and get the results.
    with pool.connection() as conn:
        with conn.cursor() as cur:
            cur.execute(f"""
                SELECT filename, code, embedding <=> %s::vector AS distance
                FROM {table_name} ORDER BY distance LIMIT %s
            """, (query_vector, top_k))
            # Cosine distance is 1 - cosine similarity, so convert it back to a score.
            return [
                {"filename": row[0], "code": row[1], "score": 1.0 - row[2]}
                for row in cur.fetchall()
            ]
```
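Once the search returns ranked chunks, they still have to fit in the model's context window. The sketch below shows one simple way to do that, assuming results shaped like the dictionaries returned by `search` (`filename`, `code`, `score`). The `build_context` helper and the character budget are illustrative assumptions; a character count is only a rough proxy for tokens.

```python
def build_context(results: list[dict], max_chars: int = 4000) -> str:
    """Concatenate the highest-scoring chunks without exceeding max_chars."""
    parts = []
    used = 0
    for result in sorted(results, key=lambda r: r["score"], reverse=True):
        block = f"# File: {result['filename']}\n{result['code']}\n\n"
        if used + len(block) > max_chars:
            continue  # Skip chunks that do not fit; a smaller one may still fit.
        parts.append(block)
        used += len(block)
    return "".join(parts)


# Hypothetical search results for illustration.
results = [
    {"filename": "a.py", "code": "def a(): pass", "score": 0.4},
    {"filename": "b.py", "code": "def b(): pass", "score": 0.9},
    {"filename": "c.py", "code": "x" * 100, "score": 0.7},
]

context = build_context(results, max_chars=80)
print(context)
```

Here the chunk from `c.py` is skipped because it alone would exceed the budget, while the two smaller chunks are included in score order.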

🔄 Lastly, Keep the Index Fresh

If someone renames a function, deletes a module, or adds a new endpoint, your index should reflect that. CocoIndex supports live syncing with GitHub or local folders, automatically re-indexing changed files.
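The general idea behind incremental re-indexing can be sketched with content hashes: store a hash per file, then compare against the current files to decide what to re-embed or remove. This is a generic illustration, not CocoIndex's implementation; `content_hash` and `plan_reindex` are hypothetical helpers.

```python
import hashlib


def content_hash(text: str) -> str:
    """Return a stable fingerprint of a file's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(previous: dict[str, str], current_files: dict[str, str]) -> dict[str, list[str]]:
    """Compare stored hashes (path -> hash) with current contents (path -> text)."""
    current = {path: content_hash(text) for path, text in current_files.items()}
    return {
        "added": sorted(p for p in current if p not in previous),
        "changed": sorted(p for p in current if p in previous and current[p] != previous[p]),
        "deleted": sorted(p for p in previous if p not in current),
    }


# Hashes recorded during the last indexing run.
previous = {
    "api.py": content_hash("def get(): ..."),
    "old.py": content_hash("x = 1"),
    "util.py": content_hash("def helper(): ..."),
}

# What the repository looks like now.
current_files = {
    "api.py": "def get(): ...",
    "util.py": "def helper_v2(): ...",
    "new.py": "y = 2",
}

plan = plan_reindex(previous, current_files)
print(plan)
```

Only `util.py` and `new.py` need to be chunked and embedded again, and the entries for `old.py` can be dropped from the index; `api.py` is untouched.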

Real-World Use Cases

- Internal AI agents that answer developer questions using your codebase

- Onboarding tools that help new engineers understand systems faster

- Code review assistants that check for past patterns or anti-patterns

- Documentation generation that pulls relevant code snippets automatically

Indexing Turns Code Into Knowledge

Your codebase is full of implicit knowledge: decisions, patterns, structures. Indexing makes that knowledge accessible to AI. Without it, your LLM is blind. With it, you unlock copilots that are actually helpful.

If you’re building internal agents, AI developer tools, or even just want smarter docs, start with indexing.

Explore how CocoIndex helps you do it with just a few lines of Python, real-time updates, smart language-aware chunking, and Rust performance.

Want help indexing your codebase or building an AI agent on top of it? Reach out; we’d love to hear what you’re working on. For more detail, check out the step-by-step guide here.

We’d appreciate a GitHub star at https://github.com/cocoindex-io/cocoindex!