TABLE OF LINKS

Abstract

1 Introduction

2 Related Work

3 Problem Formulation

4 Measuring Catastrophic Forgetting

5 Experimental Setup

6 Results

7 Discussion

8 Conclusion

9 Future Work and References

7 Discussion

The results in Section 6 support several conclusions. First and foremost, because we observed a number of differences between the optimizers over a variety of metrics and in a variety of testbeds, we can safely conclude that the choice of modern gradient-based optimization algorithm used to train an ANN has a meaningful and large effect on catastrophic forgetting. Because we explored the most prominent of these algorithms, this effect is likely impacting a large amount of contemporary work in the area.

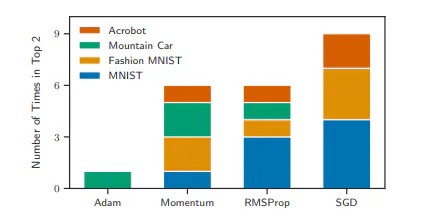

We earlier postulated that, because Adam can be viewed as a combination of SGD with Momentum and RMSProp, if either of the two mechanisms exacerbated catastrophic forgetting, then this would carry over to Adam. Thus, it makes sense to look at how often each of the four optimizers was either the best or second-best under a given metric and testbed. This strategy for interpreting the above results is supported by the fact that, in many of our experiments, the four optimizers could be divided naturally into one pair that did well and one pair that did poorly. The results of this process are shown in Figure 6. Figure 6 shows clearly that Adam was particularly vulnerable to catastrophic forgetting and that SGD outperformed the other optimizers overall. These results suggest that Adam should generally be avoided, and ideally replaced by SGD, when dealing with a problem where catastrophic forgetting is likely to occur. We provide the exact rankings of the algorithms under each metric and testbed in Appendix D.

When looking at SGD with Momentum as a function of momentum and RMSProp as a function of the coefficient of the moving average, we saw evidence that these hyperparameters have a pronounced effect on the amount of catastrophic forgetting.
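The best-or-second-best tally used to interpret the rankings can be sketched as follows. This is a minimal illustration and not the authors' code: the function name `top_k_counts`, the testbed labels, and the optimizer labels are hypothetical, and the rankings are placeholders rather than the results reported in Appendix D.

```python
from collections import Counter

# Hypothetical rankings (best first), keyed by (testbed, metric).
# Placeholder values for illustration only; NOT the paper's results.
rankings = {
    ("testbed_1", "retention"): ["optimizer_a", "optimizer_b", "optimizer_c", "optimizer_d"],
    ("testbed_1", "relearning"): ["optimizer_b", "optimizer_a", "optimizer_d", "optimizer_c"],
    ("testbed_2", "retention"): ["optimizer_a", "optimizer_c", "optimizer_b", "optimizer_d"],
}


def top_k_counts(rankings, k=2):
    """Count how often each optimizer places in the top k of a ranking."""
    counts = Counter()
    for order in rankings.values():
        # Only the first k entries (best and second-best for k=2) are counted.
        counts.update(order[:k])
    return counts


counts = top_k_counts(rankings)
for name, n in counts.most_common():
    print(f"{name}: top-2 in {n} of {len(rankings)} settings")
```

Counting top-two placements rather than averaging ranks matches the observation that the optimizers often split into one pair that did well and one pair that did poorly.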

Since the differences observed between vanilla SGD and SGD with Momentum can be attributed to the mechanism controlled by the momentum hyperparameter, and since the differences between vanilla SGD and RMSProp can similarly be attributed to the mechanism controlled by the moving average coefficient hyperparameter, this is not surprising. However, as with what we observed with α, the relationship between the hyperparameters and the amount of catastrophic forgetting was generally smooth: similar values of the hyperparameter produced similar amounts of catastrophic forgetting. Furthermore, the choice of optimizer seemed to have a more substantial effect here. For example, the best retention and relearning scores we observed for SGD with Momentum were still only roughly as good as the worst such scores for RMSProp.

Thus, while these hyperparameters have a clear effect on the amount of catastrophic forgetting, it seems unlikely that a large difference in catastrophic forgetting can be easily attributed to a small difference in these hyperparameters.

One metric that we explored was activation overlap. While French (1991) argued that more activation overlap is the cause of catastrophic forgetting and so can serve as a viable metric for it (p. 173), in the MNIST testbed, activation overlap seemed to be in opposition to the well-established retention and relearning metrics. The activation overlap results suggested that, while Adam suffers a lot from catastrophic forgetting, so too does RMSProp. Together, this suggests that catastrophic forgetting cannot be a consequence of activation overlap alone. Further studies must be conducted to understand why the unique representation learned by RMSProp here leads to it performing well on the retention and relearning metrics despite having a greater representational overlap.

On the consistency of the results, the variety of rankings we observed in Section 6 validates previous concerns regarding the challenge of measuring catastrophic forgetting. Between testbeds, as well as between different metrics in a single testbed, vastly different rankings were produced.

While each testbed and metric was meaningful and thoughtfully selected, little agreement appeared between them. Thus, we can conclude that, as we hypothesized, catastrophic forgetting is a subtle phenomenon that cannot be characterized by only a limited set of metrics or problems.

When looking at the different metrics, the disagreement between retention and relearning is perhaps the most concerning. Both are derived from principled, crucial metrics for forgetting in psychology. As such, when using many metrics is not feasible, we recommend ensuring that at least retention and relearning-based metrics are present. If these metrics are not available due to the nature of the testbed, we recommend using pairwise interference, as it tended to agree more closely with retention and relearning than activation overlap did. That being said, more work should be conducted to validate these recommendations.

:::info

Authors:

- Dylan R. Ashley

- Sina Ghiassian

- Richard S. Sutton

:::

:::info

This paper is available on arXiv under the CC BY 4.0 Deed (Attribution 4.0 International) license.

:::