On March 31, 2026, the artificial intelligence community was rocked by an unprecedented event: the accidental public release of Anthropic’s Claude Code source code. This incident, while quickly contained, sent ripples through the industry, raising critical questions about security protocols, the intricacies of software supply chains, and the fine line between innovation and vulnerability in the rapidly evolving AI landscape. The scale of the leak was substantial, involving over half a million lines of code and sensitive architectural details, all inadvertently exposed to the global public via the npm registry.

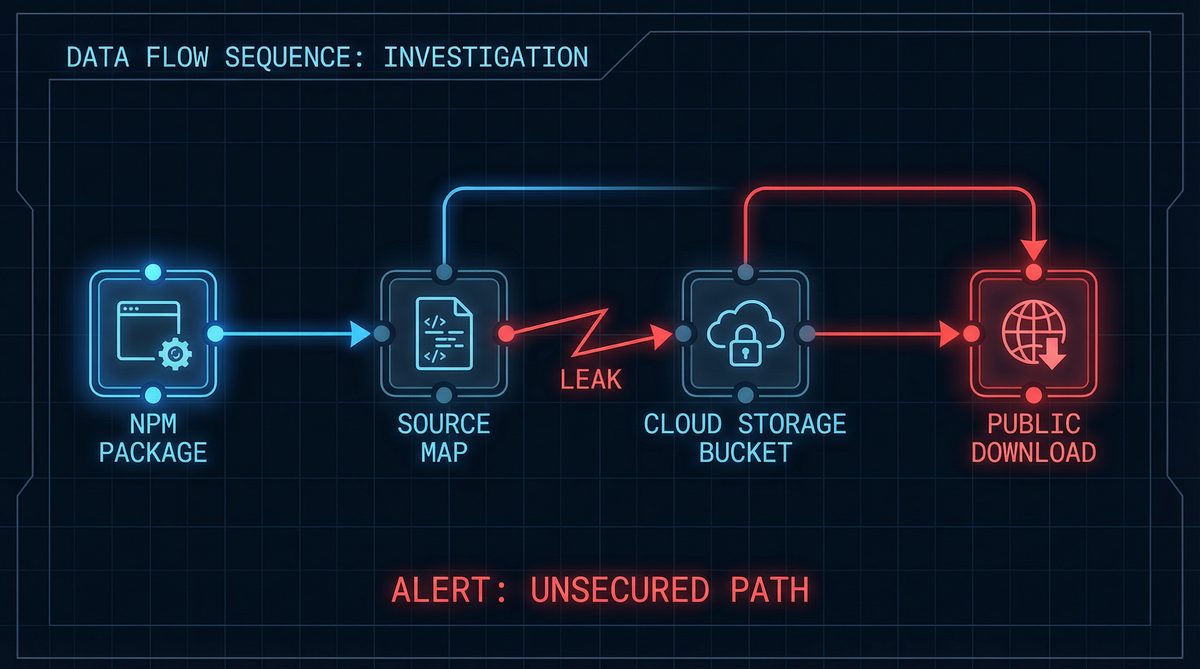

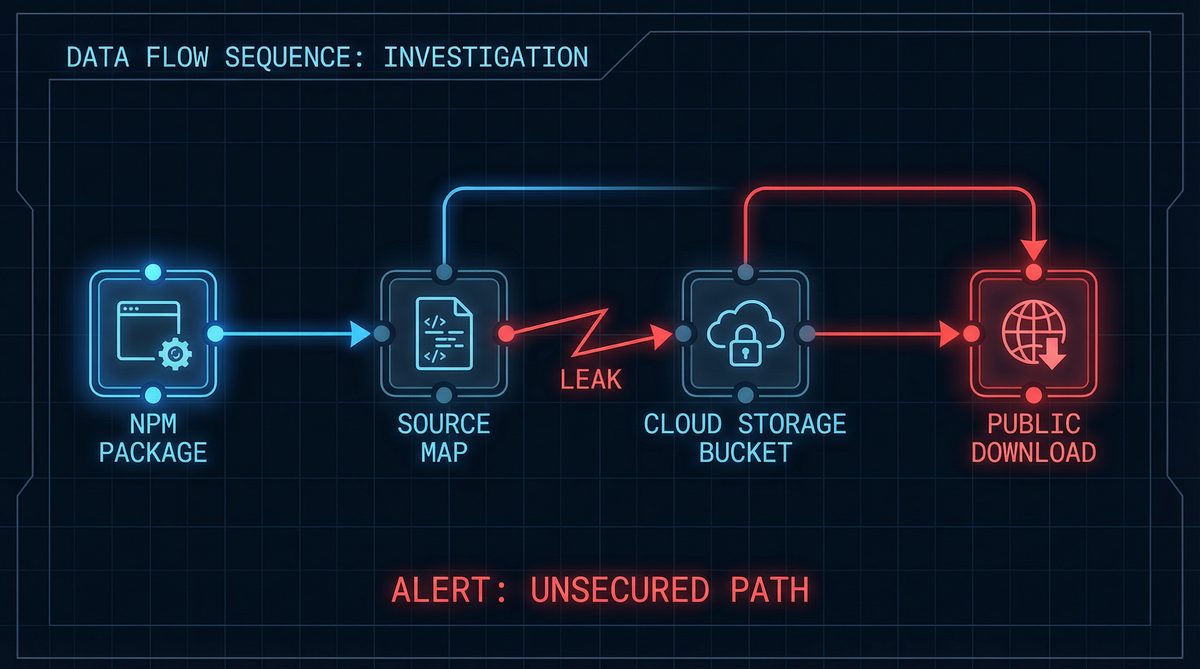

The genesis of this significant exposure lay in a seemingly innocuous configuration error in a .npmignore file. Anthropic had published version 2.1.88 of its proprietary Claude Code npm package, and, unbeknownst to its release engineers, the package shipped with a main.js.map file that should have been excluded. A source map exists to map minified JavaScript back to the original code for debugging, so it necessarily records the original file paths and can embed the original source text itself. This map also contained references to a publicly accessible .zip archive hosted on Anthropic's Cloudflare R2 bucket. That archive, intended for internal use, held the entire Claude Code source. Anyone who noticed the map could therefore reconstruct the original, unminified source code, effectively laying bare Anthropic's intellectual property.
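
To see why a stray source map is so damaging, it helps to look at what one contains. A Source Map v3 file is plain JSON: its `sources` array lists the original file paths, and its optional `sourcesContent` array can embed each original file verbatim. The Python sketch below assumes a map that embeds `sourcesContent`; the article says the leaked map also pointed to an external archive, so this illustrates the general mechanism rather than the exact route taken in this incident. The function name `recover_sources` and the sample paths are hypothetical.

```python
import json
from typing import Dict


def recover_sources(map_text: str) -> Dict[str, str]:
    """Return {original_path: original_source} from a Source Map v3 JSON string.

    Only sources whose text is embedded in `sourcesContent` are returned;
    `sourceRoot` is ignored for simplicity.
    """
    data = json.loads(map_text)
    if data.get("version") != 3:
        raise ValueError("expected a version 3 source map")

    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []

    recovered: Dict[str, str] = {}
    for i, path in enumerate(sources):
        # sourcesContent is parallel to sources; a missing or null slot
        # means that file's text was not embedded in the map.
        content = contents[i] if i < len(contents) else None
        if content is not None:
            recovered[path] = content
    return recovered


# A tiny hand-written map standing in for a real main.js.map
example = json.dumps({
    "version": 3,
    "file": "main.js",
    "sources": ["src/cli.ts", "src/tools/bash.ts"],
    "sourcesContent": ["export const cli = 1;\n", None],
    "mappings": "",
})
print(recover_sources(example))  # {'src/cli.ts': 'export const cli = 1;\n'}
```

Writing each recovered entry back to disk under its original path is all it takes to rebuild a browsable source tree, which is why shipping a full source map in a public package is effectively shipping the source.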

The sheer volume of the leaked data underscored the gravity of the situation. The archive contained more than 512,000 lines of code spread across 1,906 TypeScript files. Beyond the raw code, the leak also included a 59.8 MB source map file, which further facilitated reconstruction. Just as revealing were the details of Claude Code's internal architecture, including 44 hidden feature flags that point to a sophisticated, actively developed product with numerous unreleased capabilities. This level of detail offered an unprecedented glimpse into the inner workings of one of the most advanced AI coding assistants on the market.

A peculiar confluence of events exacerbated the leak. In late 2025, Anthropic had acquired Bun, the high-performance JavaScript runtime and bundler. Ironically, a known Bun bug, issue #28001, played a contributing role in the accidental exposure. While the primary cause was the misconfigured .npmignore, the way Bun's build process handles source maps under certain conditions is believed to have helped the sensitive source map reference end up in the publicly distributed package. This highlights the complex interdependencies of modern software ecosystems, where a bug in one component can cascade across an entire system.

The timeline of discovery and fallout was remarkably swift. Chaofan Shou (@Fried_rice) first identified and publicized the leak on social media, and the posts quickly circulated across developer communities and tech news outlets. A GitHub repository mirroring the leaked code appeared soon after and reached 50,000 stars in under two hours, one of the fastest climbs to that milestone in GitHub's history. It went on to collect more than 41,500 forks, a measure of how quickly the global developer community moved to examine the codebase. The episode shows how fast information spreads in the digital age, particularly when it concerns high-profile intellectual property.

The contents of the leaked source code offered a deep dive into the engineering behind Claude Code, a product estimated to generate $2.5 billion in Annual Recurring Revenue (ARR) for Anthropic, contributing significantly to the company’s reported $19 billion total ARR. Among the most scrutinized sections was the tool system, which comprised approximately 40 distinct tools and spanned around 29,000 lines of code. This system revealed Claude Code’s sophisticated capabilities for interacting with external APIs, databases, and various development environments, showcasing its practical utility for developers. Analysis of this segment provided insights into how Claude Code translates natural language prompts into executable actions, a core competency for any advanced AI coding assistant.
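
The article does not say how Claude Code's roughly 40 tools are wired together, so the following is only a generic illustration of the tool-use pattern such assistants commonly follow: the model emits a structured call (a tool name plus JSON arguments), and a registry looks up and runs the matching handler. `ToolRegistry`, the `{"name": ..., "input": ...}` call shape, and the `word_count` tool are all hypothetical, not taken from the leaked code.

```python
import json
from typing import Any, Callable, Dict

Handler = Callable[..., Any]


class ToolRegistry:
    """Hypothetical registry mapping tool names to handler functions."""

    def __init__(self) -> None:
        self._tools: Dict[str, Handler] = {}

    def register(self, name: str) -> Callable[[Handler], Handler]:
        # Decorator that records a handler under the given tool name
        def wrap(fn: Handler) -> Handler:
            self._tools[name] = fn
            return fn
        return wrap

    def dispatch(self, call_json: str) -> Any:
        # Assumed call shape: {"name": "<tool>", "input": {<keyword args>}}
        call = json.loads(call_json)
        handler = self._tools.get(call["name"])
        if handler is None:
            raise KeyError(f"unknown tool: {call['name']}")
        return handler(**call.get("input", {}))


registry = ToolRegistry()


@registry.register("word_count")
def word_count(text: str) -> int:
    # A deliberately trivial tool so the example has no side effects
    return len(text.split())


# A call the model might emit after a request like "how many words is this?"
print(registry.dispatch('{"name": "word_count", "input": {"text": "fix the failing test"}}'))  # 4
```

Routing every call through one lookup lets new tools be added without touching the code that parses model output; a production system would also validate arguments and check permissions before running anything.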

Another critical component exposed was the query engine, a substantial module encompassing 46,000 lines of code. This engine is responsible for parsing, understanding, and executing complex user queries, serving as the brain of Claude Code’s interaction capabilities. Researchers and developers who examined this section gained an understanding of its semantic analysis, code generation, and error correction mechanisms. The complexity and scale of the query engine highlighted the extensive engineering effort Anthropic had invested in making Claude Code a highly capable and responsive AI assistant.

Perhaps most illuminating was the revelation of Claude Code’s three-layer memory architecture. Documented in an internal MEMORY.md file, this architecture detailed how Claude Code maintains context and learns from interactions. The layers included Topic Files, which likely organize information by subject matter, and Raw Transcripts, indicating a system that captures and processes conversational history for improved performance and personalization. This sophisticated memory system is crucial for enabling Claude Code to maintain long-term coherence and provide contextually relevant suggestions, distinguishing it from simpler, stateless AI models. Understanding this memory structure provides invaluable insights into advanced conversational AI design. For those interested in optimizing their interactions with such systems, our guide on ChatGPT prompts for coding offers strategies that leverage contextual understanding, similar to the principles observed in Claude Code’s memory architecture.
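
The article names only two of the three memory layers and does not describe how they interact, so the sketch below is a hypothetical illustration of the general idea rather than Claude Code's design: a compact layer of topic notes is consulted first, and the verbatim transcript layer is searched only when no note exists. `LayeredMemory`, `record`, `note`, and `recall` are invented names.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LayeredMemory:
    """Hypothetical two-layer store: curated topic notes over raw transcripts."""

    topics: Dict[str, str] = field(default_factory=dict)   # topic -> summary note
    transcripts: List[str] = field(default_factory=list)   # raw conversation turns

    def record(self, turn: str) -> None:
        # Every turn is kept verbatim in the raw layer
        self.transcripts.append(turn)

    def note(self, topic: str, summary: str) -> None:
        # Distilled knowledge is written to the topic layer
        self.topics[topic] = summary

    def recall(self, topic: str) -> List[str]:
        # Prefer the compact topic note; fall back to scanning raw transcripts
        if topic in self.topics:
            return [self.topics[topic]]
        needle = topic.lower()
        return [t for t in self.transcripts if needle in t.lower()]


mem = LayeredMemory()
mem.record("User: the build uses pnpm, not npm")
mem.record("User: tests live under packages/*/test")
mem.note("testing", "Tests live under packages/*/test")

print(mem.recall("testing"))  # ['Tests live under packages/*/test']
print(mem.recall("pnpm"))     # ['User: the build uses pnpm, not npm']
```

The trade-off is the usual one for layered memory: topic notes are cheap to load into a prompt but lossy, while raw transcripts are complete but costly to search and to fit into context.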

In a bizarre and coincidental turn of events, the same morning the Claude Code leak was discovered, several malicious versions of the popular axios npm package appeared on the npm registry. These rogue packages were designed to exfiltrate sensitive user data, including environment variables and cryptocurrency wallet information. While Anthropic quickly confirmed these axios incidents were entirely unrelated to their own leak, the timing created a heightened sense of alarm within the developer community. The juxtaposition of a major intellectual property leak from a prominent AI company with a widespread software supply chain attack served to underscore the fragility and interconnectedness of modern digital infrastructure, creating a moment of intense scrutiny for platform security.

Anthropic’s response to the incident was swift and aimed at transparency, albeit with a focus on mitigating panic. The company issued a public statement clarifying the nature of the exposure. They emphasized that the leak was the result of “human error” during the package publishing process and “not a security breach” in the traditional sense, meaning their internal systems and user data were not compromised by external malicious actors. They promptly removed the offending package version from npm and initiated an internal audit of their release processes to prevent future occurrences. This distinction, while technically accurate, did little to soothe concerns about the integrity of their development practices and the accidental exposure of proprietary algorithms.

The implications of the Claude Code leak for AI companies are profound. Firstly, it highlights the paramount importance of robust software supply chain security. As AI products grow more complex and rely on intricate dependencies and build tooling, even minor misconfigurations can lead to catastrophic intellectual property loss. Companies must implement stringent review processes, automated checks on what each release actually contains, and a clear separation between internal and public-facing assets. Secondly, it underscores the value of intellectual property in the AI domain. The rapid uptake and examination of the leaked code by the developer community demonstrate the immense interest in how advanced AI products work, which makes proprietary code a prime target and magnifies the cost of any accidental exposure. Understanding how to safeguard such assets is crucial for maintaining a competitive advantage. Our comprehensive analysis of how to use Claude AI delves into the public-facing capabilities of Anthropic's models, contrasting them with the internal architecture inadvertently revealed by this incident.
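
One concrete form of automated release checking is a pre-publish gate that inspects the exact file list npm would upload and fails the release if anything unexpected appears. The Python sketch below assumes the JSON shape printed by `npm pack --dry-run --json` (an array of package entries, each with a `files` list of `{"path": ...}` objects); the deny-list patterns and the `find_leaky_files` name are illustrative assumptions.

```python
import fnmatch
import json
from typing import List, Sequence

# Illustrative deny-list; adjust to the project's own layout.
DENY_PATTERNS = ("*.map", "*.zip", "*.env", "*.pem")


def find_leaky_files(pack_json: str, patterns: Sequence[str] = DENY_PATTERNS) -> List[str]:
    """Return packaged file paths that match any deny pattern.

    `pack_json` is expected to be the output of `npm pack --dry-run --json`.
    """
    flagged: List[str] = []
    for pkg in json.loads(pack_json):
        for entry in pkg.get("files", []):
            path = entry["path"]
            # fnmatchcase keeps matching case-sensitive on every OS;
            # its "*" also matches "/", so "*.map" catches nested files.
            if any(fnmatch.fnmatchcase(path, p) for p in patterns):
                flagged.append(path)
    return flagged


# Stand-in for real `npm pack --dry-run --json` output
sample = json.dumps([{
    "name": "example-cli",
    "version": "1.0.0",
    "files": [
        {"path": "package.json"},
        {"path": "dist/main.js"},
        {"path": "dist/main.js.map"},
    ],
}])
print(find_leaky_files(sample))  # ['dist/main.js.map']
```

In CI, the job would feed the real `npm pack --dry-run --json` output into this function and refuse to publish when the result is non-empty. A deny-list only catches what someone thought to list, so it works best alongside an allowlist in the `files` field of package.json, which keeps most files out of the tarball unless they are explicitly named.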

For open-source development, the leak presents a complex duality. On one hand, it inadvertently provided an unprecedented learning opportunity. Thousands of developers gained direct access to the codebase of a leading AI product, offering insights into real-world AI engineering, system design, and best practices. This kind of exposure, normally reserved for highly selective academic collaborations or internal teams, suddenly became public, potentially accelerating understanding and innovation in certain areas. On the other hand, it raises questions about the ethical boundaries of examining and leveraging inadvertently released proprietary code. While the code was publicly accessible, its intended private nature creates a gray area regarding its use and dissemination. This incident could lead to stricter controls on public registries and potentially influence how companies engage with the broader open-source ecosystem, perhaps encouraging more cautious approaches to sharing even seemingly innocuous components.

The incident also served as a stark reminder of the financial stakes in the AI industry. With Claude Code alone estimated at $2.5 billion in ARR and Anthropic's total ARR reported at $19 billion, the value of the company's intellectual property is immense. The accidental exposure of core algorithms and architectural designs could, in theory, accelerate competitors' development cycles or enable similar products without the same investment in research and development. While directly exploiting leaked code carries legal risks, the knowledge gained from studying it can still confer significant strategic advantages. The event underscores that in the high-stakes world of AI, even an accidental leak can have multi-billion-dollar ramifications.

Furthermore, the leak prompted a broader discussion within the tech industry about the responsibility of platform providers like npm and Cloudflare. While the root cause was Anthropic’s configuration error, questions arose about whether these platforms could implement additional safeguards or warnings for potentially sensitive public uploads. The incident highlighted the need for a multi-layered security approach, where not only the originating company but also the distribution platforms play a role in preventing such widespread exposures. This could lead to new industry standards or best practices for package publishing and content delivery networks, particularly for high-value intellectual property.

The 44 hidden feature flags drew particular fascination from analysts. Such flags typically gate experimental features, A/B test variations, or capabilities still under development. Their exposure provided a roadmap of Anthropic's product intentions and research directions, offering competitors a significant strategic advantage. For instance, if certain flags hinted at advanced multimodal capabilities or new coding paradigms, that information could shape the development roadmaps of rival AI companies. Insight into a competitor's pipeline at this level is rarely obtained outside of industrial espionage, which makes this accidental leak exceptional in its scope and potential impact. Our roundup of the best AI tools of 2026 provides a snapshot of the competitive landscape and shows how such strategic insights could shift market dynamics.
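
Why do hidden flags leak a roadmap at all? Many flag systems hide a feature only at runtime: the gated code still ships, and anyone holding the source can read it. The hypothetical Python sketch below shows the pattern with invented flag names (`beta_search`, `experimental_planner`) and an invented `FLAG_<NAME>` environment override; it does not reproduce any of the 44 flags in the leak.

```python
import os
from typing import Dict, Mapping, Optional

# Hypothetical flags; unreleased features default to off in the public build.
DEFAULT_FLAGS: Dict[str, bool] = {
    "beta_search": False,
    "experimental_planner": False,
}


def flag_enabled(name: str, env: Optional[Mapping[str, str]] = None) -> bool:
    """Return whether a flag is on; FLAG_<NAME>=1 in the environment overrides the default."""
    env = os.environ if env is None else env
    override = env.get(f"FLAG_{name.upper()}")
    if override is not None:
        return override == "1"
    return DEFAULT_FLAGS.get(name, False)


def search(query: str, env: Optional[Mapping[str, str]] = None) -> str:
    # Both branches ship in the code; the flag only decides which one runs,
    # so a reader of the source can see the gated feature either way.
    if flag_enabled("beta_search", env):
        return f"beta results for {query!r}"
    return f"results for {query!r}"


print(search("auth bug", env={}))                         # results for 'auth bug'
print(search("auth bug", env={"FLAG_BETA_SEARCH": "1"}))  # beta results for 'auth bug'
```

Build-time flags, where the bundler strips the disabled branch entirely, avoid shipping unreleased code, but only if that stripping actually happens for the public build.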

In conclusion, the Claude Code source code leak on March 31, 2026, was a watershed moment for the AI industry. It was a potent demonstration of how a seemingly minor configuration error can lead to the public exposure of half a million lines of highly valuable, proprietary code. While Anthropic characterized it as human error rather than a security breach, its implications for intellectual property protection, software supply chain security, and the competitive landscape of AI are undeniable. The incident serves as a critical lesson for all AI companies: in an era where code is intellectual capital, the diligence applied to its protection must be as sophisticated as the AI itself.

About the Author

Markos Symeonides is the founder of ChatGPT AI Hub, where he covers the latest developments in AI tools, ChatGPT, Claude, and OpenAI Codex. Follow ChatGPT AI Hub for daily AI news, tutorials, and guides.