AMD’s Instinct MI350P for regular PCIe 5.0 slots is said to be primarily suited to agentic AI, i.e. AI agents that automatically assist their users and take over tasks. Besides extremely high AI computing power and high memory throughput, the card's GPU has a few other features up its sleeve, including hardware acceleration of current video codecs up to AV1 and partitioning into up to four virtual GPUs.

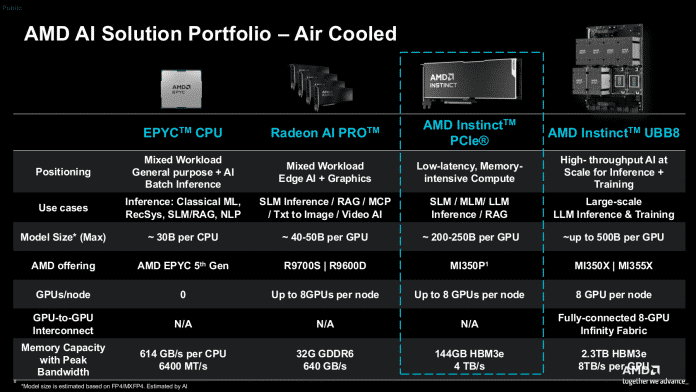

Although it could also run in ordinary PCs, AMD is targeting server systems, which the MI350P is supposed to help make AI-ready. The passive cooler of the roughly 26.7 cm long dual-slot card is designed for the strong airflow in rack servers. According to AMD, its 144 GB of stacked HBM3e memory should make it suitable for AI models with around 200 to 250 billion parameters. Workstation cards such as the Radeon AI Pro 9700 with only 32 GB hit their limit much earlier, at around 40 to 50 billion parameters.
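The model sizes quoted above imply a memory budget per parameter, which a few lines of arithmetic make visible. The following is a minimal sketch that uses only the figures from the article; the function name and the use of decimal gigabytes are assumptions made for illustration, and AMD does not state which number format its estimate is based on.

```python
def bytes_per_parameter(memory_gb: float, params_billion: float) -> float:
    """Memory available per model parameter, in bytes (decimal GB assumed)."""
    return (memory_gb * 1e9) / (params_billion * 1e9)


# (memory in GB, (smallest, largest) model size in billions of parameters),
# all figures taken from the article.
CARDS = {
    "Instinct MI350P": (144, (200, 250)),
    "Radeon AI Pro 9700": (32, (40, 50)),
}

for name, (memory_gb, (small, large)) in CARDS.items():
    loose = bytes_per_parameter(memory_gb, small)  # budget for the smaller model
    tight = bytes_per_parameter(memory_gb, large)  # budget for the larger model
    print(f"{name}: {tight:.2f} to {loose:.2f} bytes per parameter")
```

Both cards land at roughly 0.6 to 0.8 bytes per parameter, which is less than the 1 byte per weight that FP8 would need for the weights alone. The quoted model sizes therefore only fit with formats narrower than 8 bits.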

Halved sister

The MI350P shares its GPU with the Instinct MI350X/355X accelerators in the Open Accelerator Module (OAM) form factor, but only 128 compute units (CUs) are active in the MI350P, whereas 256 CUs are at work in the OAM models. AMD also halves the fast stacked HBM3e memory from 288 to 144 GB. AMD does not state this in writing, but the image of the card makes it obvious: the MI350P uses only one I/O die (IOD) with four compute dies (XCDs), so the GPU package is halved compared to its larger siblings.

The Instinct MI350P is intended to complement the OAM server boards and, for example, help existing rack servers make the leap to AI.

(Image: AMD)

Power consumption also drops significantly: the nominal TDP of 600 watts matches that of an Nvidia RTX Pro 6000 Blackwell or H200 NVL, with which the card is obviously intended to compete. For power delivery, AMD uses the controversial 12V-2×6 ATX connector. Alternatively, the card can be set to a 450-watt mode.

To serve multiple users at the same time, there are three partitioning modes: SPX, DPX and CPX. SPX corresponds to undivided operation; in DPX, two users share the resources (CUs, RAM, video and JPEG engines, L2 cache and DMA engines) equally; and in CPX, there are four users. In CPX mode, every two partitions compete for one video engine and one block of ten JPEG engines. They should still have enough headroom, though, because the entire chip can handle 99 AV1 streams (1080p30, 4:2:0) and 4425 JPEG images per second at 1080p.
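What the three modes mean per user can be sketched with simple division. This is an illustrative calculation, not AMD tooling: it assumes that compute units and memory are split evenly in every mode, as the article's resource list suggests, and the class and function names are hypothetical. The shared video and JPEG engines are left out because they do not divide evenly in CPX mode.

```python
from dataclasses import dataclass

# Card totals as given in the article.
TOTAL_CUS = 128
TOTAL_HBM_GB = 144

# Number of partitions per mode as described in the article.
PARTITIONS = {"SPX": 1, "DPX": 2, "CPX": 4}


@dataclass(frozen=True)
class PartitionShare:
    mode: str
    partitions: int
    cus: int
    hbm_gb: int


def share(mode: str) -> PartitionShare:
    """Per-partition resources, assuming an even split (an assumption)."""
    n = PARTITIONS[mode]
    return PartitionShare(mode, n, TOTAL_CUS // n, TOTAL_HBM_GB // n)


for mode in PARTITIONS:
    s = share(mode)
    print(f"{s.mode}: {s.partitions} x {s.cus} CUs, {s.hbm_gb} GB HBM3e each")
```

Under these assumptions, a CPX partition would have 32 CUs and 36 GB, which is slightly more memory than the 32 GB of the Radeon AI Pro 9700 mentioned earlier.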

High computing power

AMD did not share specific performance estimates in advance, but the theoretical computing power, calculated from the number of execution units and the clock frequency, is 2300 teraflops at FP8 precision (for dense matrices; with sparsity the value roughly doubles). MXFP4 doubles this rate to 4600 Tflops and, unlike on Nvidia’s hardware, so does MXFP6. The theoretical computing power is thus a little less than half that of an MI355X. On paper, Nvidia’s H200 NVL manages around 1670 Tflops with dense matrices (3340 Tflops with sparsity).

AMD also provides an estimate of the throughput actually achieved, which takes memory transfers and power limits into account. According to this, the Instinct MI350P reaches between 60 and 70 percent of its maximum throughput rates. The outlier is MXFP6 at 40 percent of the theoretical throughput, so the value increases by only a good third compared to (MX)FP8 instead of doubling.
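The "good third" can be checked against the peak figures quoted earlier. The sketch below assumes that FP8 sits somewhere in the 60 to 70 percent range and MXFP6 at exactly 40 percent; the per-format percentages are a reading of the article, not published AMD data, and the helper name is hypothetical.

```python
# Theoretical dense peak rates in Tflops, as quoted in the article.
PEAK_TFLOPS = {"FP8": 2300, "MXFP6": 4600, "MXFP4": 4600}


def sustained_tflops(peak_tflops: int, percent_of_peak: int) -> float:
    """Estimated achieved throughput for a given share of the peak rate."""
    return peak_tflops * percent_of_peak / 100


# Assumption: FP8 reaches 60 to 70 percent of peak, MXFP6 only 40 percent.
fp8_low = sustained_tflops(PEAK_TFLOPS["FP8"], 60)
fp8_high = sustained_tflops(PEAK_TFLOPS["FP8"], 70)
mxfp6 = sustained_tflops(PEAK_TFLOPS["MXFP6"], 40)

# Relative gain of MXFP6 over FP8 at both ends of the FP8 range.
gain_low = mxfp6 / fp8_low - 1
gain_high = mxfp6 / fp8_high - 1

print(f"FP8:   {fp8_low:.0f} to {fp8_high:.0f} Tflops")
print(f"MXFP6: {mxfp6:.0f} Tflops")
print(f"MXFP6 gain over FP8: {gain_high:.0%} to {gain_low:.0%}")
```

With these inputs, the gain of about one third only results if FP8 is at the lower end of its range, at 60 percent; at 70 percent, the advantage of MXFP6 shrinks to about one seventh.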

The theoretical and practical computing power of the Instinct MI350P differ significantly. The reasons include the available electrical power and the necessary memory and bus transfers.

(Image: AMD)

(csp)