AMD has presented the Ryzen AI Embedded P100 processors at the Embedded World 2026 conference, where Intel and other companies have also made announcements. Aimed at embedded systems, AI and edge development, the new chips offer up to twice as many CPU cores, up to eight times more GPU compute capacity and 36% more system TOPS (tera operations per second).

Factory automation, physical AI in mobile robotics and other AI-driven edge applications are evolving rapidly and driving the need for computing platforms that offer real-time AI processing, deterministic performance and long-term reliability in always-on environments, explains AMD in the presentation of its new chips.

Ryzen AI Embedded P100

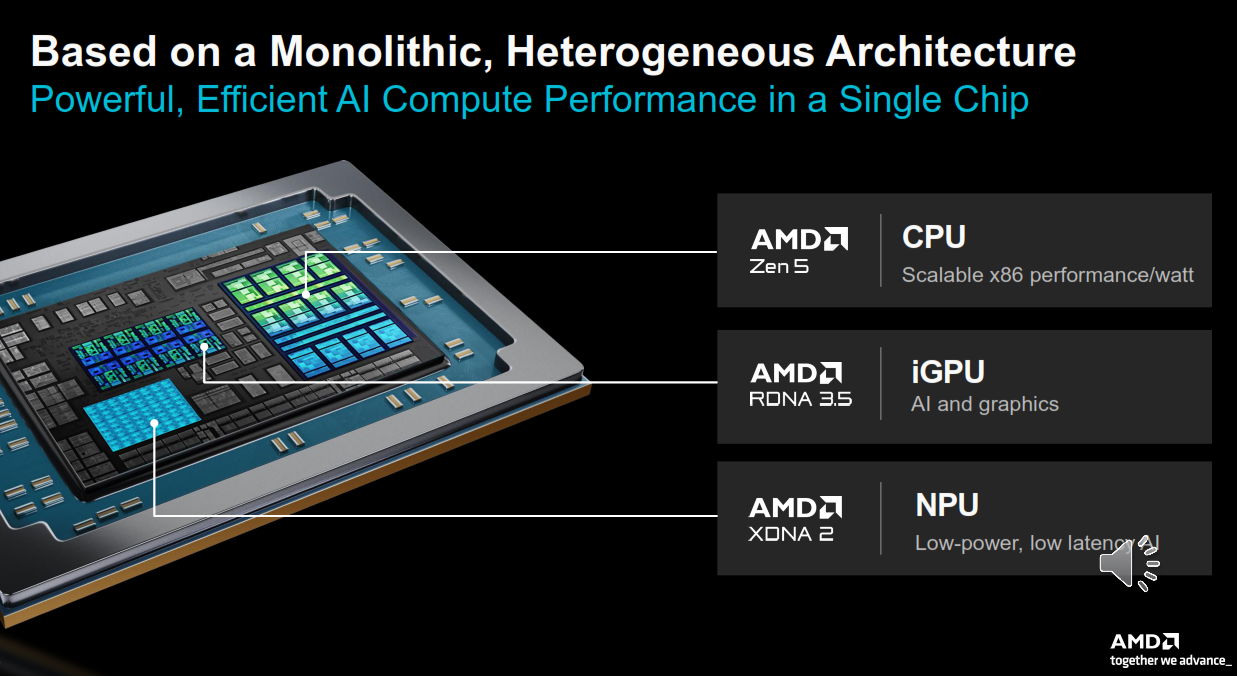

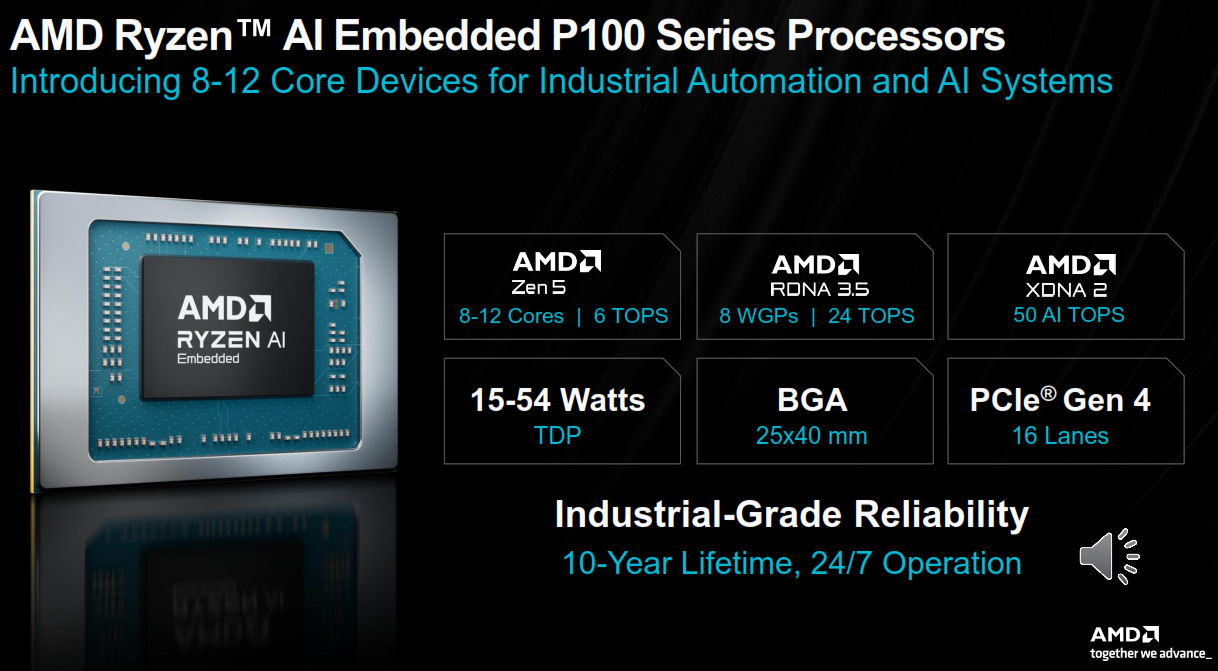

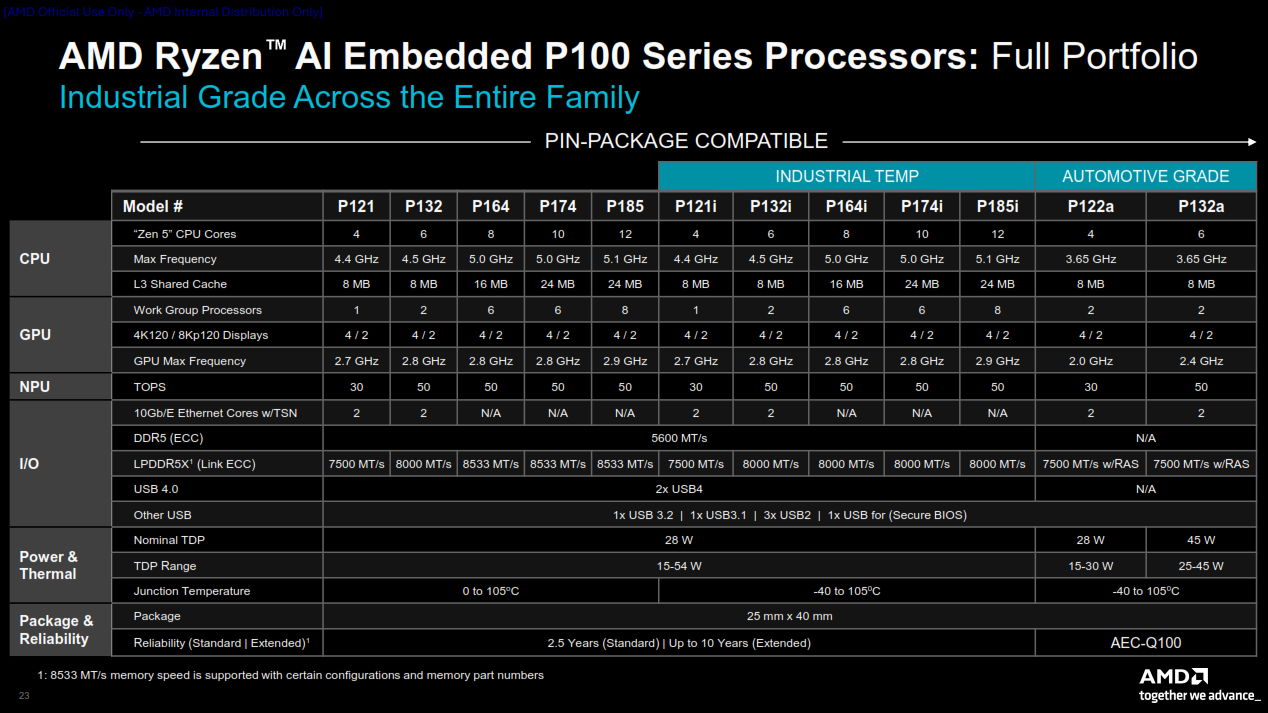

AMD wants the P100 to be more than just another embedded processor family with long-term support and a robust brand. The series is now presented as a scalable embedded platform that ranges from smaller designs to the most powerful parts with 12 Zen 5 cores, while keeping the same overall integrated design, configurable power levels and a strong emphasis on pin and package compatibility across the Ryzen family.

For system integrators and industrial original equipment manufacturers (OEMs), this matters much more than catchy advertising slogans. A single board design can potentially address multiple performance levels, reducing validation work, simplifying implementation and shortening product cycles.

The new Ryzen AI Embedded P100 Series processors offer up to 12 Zen 5 cores, up to 80 system TOPS for physical AI acceleration, integrated AMD RDNA 3.5 graphics for real-time visualization, and a neural processing unit (NPU) based on the AMD XDNA 2 architecture for low-latency, low-power AI inference, all on a single chip.

Scalable AI computing for demanding applications

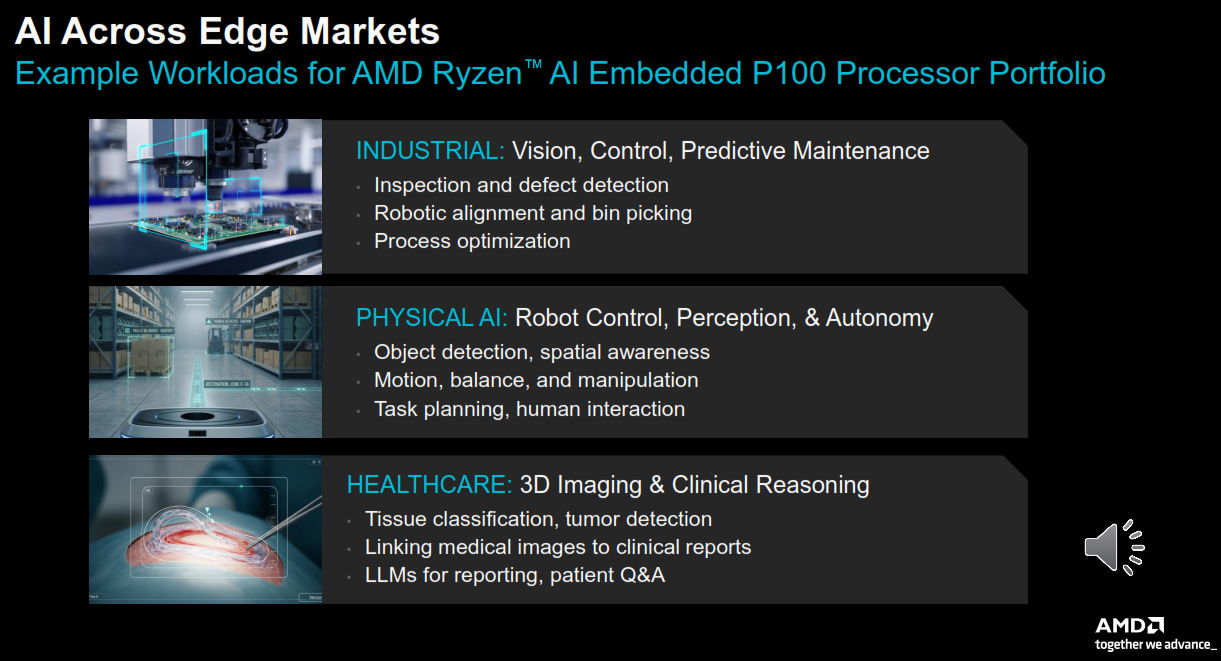

AMD positions the chips for demanding applications, from industrial PCs for smart factories to autonomous robots and medical imaging devices, and says its new embedded x86 processors are optimized for the next generation of industrial AI and broader edge AI use cases, including:

– Intelligent machine vision for industrial PCs: The new processors enable the integration of programmable logic controllers (PLC), machine vision and human-machine interface (HMI) into a single industrial PC, while delivering the CPU performance needed for real-time inspection and process optimization. The integrated GPU and NPU accelerate multi-camera vision and comprehensive HMI panels, while enabling low-latency anomaly detection using models such as DeepSORT, RAFT-Stereo, CenterPoint, GDR-Net, PaDiM, and Llama 3.2-Vision.

– Physical AI for autonomous operations: For mobile robots, the processors handle navigation, motion control, and path planning on the CPU, while the GPU processes multi-camera feeds for spatial awareness, Visual SLAM, and advanced AI workloads such as vision-language-action (VLA) models. Unified memory between the CPU and GPU keeps latency low for better responsiveness. The NPU provides low-power, always-on inference for object detection and scene understanding using models such as YOLO and MobileSAM.

– 3D medical imaging and clinical intelligence: The processors enable enhanced 3D imaging for ultrasound, endoscopy, tissue classification and tumor detection in edge environments using models such as U-Net, nnU-Net and MONAI. The processors accelerate image-to-report workflows with MedSigLIP and facilitate clinical reasoning and Q&A with Med-PaLM 2. Healthcare OEMs can consolidate imaging, AI analysis and reporting on an integrated, scalable x86 platform with a long lifecycle.

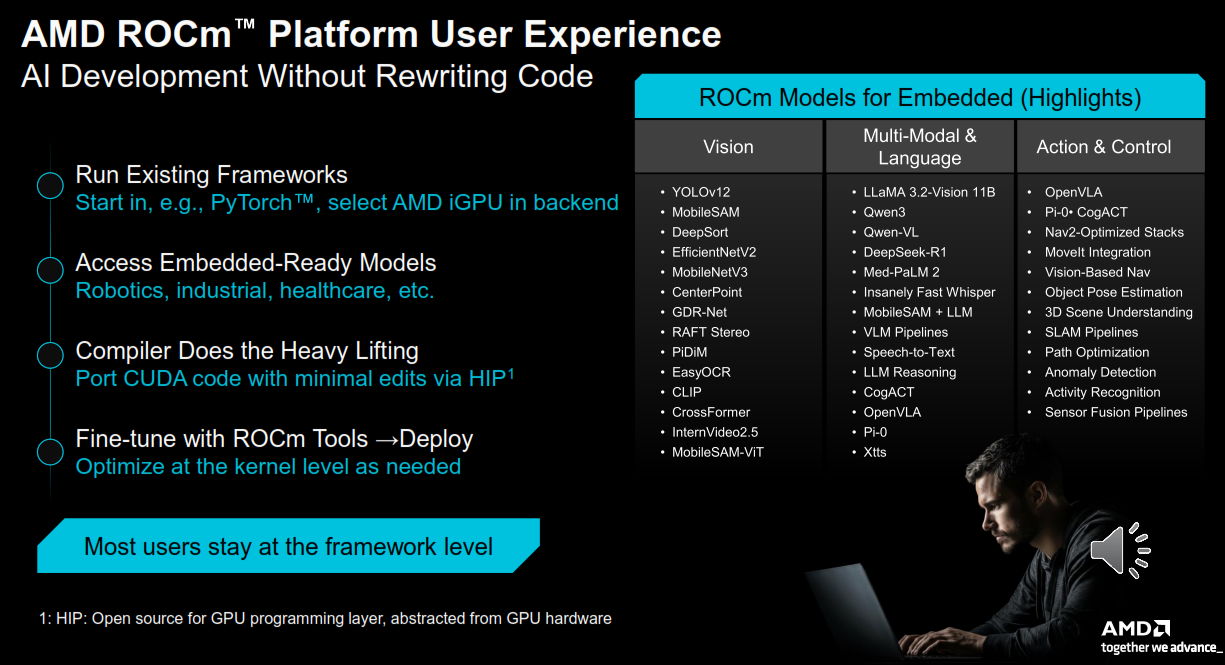

ROCm software and virtualized reference stack

Support for the AMD ROCm open software ecosystem brings an open-source, tested AI software stack to embedded applications. Developers can run standard AI frameworks with open-source compilers, runtimes, and libraries, with immediate access to embedded-friendly models without having to rewrite code. At the programming level, ROCm uses the open-source Heterogeneous-computing Interface for Portability (HIP), which decouples GPU programming from the hardware and, according to AMD, eliminates vendor lock-in between the software stack and the hardware.
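As an illustration of the "no code rewrite" claim, here is a minimal Python sketch of framework-level device selection. It assumes PyTorch as the framework and assumes that a ROCm build of PyTorch is available for the target GPU, neither of which the announcement confirms for the P100 series. The helper names `pick_device` and `backend_name` are hypothetical. ROCm builds of PyTorch expose the GPU through the same `torch.cuda` API and the same `"cuda"` device string, so the calling code does not change between vendors.

```python
# Sketch only: PyTorch and ROCm support for this specific part are assumptions.


def pick_device() -> str:
    """Return "cuda" when a GPU backend (CUDA or ROCm/HIP) is usable, else "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch is not installed, so fall back to the CPU
    # On ROCm builds this call reports HIP devices under the same API.
    return "cuda" if torch.cuda.is_available() else "cpu"


def backend_name() -> str:
    """Report which GPU toolchain the installed PyTorch build targets."""
    try:
        import torch
    except ImportError:
        return "none"
    # torch.version.hip is a version string on ROCm builds and None otherwise.
    if getattr(torch.version, "hip", None):
        return "rocm"
    if getattr(torch.version, "cuda", None):
        return "cuda"
    return "cpu-only"


if __name__ == "__main__":
    print(f"device={pick_device()} backend={backend_name()}")
```

Model code written against `pick_device()` stays the same whichever backend is reported, which is the portability the HIP layer is meant to provide.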

The tightly integrated CPU, GPU and NPU architecture enables efficient workload partitioning and predictable latency in mixed workloads. Additionally, the use of familiar software frameworks and stacks simplifies and streamlines development and deployment across a wide range of use cases. This level of integration enables advanced computing and graphics capabilities without the need for additional external components, making it easier for OEMs and system integrators to design scalable platforms.

AMD offers a compact, vertically integrated, virtualized reference stack for mixed-criticality industrial applications. Based on the Xen hypervisor, it runs Linux (including Ubuntu), Windows and RTOS environments in isolated domains to provide security, real-time performance, and flexibility. The result is a scalable, open architecture that simplifies the design and accelerates the development of next-generation embedded systems.
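To make the idea of isolated domains more concrete, the following Python sketch checks that guests do not share cores and renders a small subset of a Xen `xl` guest configuration (`name`, `memory`, `vcpus`, `cpus`). It is not AMD's reference stack: the domain names, memory sizes and core split are hypothetical, each core is treated as a single physical CPU (SMT is ignored), and cores for the control domain are left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Domain:
    name: str        # hypothetical guest name
    memory_mib: int  # guest RAM in MiB
    first_cpu: int   # first physical CPU pinned to this guest
    last_cpu: int    # last physical CPU pinned to this guest (inclusive)

    @property
    def cores(self) -> set[int]:
        return set(range(self.first_cpu, self.last_cpu + 1))


def check_isolation(domains: list[Domain], total_cores: int) -> None:
    """Raise ValueError if a guest is out of range or shares a core with another."""
    used: set[int] = set()
    for d in domains:
        if d.first_cpu < 0 or d.last_cpu >= total_cores or d.first_cpu > d.last_cpu:
            raise ValueError(f"{d.name}: core range out of bounds")
        if used & d.cores:
            raise ValueError(f"{d.name}: overlaps another domain")
        used |= d.cores


def render_xl_cfg(d: Domain) -> str:
    """Render only the CPU and memory lines of an xl guest config."""
    return "\n".join([
        f'name = "{d.name}"',
        f"memory = {d.memory_mib}",
        f"vcpus = {len(d.cores)}",
        f'cpus = "{d.first_cpu}-{d.last_cpu}"',  # pin vCPUs to these physical CPUs
    ])


# Hypothetical split of a 12-core part; all values are illustrative only.
DOMAINS = [
    Domain("rtos-control", 1024, 0, 1),
    Domain("linux-vision", 8192, 2, 7),
    Domain("windows-hmi", 4096, 8, 11),
]

if __name__ == "__main__":
    check_isolation(DOMAINS, total_cores=12)
    for d in DOMAINS:
        print(render_xl_cfg(d), end="\n\n")
```

Pinning the real-time guest to its own cores is one common way to keep latency predictable when it shares a chip with general-purpose operating systems; a real deployment would also need kernel, disk and device settings that this sketch omits.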

Versions and availability

AMD Ryzen AI Embedded P100 Series processors with eight to twelve cores are currently sampling, with production shipments expected to begin in July 2026. Four- to six-core models are also currently sampling, with production expected in the second quarter of 2026.