Key Takeaways

- If your system requires auditability, do not let an LLM be the sole authority for both decisions and explanations.

- Hashing and replaying structured decision artifacts is a practical way to verify deterministic behavior across runs.

- Model uncertainty explicitly instead of collapsing it into default decision outcomes.

- Validate explanation invariants, not just JSON schema, to prevent silent drift in decision meaning.

- Architectural clarity introduces friction, and that friction is often the cost of governance.

Why Trust-Aware Hybrid AI Systems Are Important

In exploratory applications, explanation drift may be tolerable. In systems that feed compliance reporting, operational dashboards, or downstream automation, it is not.

When decisions become stored artifacts that are replayed, audited, or challenged, even small variations in explanation text can introduce ambiguity about authority and traceability.

The refactor described here did not begin as an effort to build a rule engine. It began with a replay test that exposed a deeper problem: we did not have a clear definition of what was authoritative.

The Replay Test That Exposed Explanation Drift

The issue surfaced during staging replay tests. Identical structured inputs produced stable decision labels, but slightly different explanations.

The replay test hashed structured input and compared serialized outputs across runs. To make that meaningful, serialization had to be deterministic. The Decision Packet was encoded using sorted keys, no runtime-generated fields, and no timestamps. Lists such as uncertainty_tags were normalized before hashing. Without canonicalization, replay testing would only catch obvious changes, not subtle drift. The decision label remained stable. The explanation text did not.
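The canonicalization steps described above could be sketched roughly as follows. This is a minimal illustration, not the original implementation: the `VOLATILE_FIELDS` names, the `canonicalize` helper, and the choice of SHA-256 are assumptions made for the example. Only the sorted keys, the exclusion of runtime-generated fields, and the normalization of `uncertainty_tags` come from the text.

```python
import hashlib
import json

# Hypothetical names for runtime-generated fields; the article says such
# fields were excluded but does not list them.
VOLATILE_FIELDS = {"timestamp", "request_id"}

# Lists whose order carries no meaning and can be normalized before hashing.
UNORDERED_LISTS = {"uncertainty_tags"}


def canonicalize(packet: dict) -> dict:
    """Return a copy with volatile fields dropped and unordered lists sorted."""
    result = {}
    for key, value in packet.items():
        if key in VOLATILE_FIELDS:
            continue
        if key in UNORDERED_LISTS:
            value = sorted(set(value))  # remove duplicates, fix the order
        result[key] = value
    return result


def canonical_hash(packet: dict) -> str:
    """SHA-256 (an assumed choice) over a sorted-key, compact JSON encoding."""
    encoded = json.dumps(
        canonicalize(packet),
        sort_keys=True,
        separators=(",", ":"),
        ensure_ascii=True,
    )
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()
```

With this shape, two packets that differ only in key order, tag order, or a volatile field hash identically, so any remaining hash difference points at a change in decision content.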

In one run, the model referenced contextual reasoning that was not present in the structured inputs. The decision was correct. The explanation was not grounded. That exposed the core question: if explanations cannot be traced back to structured inputs, what exactly is authoritative?

Prompt Engineering Was Not the Fix

We first tried tightening prompts and lowering temperature. Variation decreased, but it did not disappear.

More importantly, prompt adjustments do not produce explicit rule traces or replayable artifacts. They influence behavior probabilistically; they do not define authority.

Reducing randomness is not the same as enforcing determinism. Determinism requires identical inputs to produce identical authoritative artifacts, not merely similar narratives.

Deterministic Authority, Constrained Explanation

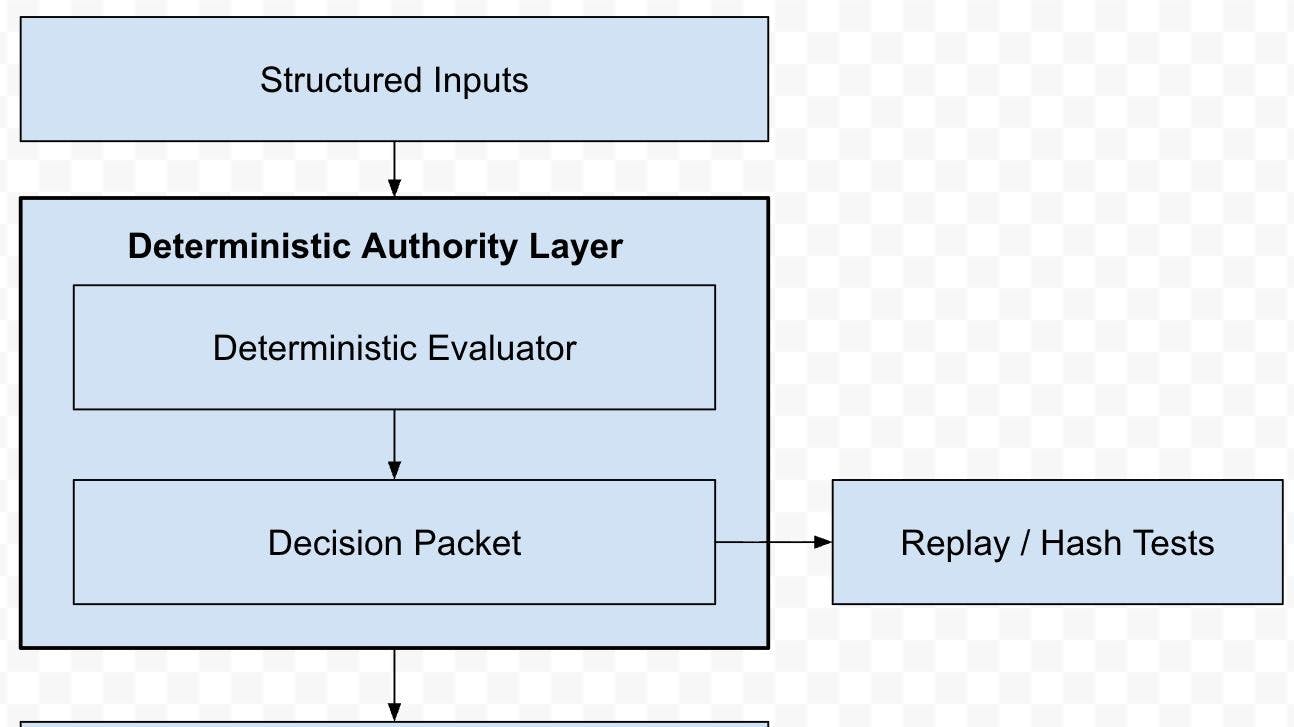

We separated authority from narrative. A deterministic evaluator produces a structured Decision Packet. The LLM renders explanations but cannot alter outcomes.

The Decision Packet became the authoritative artifact: serializable, hashable, diffable, and replayable.

The architecture is shown in Figure 1.

A typical Decision Packet looked like:

    {
      "decision": "DEFER",
      "fired_rules": ["R2_MISSING_REQUIRED"],
      "uncertainty_tags": ["UNKNOWN"],
      "inputs_used": ["risk_score", "evidence_count"],
      "rationale_points": [
        "Required field identity_verification was missing",
        "Insufficient evidence to compute the risk threshold."
      ]
    }

This structure made reasoning explicit. The LLM no longer inferred logic from raw facts; it rendered structured conclusions.

Rule Precedence and Suppression

Rules were evaluated in a fixed priority order with explicit short-circuit behavior. Each rule operated only on structured input and emitted explicit outputs: decision modifications, uncertainty tags, or suppression signals.

The evaluator was stateless and idempotent. Identical structured input produced identical Decision Packets before serialization.

In an early iteration, R5_RISK_HIGH executed before R1_FORCE_DENY. Under certain combinations, high-risk evaluation bypassed an explicit policy override.

Unit tests for individual rules passed. Replay tests exposed the precedence defect.

Explicit ordering and short-circuit logic resolved the issue. The separation did not eliminate complexity; it exposed it.
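One way to picture fixed-priority evaluation with short-circuiting is the sketch below. Everything in it is illustrative: the `Rule` and `RuleResult` types, the numeric priorities, the 0.8 risk threshold, the `ESCALATE` outcome, the `force_deny` input field, and the `ALLOW` default are assumptions, and rule identifiers are written with underscores in the style of `R2_MISSING_REQUIRED`. The point is only that precedence lives in data rather than in the order in which rules happen to be declared.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class RuleResult:
    decision: Optional[str] = None   # None leaves the current decision unchanged
    tags: List[str] = field(default_factory=list)
    rationale: Optional[str] = None
    stop: bool = False               # True suppresses all lower-priority rules


@dataclass(frozen=True)
class Rule:
    rule_id: str
    priority: int                    # lower number is evaluated first
    apply: Callable[[dict], Optional[RuleResult]]


def r1_force_deny(facts: dict) -> Optional[RuleResult]:
    if facts.get("force_deny"):
        return RuleResult("DENY", rationale="Explicit policy override", stop=True)
    return None


def r2_missing_required(facts: dict) -> Optional[RuleResult]:
    if facts.get("identity_verification") is None:
        return RuleResult(
            "DEFER",
            tags=["UNKNOWN"],
            rationale="Required field identity_verification was missing",
            stop=True,
        )
    return None


def r5_risk_high(facts: dict) -> Optional[RuleResult]:
    # The 0.8 threshold and the ESCALATE outcome are illustrative assumptions.
    score = facts.get("risk_score")
    if score is not None and score >= 0.8:
        return RuleResult("ESCALATE", rationale="risk_score at or above 0.8")
    return None


# Sorting by priority makes precedence independent of declaration order.
RULES = sorted(
    [
        Rule("R5_RISK_HIGH", 50, r5_risk_high),
        Rule("R1_FORCE_DENY", 10, r1_force_deny),
        Rule("R2_MISSING_REQUIRED", 20, r2_missing_required),
    ],
    key=lambda rule: rule.priority,
)


def evaluate(facts: dict) -> dict:
    """Stateless evaluation: the packet depends only on `facts` and RULES."""
    packet = {
        "decision": "ALLOW",  # assumed default when no rule fires
        "fired_rules": [],
        "uncertainty_tags": [],
        "rationale_points": [],
    }
    for rule in RULES:
        result = rule.apply(facts)
        if result is None:
            continue
        packet["fired_rules"].append(rule.rule_id)
        packet["uncertainty_tags"].extend(result.tags)
        if result.rationale:
            packet["rationale_points"].append(result.rationale)
        if result.decision:
            packet["decision"] = result.decision
        if result.stop:
            break  # explicit short-circuit
    return packet
```

In this sketch, an input with both `force_deny` set and a high `risk_score` yields `DENY` with only the override rule recorded as fired, which is the behavior the precedence defect violated.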

Explicit Uncertainty and Invariant Enforcement

We stopped collapsing uncertainty into default decisions. Tags such as UNKNOWN, STALE, LOW_EVIDENCE, and CONFLICT became first-class outputs.
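As a rough sketch of what first-class uncertainty might look like, the function below derives tags from structured facts instead of silently substituting defaults. The four tag names come from the text; the input field names (`age_days`, `source_verdicts`), the thresholds, and the `tag_uncertainty` helper are hypothetical.

```python
from enum import Enum
from typing import List


class UncertaintyTag(str, Enum):
    UNKNOWN = "UNKNOWN"
    STALE = "STALE"
    LOW_EVIDENCE = "LOW_EVIDENCE"
    CONFLICT = "CONFLICT"


# Hypothetical thresholds, chosen only for illustration.
MAX_AGE_DAYS = 30
MIN_EVIDENCE_COUNT = 3


def tag_uncertainty(facts: dict) -> List[str]:
    """Emit explicit tags rather than falling back to a default decision."""
    tags = set()
    if facts.get("risk_score") is None:
        tags.add(UncertaintyTag.UNKNOWN)
    age = facts.get("age_days")
    if age is not None and age > MAX_AGE_DAYS:
        tags.add(UncertaintyTag.STALE)
    if facts.get("evidence_count", 0) < MIN_EVIDENCE_COUNT:
        tags.add(UncertaintyTag.LOW_EVIDENCE)
    # Disagreement between upstream sources is surfaced, not resolved here.
    if len(set(facts.get("source_verdicts", []))) > 1:
        tags.add(UncertaintyTag.CONFLICT)
    # Sorted output keeps the list stable for hashing and replay.
    return sorted(tag.value for tag in tags)
```

Returning a sorted list of plain strings keeps the tags serializable and order-stable, so they can sit in the Decision Packet without affecting replay hashes.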

The explanation layer had to reference emitted uncertainty tags and could not introduce new entities or reasoning.

Example of a failing Explanation Packet:

    {
      "decision": "ALLOW",
      "explanation": "Approved due to sufficient historical behavior trends"
    }

The schema was valid. The reasoning was not grounded.

Invariant enforcement included:

- The explanation decision must match the Decision Packet exactly.

- All emitted uncertainty tags must be referenced.

- No entity, threshold, or justification absent from rationale_points or structured inputs is allowed.

These checks operated independently of the model. If invariants failed, the explanation was rejected before acceptance.
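The three invariants could be checked along the lines below. This is a deliberately crude sketch: the token allowlist is a stand-in for whatever grounding check the original system used, and the `CONNECTIVES` set and the `find_violations` helper are assumptions. The `validate_invariants(packet, explanation)` name matches the one used in the tests later in this article, but its body here is illustrative only.

```python
import re
from typing import List, Set

# Hypothetical connective words the renderer may use without grounding.
CONNECTIVES = {
    "a", "an", "the", "is", "was", "were", "and", "because",
    "due", "to", "of", "with", "decision", "tag", "tags",
}


def _tokens(text: str) -> Set[str]:
    """Lowercased word tokens; underscores are kept so field names survive."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def find_violations(packet: dict, explanation: dict) -> List[str]:
    violations = []
    text_tokens = _tokens(explanation.get("explanation", ""))

    # Invariant 1: the explanation's decision matches the packet exactly.
    if explanation.get("decision") != packet["decision"]:
        violations.append("decision_mismatch")

    # Invariant 2: every emitted uncertainty tag is referenced.
    for tag in packet.get("uncertainty_tags", []):
        if tag.lower() not in text_tokens:
            violations.append(f"missing_tag:{tag}")

    # Invariant 3 (crude): every term must come from the packet itself.
    allowed = set(CONNECTIVES) | _tokens(packet["decision"])
    for key in ("rationale_points", "inputs_used", "uncertainty_tags"):
        for item in packet.get(key, []):
            allowed |= _tokens(item)
    ungrounded = sorted(text_tokens - allowed)
    if ungrounded:
        violations.append("ungrounded_terms:" + ",".join(ungrounded))
    return violations


def validate_invariants(packet: dict, explanation: dict) -> bool:
    """True only when no invariant is violated."""
    return not find_violations(packet, explanation)
```

A vocabulary allowlist will reject some harmless paraphrases, which is the intended bias in this sketch: a rejected explanation can be regenerated, while an accepted ungrounded one becomes part of the record.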

Testing After Separation

Once authority was isolated, testing shifted from comparing text to enforcing artifacts.

We implemented four categories of tests:

- Deterministic evaluator tests covering rule firing, precedence, suppression, and uncertainty tagging.

- Replay tests asserting identical structured inputs produce identical serialized Decision Packets.

- Explanation contract tests verifying schema validity, decision alignment, and invariant compliance.

- End-to-end integration tests using a local LLM runtime.

A simplified replay test:

    def test_replay_stability():
        # Evaluate the same structured input twice; canonical hashes must match.
        packet1 = evaluate(input_data)
        packet2 = evaluate(input_data)
        assert canonical_hash(packet1) == canonical_hash(packet2)

Explanation compliance tests asserted that hallucinated or ungrounded content was rejected:

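    def test_explanation_rejects_invented_fact():
        # A hand-written explanation citing a justification absent from the packet.
        explanation = {
            "decision": packet["decision"],
            "explanation": "Approved due to sufficient historical behavior trends",
        }
        assert not validate_invariants(packet, explanation)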

Replay tests surfaced precedence regressions that unit tests did not detect. Explanation contract tests caught schema-valid but semantically ungrounded summaries.

Correctness was redefined. It was no longer enough for the decision label to be right. The artifact, the reasoning trace, and the explanation all had to satisfy deterministic enforcement.

Tradeoffs

The boundary introduced discipline.

Developers had to write explicit rationale points rather than relying on narrative reasoning. Ambiguities in policy logic became visible earlier. Replay tests occasionally failed during refactors that previously would have gone unnoticed.

Iteration slowed initially. Prompt-only experimentation is faster than defining rule precedence and uncertainty models. But the slowdown was intentional. The boundary shifted uncertainty into a space where it could be tested and rejected deterministically.

Conclusion

Deterministic and probabilistic components can coexist, but only if their responsibilities are explicit.

Separating authority from explanation did not eliminate model uncertainty. It relocated it into a layer where it could be observed, validated, and constrained.

If you are integrating LLMs into decision paths, define authority first. Optimization comes later.
