A bad web deploy creates a bug report. A bad Over-the-Air (OTA) update bricks thousands of devices overnight.

The software world often celebrates the “move fast and break things” philosophy. In standard web development, a critical bug usually means an incident report followed by a quick patch. But when software becomes the nervous system of a complex physical machine, such as Android-powered fitness equipment, medical devices, or smart-home hubs, the tolerance for “breaking things” drops to zero.

In this domain, a poorly validated Over-the-Air (OTA) firmware update is not a minor inconvenience. It can systematically disable thousands of customer devices at once, introduce safety risks, and cause immediate, irreversible brand damage. Mastering the Critical Path in this environment requires moving beyond traditional QA and adopting a rigorous, hardware-software integrated Quality Engineering (QE) discipline.

1. Navigating the Complexity of the Converged Stack

Validating a typical web application involves a relatively simple stack: frontend, backend, and database. In contrast, a connected hardware product operates on a tightly coupled, multi-layer system that demands a unified validation strategy.

- Application Layer: UI and user-facing features

- Android OS / Firmware: Kernel-level processes and OTA clients

- OTA Distribution Pipeline: Delivery system that must be fault-tolerant

- Hardware Interface: Communication with sensors, motors, and controllers, validated through Hardware-in-the-Loop (HIL) testing

- Cloud Observability: Real-time monitoring via tools like Bugsnag and New Relic

A failure in one layer can cascade across the entire system. A UI interaction might trigger a firmware command, which drives a motor controller, which in turn depends on OS stability and hardware constraints.

This is not just software testing—it’s end-to-end system validation.

2. Testing the Physical World: Why HIL is Non-Negotiable

You cannot validate a physical system using mocks alone. If a software command instructs a motor to increase resistance by 20%, it’s not enough to confirm the API responded. You must validate that the physical system actually executed the command.

This is where Hardware-in-the-Loop (HIL) testing becomes essential. By plugging real hardware (or high-fidelity simulators) directly into the CI/CD pipeline, we measure more than just “Pass/Fail.” We monitor latency (command propagation speed), data integrity (UART/low-level protocol accuracy), and thermal behavior—ensuring the OS handles hardware heat without throttling.
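As a rough illustration of what such a check could look like, the sketch below sends a "raise resistance by 20%" command and then verifies latency, read-back value, and temperature together. Everything here is an assumption made for the example: `SimulatedResistanceMotor` stands in for a real controller on a test rig, and the thresholds are placeholders, not values from our pipeline.

```python
from __future__ import annotations

import time
from dataclasses import dataclass


@dataclass
class SimulatedResistanceMotor:
    """Hypothetical stand-in for a motor controller reached over UART."""
    resistance: float = 50.0
    temperature_c: float = 40.0

    def set_resistance(self, target: float) -> None:
        # A real rig would write a framed command to the serial port here.
        self.resistance = target
        self.temperature_c += 0.5  # pretend the motor warms slightly under load

    def read_resistance(self) -> float:
        # A real rig would read this back from an encoder or load cell.
        return self.resistance


@dataclass
class HilResult:
    latency_s: float
    measured: float
    expected: float
    temperature_c: float

    def passed(self, max_latency_s: float, tolerance: float, max_temp_c: float) -> bool:
        # All three conditions must hold: fast enough, accurate enough, cool enough.
        return (
            self.latency_s <= max_latency_s
            and abs(self.measured - self.expected) <= tolerance
            and self.temperature_c <= max_temp_c
        )


def increase_resistance_check(motor: SimulatedResistanceMotor, percent: float) -> HilResult:
    """Issue the command, then measure what the hardware actually did."""
    expected = motor.read_resistance() * (1 + percent / 100)
    start = time.monotonic()
    motor.set_resistance(expected)
    measured = motor.read_resistance()
    latency = time.monotonic() - start
    return HilResult(latency, measured, expected, motor.temperature_c)


result = increase_resistance_check(SimulatedResistanceMotor(), percent=20)
print(result.passed(max_latency_s=0.2, tolerance=0.5, max_temp_c=70.0))
```

The point of the design is that the assertion is made against the read-back from the device, not against the response of the API that issued the command.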

Modern SDET teams don’t just write automation scripts—they build frameworks that interact with physical systems.

3. Shifting Left with AI-Driven Test Design

As systems scale, test creation—not execution—becomes the primary bottleneck. To solve this, we’ve integrated Generative AI directly into the “shift-left” phase. Our workflow leverages AI to parse PRDs and Figma designs to auto-generate:

- Initial test cases and boundary analysis.

- Automation scaffolding in Xray / TestRail.

- Boilerplate code for Appium / Espresso frameworks.

This allows our engineers to spend less time on repetitive authoring and more on high-risk edge cases and complex system boundaries. In practice, this significantly reduced test authoring time and improved consistency across teams—while maintaining high coverage.
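To make "boundary analysis" concrete, here is a minimal, deterministic sketch of the kind of cases a generated test plan might contain for a numeric input. It is not the AI workflow itself; the field name `resistance_level` and its 1 to 100 range are hypothetical and chosen only for illustration.

```python
from __future__ import annotations


def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Classic boundary value analysis for an inclusive integer range."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = [minimum - 1, minimum, minimum + 1,
                  maximum - 1, maximum, maximum + 1]
    # dict.fromkeys removes duplicates (narrow ranges) while keeping order.
    return list(dict.fromkeys(candidates))


def make_cases(field: str, minimum: int, maximum: int) -> list[dict]:
    """Pair each boundary value with whether the system should accept it."""
    return [
        {"field": field, "value": v, "expect_accepted": minimum <= v <= maximum}
        for v in boundary_values(minimum, maximum)
    ]


# Hypothetical field and range, used only to show the output shape.
cases = make_cases("resistance_level", 1, 100)
for case in cases:
    print(case)
```

Cases like these are the repetitive part of authoring; the engineer's review time then goes to the boundaries a range check cannot express, such as interactions between settings.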

4. Intelligent Failure Analysis: Eliminating the Noise

In large-scale automation, “noise” (false positives) is the enemy of velocity. Without proper triage, teams waste days chasing network hiccups instead of real regressions. We solved this by implementing an AI-based failure analysis system. When a test fails in CI, a hook automatically collects Appium logs and system Logcats. An LLM then analyzes the execution history and classifies the failure: New Defect (immediate investigation), Flaky Infrastructure (retry), or Known Issue (linked to an existing Jira ticket). This single change reduced our regression cycles from 5 days to under 24 hours by focusing engineering effort where it actually matters.
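The three-way classification can be sketched as follows. In this example a simple rule-based classifier stands in for the LLM so that the code runs on its own; the log patterns and the ticket ID `QE-1234` are hypothetical, and a real system would draw on execution history rather than fixed regular expressions.

```python
from __future__ import annotations

import re
from enum import Enum
from typing import Optional


class Verdict(Enum):
    NEW_DEFECT = "new_defect"                      # immediate investigation
    FLAKY_INFRASTRUCTURE = "flaky_infrastructure"  # retry
    KNOWN_ISSUE = "known_issue"                    # link to existing ticket


# Hypothetical signatures, for illustration only.
INFRA_PATTERNS = [r"connection (reset|refused)", r"timed? ?out", r"device offline"]
KNOWN_ISSUES = {r"NullPointerException.*WorkoutSummary": "QE-1234"}


def classify(log_text: str) -> tuple[Verdict, Optional[str]]:
    """Return a verdict and, for known issues, the linked ticket ID."""
    # Known issues are checked first so they are never retried as "flaky".
    for pattern, ticket in KNOWN_ISSUES.items():
        if re.search(pattern, log_text):
            return Verdict.KNOWN_ISSUE, ticket
    if any(re.search(p, log_text, re.IGNORECASE) for p in INFRA_PATTERNS):
        return Verdict.FLAKY_INFRASTRUCTURE, None
    # Anything unrecognized is treated as a potential regression.
    return Verdict.NEW_DEFECT, None


print(classify("java.net.SocketTimeoutException: Read timed out"))
print(classify("AssertionError: expected resistance 60 but was 50"))
```

Defaulting unrecognized failures to New Defect is a deliberate choice in this sketch: a misclassified flake costs one investigation, while a regression misclassified as noise can reach the fleet.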

5. From High Risk to Controlled Deployment

Early in the evolution of many connected platforms, firmware and OS updates were deployed as monolithic, “all-or-nothing” events. This approach is inherently high-risk. If a regression escapes testing, it impacts the entire global fleet simultaneously.

We’ve seen the consequences of similar failures across the industry—where a single faulty update triggers widespread disruption. In connected hardware ecosystems, the stakes are even higher: failures translate into physical device outages at scale.

To mitigate this, we evolved our deployment strategy into a phased rollout architecture.

6. Engineering for Safety: The Phased Rollout Model

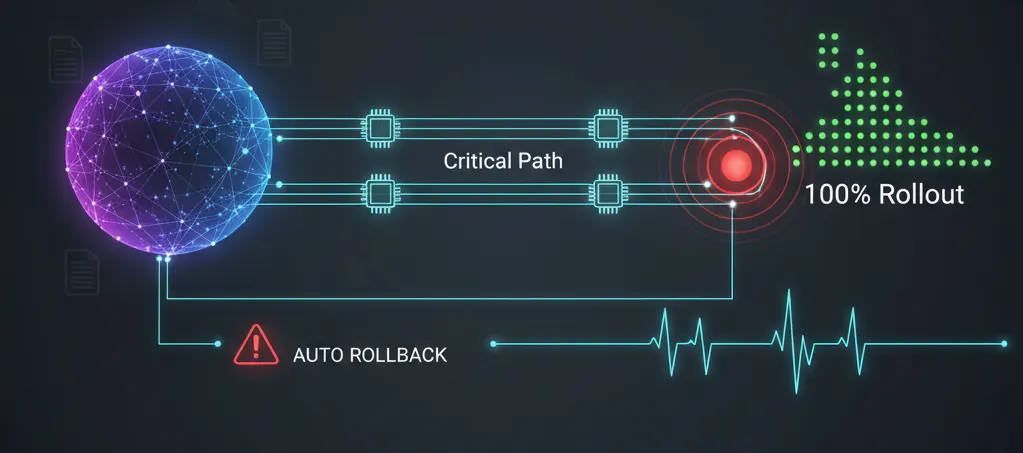

The Phased Rollout Architecture consists of four stages:

- HIL Regression Dry Runs: Validating updates on physical systems against edge cases like power loss mid-update.

- Internal Cohort (~1%): Deployment to internal devices to detect environment-specific regressions.

- Gated Expansion (1% → 10% → 25% → 100%): Each phase must pass automated quality gates before progressing.

- Observability as a Kill Switch: If Bugsnag or New Relic detects a spike in kernel panics or ANRs (Application Not Responding errors), the rollout is automatically halted.

With these gates in place, deployment shifts from a high-risk release to a controlled, observable system with built-in safeguards.
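The gating logic can be sketched in a few lines. This is a simplified model under stated assumptions: `fetch_health` is a hypothetical callback standing in for a query against the observability backend, the deployment step itself is omitted, and the thresholds are placeholder numbers rather than our production values.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional

PHASES = [1, 10, 25, 100]  # percent of fleet, matching the gated expansion


@dataclass
class FleetHealth:
    kernel_panics_per_1k: float
    anrs_per_1k: float


@dataclass
class Gate:
    max_kernel_panics_per_1k: float = 0.5  # hypothetical threshold
    max_anrs_per_1k: float = 2.0           # hypothetical threshold

    def allows(self, health: FleetHealth) -> bool:
        return (
            health.kernel_panics_per_1k <= self.max_kernel_panics_per_1k
            and health.anrs_per_1k <= self.max_anrs_per_1k
        )


def run_rollout(
    fetch_health: Callable[[int], FleetHealth],
    gate: Optional[Gate] = None,
) -> tuple[int, bool]:
    """Walk through PHASES and stop at the first phase that fails the gate.

    Returns (percent_exposed, halted).
    """
    gate = gate or Gate()
    exposed = 0
    for percent in PHASES:
        exposed = percent  # deployment to this cohort would happen here
        if not gate.allows(fetch_health(percent)):
            return exposed, True  # kill switch: no further expansion
    return exposed, False


def spike_at_25(percent: int) -> FleetHealth:
    # Simulated metrics: healthy until the 25% cohort shows a panic spike.
    return FleetHealth(kernel_panics_per_1k=3.0 if percent >= 25 else 0.1,
                       anrs_per_1k=1.0)


print(run_rollout(spike_at_25))
```

In this simulated run the rollout stops at 25% exposure, so three quarters of the fleet never receives the faulty build.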

The full system, which encompasses AI-driven test generation, HIL validation, intelligent failure triage, and phased rollout, is illustrated in the accompanying figure, “The AI-Gated QE Ecosystem & Phased Rollout Architecture.”

Conclusion: Quality as a System, Not a Phase

Connected products are deeply embedded in daily life. Ensuring their reliability requires treating quality as a core engineering discipline, not a final checkbox. Software in these systems does not exist in isolation. It interacts continuously with hardware, users, and real-world conditions. By combining hardware-aware validation (HIL), AI-driven test design and failure analysis, and risk-aware rollouts, we move from reactive testing to a proactive Quality Intelligence ecosystem.

In connected systems, quality isn’t just about preventing bugs—it’s the only thing standing between a routine update and a fleet-wide failure.
