Why logs still hurt in 2026

Logs are the “truth,” but they are also the least ergonomic data format ever invented:

- Scale: GB/TB per day is normal. Human eyeballs aren’t.

- Format drift: Apache, Nginx, JVM, container runtime, app logs, vendor SDK logs… each brings its own schema (or lack of schema).

- Hidden coupling: the real root cause often lives in co-occurring messages (timeouts + pool exhaustion + CPU spikes), not a single keyword.

LLMs change the equation because you can describe intent in plain English—then force structure on output—without writing an entire parser upfront.

But the keyword is force.

A good prompt is not “Analyze these logs.” A good prompt is a contract.

The mental model: prompts as contracts, not questions

Think of your prompt like an API spec:

- Inputs: What you provide (log snippet, timeframe, schema hints).

- Tasks: What the model must compute (filter, extract, count, cluster).

- Output schema: What the result must look like (tables/JSON, deterministic fields).

- Constraints: What to ignore, how to handle edge cases, naming rules.

If you don’t specify these, the model will happily give you a novel.

Part I — Log analysis prompts that don’t hallucinate

The 5-block prompt template (battle-tested)

Use this as your default skeleton:

1) Role: You are a {domain role} specializing in {log type} analysis.

2) Context: System + timeframe + what "good" looks like.

3) Data: Paste logs or provide a schema + sample lines.

4) Tasks: Bullet list of explicit operations to perform.

5) Output: Strict schema (table or JSON). Include edge-case rules.
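One way to keep the five blocks from drifting between teammates is to assemble the prompt from data rather than retyping it. The sketch below is a minimal illustration, not a prescribed implementation: `PromptContract` and its field names are hypothetical, and the example values are borrowed from the incident scenario in this article.

```python
import json
from dataclasses import dataclass, field


@dataclass
class PromptContract:
    """Hypothetical holder for the five blocks (not part of any library)."""
    role: str
    context: list
    data: str
    tasks: list
    output_schema: dict
    edge_case_rules: list = field(default_factory=list)

    def render(self) -> str:
        # Bullets for context and rules, numbered lines for tasks.
        context = "\n".join(f"- {item}" for item in self.context)
        tasks = "\n".join(f"{i}) {task}" for i, task in enumerate(self.tasks, start=1))
        schema = json.dumps(self.output_schema, indent=2)
        rules = "\n".join(f"- {rule}" for rule in self.edge_case_rules)
        return (
            f"Role:\n{self.role}\n\n"
            f"Context:\n{context}\n\n"
            f"Data:\n{self.data}\n\n"
            f"Tasks:\n{tasks}\n\n"
            f"Output (strict JSON):\n{schema}\n"
            f"Edge-case rules:\n{rules}"
        )


contract = PromptContract(
    role="You are a senior SRE specializing in application log analysis.",
    context=["System: Checkout Service (Java)", "Time window: 2026-02-16 19:10-19:20 UTC"],
    data="{PASTE LOGS HERE}",
    tasks=[
        "Filter only ERROR and FATAL entries.",
        "Deduplicate identical errors; count occurrences.",
    ],
    output_schema={"window": "...", "total_errors": 0, "top_errors": []},
    edge_case_rules=["Never invent fields. If missing, use null."],
)
prompt = contract.render()
print(prompt)
```

Keeping the schema as a dict means the same object can later be reused to check the reply, so the prompt and the check cannot disagree about field names.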

A small move with big impact: make the output machine-checkable (JSON or strict tables). If a human will read it later, great—humans can read JSON.

Scenario A — Incident triage: extract ERROR/FATAL, normalize, dedupe

Here’s a prompt that behaves like an on-call teammate:

Role:

You are a senior SRE. You extract incident-relevant signals from mixed application logs.

Context:

- System: Checkout Service (Java) + Redis cache + MySQL

- Goal: Identify actionable error patterns for a post-incident summary

- Time window: 2026-02-16 19:10–19:20 UTC

Data:

{PASTE LOGS HERE}

Tasks:

1) Filter only ERROR and FATAL entries.

2) For each entry, extract:

- ts, level, service/component (if present), exception/error type, resource (host/ip/url), raw message

3) Normalize error types:

- e.g., "Conn refused", "Connection refused" -> "Connection refused"

- Stack traces: keep only top frame + exception class

4) Deduplicate identical errors; count occurrences.

5) Produce top-3 error types by frequency.

Output (strict JSON):

{

"window": "...",

"total_errors": 0,

"top_errors": [

{

"error_type": "",

"count": 0,

"example": {

"ts": "",

"level": "",

"resource": "",

"message": ""

}

}

],

"all_errors": [

{

"ts": "",

"level": "",

"error_type": "",

"resource": "",

"message": ""

}

]

}

Constraints:

- Never invent fields. If missing, use null.

- Keep error_type <= 40 chars.

Why this works

- You’re not asking for “analysis.” You’re asking for extraction + normalization.

- You prevent creativity by demanding null for missing fields.

- You demand counts, which forces aggregation instead of storytelling.
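A strict schema only pays off if something checks it. The sketch below shows one possible check of a model reply against the triage schema above, using only the standard library. The function name `validate_triage` is hypothetical, the rule that the top-3 counts cannot exceed `total_errors` is an assumption about how the fields relate, and the sample reply is invented for illustration.

```python
import json

TOP_KEYS = {"window", "total_errors", "top_errors", "all_errors"}
ENTRY_KEYS = {"ts", "level", "error_type", "resource", "message"}


def validate_triage(raw: str) -> list:
    """Return contract violations found in a model reply; an empty list means it passed."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(doc, dict) or set(doc) != TOP_KEYS:
        return ["top-level keys do not match the schema"]
    if not isinstance(doc["top_errors"], list) or not isinstance(doc["all_errors"], list):
        return ["top_errors and all_errors must be arrays"]

    problems = []
    if len(doc["top_errors"]) > 3:
        problems.append("top_errors has more than 3 entries")
    counts = [t.get("count") for t in doc["top_errors"] if isinstance(t, dict)]
    if any(not isinstance(c, int) or c < 1 for c in counts):
        problems.append("top_errors counts must be positive integers")
    elif isinstance(doc["total_errors"], int) and sum(counts) > doc["total_errors"]:
        # Assumption: total_errors counts every error, so the top-3 cannot exceed it.
        problems.append("top_errors counts exceed total_errors")

    for i, entry in enumerate(doc["all_errors"]):
        if not isinstance(entry, dict) or set(entry) != ENTRY_KEYS:
            problems.append(f"all_errors[{i}]: fields do not match the schema")
            continue
        if entry["level"] not in ("ERROR", "FATAL"):
            problems.append(f"all_errors[{i}]: level {entry['level']!r} should have been filtered out")
        error_type = entry["error_type"]
        if isinstance(error_type, str) and len(error_type) > 40:
            problems.append(f"all_errors[{i}]: error_type longer than 40 chars")
    return problems


# Invented reply for illustration (not real model output).
entry = {"ts": "2026-02-16 19:12:05", "level": "ERROR", "error_type": "Connection refused",
         "resource": None, "message": "MySQL: Connection refused"}
good_reply = json.dumps({
    "window": "2026-02-16 19:10-19:20 UTC",
    "total_errors": 1,
    "top_errors": [{"error_type": "Connection refused", "count": 1, "example": entry}],
    "all_errors": [entry],
})
print(validate_triage(good_reply))
```

If the list comes back non-empty, a reasonable next step is to resend the prompt with the violations appended rather than patching the reply by hand.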

Scenario B — Product analytics: compute funnels from behavior logs

For behavior logs, you care about distinct users and conversion math, not stack traces.

Role:

You are a product analyst. You compute funnel metrics from event logs.

Data:

Each line is one event:

YYYY-MM-DD HH:MM:SS | user_id=... | event=... | item_id=... | device=...

Tasks:

1) For item_id=SKU-9411, count each event type.

2) Compute unique users per event (dedupe by user_id).

3) Compute conversion rates:

- view->add_to_cart

- add_to_cart->purchase

4) If denominator is 0, return "N/A" and explain.

Output:

- Table: event, events_count, unique_users

- Then: formulas + results (2 decimals)
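Funnel math is easy to verify outside the model, so it is worth keeping a reference computation to compare against the reply. The sketch below assumes the line format shown above and defines each conversion as unique users at the later step divided by unique users at the earlier step. That definition is an assumption, since the prompt leaves the formula to the model. The sample lines are invented, and `parse_event` and `funnel` are hypothetical helper names.

```python
from collections import Counter, defaultdict

# Invented sample lines in the format described above (not real data).
LINES = [
    "2026-02-16 19:00:01 | user_id=u1 | event=view | item_id=SKU-9411 | device=ios",
    "2026-02-16 19:00:05 | user_id=u2 | event=view | item_id=SKU-9411 | device=web",
    "2026-02-16 19:00:09 | user_id=u1 | event=view | item_id=SKU-9411 | device=ios",
    "2026-02-16 19:01:12 | user_id=u1 | event=add_to_cart | item_id=SKU-9411 | device=ios",
    "2026-02-16 19:02:30 | user_id=u3 | event=view | item_id=SKU-0001 | device=web",
]


def parse_event(line: str) -> dict:
    """Split 'ts | key=value | ...' into a dict; the timestamp is stored under 'ts'."""
    ts, *pairs = [part.strip() for part in line.split("|")]
    event = dict(pair.split("=", 1) for pair in pairs)
    event["ts"] = ts
    return event


def funnel(lines, item_id):
    events_count = Counter()
    users = defaultdict(set)
    for line in lines:
        event = parse_event(line)
        if event.get("item_id") != item_id:
            continue
        events_count[event["event"]] += 1
        users[event["event"]].add(event["user_id"])  # the set dedupes by user_id

    # Build the table before computing rates, so only observed events appear in it.
    table = {name: (events_count[name], len(users[name])) for name in sorted(events_count)}

    def rate(step_from, step_to):
        denominator = len(users[step_from])
        if denominator == 0:
            return "N/A"  # edge-case policy from the prompt
        return round(len(users[step_to]) / denominator, 2)

    return {
        "table": table,  # event -> (events_count, unique_users)
        "view->add_to_cart": rate("view", "add_to_cart"),
        "add_to_cart->purchase": rate("add_to_cart", "purchase"),
    }


print(funnel(LINES, "SKU-9411"))
```

With these sample lines, two distinct users viewed SKU-9411 and one added it to the cart, so the first conversion comes out as 0.5.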

Scenario C — Trend analysis: spot spikes, hypothesize causes (carefully)

Trend prompts fail when you let the model “explain” before it “measures.”

Make measurement mandatory first:

Tasks:

1) Identify peak windows (>= P95) and trough windows (==0).

2) Describe the trend using only the provided numbers.

3) Provide 3 hypotheses, each tied to at least one data point.

4) List 5 follow-up queries you'd run in your log tool to validate.

This keeps “maybe a deploy happened” from becoming a fairy tale.
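The measurement in task 1 can also be done before the model sees anything, so the prompt receives numbers rather than being asked to produce them. The sketch below is one possible approach: it uses the nearest-rank definition of P95 (the prompt does not say which percentile method to use, so this is an assumption), the per-minute counts are invented, and the function names are hypothetical.

```python
import math

# Invented per-minute ERROR counts for illustration (not real data).
COUNTS = {
    "19:10": 2, "19:11": 3, "19:12": 41, "19:13": 38, "19:14": 5,
    "19:15": 0, "19:16": 0, "19:17": 4, "19:18": 2, "19:19": 3,
}


def nearest_rank_percentile(values, pct):
    """Nearest-rank percentile: the value at rank ceil(pct% of n) in sorted order."""
    if not values:
        raise ValueError("need at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def peaks_and_troughs(counts_by_window, pct=95):
    threshold = nearest_rank_percentile(list(counts_by_window.values()), pct)
    # The c > 0 guard is an added choice: it keeps an all-zero series from
    # reporting every window as a peak.
    peaks = [w for w, c in counts_by_window.items() if c >= threshold and c > 0]
    troughs = [w for w, c in counts_by_window.items() if c == 0]
    return threshold, peaks, troughs


threshold, peaks, troughs = peaks_and_troughs(COUNTS)
print(threshold, peaks, troughs)
```

With ten windows, nearest-rank P95 selects the largest value, so only 19:12 qualifies as a peak here. A longer series or an interpolating percentile method would give a lower threshold.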

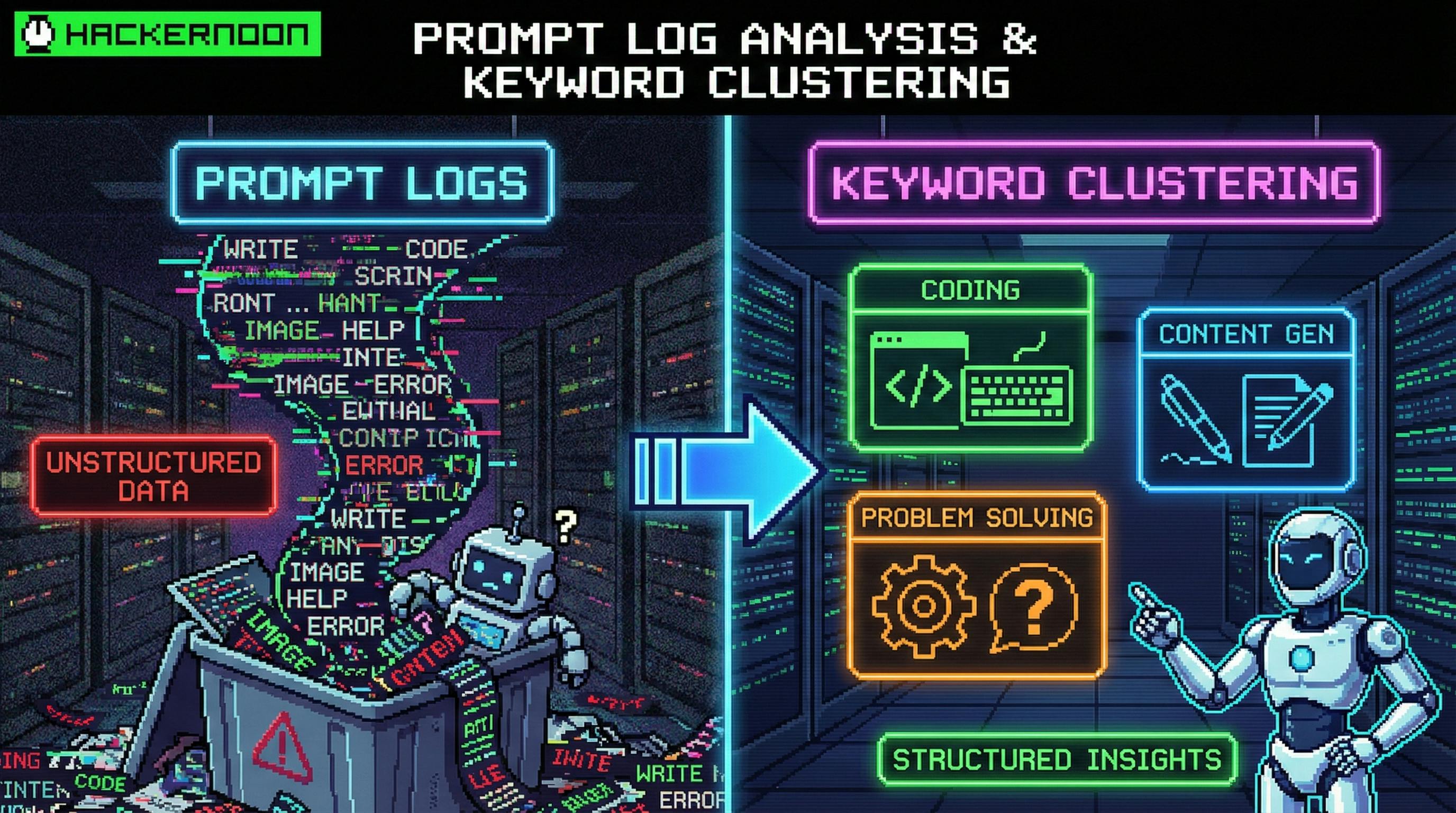

Part II — Keyword clustering that’s actually useful

Keyword clustering is where teams waste time because everyone argues about taxonomy.

So define the taxonomy.

The clustering template (6 blocks)

1) Role: You are an NLP engineer for operational logs.

2) Input: List of keywords/errors (raw strings).

3) Dimension: Cluster by {fault type | subsystem | user journey stage | time correlation}.

4) Rules:

- Each keyword belongs to exactly one cluster.

- Provide a short cluster name + description.

- If ambiguous, choose best fit and add rationale.

5) Output: JSON array of clusters.

6) Constraints: 3–7 clusters total, names <= 20 chars.

Example: cluster by fault type (ops-friendly)

Input list (intentionally messy):

- DB conn timeout

- MySQL: Connection refused

- Redis handshake failed

- java.lang.OutOfMemoryError

- 502 Bad Gateway

- Thread pool exhausted

- NullPointerException at OrderHandler

- Cache timeout /cart

- CPU usage 99%

Prompt output should look like:

[

{

"cluster": "Resource Connect",

"keywords": ["DB conn timeout", "MySQL: Connection refused", "Redis handshake failed", "Cache timeout /cart"],

"notes": "Downstream connectivity and timeouts (DB/cache/network). Owner: SRE"

},

{

"cluster": "Code Exceptions",

"keywords": ["NullPointerException at OrderHandler", "java.lang.OutOfMemoryError"],

"notes": "Application-level exceptions. Owner: Dev"

},

{

"cluster": "Gateway/Infra",

"keywords": ["502 Bad Gateway", "CPU usage 99%", "Thread pool exhausted"],

"notes": "Edge/proxy errors and capacity saturation. Owner: SRE/Platform"

}

]

Notice what’s missing: “AI vibes.” This is directly mappable to who does what next.
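The clustering rules (exactly one cluster per keyword, 3–7 clusters, names of at most 20 characters) are all mechanically checkable. The sketch below is a minimal illustration that checks the example output above against them. The function name `validate_clusters` is hypothetical; the keywords and the reply are copied from this section.

```python
import json

KEYWORDS = [
    "DB conn timeout", "MySQL: Connection refused", "Redis handshake failed",
    "java.lang.OutOfMemoryError", "502 Bad Gateway", "Thread pool exhausted",
    "NullPointerException at OrderHandler", "Cache timeout /cart", "CPU usage 99%",
]

REPLY = """[
  {"cluster": "Resource Connect",
   "keywords": ["DB conn timeout", "MySQL: Connection refused",
                "Redis handshake failed", "Cache timeout /cart"],
   "notes": "Downstream connectivity and timeouts (DB/cache/network). Owner: SRE"},
  {"cluster": "Code Exceptions",
   "keywords": ["NullPointerException at OrderHandler", "java.lang.OutOfMemoryError"],
   "notes": "Application-level exceptions. Owner: Dev"},
  {"cluster": "Gateway/Infra",
   "keywords": ["502 Bad Gateway", "CPU usage 99%", "Thread pool exhausted"],
   "notes": "Edge/proxy errors and capacity saturation. Owner: SRE/Platform"}
]"""


def validate_clusters(raw, keywords, min_clusters=3, max_clusters=7, max_name_len=20):
    """Return rule violations; an empty list means the reply honors the template."""
    clusters = json.loads(raw)
    problems = []
    if not min_clusters <= len(clusters) <= max_clusters:
        problems.append(f"expected {min_clusters}-{max_clusters} clusters, got {len(clusters)}")
    owner = {}  # keyword -> name of the cluster that claimed it last
    for cluster in clusters:
        name = cluster["cluster"]
        if len(name) > max_name_len:
            problems.append(f"cluster name too long: {name!r}")
        for keyword in cluster["keywords"]:
            if keyword in owner:
                problems.append(f"{keyword!r} is in both {owner[keyword]!r} and {name!r}")
            owner[keyword] = name
    missing = sorted(set(keywords) - set(owner))
    invented = sorted(set(owner) - set(keywords))
    if missing:
        problems.append(f"keywords not assigned to any cluster: {missing}")
    if invented:
        problems.append(f"keywords not in the input: {invented}")
    return problems


print(validate_clusters(REPLY, KEYWORDS))
```

The "invented" check matters as much as the "missing" one: a model that rewrites a keyword while clustering it silently breaks the mapping back to the original log lines.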

The unsexy truth: you still need preprocessing

LLMs are not a substitute for:

- time-range filtering

- field extraction

- sampling

- deduplication

- join/correlation across signals

They are a substitute for writing custom logic every time the format changes.

The winning workflow is:

Tool does the slicing. LLM does the sense-making.

Advanced workflow 1 — Elastic (ELK) + prompts: reduce TB → 200 lines → clarity

A pragmatic play:

- Use your log platform to filter:

  - service=checkout

  - level >= ERROR

  - @timestamp: 19:10–19:20

- Export the small subset (50–300 lines).

- Feed to the triage prompt (JSON output).

- Feed the extracted error_type strings to the clustering prompt.
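As a rough illustration of the filtering step, the request body below expresses the same three filters in Elasticsearch Query DSL, built as a plain Python dict. Several things here are assumptions: the field names `service` and `level` (and that they are mapped as keyword fields), the date taken from the incident window used earlier, and the helper name `build_triage_query`. Because levels are strings, "level >= ERROR" is written as an explicit list of levels.

```python
import json


def build_triage_query(service, start, end, size=300):
    """Build a search body that returns at most `size` ERROR/FATAL lines in a time window."""
    return {
        "size": size,  # keep the export small enough to paste into a prompt
        "sort": [{"@timestamp": "asc"}],
        "query": {
            "bool": {
                "filter": [
                    {"term": {"service": service}},
                    {"terms": {"level": ["ERROR", "FATAL"]}},
                    {"range": {"@timestamp": {"gte": start, "lte": end}}},
                ]
            }
        },
    }


body = build_triage_query("checkout", "2026-02-16T19:10:00Z", "2026-02-16T19:20:00Z")
print(json.dumps(body, indent=2))
```

The filters sit in a `bool` `filter` clause rather than `must` because relevance scoring is irrelevant here; only inclusion matters.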

If your team is adopting ES|QL in Elastic tooling, even better: ES|QL makes it easier to do “pre-joins” (e.g., attach user tier or region) before you hand the data to an LLM.

Advanced workflow 2 — OpenTelemetry logs: pay once, analyze everywhere

If you can influence logging standards, do this:

- adopt consistent attributes (service.name, deployment.environment, http.route, db.system, etc.)

- keep messages human-readable, but ensure key facts are also structured fields

Why? Because LLM prompts become dramatically simpler when fields are consistent:

- “Extract error.message and db.statement” beats “guess what this blob means.”

If your logs aren’t structured, your prompt has to become a parser.

And parsers are where joy goes to die.
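To make the contrast concrete: once attributes are consistent, preparing data for a prompt is a field projection rather than a parse. The sketch below assumes a flattened record layout (`timestamp`, `severity_text`, `body`, `attributes`), which is an illustrative shape and not what any particular exporter emits. The record is invented, and `to_prompt_row` is a hypothetical helper.

```python
import json

# Attribute names taken from the text above; which ones a prompt needs is a choice.
PROMPT_FIELDS = ["service.name", "deployment.environment", "http.route", "db.system", "error.message"]

# Invented example record, flattened for illustration (not real data).
RECORD = {
    "timestamp": "2026-02-16T19:12:05Z",
    "severity_text": "ERROR",
    "body": "DB connection timeout",
    "attributes": {
        "service.name": "checkout",
        "deployment.environment": "production",
        "db.system": "mysql",
        "error.message": "DB connection timeout",
    },
}


def to_prompt_row(record, fields=PROMPT_FIELDS):
    """Keep only the fields the prompt needs; absent attributes become None (JSON null)."""
    attributes = record.get("attributes", {})
    row = {"ts": record.get("timestamp"), "level": record.get("severity_text")}
    row.update({name: attributes.get(name) for name in fields})
    return row


row = to_prompt_row(RECORD)
print(json.dumps(row, indent=2))
```

Missing attributes come through as null instead of being dropped, which lines up with the "never invent fields" rule in the triage prompt.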

Advanced workflow 3 — Python preprocessing + prompt clustering (with a twist)

When your logs are free-form, do a minimal parse to get the basics.

Here’s a slightly tweaked example that converts raw lines into JSON you can paste into an LLM prompt. (It’s intentionally small—because the goal is to reduce chaos, not build a framework.)

import json
import re

# Sample lines: timestamp, level, service, thread, then a free-form message.
RAW = [
    "2026-02-16 19:12:05 ERROR checkout Thread-17 DB connection timeout url=jdbc:mysql://10.0.4.12:3306/payments",
    "2026-02-16 19:12:19 WARN checkout Thread-03 heap at 87% host=app-2",
    "2026-02-16 19:13:02 ERROR cache Thread-22 Redis handshake failed host=10.0.2.9:6379",
    "2026-02-16 19:13:45 FATAL checkout Thread-17 java.lang.OutOfMemoryError at OrderService.placeOrder(OrderService.java:214)",
]

# Named groups give each field a stable key in the resulting dict.
PATTERN = re.compile(
    r"(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2})\s+"
    r"(?P<level>DEBUG|INFO|WARN|ERROR|FATAL)\s+"
    r"(?P<service>\w+)\s+"
    r"(?P<thread>Thread-\d+)\s+"
    r"(?P<msg>.*)"
)


def parse_line(line: str) -> dict:
    m = PATTERN.match(line)
    if not m:
        # Unparseable lines keep their raw text so nothing is silently dropped.
        return {"ts": None, "level": None, "service": None, "thread": None, "msg": line, "resource": None}

    d = m.groupdict()

    # lightweight resource hints (optional); url= wins when both are present
    d["resource"] = None
    if "host=" in d["msg"]:
        d["resource"] = d["msg"].split("host=", 1)[1].split()[0]
    if "url=" in d["msg"]:
        d["resource"] = d["msg"].split("url=", 1)[1].split()[0]
    return d


structured = [parse_line(x) for x in RAW]
print(json.dumps(structured, indent=2))

Now your LLM prompt can be clean:

- extract entries with level in {ERROR, FATAL}

- normalize msg into error_type

- cluster by service or fault type

Common prompt failures (and the fixes)

1) “Analyze these logs” (aka: please ramble)

Fix: demand extraction tasks + strict output schema.

2) Missing system and time-window context

If you don’t give a timeframe/system context, the model can’t separate “normal noise” from “incident.” Fix: add system + window + goal.

3) Clustering dimension is vague

“Group these by relevance” is how you get 14 clusters named “Misc.” Fix: define a dimension and keep clusters to 3–7.

4) No edge-case policy

Division by zero, empty inputs, ambiguous keywords… the model will guess. Fix: specify policies: null, N/A, “create new cluster,” etc.

5) Role prompting as a crutch

A “senior SRE” persona doesn’t make outputs correct. Fix: treat role as tone only; correctness comes from tasks + schema + constraints.

A reusable “prompt pack” you can drop into your team wiki

1) Triage prompt (ERROR/FATAL → JSON)

- Use for incident summaries and handoffs.

2) Metrics prompt (events → funnels)

- Use for product/ops dashboards from sampled logs.

3) Trend prompt (time series → spikes + hypotheses + follow-ups)

- Use for “what changed?” sessions.

4) Clustering prompt (keywords → 3–7 incident buckets)

- Use for building a living error taxonomy.

Closing: what “good” looks like

If you do this right, your on-call flow changes:

- Before: “grep + intuition + Slack archaeology”

- After: “filter → prompt → structured summary → owner → next query”

Not magic. Not autonomous agents. Just a disciplined contract between you and the model.

And that’s enough to make logs feel like data again.
