Hey Hackers!

This article is the second in a two-part series on the engineering reality of Physical AI.

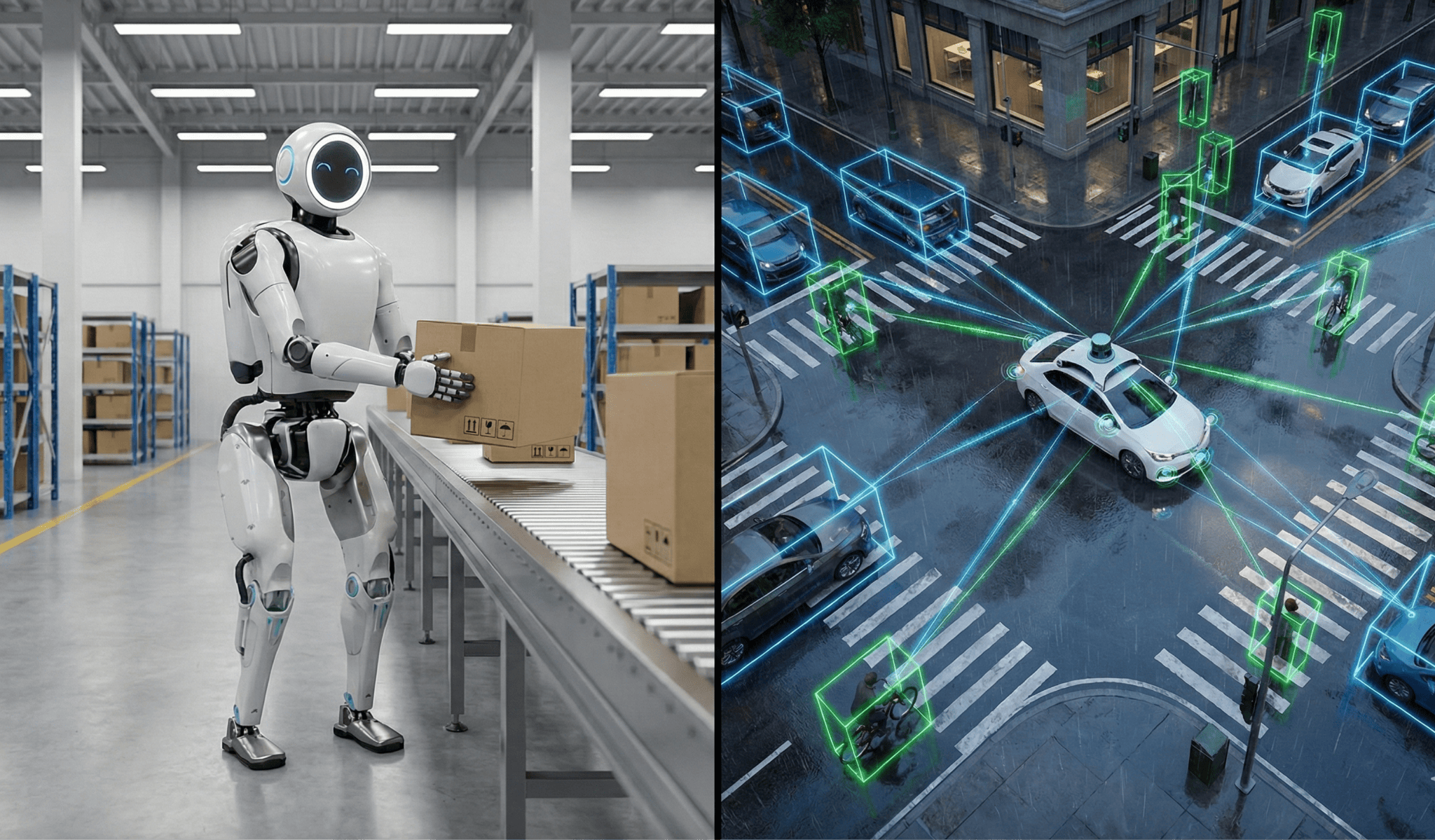

In Part 1, titled "The Next Great Engineering Frontier: The Hidden Complexity of Physical AI," I discussed how the chaotic physical world, the high stakes of failure, and the Sim-to-Real gap make using embodied AI in robotics a harder problem than generating text, images, or video via an AI chatbot.

However, mastering the physics is only half the battle. The other, and arguably bigger, battle is redefining the acceptable safety of the final system itself.

As engineers deploy these systems, the industry is asking the critical question:

What is the acceptance criterion for a robot to be deemed safe enough?

One camp believes the goal is Human-Level performance. If a robot can drive as well as a licensed taxi driver, or if a humanoid can fold laundry as fast as a teenager, the product is ready. It does feel like an intuitive and logical milestone to us humans.

However, I’m here to make an argument that this belief is misleading.

Aiming for human performance is aiming for mediocrity. We humans get tired. We get distracted. We have a blind spot in each eye where the optic nerve meets the retina, and we cannot see behind our heads. We let emotions affect our decision-making and are limited by our physical motor control abilities. If engineers design a robot to see like a human and react like a human, they have built an incomplete and unsafe product.

In this article, I will explain why treating human-equivalent performance as the target for Physical AI is a failure state. I will break down the math of why you cannot statistically validate a robot against a human benchmark, and why the future of safety relies on decidedly superhuman machines.

Why Listen to Me?

If you are reading one of my articles for the first time, here is my short introduction.

I am Nishant Bhanot, and I help build safety-critical systems for a living. Currently, I am a Senior Sensing Systems Engineer at Waymo. My work in autonomous systems spans the Special Projects Group at Apple, Autonomous Trucks at Applied Intuition, and driver-assist technologies at Ford.

I have seen safety validation from every angle. This includes everything from highway assist features deployed in consumer cars today to fully autonomous robotaxis navigating complex urban environments. I have learned the hard way that good enough in the lab is sometimes not good enough for the real world.

The Limits of Biological Hardware

Human eyes and hands are marvels of evolutionary engineering. They are energy-efficient and adaptable for general intelligence tasks. However, biological evolution optimized us for survival, not for high-speed deterministic safety.

When we apply these biological constraints to modern robotics, we hit fundamental human capability limits.

1. Field of View

Humans rely on foveal vision. We see high-resolution detail only in a central cone of roughly 2 to 5 degrees, and the brain fills in the rest with estimation and memory. In effect, we have a mild form of tunnel vision for fine detail. We also have a blind spot where the optic nerve connects to the retina. To track a dynamic scene, we must constantly saccade, darting our eyes around to stitch together a coherent picture.

However, a robotic sensor suite does not have this limitation. Consider a typical LiDAR unit spinning at 10 Hz. Ten times per second, it captures a 360-degree point cloud of the world with centimeter-level accuracy, covering every direction in each sweep. An array of cameras does not blink, and it does not lose peripheral awareness just because it is focusing on a specific object.

2. Latency

When a human driver sees a brake light turn red, a complex biological chain reaction occurs. It starts with the photons from the brake light hitting the retina. The signal travels down the optic nerve. The brain processes the image and the frontal cortex decides to act. The signal travels down the spine to the leg muscles. Only then does the foot move to press the brake pedal.

Traffic safety studies, such as those from the American Association of State Highway and Transportation Officials (AASHTO), typically estimate this perception-reaction time at 1 to 1.5 seconds for unexpected events. At typical highway speeds, a car can travel about half the length of a football field in that time before even beginning to slow down. Even in a best-case scenario, a fully alert human’s reaction time rarely drops below 600 milliseconds.

A silicon-based robotics control loop does not operate with the same limitations. A camera frame can be captured, processed by the neural network, and converted into a braking command in under 300 milliseconds. That is at least twice as fast as the best-case human reaction time.
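To put these latencies in terms of distance, here is a back-of-the-envelope sketch. The 65 mph speed is my assumption for "typical highway speeds", and the function and variable names are purely illustrative. It only computes distance covered before braking begins, not total stopping distance.

```python
MPH_TO_MPS = 0.44704  # exact conversion: 1609.344 m per mile / 3600 s per hour
FOOTBALL_FIELD_M = 91.44  # 100 yards between goal lines


def reaction_distance_m(speed_mph: float, reaction_time_s: float) -> float:
    """Distance traveled at constant speed before any braking begins."""
    return speed_mph * MPH_TO_MPS * reaction_time_s


ASSUMED_SPEED_MPH = 65.0  # assumption: stands in for "typical highway speeds"

# Reaction times taken from the figures quoted in this section.
scenarios = {
    "human, unexpected event": 1.5,
    "human, best case": 0.6,
    "camera-to-brake pipeline": 0.3,
}

for label, seconds in scenarios.items():
    distance = reaction_distance_m(ASSUMED_SPEED_MPH, seconds)
    print(f"{label}: {distance:.1f} m ({distance / FOOTBALL_FIELD_M:.2f} fields)")
```

At the assumed 65 mph, the 1.5-second case works out to roughly 44 meters, close to half a football field, while the 300-millisecond case stays under 9 meters.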

3. Precision

This distinction applies to surgery as well. While the human hand is capable of incredible dexterity, it is limited by physiological tremor. Even the steadiest surgeon has microscopic shakes, and as fatigue sets in after hours of standing over an operating table, precision naturally degrades.

A surgical robot does not get tired. It can scale motion down so that a 5-centimeter movement by the surgeon translates to a sub-millimeter movement of the scalpel.
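Motion scaling itself is simple arithmetic. The sketch below assumes a 100:1 ratio, which I picked only so that a 5-centimeter hand movement lands below one millimeter. The tremor amplitude is a hypothetical number, and real surgical systems layer filtering and safety limits on top of this.

```python
def scale_motion(hand_delta_mm: float, scale: float) -> float:
    """Map a hand displacement to an instrument-tip displacement."""
    if not 0.0 < scale <= 1.0:
        raise ValueError("scale must be in the range (0, 1]")
    return hand_delta_mm * scale


ASSUMED_SCALE = 1 / 100  # assumption: illustrative ratio, not a real device spec

tip_move_mm = scale_motion(50.0, ASSUMED_SCALE)   # deliberate 5 cm hand movement
tip_tremor_mm = scale_motion(0.1, ASSUMED_SCALE)  # hypothetical 0.1 mm hand tremor

print(f"tip movement: {tip_move_mm:.3f} mm")
print(f"tip tremor:   {tip_tremor_mm:.4f} mm")
```

The same ratio that shrinks the intended motion also shrinks the unintended tremor, which is why scaling helps precision rather than merely slowing the surgeon down.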

The Bottom Line

When we say we want human-level safety, we implicitly accept these biological constraints. We accept that optical blind spots, high latency, and physiologically limited precision are the standard to meet.

We should not code these limitations into our machines. We should engineer past them.

The Challenge of Statistical Validation

There is a fundamental difference between Generative AI and Physical AI. If a chatbot hallucinates, it writes a bad poem. If a physical robot hallucinates, it can cause physical damage.

Consider a humanoid robot that carries heavy payloads in a warehouse and handles sharp industrial tools or hot components. Consider an autonomous vehicle, which is effectively a multi-ton projectile moving at highway speeds. Or consider a delivery drone that drops off glassware in a backyard in a residential neighborhood. Failure in any of these domains has immediate physical consequences. Therefore, the validation bar must be incredibly high.

To validate a safety-critical system, we typically look for metrics such as Mean Time Between Failures (MTBF). We test the system until we have enough data to statistically prove it meets our safety targets.

Interestingly, despite our biological limitations, humans are actually quite safe.

According to NHTSA data, human drivers in the United States suffer a fatality roughly once every 100 million miles. This creates a large denominator for any statistical comparison.

If our goal is to meet human-level safety, we force ourselves into a statistical trap. To scientifically prove that a physical AI system with human-level capability is just 20% safer than a human, you need a sample size large enough to establish statistical significance. This could mean millions of humanoid hours in the warehouse, or billions of autonomous miles on the road.

If we aim for marginal improvements over human benchmarks using human capability level AI, validation becomes extremely time-consuming.
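Here is a rough sketch of the arithmetic behind that trap, using the fatality rate of one per 100 million miles quoted above. Everything else is my assumption: 95% confidence, 80% statistical power, a one-sided test, and a normal approximation to Poisson event counts. Treat the outputs as order-of-magnitude estimates rather than a validation plan.

```python
import math
from statistics import NormalDist

HUMAN_RATE = 1 / 100_000_000  # fatalities per mile, from the figure quoted above


def miles_for_zero_failure_demo(rate: float, confidence: float = 0.95) -> float:
    """Failure-free miles needed to claim the true rate is below `rate`.

    With Poisson events, P(zero events in n miles) = exp(-rate * n).
    We need that probability to be at most 1 - confidence.
    """
    return -math.log(1.0 - confidence) / rate


def miles_to_show_improvement(
    base_rate: float, improvement: float, alpha: float = 0.05, power: float = 0.80
) -> float:
    """Miles needed for a one-sided test to detect a fractional rate reduction.

    Uses a normal approximation to the Poisson count, which gets crude
    when the expected number of events is small.
    """
    new_rate = base_rate * (1.0 - improvement)
    z_alpha = NormalDist().inv_cdf(1.0 - alpha)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(base_rate) + z_beta * math.sqrt(new_rate)) ** 2
    return numerator / (base_rate - new_rate) ** 2


zero_failure = miles_for_zero_failure_demo(HUMAN_RATE)
print(f"zero-fatality demo at human rate: {zero_failure / 1e9:.2f} billion miles")

for improvement in (0.2, 0.5, 0.9):
    miles = miles_to_show_improvement(HUMAN_RATE, improvement)
    print(f"{improvement:.0%} safer: {miles / 1e9:.2f} billion miles")
```

Under these assumptions, simply matching the human rate with zero fatalities takes roughly 300 million miles, and detecting a 20% improvement takes on the order of 14 billion miles. The required mileage falls sharply as the targeted improvement grows.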

However, the validation logic changes if we aim for Superhuman capability using systems that are orders of magnitude safer than humans. We can prove that specific classes of accidents are extremely unlikely due to the system’s inherent superior design.

The Superhuman Architecture

The tricky question is this: if we cannot validate safety purely by brute-forcing miles or hours, how do we achieve it?

I believe the answer lies in Architectural Superiority.

A human driver relies almost entirely on the visible light spectrum (their eyes). Fog, glare, darkness, or other common elements of the real world can partially or fully blind them in the moment.

However, a well-architected physical AI system engineered with orthogonal redundancy is not bound by this limitation. This means the system can use different types of physics to verify the same reality.

1. Seeing the Invisible

In a scenario with heavy fog or blinding sunlight, the human eye is compromised because visible light scatters or saturates. A physical AI system equipped only with cameras shares this vulnerability. However, an architecture that fuses the following sensors performs fundamentally better:

- LiDAR acts as an active light source. It emits its own laser pulses and times their return to measure distance with centimeter-level accuracy. It creates a precise 3D map of the world, regardless of whether it is high noon or a moonless midnight.

- Radar operates in the radio spectrum. It is largely unaffected by moisture, detecting through fog and rain to track the position and velocity of moving objects with Doppler precision.

By fusing these inputs, the system builds a world model that is robust against the very conditions that blind biological operators. In other words, the architecture enables access to information in a spectrum that humans simply cannot perceive.
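One simple way to picture fusion is inverse-variance weighting, where each sensor's estimate counts in proportion to how much we trust it. This is a textbook technique that I am using for illustration, not a description of any production stack. The `RangeEstimate` type, the `fuse` function, and all sensor numbers are hypothetical, and the sketch assumes independent, unbiased measurements.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class RangeEstimate:
    distance_m: float
    std_m: float  # one-sigma uncertainty of the distance estimate


def fuse(estimates: Sequence[RangeEstimate]) -> RangeEstimate:
    """Inverse-variance weighted mean of independent range estimates."""
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(e.std_m <= 0.0 for e in estimates):
        raise ValueError("standard deviations must be positive")
    weights = [1.0 / (e.std_m ** 2) for e in estimates]
    total_weight = sum(weights)
    mean = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total_weight
    return RangeEstimate(mean, (1.0 / total_weight) ** 0.5)


# Hypothetical readings of the same obstacle in heavy fog.
camera = RangeEstimate(distance_m=35.0, std_m=20.0)  # badly degraded by fog
lidar = RangeEstimate(distance_m=40.3, std_m=0.3)
radar = RangeEstimate(distance_m=40.6, std_m=0.5)

fused = fuse([camera, lidar, radar])
print(f"fused distance: {fused.distance_m:.2f} m, sigma: {fused.std_m:.2f} m")
```

Because the fog-blinded camera reports a large uncertainty, it barely moves the fused answer, and the fused uncertainty ends up smaller than that of any single sensor.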

2. Kinematic Degrees of Freedom

This advantage extends to the physical form of the robot itself. Humans are limited by the skeletal mechanics of the primate form. Our knees only bend one way, and our torsos and necks have a limited rotation range. If a human needs to retreat from a hazard while carrying a load, they must walk backward blindly, risking a trip or collision.

A humanoid, however, can be designed with joints capable of 360-degree rotation. It can monitor hazards behind it without turning its torso and can walk backward with the same stability and safety as it walks forward.

The Bottom Line

Safety comes from building a superhuman system that has access to ground-truth data and physical capabilities that exceed the human baseline.

The Societal Acceptance Criteria

There is one final reason why aiming for human-level performance is a strategic error.

Society judges machines by a fundamentally different standard than it judges humans. If a human driver gets distracted and rear-ends a car, we call it an accident. However, if a robot makes that exact same mistake, it is labeled as a systemic failure.

Humans are judged on their nature. When a human fails, we attribute it to fatigue or distraction. When a machine fails, we perceive it as a preventable error in logic.

Due to this societal acceptance criterion, physical AI cannot just be as safe as a human. To win public trust, a robot must demonstrate a much higher level of competence that makes human-like errors impossible.

Conclusion

We are on the cusp of the greatest deployment of robotics in history. As we move from chatbots to physical bots, we must leave the human-level benchmark behind.

Unfortunately, biological evolution did not optimize us for deterministic safety. We suffer from high latency, are limited by tunnel vision, and are prone to fatigue or distraction.

To summarize:

- Statistics dictate that we cannot validate human-level safety in a reasonable timeframe.

- Architecture allows us to see the invisible and move in ways biology cannot.

- Society demands a level of performance from machines that it never asks of humans.

I believe the goal of Physical AI is not to replicate the human operator. Rather, the goal is to build a new category of operator. An operator that does not get distracted or panic. An operator that sees through the fog and moves the scalpel with sub-millimeter accuracy.

The future of a safe Physical AI is superhuman capability.
