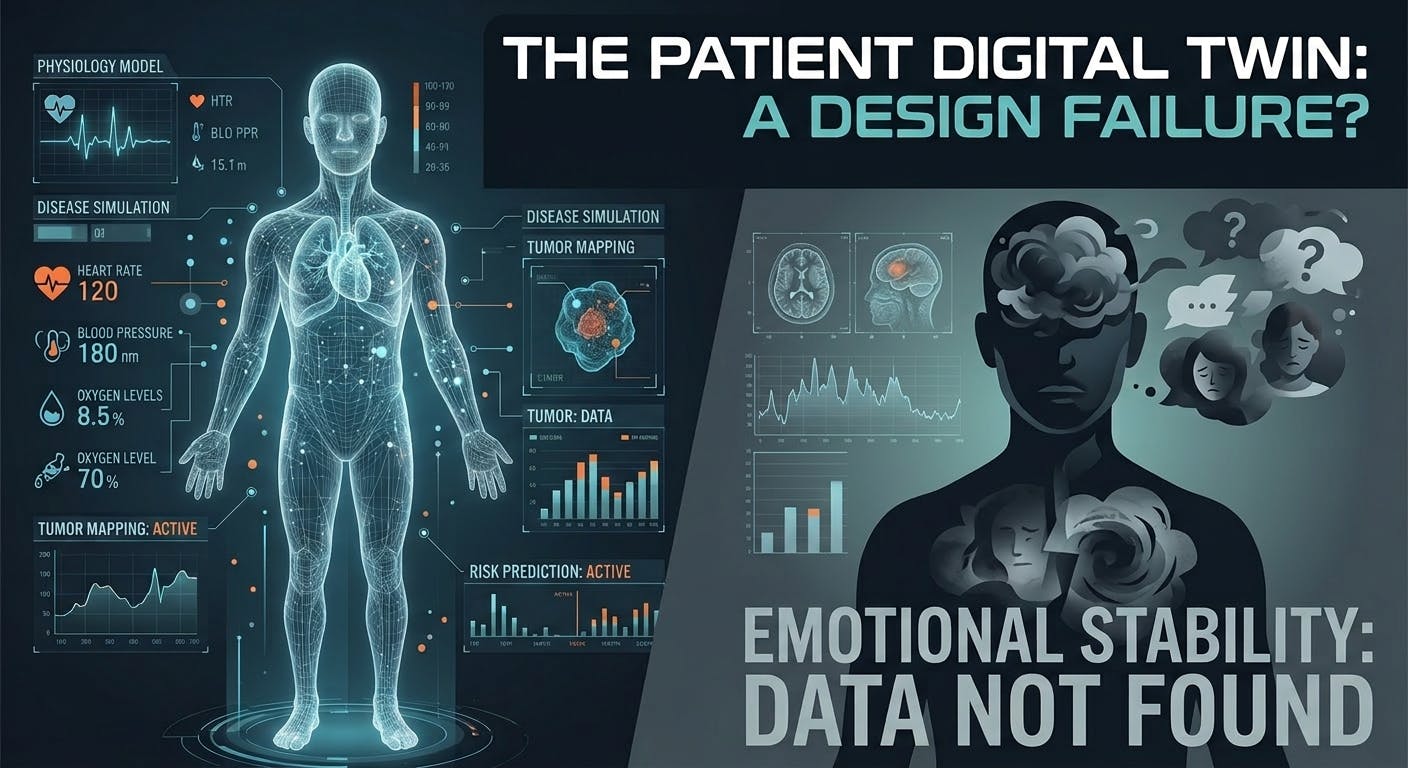

Healthcare is getting very good at building digital representations of the body.

We can model organs, simulate disease progression, track treatment response, and predict clinical risk with increasing precision. Hospitals are investing in smarter monitoring, smarter imaging, smarter decision support, and smarter workflow systems. On paper, this looks like progress. And in many ways, it is.

But there is a problem sitting at the center of all this innovation.

The patient inside these systems is still strangely incomplete.

We are building digital twins that can approximate physiology, but not fear. We can track biomarkers, but not the collapse of emotional stability that often begins long before a patient says a word. We can map the tumor, the lesion, the heart rhythm, or the sleep cycle, but we still do not know how to model what it feels like to be inside a body that no longer feels safe.

This is not a minor gap. It is a design failure.

A patient is not only a medical object. A patient is also a nervous system under pressure. A person entering treatment is dealing with anticipation, stress, confusion, helplessness, sensory overload, and, often, a quiet loss of control. These are not soft side effects. They shape how people tolerate treatment, how they make decisions, how they recover, and whether they can stay psychologically connected to themselves through the process.

Yet most healthcare technologies still treat the inner experience as secondary. Something subjective. Something too vague to measure. Something to address later, if there is time.

There usually is not.

This is one of the reasons so many advanced systems still feel emotionally primitive. They are optimized to detect, classify, and respond to clinical events. They are not built to understand destabilization before it turns into a visible crisis. They do not recognize when the patient has begun to dissociate from their own body, when fear is narrowing cognitive capacity, or when exhaustion has reduced a person’s ability to process information or participate in care.

If we are serious about digital twins in healthcare, we need to ask a more uncomfortable question: what exactly are we twinning?

If the answer is only anatomy, pathology, and workflow, then we are not building a twin of the patient. We are building a twin of the case.

That distinction matters.

A case can be managed. A patient has to live through what the case means.

This is where the next generation of healthcare design needs to evolve. The missing layer is not only more data. It is a different category of data. We need systems that are capable of registering emotional load, sensory tolerance, cognitive fatigue, and changes in self-perception. We need environments that do not simply deliver care, but help preserve the patient’s internal stability while care is happening.

That does not mean every human experience should be flattened into a metric. It means we should stop pretending the inner state is irrelevant just because it is harder to quantify.

There are already signals available to us: voice changes, breathing patterns, body tension, gaze behavior, response latency, movement quality, orientation in space, tolerance to stimulation, and patterns of withdrawal.

Even the language patients use to describe themselves can reveal whether they still feel present in their body or whether they have started to retreat from it.

The question is not whether these states matter. The question is whether healthcare systems are willing to treat them as part of reality rather than as emotional background noise.

In my view, this is where immersive technology becomes especially important. Not because virtual reality is futuristic, but because it gives us a way to work with experience directly. It creates structured spaces where attention, fear, bodily awareness, and emotional regulation can be observed and supported in real time. In some cases, it allows patients to reconnect with a sense of agency before that sense disappears completely.

That is valuable because recovery does not begin at discharge. It begins much earlier, often at the moment a person starts losing contact with themselves.

This is the part many health systems still miss. A patient can be clinically stable and psychologically fragmented at the same time. The scans may improve while the person feels less and less real inside their own life. If our technologies are blind to that condition, then our intelligence is incomplete, no matter how advanced the interface looks.

I suspect this is where healthcare AI will eventually have to mature. Right now, much of the focus is on automation, efficiency, and prediction. Those are important goals. But the more healthcare becomes data-driven, the more dangerous it becomes to exclude the inner state from the model. Efficiency without psychological insight can produce cleaner workflows and worse human outcomes.

The future of care will not belong to the systems that merely know more about disease. It will belong to the systems that understand what illness does to presence, perception, and emotional continuity.

We do not need a sentimental version of healthcare technology. We need a more truthful one.

A real patient digital twin should not stop at the body. It should account for the lived strain of being a person under treatment. It should help clinicians recognize not only what is happening medically, but what is beginning to fracture internally. It should support intervention before emotional collapse hardens into disengagement, panic, or long-term trauma.

If we fail to design for that, we will keep producing impressive medical systems that remain incomplete at the human level.

And we will keep calling them intelligent, even though they still cannot see the full patient in front of them.