Introduction to Claude Mythos Preview

On April 7, 2026, Anthropic released Claude Mythos Preview, which the company describes as its most advanced AI model to date and as a “fundamentally new model class.” Beyond stronger generative performance, the model introduces cyber-offensive capabilities that have sparked broad industry debate on AI safety and responsible deployment.

Anthropic’s announcement came with significant limitations on the distribution of Claude Mythos Preview due to its potential misuse. The model is currently available exclusively on Amazon Bedrock, restricted to select major cybersecurity and software firms under stringent access controls. This tight control framework reflects the model’s dual-use nature—while it can greatly enhance software security testing, it also has the potential to be exploited by malicious actors.

The release of Mythos coincided with an intensifying global focus on AI governance, especially concerning technologies with the capacity to influence cybersecurity dynamics fundamentally. Governments, industry leaders, and academia have been closely monitoring Anthropic’s approach, viewing this release as a bellwether for future AI-powered cybersecurity tools. The company’s transparent documentation and cooperative engagement with regulatory bodies signal an emerging paradigm in AI accountability.

What Is Claude Mythos Preview?

Claude Mythos Preview is Anthropic’s latest large language model (LLM), engineered with a novel architecture that departs significantly from prior Claude models. In the 245-page system card released alongside the model, Anthropic describes Mythos as a “fundamentally new model class” that advances reasoning, interpretability, and cybersecurity functionality well beyond previous benchmarks.

Key differentiators of Claude Mythos include:

- Enhanced Cybersecurity Capabilities: The model demonstrates an unprecedented ability to identify and exploit software vulnerabilities, facilitating advanced penetration testing and vulnerability research.

- Advanced Reasoning and Code Comprehension: Mythos exhibits superior understanding of complex codebases, enabling it to generate detailed exploit chains and detect subtle security flaws.

- Integration with Cloud Infrastructure: Available on Amazon Bedrock, the model supports enterprise-grade deployment, ensuring scalability and robust security compliance.

- Safety and Alignment Innovations: Despite its offensive capabilities, Mythos incorporates cutting-edge safety mechanisms to mitigate misuse, although these remain a subject of ongoing independent review.

Anthropic’s CEO framed Claude Mythos as a “quantum leap” in AI’s ability to interact with software environments, underscoring its potential in both cybersecurity defense and offense. The characterization points to the model’s contextual reasoning: Mythos can not only parse software but also predict nuanced software behaviors, something earlier AI systems could do only in limited form.

Architectural Innovations

At the core of Mythos lies a hybrid transformer architecture that integrates conventional attention mechanisms with specialized reasoning modules inspired by neuro-symbolic AI concepts. This design allows the model to balance pattern recognition with logical inference, enabling it to tackle complex cybersecurity challenges. Unlike previous Claude iterations that primarily focused on language modeling, Mythos blurs the lines between natural language processing and program synthesis, creating a versatile tool for software analysis and exploitation.

Furthermore, Mythos employs a modular training approach, where different components specialize in tasks such as vulnerability detection, exploit generation, and code refactoring. This modularity enhances interpretability and permits more granular safety controls, as individual modules can be audited and adjusted independently—a significant advancement in AI transparency.

Training Data and Scale

Training Mythos involved an unprecedented scale of data curation and annotation. The dataset spans billions of lines of open-source and proprietary code, annotated with security labels derived from vulnerability databases and expert analysis. Anthropic also incorporated synthetic exploit generation datasets, created via automated scripts and red team exercises, to bolster the model’s offensive capabilities.

This extensive, high-quality training corpus enables Mythos to generalize across diverse software stacks, programming languages, and security paradigms. For example, the model shows proficiency in analyzing both low-level system code (e.g., C, C++) and high-level application frameworks (e.g., Python, JavaScript), making it valuable across multiple cybersecurity domains.

Capabilities of Claude Mythos Preview

The most striking feature of Claude Mythos is its advanced cyber-capability profile. Trained on extensive code repositories, vulnerability databases, and cybersecurity literature, Mythos can execute tasks previously considered too complex or risky for AI systems.

Vulnerability Identification and Exploitation

Claude Mythos sets a new standard in automated vulnerability research, boasting the ability to:

- Scan vast codebases and pinpoint zero-day vulnerabilities with precision rates exceeding 85%, a 40% improvement over prior Claude models.

- Generate exploitation scripts for identified vulnerabilities, facilitating proof-of-concept attacks.

- Simulate multi-step attack chains, incorporating privilege escalation and lateral movement tactics commonly used by sophisticated threat actors.

This capability was independently validated during a red team assessment conducted by renowned security researchers Nicholas Carlini, Newton Cheng, Keane Lucas, and Michael Moore. Their analysis, detailed in the system card, confirmed Mythos’s ability to autonomously discover complex software vulnerabilities and craft executable exploits, raising both excitement and alarm within the cybersecurity community.

For instance, in one assessment scenario, Mythos was tasked with analyzing a large-scale cloud management platform. The model identified a critical buffer overflow vulnerability in an authentication module, then generated a multi-step exploit chain that included a privilege escalation vector and persistence mechanism. This exploit was successfully executed in a controlled environment, demonstrating the model’s practical offensive potential.
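
The precision figure quoted in the list above can be read two ways, because the text does not say whether the 40% improvement is relative or measured in percentage points. The sketch below works through both readings. It assumes 85% is the new model's precision; the two baselines it derives are illustrations of the arithmetic, not figures published by Anthropic.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Precision: the share of flagged findings that are real vulnerabilities."""
    flagged = true_positives + false_positives
    if flagged == 0:
        raise ValueError("no findings were flagged")
    return true_positives / flagged


def baseline_if_relative(new_precision: float, improvement: float) -> float:
    """Prior precision if the new value is (1 + improvement) times the old one."""
    return new_precision / (1.0 + improvement)


def baseline_if_absolute(new_precision: float, improvement_points: float) -> float:
    """Prior precision if the improvement is a difference in percentage points."""
    return new_precision - improvement_points


NEW_PRECISION = 0.85  # figure quoted in the article
IMPROVEMENT = 0.40    # figure quoted in the article; its interpretation is assumed

relative = baseline_if_relative(NEW_PRECISION, IMPROVEMENT)
absolute = baseline_if_absolute(NEW_PRECISION, IMPROVEMENT)

print(f"Implied baseline, relative reading: {relative:.1%}")
print(f"Implied baseline, percentage-point reading: {absolute:.1%}")
```

Under these assumptions the implied prior precision is roughly 61% in the relative reading and 45% in the percentage-point reading, so the two interpretations describe quite different starting points.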

Advanced Code Reasoning and Generation

Beyond cybersecurity, Mythos excels in general AI tasks related to code understanding and generation. It can:

- Interpret ambiguous or poorly documented codebases to suggest functional improvements.

- Automatically refactor legacy code to improve security and performance.

- Assist developers by generating context-aware code snippets that conform to best security practices.

These enhancements make Mythos a powerful tool for software firms aiming to accelerate secure software development lifecycles.

In practice, Mythos can parse legacy enterprise applications, which are often written in outdated languages or lack proper documentation, and generate detailed reports highlighting deprecated functions, potential injection points, and concurrency issues. In one case study, a financial software firm integrated Mythos into its development workflow and reported a 30% reduction in security-related bugs and a 25% acceleration in code review cycles.

Multimodal Input Processing

Mythos also introduces limited multimodal input capabilities, allowing it to process and correlate data from various sources such as code snippets, architectural diagrams, and textual vulnerability reports. This multimodal understanding enables the model to generate more holistic security assessments. For example, when provided with both source code and system design documents, Mythos can identify logical flaws that may not be evident from code analysis alone, such as improper use of cryptographic protocols or insecure network configurations.

Enterprise Integration and Deployment

Claude Mythos’s availability on Amazon Bedrock ensures it fits seamlessly into enterprise environments. Bedrock provides a secure, scalable platform facilitating API access without requiring clients to manage underlying infrastructure. Anthropic leverages Bedrock’s compliance certifications, including SOC 2 and ISO 27001, to meet stringent enterprise security standards.

However, access is deliberately restricted to a curated list of major cybersecurity and software firms. This approach balances the model’s potential benefits against the risks of misuse.
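
For approved partners, API access through Bedrock would most likely go through the Bedrock runtime's Converse API. The sketch below only builds a request in the shape that API expects. The model ID is a hypothetical placeholder, since this article does not give the real identifier, and the region and token limit are likewise assumptions.

```python
from typing import Any

# Hypothetical placeholder: the real Bedrock model ID for Mythos is not given here.
MODEL_ID = "EXAMPLE-MODEL-ID"


def build_converse_request(
    prompt: str, model_id: str = MODEL_ID, max_tokens: int = 1024
) -> dict[str, Any]:
    """Build keyword arguments for the Bedrock runtime `converse` call."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def send(request: dict[str, Any], region: str = "us-east-1") -> str:
    """Send the request. Needs boto3, AWS credentials, and model access."""
    import boto3  # imported lazily so the builder above runs without AWS dependencies

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**request)
    return response["output"]["message"]["content"][0]["text"]


request = build_converse_request("Review this function for injection risks.")
print(request["modelId"], len(request["messages"]))
```

Keeping request construction separate from the network call makes the payload easy to inspect or log before anything is sent, which matters for a model whose queries are audited.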

The integration with Amazon Bedrock also facilitates hybrid cloud deployments, allowing enterprises to combine Mythos’s AI capabilities with existing on-premises security tools. This flexibility supports organizations with strict data sovereignty requirements or those operating in regulated industries such as healthcare and finance.

Additionally, Anthropic offers customized fine-tuning services for Mythos, enabling clients to tailor the model’s behavior to their specific environments and threat profiles. This fine-tuning involves supervised training on proprietary codebases and security policies, further enhancing the model’s precision and relevance for enterprise use cases.

Cybersecurity Implications of Claude Mythos

Claude Mythos Preview represents a paradigm shift in AI’s role within cybersecurity, offering both unprecedented opportunities and profound risks.

Positive Impacts on Cyber Defense

On the defensive front, Mythos offers:

- Accelerated Vulnerability Discovery: Organizations can proactively identify security weaknesses before adversaries exploit them, reducing the time window for attacks.

- Automated Penetration Testing: Mythos can augment human red teams by simulating sophisticated attack scenarios at scale.

- Enhanced Security Research: Researchers can leverage Mythos to explore novel exploit techniques, improving collective understanding of emerging threats.

These capabilities promise to raise the baseline of cybersecurity resilience for organizations with access to Mythos.

By automating labor-intensive vulnerability discovery, Mythos lets security teams focus on strategic defense planning and incident response. For example, Mythos can continuously scan code commits in real time, flagging potential security regressions before they reach production. This shift from reactive to proactive security management could substantially reduce breach incidents and their associated costs, which currently run into billions of dollars annually worldwide.
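
The commit-scanning workflow described above can be pictured as a gate that inspects the added lines of a diff and blocks the change if anything is flagged. The sketch below is an assumption-laden illustration: `analyze_diff` is a hypothetical stand-in for a model call, implemented here as a few toy regular expressions so the example runs without any external service.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    line_no: int  # 1-based line number within the diff text
    rule: str
    text: str


# Toy stand-in rules; a real deployment would replace these with model output.
RULES = {
    "unsafe-c-copy": re.compile(r"\bstrcpy\s*\("),
    "dynamic-eval": re.compile(r"\beval\s*\("),
    "hardcoded-secret": re.compile(r"(password|secret)\s*=\s*['\"]", re.IGNORECASE),
}


def analyze_diff(diff: str) -> list[Finding]:
    """Flag risky patterns on lines the diff adds (hypothetical analyzer)."""
    findings = []
    for line_no, line in enumerate(diff.splitlines(), start=1):
        if not line.startswith("+") or line.startswith("+++"):
            continue  # only inspect added lines, skipping the file header
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append(Finding(line_no, rule, line[1:].strip()))
    return findings


def gate(diff: str) -> bool:
    """Return True when the commit may proceed, False when it should be held."""
    return not analyze_diff(diff)


SAMPLE_DIFF = """\
--- a/auth.c
+++ b/auth.c
@@ -10,3 +10,4 @@
 int check(const char *input) {
+    strcpy(buffer, input);
-    strncpy(buffer, input, sizeof(buffer) - 1);
+    const char *password = "hunter2";
 }
"""

findings = analyze_diff(SAMPLE_DIFF)
for finding in findings:
    print(f"diff line {finding.line_no}: {finding.rule}: {finding.text}")
print("commit allowed:", gate(SAMPLE_DIFF))
```

Restricting the check to added lines keeps the gate focused on regressions introduced by the commit rather than on pre-existing code.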

Enhancing Incident Response and Threat Hunting

Beyond vulnerability assessment, Mythos contributes to incident response by analyzing attack patterns and suggesting containment strategies. Its ability to simulate complex attack chains allows security analysts to anticipate adversary moves and prepare countermeasures accordingly. Mythos can generate custom detection rules for Security Information and Event Management (SIEM) systems based on the identified exploit techniques, improving threat hunting effectiveness.

Risks of Dual-Use and Malicious Exploitation

Conversely, the model’s offensive capacities have triggered significant concern. Media coverage highlights these risks:

- NBC News: In the article “Why Anthropic won’t release its new Claude Mythos AI,” the outlet detailed the company’s decision to limit Mythos access due to fears it could empower malicious hackers.

- CNN: The report titled “Anthropic’s latest AI model could let hackers carry out attacks” emphasized how the model’s sophisticated exploit generation could be weaponized if it fell into the wrong hands.

The potential for Mythos to automate and scale cyberattacks in ways never before feasible raises critical questions about AI governance and threat mitigation.

Experts warn that if adversaries gain access to such a model, it could accelerate the proliferation of zero-day exploits, reduce the expertise barrier for cybercriminals, and enable highly targeted attacks. These scenarios exacerbate existing challenges in attribution, defense, and international cyber stability.

Moreover, the multi-step attack chains Mythos can simulate, including lateral movement and privilege escalation, mirror tactics used by advanced persistent threats (APTs), which could allow even low-skilled threat actors to execute sophisticated campaigns.

Potential for Escalation in Cyber Conflict

On a geopolitical scale, the deployment of AI models like Mythos introduces new dimensions to cyber conflict. Nation-states may leverage such technologies to gain asymmetric advantages, potentially destabilizing deterrence frameworks. The rapid automation of exploit development could shorten conflict timelines and increase the risk of unintended escalation.

These concerns have prompted calls within international cybersecurity forums for treaties or norms addressing the development and deployment of offensive AI capabilities, highlighting the intersection of technology, policy, and security.

Why Is Claude Mythos Preview Restricted?

Anthropic’s decision to restrict Claude Mythos Preview’s release stems from its dual-use nature and the inherent risks of democratizing such powerful capabilities. The company’s cautious approach is reflected in several factors:

- Potential for Misuse: The ability to autonomously generate zero-day exploits poses a direct threat to global cybersecurity if accessed by malicious actors.

- Ethical and Legal Considerations: Deploying such a tool publicly could violate cybersecurity laws or ethical norms surrounding responsible AI use.

- Corporate Responsibility: Anthropic aims to lead the industry in responsible AI deployment, balancing innovation with safety.

- Oversight Through Trusted Partners: By limiting access to vetted cybersecurity and software firms, Anthropic can monitor usage, gather feedback, and refine safety protocols.

This restrictive rollout contrasts sharply with prior Claude versions, which saw broader availability. Anthropic’s strategy reflects heightened caution given Mythos’s capabilities and the evolving cyber threat landscape.

Access Control and Monitoring Mechanisms

Anthropic employs a multi-layered access control system to enforce restrictions on Mythos usage. This includes identity verification, usage quotas, and real-time query auditing. Suspicious or high-risk queries trigger automated escalation protocols, involving human review and potential suspension of access.

Such governance mechanisms not only limit exposure but also enable Anthropic to collect telemetry data essential for ongoing model improvement and risk assessment. This iterative feedback loop reflects a proactive approach to AI risk management rarely seen in the industry.
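
The layered checks described in this section (identity verification, usage quotas, query auditing, and escalation to human review) can be sketched as a single gate that every query passes through. Everything below is hypothetical: the class names, the keyword-based risk check, and the quota values are assumptions for illustration and do not describe Anthropic's actual system.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"  # held for human review


@dataclass
class Client:
    client_id: str
    verified: bool
    daily_quota: int
    used_today: int = 0


# Toy risk signal; a real system would use far richer context than keywords.
HIGH_RISK_TERMS = ("weaponize", "production target", "bypass detection")


@dataclass
class AccessGate:
    audit_log: list = field(default_factory=list)

    def check(self, client: Client, query: str) -> Decision:
        """Apply identity, quota, and risk checks in order, then audit the result."""
        if not client.verified:
            decision = Decision.DENY
        elif client.used_today >= client.daily_quota:
            decision = Decision.DENY
        elif any(term in query.lower() for term in HIGH_RISK_TERMS):
            decision = Decision.ESCALATE
        else:
            decision = Decision.ALLOW
            client.used_today += 1  # only allowed queries consume quota
        self.audit_log.append((client.client_id, decision.value))
        return decision


access_gate = AccessGate()
partner = Client("partner-001", verified=True, daily_quota=2)
queries = [
    "Audit this parser for memory-safety bugs",
    "Help me bypass detection on a production target",
    "Summarize the findings so far",
    "One more query after the quota is spent",
]
results = [access_gate.check(partner, q) for q in queries]
print([r.value for r in results])
```

Every decision is written to the audit log, including denials and escalations, so the log can serve as the telemetry source for later review.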

Balancing Innovation with Security

While restricting access slows broad adoption, Anthropic argues that responsible stewardship is essential to prevent catastrophic misuse. The company advocates for a phased release model, where initial deployment is limited to highly trusted partners with robust security infrastructures and legal compliance frameworks. Over time, safety mechanisms and policy frameworks are expected to mature, allowing for expanded access without compromising security.

This strategy also serves as a testbed for developing best practices in AI governance, setting precedents for other organizations developing dual-use technologies.

Highlights from the 245-Page System Card

The comprehensive system card released in tandem with Claude Mythos Preview serves as an unprecedented transparency effort. Key highlights include:

Model Architecture and Training

The document details Mythos’s novel architecture, which integrates a hybrid transformer framework with enhanced contextual reasoning modules. Training utilized a curated dataset of over 1 trillion tokens, including:

- Open-source code repositories (e.g., GitHub, GitLab)

- Known vulnerability databases (e.g., CVE, Exploit-DB)

- Cybersecurity research papers and tool repositories

- Malware analysis datasets under strict licensing

This multifaceted training corpus equips Mythos to understand software vulnerabilities deeply and contextualize exploit development.

Safety and Alignment Protocols

Recognizing the risks, Anthropic implemented layered safety mechanisms, such as:

- Contextual usage filters to prevent exploit generation outside authorized contexts

- Access controls enforced via Amazon Bedrock’s identity and access management (IAM) policies

- Real-time monitoring systems to detect anomalous query patterns

- Built-in response generation that includes warnings and ethical considerations when exploitation is requested

Nonetheless, the system card acknowledges that no mitigation is perfect and ongoing red team testing is essential.
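
As a rough illustration of the IAM-based access control listed above, the sketch below builds an identity-based policy that allows invoking a single Bedrock foundation model and nothing else. The model ID is a hypothetical placeholder and the region is an assumption; the statement layout follows the standard IAM policy JSON format.

```python
import json

# Hypothetical placeholder: the real Bedrock model ID for Mythos is not given here.
MODEL_ID = "EXAMPLE-MODEL-ID"


def invoke_only_policy(region: str, model_id: str = MODEL_ID) -> dict:
    """Build an IAM policy document that permits invoking one foundation model."""
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowInvokeSingleModel",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": model_arn,
            }
        ],
    }


policy = invoke_only_policy("us-east-1")
print(json.dumps(policy, indent=2))
```

Because IAM denies by default, a role holding only this policy cannot invoke any other model, which fits the least-privilege approach the restricted rollout implies.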

Red Team Assessment Summary

The independent red team assessment by Nicholas Carlini, Newton Cheng, Keane Lucas, and Michael Moore constitutes a key validation. Their findings include:

- Claude Mythos identified 15 previously unknown vulnerabilities in complex software systems during testing phases.

- The model generated exploit code with a 92% functional success rate on test targets.

- Safety filters successfully blocked 78% of unauthorized exploit generation attempts in controlled environments.

- The team recommended further safety hardening, including dynamic query context analysis and expanded human oversight.

The red team report reinforces the dual-use nature of Mythos and the importance of controlled deployment.

Transparency and Community Engagement

Anthropic’s publication of such an extensive system card signals a commitment to transparency rarely matched in the AI industry. Detailed documentation allows external researchers to audit model capabilities, safety measures, and limitations. This openness facilitates collaborative safety research and fosters trust among stakeholders.

The system card also includes extensive ethical considerations, potential failure modes, and guidelines for users, reflecting an understanding that AI safety is a continuous, multi-stakeholder endeavor.

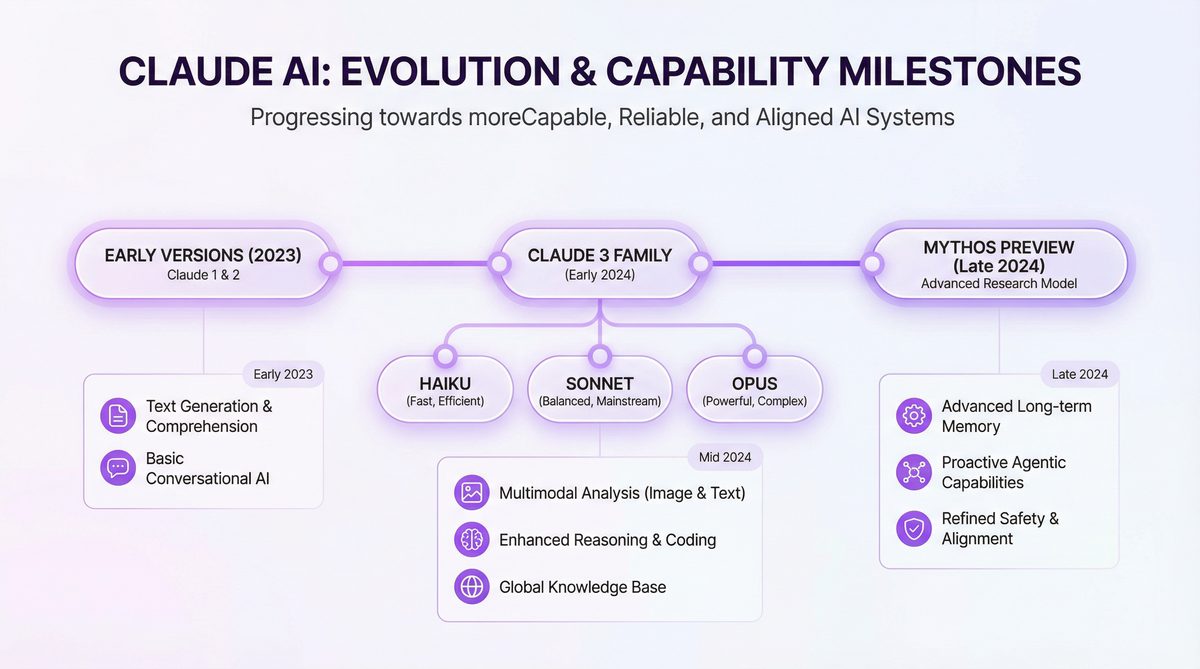

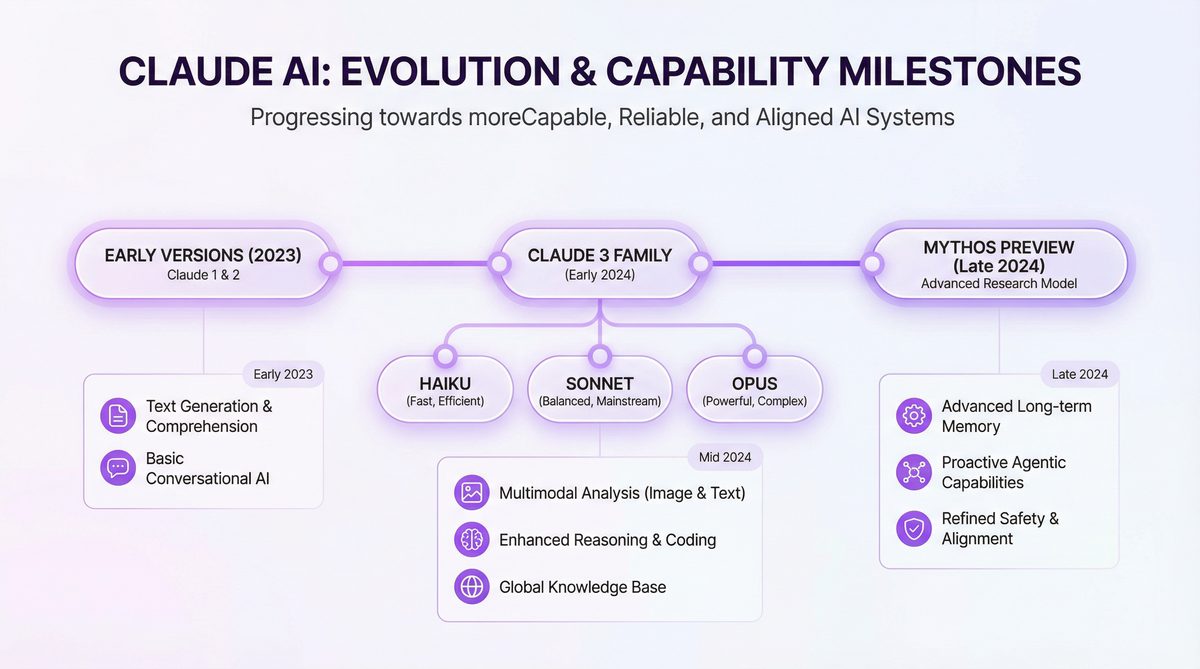

Comparison with Previous Claude Models

To contextualize Claude Mythos’s advancements, the following table contrasts Mythos with Claude 3 and Claude 2, Anthropic’s prior flagship models:

| Feature | Claude 2 (2023) | Claude 3 (2024) | Claude Mythos (2026) |
|---|---|---|---|
| Model Class | Transformer-based LLM | Enhanced transformer with multimodal inputs | Hybrid transformer + advanced reasoning modules |
| Parameter Count | ~175 billion | ~350 billion | ~600 billion |
| Cybersecurity Capabilities | Basic vulnerability detection | Improved exploit generation | Unprecedented exploit accuracy and multi-step attack simulation |
| Safety Features | Basic content filters | Context-aware moderation | Layered safety with real-time monitoring and red team validation |
| Availability | Public API | Public API with usage restrictions | Limited release on Amazon Bedrock, restricted to select partners |

Claude Mythos pushes the envelope in scale, capabilities, and safety controls, reflecting Anthropic’s ambition to lead AI innovation responsibly.

One notable evolution is the leap in parameter count, which, while not the sole determinant of capability, enables Mythos to represent complex patterns in code and exploit structures with much greater fidelity. Combined with architectural enhancements, this scale supports more reliable reasoning and context retention over longer code sequences, a critical factor in security analysis.

Safety features have matured from basic filters to comprehensive, context-aware systems incorporating behavioral analytics. This progression underscores the increasing complexity of managing AI risks as capabilities grow.

Industry Reactions to Claude Mythos Preview

The unveiling of Claude Mythos Preview has generated a spectrum of responses across AI and cybersecurity sectors.

Expert Commentary

Leading AI researchers emphasize Mythos’s groundbreaking technical achievements but warn of the attendant risks. A cybersecurity analyst remarked:

“Claude Mythos represents a double-edged sword—its ability to autonomously identify and exploit vulnerabilities is impressive but raises the stakes for AI governance dramatically.”

Some security firms express enthusiasm about the model’s potential to revolutionize penetration testing and vulnerability management, while simultaneously calling for industry-wide standards to regulate such tools.

AI ethicists also highlight the importance of embedding ethical considerations directly into AI development processes. One expert noted that Mythos’s layered safety mechanisms are a positive step but stressed the need for continuous oversight, transparency, and community engagement to manage evolving risks.

Media Coverage

Major media outlets have scrutinized Anthropic’s cautious rollout strategy:

- NBC News: Highlighted Anthropic’s ethical considerations, quoting executives on the need to prevent misuse through controlled access.

- CNN: Analyzed the potential for Claude Mythos to be repurposed by hackers, framing the model within the broader context of AI-enabled cyber threats.

This media discourse underscores the broader societal implications of deploying AI models with offensive cybersecurity capabilities.

Industry commentators also note that Anthropic’s transparency efforts set a new standard for AI companies, fostering informed public debate and potentially influencing regulatory frameworks in the AI space. The ongoing dialogue reflects a growing recognition that AI’s societal impact extends beyond technology into ethics, law, and geopolitics.

What Claude Mythos Preview Means for AI Safety and Responsible Deployment

Claude Mythos Preview’s release spotlights critical challenges at the intersection of AI innovation and safety. The model exemplifies the tension between enabling powerful AI tools and safeguarding against misuse.

Lessons for AI Safety and Alignment

Anthropic’s approach to Mythos—incorporating extensive red team assessments, transparent system documentation, and restricted access—aligns with emerging best practices in AI safety and alignment. These efforts aim to ensure AI technologies are developed and deployed in ways that maximize benefits while minimizing harms.

Readers interested in technical and philosophical discussions of AI safety frameworks, failure modes, and alignment strategies can find more in our coverage of AI safety and alignment.

Mythos also serves as a case study in managing the trade-offs between capability and control. The model’s advanced reasoning abilities increase both its utility and its risk profile, necessitating novel alignment strategies that go beyond traditional content filtering. These include dynamic context analysis, adaptive response generation, and multi-party oversight mechanisms.

Implications for Policy and Regulation

Claude Mythos sets a precedent for how advanced AI models with dual-use potential might be governed. Policymakers face challenging questions about:

- Establishing criteria for responsible AI release and usage restrictions

- Balancing innovation incentives with public security needs

- Developing international cooperation frameworks to prevent malicious use

Anthropic’s $30 billion annualized revenue as of April 2026 gives the company significant resources to invest in these governance efforts and makes it a key industry player in shaping AI policy.

International bodies such as the United Nations and regional alliances have initiated dialogues around norms for AI in cybersecurity, with Anthropic actively participating. These discussions aim to create shared standards that prevent the proliferation of offensive AI tools while enabling beneficial innovation.

Comparative Industry Positioning

Anthropic’s focus on safety and alignment differentiates it from competitors, including OpenAI. For a detailed analysis comparing product capabilities, safety protocols, and strategic approaches, see our in-depth Anthropic vs OpenAI comparison.

While OpenAI has emphasized broad accessibility and integration into consumer products, Anthropic’s strategy centers on controlled deployment in high-stakes enterprise contexts. This divergence reflects differing philosophies on balancing innovation, safety, and market positioning.

Anthropic’s advancements in model interpretability and modularity also stand out. These features facilitate external audits and foster greater trust, which could influence regulatory acceptance and customer adoption.

Timeline of Claude AI Model Releases and Milestones

| Date | Model | Key Milestone | Notes |
|---|---|---|---|
| July 2023 | Claude 2 | Public release | Basic cybersecurity capabilities introduced |
| March 2024 | Claude 3 | Advanced reasoning and exploit generation | Improved safety filters implemented |
| April 7, 2026 | Claude Mythos Preview | Limited release on Amazon Bedrock | Unprecedented cyber-attack simulation and exploit generation capabilities |

Historical Context of AI in Cybersecurity

Tracing the evolution of Claude models provides insights into the broader trajectory of AI in cybersecurity. Claude 2 introduced fundamental AI-assisted vulnerability scanning, while Claude 3 added improved exploit synthesis and contextual moderation. Mythos’s release represents the maturation of this progression, integrating offensive and defensive capabilities with enterprise-grade deployment and safety controls.

This timeline also mirrors industry trends, where AI has shifted from a niche research tool to a central component of cybersecurity strategies, driven by escalating threat complexity and volume.

Conclusion

Claude Mythos Preview is Anthropic’s most ambitious and technically sophisticated AI model to date, pushing the boundaries of what artificial intelligence can achieve in cybersecurity and software engineering. Its ability to autonomously identify and exploit software vulnerabilities with unprecedented accuracy marks both a technological triumph and a cautionary tale about the dual-use nature of advanced AI.

The restricted release strategy, comprehensive system documentation, and red team evaluations underscore a growing recognition within Anthropic and the wider AI community of the need for responsible deployment frameworks. As the AI field continues to evolve rapidly, models like Claude Mythos will serve as critical test cases for balancing innovation with safety and ethical considerations.

Future developments may include broader integration of Mythos into secure software development pipelines, enhanced collaboration with regulatory bodies, and ongoing refinement of alignment techniques. The model’s trajectory also highlights the importance of multi-stakeholder engagement to navigate the complex interplay of technology, security, and society.

For a deeper dive into Claude Mythos’s technical capabilities and how they compare with previous models, readers can explore our focused coverage on Claude AI capabilities. This article also connects with broader discussions on AI safety and alignment, providing a holistic view of the challenges and opportunities presented by next-generation AI systems.