As AI models have grown more capable, the ways we interact with them have evolved from simple, static prompts to sophisticated, dynamic systems. In 2026, the shift from traditional prompt engineering to what is now called context design marks a major step in getting the most out of AI models like ChatGPT (GPT-5.4) and Claude (Opus 4.6). This guide traces that evolution: it defines context design, sets out its core principles and practical techniques, and explains why it is indispensable for enterprise AI adoption.

The Evolution Timeline: From Basic Prompts to Context Design

The journey from early prompt engineering to context design can be mapped across a few key milestones:

- 2022 – Basic Prompts: Initial AI interactions relied on static, single-turn prompts, often requiring precise wording to coax the desired output.

- 2023 – Chain-of-Thought Prompting: Users began guiding models to articulate reasoning steps, improving transparency and accuracy.

- 2024 – Memory and History Integration: Conversations started incorporating session memory, enabling multi-turn dialogues to maintain coherent context.

- 2025 – Context Linking: Models could dynamically reference external documents and previous interactions, linking disparate data points.

- 2026 – Context Design: The present state, where entire ecosystems of data and instructions are orchestrated dynamically within prompts, adapting continuously as interactions unfold.

This timeline reflects a gradual but decisive shift from static communication towards dynamic, evolving ecosystems of information that maximize AI effectiveness.

What is Context Design?

Context design is the practice of orchestrating multiple, evolving data streams and instructions within a prompt to create a fluid information ecosystem that updates as the AI interaction progresses. Unlike traditional prompt engineering, which treats prompts as fixed inputs, context design treats prompts as living, adaptive constructs.

It involves:

- Aggregating data from multiple sources (user inputs, external documents, databases, memory)

- Structuring instructions that adapt based on the current interaction state

- Leveraging dynamic context windows that evolve rather than static snapshots

Think of it as designing a multi-layered conversation environment where the AI continuously updates its understanding and instructions based on real-time data flows. This approach enables more accurate, relevant, and creative AI outputs.

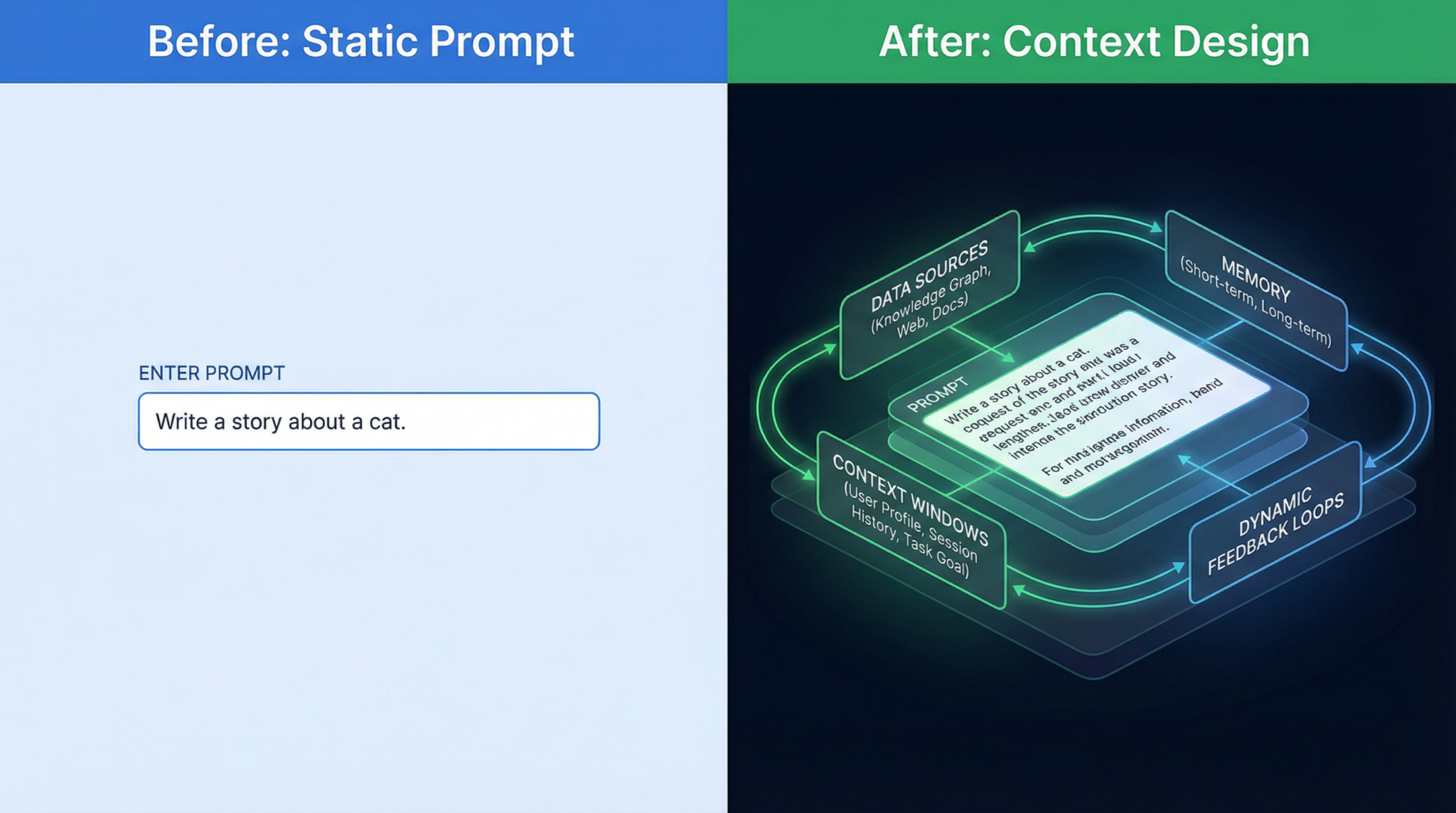

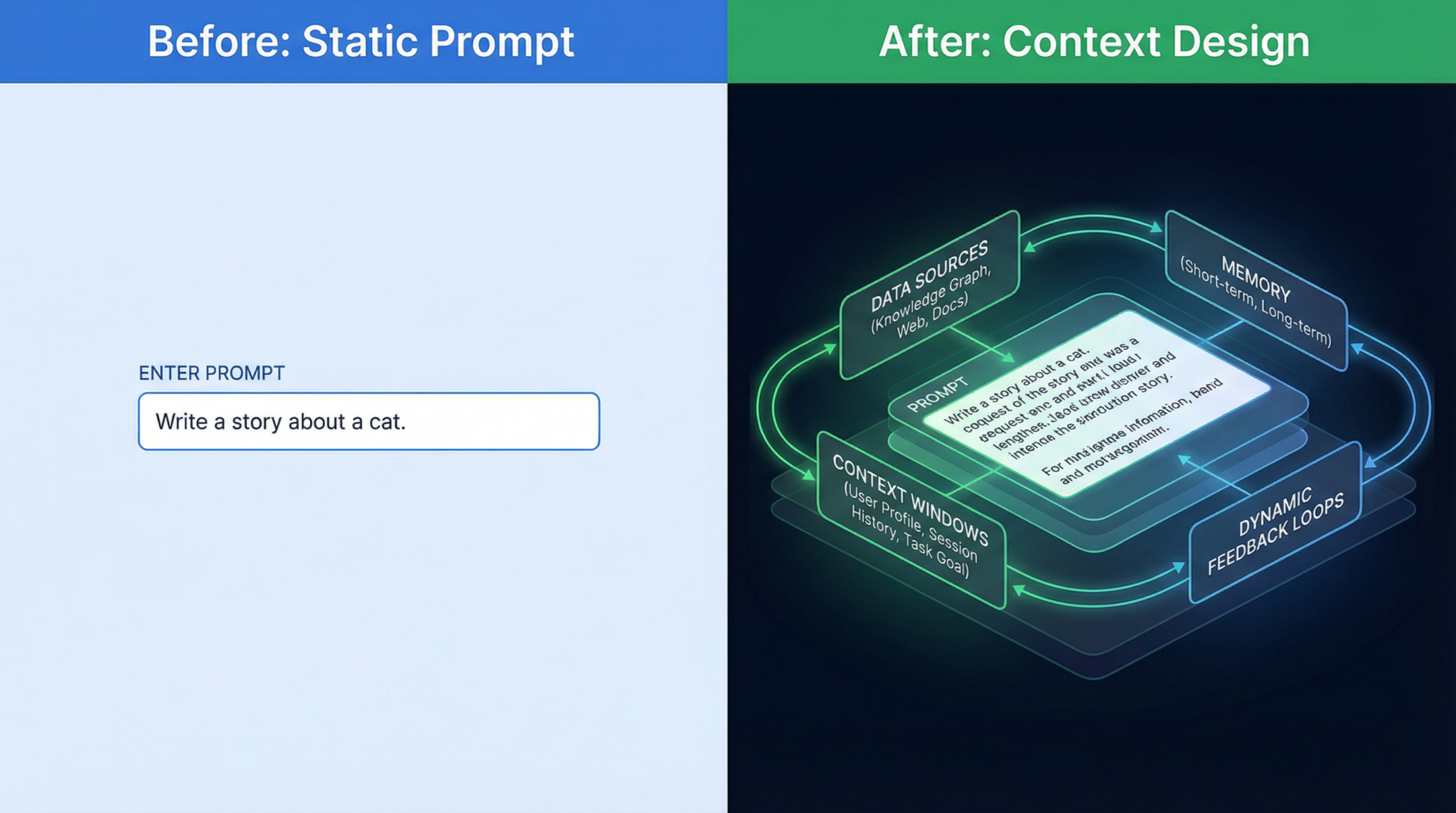

The Shift: Static Prompts to Dynamic Information Ecosystems

Traditional prompts were often single-turn and fixed: a user provides a question or command, and the AI responds. This works well for simple tasks but hits limitations for complex workflows requiring persistent awareness and adaptive instructions.

Context design moves beyond this by:

- Maintaining and updating context windows dynamically rather than sending fixed prompt snapshots.

- Orchestrating multiple inputs—such as user history, external knowledge bases, and real-time data—to inform the AI.

- Allowing the prompt itself to evolve through meta-instructions that refine AI behavior as the interaction unfolds.

These capabilities form a foundation for advanced applications like real-time decision support, multi-agent collaboration, and enterprise-grade automation.
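As a rough illustration of orchestrating multiple inputs, the sketch below merges several sources into one labelled context block. The source names, the `Source` type, and the `assemble_context` helper are hypothetical, chosen only for this example; real sources would be database or API calls rather than lambdas.

```python
from typing import Callable

# A source takes the user query and returns text to include (possibly empty).
Source = Callable[[str], str]

def assemble_context(query: str, sources: dict[str, Source]) -> str:
    """Call each source in order and join the non-empty results into labelled sections."""
    sections = []
    for name, fetch in sources.items():
        text = fetch(query).strip()
        if text:  # skip sources that have nothing to contribute
            sections.append(f"[{name}]\n{text}")
    sections.append(f"[question]\n{query}")
    return "\n\n".join(sections)

# Hypothetical stand-ins for user history, a knowledge base, and a live feed.
sources = {
    "history": lambda q: "User asked about Q1 revenue yesterday.",
    "knowledge_base": lambda q: "",
    "live_data": lambda q: "Q1 revenue: 4.2M",
}
context = assemble_context("How did Q1 go?", sources)
```

Dropping empty sections keeps the context focused on sources that actually returned something for the current query.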

See "Chain-of-Thought Prompting Techniques" for a detailed look at the intermediate step of 2023, which laid the groundwork for today's context design by scaffolding AI reasoning within prompts.

Five Core Principles of Context Design

Successful context design hinges on five interrelated principles that govern how dynamic prompts are structured and maintained:

- Dynamic Context Windows: Instead of sending a static text snapshot, context windows are continuously updated to reflect new information, user inputs, and evolving AI states. This dynamic windowing prevents information overload and keeps the AI focused on relevant data.

- Multi-Source Data Orchestration: Context design integrates data from diverse sources (internal memory, APIs, databases, documents, and live feeds), combining them seamlessly within the prompt environment to enrich AI understanding.

- Adaptive Instruction Layering: Instructions are layered and adapted on-the-fly based on current context, user preferences, and evolving goals. This layering helps the AI prioritize tasks, apply domain-specific rules, and switch modes dynamically.

- Memory-Aware Prompt Architecture: The prompt architecture actively manages AI memory, deciding what to retain, forget, or highlight. Memory awareness ensures continuity across sessions and reduces redundancy.

- Agent-Native Prompt Patterns: Prompts are designed to leverage agent capabilities native to the AI platform, such as invoking plugins, calling APIs, or performing reasoning chains. This approach unlocks advanced functionalities embedded in models like GPT-5.4 and Claude Opus 4.6.
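To make the memory-aware principle more concrete, here is a minimal sketch of a retain/forget/highlight policy. The `MemoryItem` fields, the `PIN_THRESHOLD` cut-off, and the age-based forgetting rule are all assumptions made for illustration, not a description of how any particular model manages memory.

```python
from dataclasses import dataclass

PIN_THRESHOLD = 3  # hypothetical cut-off: items this important are never aged out

@dataclass
class MemoryItem:
    text: str
    importance: int  # higher means more worth highlighting
    last_used: int   # turn number at which the item was last referenced

def select_memory(items: list[MemoryItem], current_turn: int,
                  max_age: int, max_items: int) -> list[MemoryItem]:
    """Forget stale low-importance items, then keep the most important survivors."""
    retained = [
        m for m in items
        if m.importance >= PIN_THRESHOLD or current_turn - m.last_used <= max_age
    ]
    # Most important first; ties are broken by how recently the item was used.
    retained.sort(key=lambda m: (m.importance, m.last_used), reverse=True)
    return retained[:max_items]

items = [
    MemoryItem("User prefers metric units", importance=3, last_used=1),
    MemoryItem("Asked about weather", importance=1, last_used=2),
    MemoryItem("Deadline is Friday", importance=2, last_used=9),
    MemoryItem("Said hello", importance=0, last_used=8),
]
kept = select_memory(items, current_turn=10, max_age=5, max_items=2)
```

In this example the old but important preference survives, the stale weather question is forgotten, and the greeting is dropped because only two slots are available.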

Why These Principles Matter

By adhering to these principles, AI applications can:

- Maintain relevance and coherence over extended interactions

- Reduce hallucination and irrelevant outputs through better data integration

- Scale across complex workflows by modularizing prompt elements

- Enable seamless collaboration between human users and AI agents

See "Memory and History Integration in Large Language Models" for how the memory-aware prompt architectures of 2024 evolved into a cornerstone of context design.

Practical Techniques for Implementing Context Design

Below are actionable techniques tailored for modern AI models such as ChatGPT (GPT-5.4) and Claude (Opus 4.6), designed to operationalize context design principles.

1. System Prompt Architecture with Conditional Assembly

System prompts now act as dynamic templates that assemble instructions conditionally based on the current session state or user role. For instance:

    {
      "system_prompt": [
        {"condition": "user_role == 'analyst'", "content": "Prioritize data accuracy and detailed explanations."},
        {"condition": "task == 'summary'", "content": "Focus on concise, high-level overviews."},
        {"default": "Maintain a professional tone and verify sources."}
      ]
    }

This approach allows the AI to switch behavioral modes seamlessly without reprogramming the prompt manually.
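One way the conditional rules above might be evaluated is sketched below. The `matches` and `assemble_system_prompt` helpers are hypothetical, the parser only understands `key == 'value'` conditions, and treating the `default` entry as a fallback used when no condition matches is an assumption, since the template itself does not say how it applies.

```python
import re

RULES = [
    {"condition": "user_role == 'analyst'",
     "content": "Prioritize data accuracy and detailed explanations."},
    {"condition": "task == 'summary'",
     "content": "Focus on concise, high-level overviews."},
    {"default": "Maintain a professional tone and verify sources."},
]

# Only conditions of the form  key == 'value'  are supported in this sketch.
_CONDITION = re.compile(r"^\s*(\w+)\s*==\s*'([^']*)'\s*$")

def matches(condition: str, state: dict) -> bool:
    """Check a `key == 'value'` condition against the session state without eval()."""
    m = _CONDITION.match(condition)
    if m is None:
        raise ValueError(f"unsupported condition: {condition!r}")
    key, expected = m.groups()
    return state.get(key) == expected

def assemble_system_prompt(rules: list[dict], state: dict) -> str:
    """Join the content of every matching rule; fall back to the default if none match."""
    selected = [r["content"] for r in rules
                if "condition" in r and matches(r["condition"], state)]
    if not selected:  # assumption: "default" is a fallback, not an always-on rule
        selected = [r["default"] for r in rules if "default" in r]
    return "\n".join(selected)

analyst_prompt = assemble_system_prompt(RULES, {"user_role": "analyst", "task": "summary"})
guest_prompt = assemble_system_prompt(RULES, {"user_role": "guest"})
```

Parsing the condition with a regular expression instead of `eval()` keeps the template from executing arbitrary code if it is ever edited by an untrusted party.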

2. Dynamic Context Windowing Strategies

To manage token limits effectively, implement rolling context windows that prioritize recent and relevant information. For example, a sliding window might keep:

- Latest user inputs

- Key facts extracted from prior interactions

- Critical instructions or constraints

Automated summarization of older content helps compress context to preserve essential details.
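A rolling window of this kind could look roughly like the sketch below. The `Turn` structure, the `pinned` flag for critical instructions, and the whitespace-based token estimate are assumptions for illustration; a production system would count tokens with the model's own tokenizer and would summarize the dropped turns rather than discard them.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    role: str
    text: str
    pinned: bool = False  # critical instructions or constraints that are never dropped

def estimate_tokens(text: str) -> int:
    """Crude whitespace word count, standing in for a real tokenizer."""
    return len(text.split())

def build_window(turns: list[Turn], budget: int) -> list[Turn]:
    """Keep every pinned turn, then fill the remaining budget with the newest turns."""
    remaining = budget - sum(estimate_tokens(t.text) for t in turns if t.pinned)
    kept = set()
    for i in range(len(turns) - 1, -1, -1):  # walk from newest to oldest
        turn = turns[i]
        if turn.pinned:
            continue
        cost = estimate_tokens(turn.text)
        if cost > remaining:
            break  # stop at the first turn that does not fit, keeping the window contiguous
        kept.add(i)
        remaining -= cost
    # Return the survivors in their original chronological order.
    return [t for i, t in enumerate(turns) if t.pinned or i in kept]

turns = [
    Turn("system", "Answer in English only", pinned=True),
    Turn("user", "one two three four"),
    Turn("assistant", "five six"),
    Turn("user", "seven eight nine"),
]
window = build_window(turns, budget=9)
```

With a budget of nine estimated tokens, the pinned instruction and the two most recent turns fit, and the oldest user turn falls out of the window.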

3. Retrieval-Augmented Prompting

Incorporate external knowledge by querying databases or document stores during the prompt assembly phase. For example:

    Retrieve top-3 relevant documents from knowledge base for query: "Quarterly sales analysis"
    Insert summaries into prompt before user question
    Ask AI to answer using retrieved data only

Grounding the answer in retrieved material this way can reduce hallucinations and improve factual accuracy.
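The three retrieval steps above can be sketched as follows. This is a toy version under stated assumptions: the knowledge base is an in-memory dictionary, relevance is plain word overlap rather than embedding search, and the full document text is inserted where a real pipeline would insert summaries. The function names are hypothetical.

```python
def score(query: str, doc: str) -> int:
    """Count distinct query words that also appear in the document (case-insensitive)."""
    return len(set(query.lower().split()) & set(doc.lower().split()))

def retrieve(query: str, docs: dict[str, str], k: int = 3) -> list[str]:
    """Return ids of the k best-matching documents, ignoring ones with no overlap."""
    ranked = sorted(docs, key=lambda doc_id: score(query, docs[doc_id]), reverse=True)
    return [doc_id for doc_id in ranked if score(query, docs[doc_id]) > 0][:k]

def build_prompt(query: str, docs: dict[str, str], k: int = 3) -> str:
    """Place the retrieved sources before the question and restrict the answer to them."""
    sources = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

docs = {
    "a": "quarterly sales rose in the north region",
    "b": "office party schedule",
    "c": "sales analysis for the quarterly report",
}
prompt = build_prompt("Quarterly sales analysis", docs)
```

Tagging each source with its id lets the model cite where a claim came from, which makes the answer easier to check afterwards.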

4. Chain-of-Verification to Reduce Hallucinations

Prompt the model to verify its outputs step-by-step, cross-checking against known data sources integrated via multi-source orchestration:

    Step 1: Generate initial response
    Step 2: Cross-verify each claim with external data
    Step 3: Highlight any unverifiable points for user review

Such chains increase transparency and trustworthiness, especially in enterprise contexts.
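A minimal sketch of these three steps is shown below. Both `generate` (the model call) and `is_supported` (the lookup against external data) are hypothetical callables supplied by the caller, and splitting the draft on full stops is a deliberately naive way to extract claims.

```python
from typing import Callable

def chain_of_verification(
    question: str,
    generate: Callable[[str], str],
    is_supported: Callable[[str], bool],
) -> dict:
    """Generate a draft, check each claim, and separate out the unverifiable ones."""
    draft = generate(question)  # Step 1: initial response
    # Naive claim extraction: one claim per sentence, split on full stops.
    claims = [s.strip() for s in draft.split(".") if s.strip()]
    verified, unverified = [], []
    for claim in claims:  # Step 2: cross-verify each claim
        (verified if is_supported(claim) else unverified).append(claim)
    # Step 3: the unverified list is what gets highlighted for user review.
    return {"draft": draft, "verified": verified, "unverified": unverified}

# Stand-ins for a model and a trusted data source, used only to exercise the flow.
known_facts = {"Revenue grew 12%"}
result = chain_of_verification(
    "How did the quarter go?",
    generate=lambda q: "Revenue grew 12%. Churn fell to 3%.",
    is_supported=lambda claim: claim in known_facts,
)
```

In practice the verification step is often a second model call or a retrieval query, but the control flow stays the same.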

5. Meta-Prompting for Self-Improving Instructions

Use meta-prompts that ask the AI to critique and improve its own instructions or clarifications:

    “Review the previous instructions and suggest improvements for clarity and efficiency.”

This iterative self-refinement yields more robust and adaptable prompt structures over time.
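The meta-prompt can be wrapped in a small refinement loop, sketched below. The `model` callable is a hypothetical stand-in for a real model call, and stopping when the model returns the instructions unchanged is an assumed convergence rule.

```python
from typing import Callable

META_PROMPT = (
    "Review the previous instructions and suggest improvements "
    "for clarity and efficiency."
)

def refine_instructions(instructions: str, model: Callable[[str], str],
                        rounds: int = 2) -> list[str]:
    """Return every version of the instructions, with the original first."""
    history = [instructions]
    for _ in range(rounds):
        revised = model(
            f"{META_PROMPT}\n\nInstructions:\n{history[-1]}\n\n"
            "Return only the revised instructions."
        ).strip()
        if not revised or revised == history[-1]:
            break  # nothing changed, so treat the instructions as converged
        history.append(revised)
    return history

# A fake model that always returns the same rewrite, used only to show the loop.
versions = refine_instructions(
    "Please kindly summarize the report, in about five bullets or so.",
    model=lambda prompt: "Summarize the report in five bullets.\n",
    rounds=3,
)
```

Keeping the full history rather than only the latest version makes it possible to review, or roll back, what the model changed.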

See "Retrieval-Augmented Prompting Best Practices" for detailed methodologies and tooling recommendations for integrating external data sources effectively within prompts.

The Claude Code System Prompt Leak: A Case Study in Dynamic Prompt Assembly

In late 2025, the leaked Claude Code system prompt revealed how Opus 4.6 leverages dynamic prompt assembly at scale. The leak showcased:

- A modular prompt architecture that conditionally includes data and instructions based on real-time user inputs and task requirements

- Multi-layered context processing pipelines that merge memory, external retrievals, and agent instructions before final AI consumption

- Meta-instruction frameworks that enable Claude to self-modify prompts for improved accuracy and tone

This revelation solidified the industry’s understanding that context design is not a theoretical concept but a practical, implemented reality powering next-generation AI interactions.

Real Examples: Static Prompt vs. Context Design

Example Task: Generate a Market Analysis Summary

Before (Static Prompt)

    “Provide a summary of the current market trends in renewable energy.”

Limitations: No additional context, no recent data, and no instruction on style or depth.

After (Context Design)

    {
      "system_prompt": "You are an expert market analyst specializing in renewable energy.",
      "context": {
        "user_profile": "Senior analyst",
        "recent_data": "Retrieved 5 latest market reports from Q1 2026",
        "instructions": "Summarize key trends, highlight risks, and suggest actionable strategies in a concise format."
      },
      "interaction_state": "First summary request in session",
      "task": "market_analysis_summary"
    }

The AI dynamically assembles relevant data and instructions, adapts output depth for the user role, and updates context windows for follow-up queries.
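A structured specification like this still has to be flattened into something a model can consume. The sketch below shows one possible rendering into a list of role/content messages, a shape many chat interfaces accept; the `to_messages` helper and the exact layout of the system text are assumptions, not a documented format.

```python
SPEC = {
    "system_prompt": "You are an expert market analyst specializing in renewable energy.",
    "context": {
        "user_profile": "Senior analyst",
        "recent_data": "Retrieved 5 latest market reports from Q1 2026",
        "instructions": "Summarize key trends, highlight risks, and suggest "
                        "actionable strategies in a concise format.",
    },
    "interaction_state": "First summary request in session",
    "task": "market_analysis_summary",
}

def to_messages(spec: dict, user_request: str) -> list[dict]:
    """Flatten the spec into one system message followed by the user's request."""
    context_lines = [f"{key}: {value}" for key, value in spec["context"].items()]
    system = "\n".join([spec["system_prompt"], f"task: {spec['task']}", *context_lines])
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_request},
    ]

messages = to_messages(SPEC, "Summarize current renewable energy trends.")
```

Because the specification is plain data, a follow-up query can reuse it with an updated `recent_data` or `interaction_state` value and be rendered again.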

Example Task: Customer Support Response

Before

    “Respond to the customer complaint about delayed shipment.”

After

    {
      "system_prompt": "You are a customer support agent with access to the order database.",
      "context": {
        "customer_data": "Order #12345, shipped 5 days late due to supply chain issues",
        "company_policy": "Offer 10% discount for delays over 3 days",
        "tone": "Empathetic and professional"
      },
      "task": "compose_response"
    }

This structured, dynamic prompt ensures the AI response is personalized, policy-compliant, and context-aware.
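One design choice worth illustrating is applying the policy in code before the prompt is built, so the model is told the outcome instead of being asked to work it out. The sketch below uses the 3-day threshold and 10% discount from the example; the function names and the wording for the no-compensation case are hypothetical.

```python
from typing import Optional

def compensation(days_late: int, threshold_days: int = 3,
                 discount_pct: int = 10) -> Optional[str]:
    """Return the discount owed for a delay, or None if the delay is within policy."""
    if days_late > threshold_days:
        return f"{discount_pct}% discount"
    return None

def build_support_context(order_id: str, days_late: int, reason: str) -> dict:
    """Build the context block with the policy decision already applied."""
    offer = compensation(days_late)
    return {
        "customer_data": f"Order #{order_id}, shipped {days_late} days late due to {reason}",
        "company_policy": f"Offer {offer}" if offer else "No compensation applies",
        "tone": "Empathetic and professional",
    }

late_order = build_support_context("12345", 5, "supply chain issues")
on_time_order = build_support_context("67890", 2, "weather")
```

Deciding the discount deterministically keeps the response policy-compliant even if the model misreads the numbers.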

Tools and Frameworks for Context Design Implementation

Several modern frameworks and tools facilitate context design workflows:

- LangChain (v1.9+): Supports dynamic prompt templates, memory management, and retrieval augmentation across multiple LLMs.

- PromptLayer: Enables version control and conditional prompt assembly with integrated analytics for iterative improvements.

- OpenAI Functions and Plug-ins: Provide agent-native capabilities to embed API calls and dynamic data retrieval directly within conversations.

- Claude’s Context Manager API: Allows developers to orchestrate multi-source context flows and adaptive instruction layering programmatically.

Combining these tools with custom orchestration layers allows enterprises to deploy sophisticated context design architectures tailored to their workflows and compliance requirements.

Why Context Design is Critical for Enterprise AI Adoption

Enterprises demand AI solutions that are:

- Reliable: Ensuring factual accuracy and reducing hallucinations through dynamic verification chains

- Scalable: Managing complex workflows and multi-agent collaboration with adaptive prompts

- Customizable: Tailoring AI behavior dynamically to user roles, domains, and evolving business needs

- Compliant: Embedding policy constraints and audit trails within prompt architectures

Context design addresses these imperatives by moving beyond brittle, one-off prompt hacks towards resilient, maintainable AI interaction ecosystems. Enterprises gain:

- Higher ROI through improved AI output quality and operational efficiency

- Faster onboarding of AI assistants with role-specific and domain-aware prompt assemblies

- Greater user trust due to transparency and verification mechanisms embedded in context flows
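The compliance requirement above mentions audit trails within prompt architectures. A minimal sketch of what one audit entry might contain follows, assuming a record that stores a SHA-256 hash of the assembled prompt parts alongside the policy identifiers that were applied. The field names and policy ids are hypothetical, and the timestamp is passed in by the caller.

```python
import hashlib
import json

def audit_record(prompt_parts: dict, policy_ids: list[str], timestamp: str) -> dict:
    """Describe one prompt assembly without storing the prompt text itself."""
    # Sorting keys gives the same hash regardless of the order the parts were added in.
    canonical = json.dumps(prompt_parts, sort_keys=True, ensure_ascii=False)
    return {
        "timestamp": timestamp,
        "policies": sorted(policy_ids),
        "prompt_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
    }

record = audit_record(
    {"system": "You are a customer support agent.", "context": "Order #12345"},
    policy_ids=["delay-discount"],
    timestamp="2026-01-01T00:00:00Z",
)
```

Storing a hash rather than the raw prompt lets an auditor confirm which prompt was used without copying customer data into the log.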

See "Enterprise AI Best Practices" for strategic approaches to integrating context design methodologies within large-scale business environments.


Conclusion

The transition from traditional prompt engineering to context design marks a fundamental transformation in how humans and AI collaborate. By orchestrating dynamic, multi-source data ecosystems and adaptive instructions, context design unlocks the true potential of AI models like GPT-5.4 and Claude Opus 4.6 for complex, real-world applications. For AI practitioners, mastering these principles and techniques is no longer optional but essential for building next-generation AI solutions that are accurate, efficient, and enterprise-ready.

Author: Markos Symeonides