The End of “Press 1 for Support”

“Your call is important to us.” Few phrases frustrate customers more. Long wait times, rigid IVR menus, and repetitive prompts have defined customer service for decades—and not in a good way. That experience is now being redefined.

Customer service automation has long promised faster support and lower costs. Until recently, however, most automated systems delivered the opposite: deep menu trees, limited speech recognition, and hardcoded logic that forced users into predefined paths.

This is changing with the rise of LLM-powered AI voice agents. These systems can handle open-ended, multi-turn conversations at scale, even with low-quality audio, and with significantly greater flexibility than traditional IVR systems.

AI voice agents are increasingly capable of handling real customer interactions, completing tasks that were previously limited to human representatives. This article outlines a production-oriented architecture for real-time AI voice agents, focusing on system design, reliability, and compliance considerations.

Traditional Call Centers No Longer Meet Modern Demand

Despite incremental improvements, traditional call centers continue to face structural challenges: long hold times, abandoned calls, high staffing costs, inconsistent service quality, and increasing customer expectations.

Organizations in regulated industries have begun piloting AI voice agents to address these issues. In one deployment within the insurance domain, voice agents were introduced to handle inbound Medicare-related inquiries, initially focused on after-hours traffic. The system achieved near-complete call coverage while increasing the proportion of callers progressing toward plan selection.

These results suggest that automation can reduce friction at critical decision points when implemented correctly.

In early-stage deployments, user feedback consistently highlighted a key difference from legacy IVR systems: interactions felt more natural, responsive, and informative.

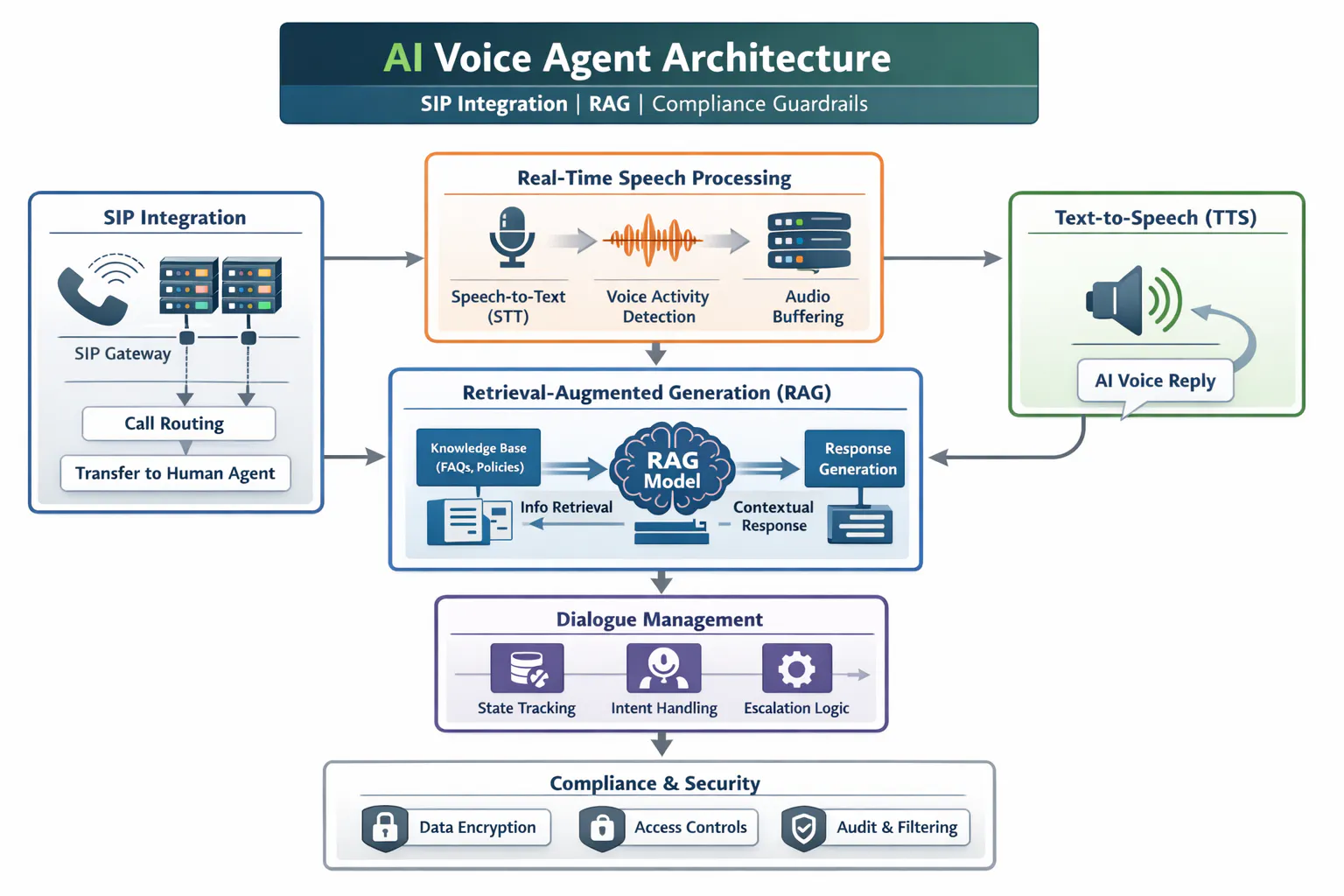

Architecture: How a Real-Time AI Voice Agent Actually Works

Creating reliable AI voice agents requires a modular, low-latency architecture that bridges telephony systems with modern AI.

- SIP Integration

The first challenge is connectivity. The agent must integrate directly into existing telephony infrastructure via Session Initiation Protocol (SIP) gateways or SIP-enabled cloud phone systems.

At this layer, the system:

- Accepts incoming calls from carriers or enterprise PBXs

- Streams voice packets into the AI processing pipeline

- Maintains call state and manages transfers

- Enables escalation to human agents when needed

Without this integration, the agent remains a backend compute service rather than an operational call center participant.

```python
# Example: FastAPI endpoint receiving an audio stream from a SIP gateway

from fastapi import FastAPI, WebSocket, WebSocketDisconnect

app = FastAPI()

@app.websocket("/audio-stream")
async def audio_stream(ws: WebSocket):
    await ws.accept()
    print("Call connected")

    try:
        while True:
            audio_chunk = await ws.receive_bytes()
            # Forward audio to the processing pipeline
            process_audio(audio_chunk)
    except WebSocketDisconnect as e:
        # Raised when the gateway closes the socket, i.e. the call ends
        print("Call ended:", e.code)

def process_audio(chunk: bytes):
    # Send to the STT pipeline (next section)
    pass
```

- Real-Time Speech Processing

Once audio arrives, it must be processed in real time across the full range of call quality, from clean lines to calls with background noise or quiet speakers:

- Streaming Speech-to-Text: Converts incoming speech to text with minimal delay. Technologies like Whisper or custom speech models can yield sub-second results.

- Voice Activity Detection: Identifies when a user has finished speaking to avoid interruptions.

- Buffering and Codec Handling: Ensures consistent quality despite network variability.

In the systems I have helped integrate, optimizing this layer has been one of the most critical engineering tasks.
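Telephony audio is commonly carried as 8 kHz G.711 μ-law rather than linear PCM, so codec handling often begins with a decode step. The following is a minimal sketch, assuming the SIP gateway forwards raw μ-law bytes (the article does not state which codec was used); `ulaw_byte_to_sample` and `ulaw_to_pcm16` are hypothetical helper names.

```python
import struct

BIAS = 0x84  # G.711 mu-law bias (132)

def ulaw_byte_to_sample(u: int) -> int:
    """Decode one G.711 mu-law byte to a signed 16-bit linear sample."""
    u = ~u & 0xFF                      # mu-law bytes are stored bit-inverted
    sign = u & 0x80
    exponent = (u >> 4) & 0x07
    mantissa = u & 0x0F
    magnitude = (((mantissa << 3) + BIAS) << exponent) - BIAS
    return -magnitude if sign else magnitude

# Precompute all 256 values once; decoding is then a table lookup.
_ULAW_TABLE = [ulaw_byte_to_sample(b) for b in range(256)]

def ulaw_to_pcm16(payload: bytes) -> bytes:
    """Convert mu-law bytes to little-endian 16-bit PCM bytes."""
    samples = [_ULAW_TABLE[b] for b in payload]
    return struct.pack(f"<{len(samples)}h", *samples)
```

The decoded audio is still sampled at 8 kHz. Resampling to the rate the speech model expects is a separate step that is not shown here.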

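```python
# Chunked transcription with Whisper (a stand-in for true streaming STT)
import queue
import threading

import numpy as np
import whisper

SAMPLE_RATE = 16000                # Whisper expects 16 kHz mono audio
CHUNK_BYTES = SAMPLE_RATE * 2 * 2  # ~2 sec of 16-bit PCM

audio_queue = queue.Queue()
model = whisper.load_model("base")

def audio_producer(chunk: bytes):
    audio_queue.put(chunk)

def transcribe_worker():
    buffer = b""
    while True:
        chunk = audio_queue.get()
        buffer += chunk

        # Simple chunk-based processing (replace with streaming STT in prod)
        if len(buffer) >= CHUNK_BYTES:
            # Whisper takes float32 samples in [-1, 1], not raw bytes
            usable = len(buffer) - (len(buffer) % 2)
            pcm = np.frombuffer(buffer[:usable], dtype=np.int16)
            audio = pcm.astype(np.float32) / 32768.0
            result = model.transcribe(audio)
            print("User said:", result["text"])
            buffer = buffer[usable:]

threading.Thread(target=transcribe_worker, daemon=True).start()
```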
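Voice activity detection can be as simple as an energy threshold combined with a silence timeout. The sketch below illustrates that idea only; it is not the detector used in the deployment described in this article. The class name `EnergyEndpointer`, the threshold, and the frame counts are illustrative assumptions, and production systems typically rely on a trained VAD model instead.

```python
import array
import math

class EnergyEndpointer:
    """Flags end of utterance after enough consecutive quiet frames."""

    def __init__(self, threshold: float = 500.0, silence_frames: int = 25):
        self.threshold = threshold            # RMS level treated as speech (assumed)
        self.silence_frames = silence_frames  # e.g. 25 x 20 ms = 500 ms of quiet
        self.heard_speech = False
        self.quiet_run = 0

    @staticmethod
    def rms(frame: bytes) -> float:
        """Root-mean-square level of a 16-bit PCM frame (native byte order)."""
        samples = array.array("h", frame)
        if not samples:
            return 0.0
        return math.sqrt(sum(s * s for s in samples) / len(samples))

    def feed(self, frame: bytes) -> bool:
        """Process one frame; return True once the speaker has finished."""
        if self.rms(frame) >= self.threshold:
            self.heard_speech = True
            self.quiet_run = 0
            return False
        if self.heard_speech:
            self.quiet_run += 1
            if self.quiet_run >= self.silence_frames:
                # Reset so the next utterance starts clean.
                self.heard_speech = False
                self.quiet_run = 0
                return True
        return False
```

Requiring speech before counting silence keeps the agent from treating the quiet at the start of a call as a finished turn.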

- Retrieval-Augmented Generation (RAG) Approach

Powerful LLMs alone are not enough, because they can hallucinate or invent facts. A retrieval-augmented generation (RAG) approach addresses this by adding domain-specific knowledge sources. For example, a call-screening agent needs to know what information to gather, whereas a customer service agent needs context and facts specific to the business. Relevant results from the knowledge base are fed into the LLM's prompt context, so that answers are grounded in factual data.

This approach is effectively a requirement in highly regulated industries such as health insurance.

```python
import faiss
import numpy as np
from sentence_transformers import SentenceTransformer

# Sample knowledge base
docs = [
    "Medicare plans include Part A, B, C, and D.",
    "Part D covers prescription drugs.",
    "Eligibility begins at age 65.",
]

model = SentenceTransformer("all-MiniLM-L6-v2")

# Create vector index (FAISS expects float32 arrays)
embeddings = np.asarray(model.encode(docs), dtype="float32")
index = faiss.IndexFlatL2(embeddings.shape[1])
index.add(embeddings)

def retrieve(query: str, k=2):
    # Embed the query and return the k nearest documents
    q_emb = np.asarray(model.encode([query]), dtype="float32")
    distances, indices = index.search(q_emb, k)
    return [docs[i] for i in indices[0]]

# Example usage
context = retrieve("What does Part D cover?")
print(context)
```
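Once passages are retrieved, they still have to be placed into the model's prompt. A minimal sketch of that step follows. The function name `build_grounded_prompt`, the prompt wording, and the handoff instruction are illustrative assumptions, not the prompt used in any particular deployment.

```python
def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Assemble an LLM prompt that restricts answers to retrieved passages."""
    if not passages:
        # Nothing retrieved: make that explicit so the model declines to guess.
        context = "(no relevant passages found)"
    else:
        # Number the passages so an answer can be traced back to a source.
        context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the caller using ONLY the context below. "
        "If the context does not contain the answer, say you will "
        "connect them to a human agent.\n\n"
        f"Context:\n{context}\n\n"
        f"Caller question: {question}\n"
        "Answer:"
    )

prompt = build_grounded_prompt(
    "What does Part D cover?",
    ["Part D covers prescription drugs."],
)
print(prompt)
```

Keeping prompt assembly in a single function makes the grounding instruction easy to review and version alongside the knowledge base.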

- Dialogue Management and State Tracking

Conversations are multi-turn and contextual, so a stateful dialogue manager tracks user intent and history. It stores interim data and, importantly, detects when a task is complete and whether the conversation can move to the next step or needs escalation. The agent must handle multiple steps in sequence, such as comparing plans, explaining benefits, and going back to an earlier step.

When designing these systems, robust state handling prevents disjointed exchanges, which were a common failure point in early voice agent prototypes.

```python
# Simple state machine for multi-turn flows
class CallSession:
    def __init__(self):
        self.state = "START"
        self.data = {}

    def handle_input(self, user_input):
        if self.state == "START":
            self.state = "COLLECT_INTENT"
            return "How can I assist you today?"

        elif self.state == "COLLECT_INTENT":
            self.data["intent"] = user_input
            self.state = "PROCESSING"
            return "Got it. Let me check that for you."

        elif self.state == "PROCESSING":
            self.state = "COMPLETE"
            return "Here's what I found…"

        # Any input after completion falls through to a human handoff
        return "Let me connect you to an agent."

# Example
session = CallSession()
print(session.handle_input("Hi"))
print(session.handle_input("I need a Medicare plan"))
```
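The escalation decision can be kept separate from the conversational flow itself. The sketch below assumes three rules: the caller asks for a person, a sensitive term appears, or the agent misunderstands several turns in a row. The `EscalationPolicy` name, the phrase lists, and the thresholds are hypothetical and would need review for any real deployment.

```python
from dataclasses import dataclass

# Illustrative phrase lists only; real lists would come from compliance review.
HUMAN_REQUEST_PHRASES = ("representative", "real person", "speak to someone")
SENSITIVE_TERMS = ("complaint", "appeal", "fraud")

@dataclass
class EscalationPolicy:
    max_failed_turns: int = 2     # consecutive misunderstood turns tolerated
    min_confidence: float = 0.6   # assumed intent-confidence cutoff
    failed_turns: int = 0

    def should_escalate(self, user_text: str, confidence: float) -> bool:
        """Return True when the call should be handed to a human agent."""
        text = user_text.lower()
        if any(p in text for p in HUMAN_REQUEST_PHRASES):
            return True
        if any(t in text for t in SENSITIVE_TERMS):
            return True
        # Track consecutive low-confidence turns; a good turn resets the count.
        if confidence < self.min_confidence:
            self.failed_turns += 1
        else:
            self.failed_turns = 0
        return self.failed_turns >= self.max_failed_turns
```

A dialogue manager could call `should_escalate` on every turn before choosing its next state, which keeps the handoff rules in one auditable place.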

- Content, Output Validation, and Filtering

The knowledge base and sources for RAG must be controlled, versioned, and monitored regularly. Open internet data and unvalidated LLM outputs are unacceptable; excluding them limits hallucination risk and supports regulatory alignment.

Generated responses need to be validated during QA and testing, with safe fallbacks, such as escalation to a human, added wherever a response cannot be trusted. In line with compliance requirements and best practices, customers should be informed that they are speaking with an AI agent, which builds trust and transparency. Collecting feedback at the end of the call paves the way for system improvements.
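One way to apply these checks at runtime is a gate between the model and the text-to-speech step. The sketch below is an illustration under stated assumptions: the blocked phrases, the word-overlap grounding heuristic, and the names `validate_response`, `first_turn`, `AI_DISCLOSURE`, and `FALLBACK` are all hypothetical. A real deployment would use rules reviewed by its compliance team.

```python
import re

AI_DISCLOSURE = "You are speaking with an automated AI assistant."
FALLBACK = "I'm not able to answer that. Let me connect you to an agent."
BLOCKED_PHRASES = ("guarantee", "best plan for you")  # illustrative only

def _words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def validate_response(answer: str, passages: list[str], min_overlap: float = 0.5) -> str:
    """Return the answer if it passes checks, otherwise a safe fallback."""
    lowered = answer.lower()
    if any(phrase in lowered for phrase in BLOCKED_PHRASES):
        return FALLBACK
    answer_words = _words(answer)
    if not answer_words:
        return FALLBACK
    source_words: set[str] = set()
    for passage in passages:
        source_words |= _words(passage)
    # Crude grounding check: share of answer words that appear in the sources.
    overlap = len(answer_words & source_words) / len(answer_words)
    return answer if overlap >= min_overlap else FALLBACK

def first_turn(greeting: str) -> str:
    """Prepend the AI disclosure to the opening utterance."""
    return f"{AI_DISCLOSURE} {greeting}"
```

Word overlap is a blunt proxy for grounding. It is used here only because it needs no extra dependencies.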

- Technical and Operational Challenges

Deploying real-time AI voice agents in production introduces several interconnected challenges. Systems must minimize latency across speech-to-text, retrieval, model inference, and text-to-speech to maintain natural conversational flow. They must also handle speech variability, such as accents, background noise, and domain-specific terminology, through continuous tuning and adaptation. For our use case of Medicare-age customers, this was a significant challenge to overcome.

The agents also need well-designed escalation logic to determine when complex or sensitive interactions should be handed off to human agents. They must integrate seamlessly with legacy CRM and telephony infrastructure, which often requires middleware to bridge modern AI components with established enterprise systems.
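Latency is easier to manage when each stage is timed against an explicit budget. The sketch below assumes a per-turn budget split across the four stages named above. The millisecond values and the `LatencyTracker` name are illustrative assumptions, not measurements from the deployment.

```python
import time
from contextlib import contextmanager

# Assumed per-stage budgets in milliseconds (illustrative, not measured).
BUDGET_MS = {"stt": 300, "retrieval": 100, "llm": 500, "tts": 200}

class LatencyTracker:
    """Records wall-clock time per pipeline stage for one conversational turn."""

    def __init__(self):
        self.elapsed_ms: dict[str, float] = {}

    @contextmanager
    def stage(self, name: str):
        """Time the enclosed block and store the result under `name`."""
        start = time.perf_counter()
        try:
            yield
        finally:
            self.elapsed_ms[name] = (time.perf_counter() - start) * 1000.0

    def over_budget(self) -> list[str]:
        """Names of stages that exceeded their budget, in recorded order."""
        return [
            name for name, ms in self.elapsed_ms.items()
            if ms > BUDGET_MS.get(name, float("inf"))
        ]

tracker = LatencyTracker()
with tracker.stage("retrieval"):
    time.sleep(0.01)  # stand-in for a real vector search
print(tracker.elapsed_ms, tracker.over_budget())
```

Logging the over-budget stages per turn shows which component to tune first.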

Overall Architecture

Conclusion

AI voice agents are not just an upgrade to existing systems. They represent a fundamental shift in how humans interact with technology.

The real innovation isn’t just the language model. It’s the infrastructure around it: seamless SIP integration, real-time speech processing, grounded intelligence through RAG, and rigorous compliance guardrails. Together, these components turn a powerful model into a reliable, production-ready system.

We are moving from a world of scripted interactions to one of dynamic, intelligent conversations. Businesses that invest in getting this architecture right won’t just reduce costs – they’ll redefine customer experience.

The future of customer service won’t ask you to “press 1.” It will simply understand you. AI voice agents are not just automation; they are a transformational interface between people and technology.